AWS has launched a new service that uses artificial intelligence to convert video into vertical formats optimized for mobile and social platforms in real time.

The company is touting AWS Elemental Inference as a tool that will help broadcasters and streamers reach audiences on social and mobile platforms like TikTok, Instagram Reels, and YouTube Shorts without manual post-production work or AI expertise.

Although today’s viewers consume content differently than they did even a few years ago, most live broadcasts are still produced in landscape format for traditional viewing. Converting these broadcasts into vertical formats for mobile platforms typically requires time-consuming manual editing that causes broadcasters to miss viral moments and lose audiences to mobile-first destinations, according to AWS.

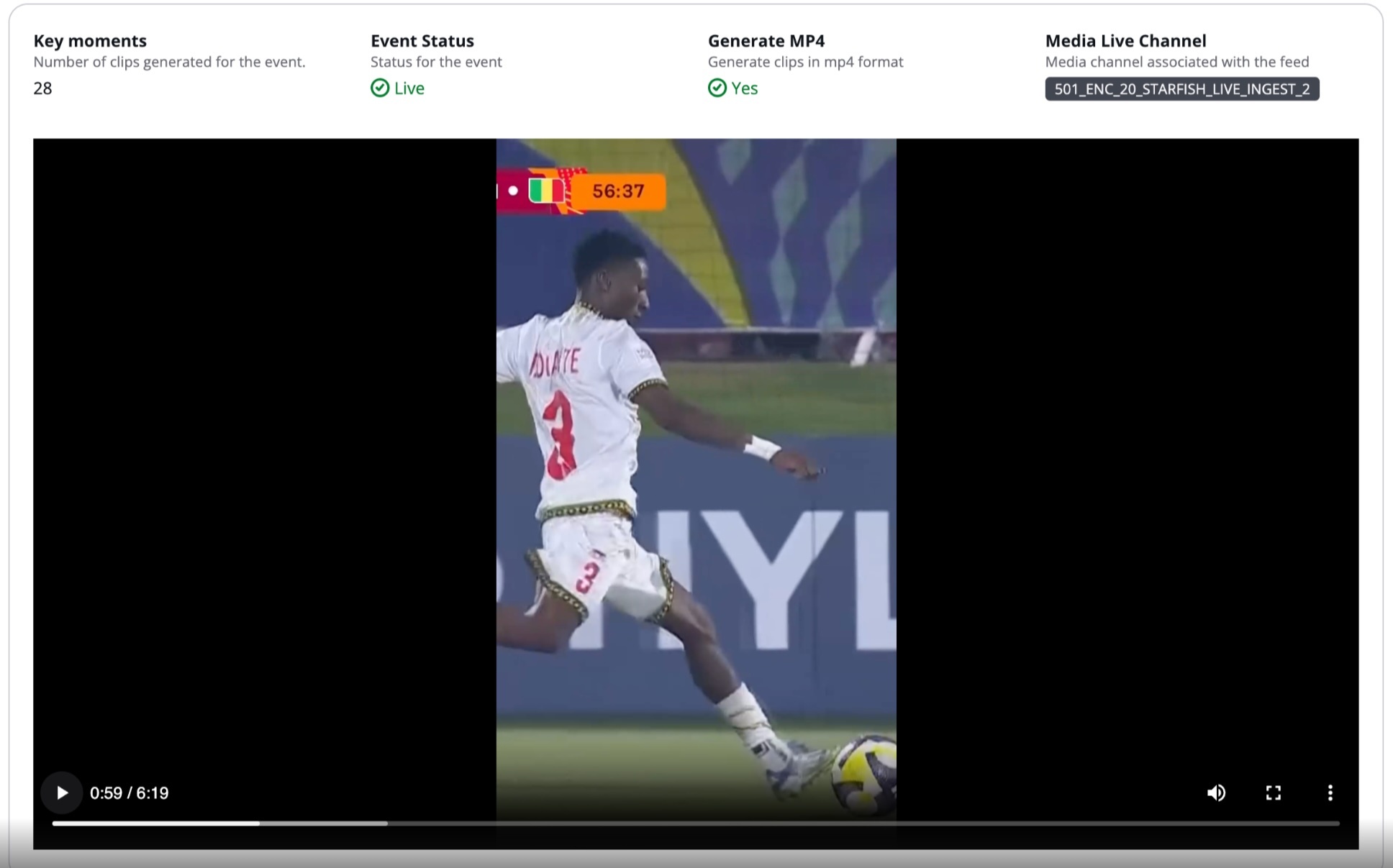

AWS Elemental Inference uses an agentic AI application that analyzes video in real time and automatically applies the right optimizations at the right moments. Detection for vertical video cropping and for clip generation happens independently, executing multistep transformations that require no human intervention to extract value.

AWS Elemental Inference analyzes video and automatically applies AI capabilities with no human-in-the-loop prompting required. It applies AI capabilities in parallel with live video, achieving 6-10 second latency compared to minutes for traditional post-processing approaches. This “process once, optimize everywhere” method runs multiple AI features simultaneously on the same video stream, eliminating the need to reprocess content for each capability.

The service integrates seamlessly with AWS Elemental MediaLive, so AI features can be enabled without modifying existing video architecture. AWS Elemental Inference uses fully managed foundation models (FMs) that are automatically updated and optimized, so no dedicated AI teams or specialized expertise are needed.

Regina Rossi, head of product for AWS Media Services, explained how development of the tool was driven in large part by live sports and the importance of using metadata in the conversion process.


“One of the things we’ve been hearing from our customers is that they need help when it comes to understanding the context of what's happening in their video—in particular, live sports which is their most valuable content,” Rossi said. "So we wanted to provide them with the appropriate metadata that gives them the ability to transform their video in a way that's meaningful.

“They want to meet their viewers where they are more and by that I mean, a lot more of their viewers were watching content on mobile phones as an example,” she added. “And for them to be able to be formatted for that mobile native viewer, they had to convert their horizontal video into vertical video. And so what we wanted to be able to do was actually use the right set of x, y coordinates, and be able to create things like a saliency map so that the focus of that video is going to be in that vertical frame.

“So when you're watching it on a phone, it actually moves with the action, so you're not missing out on what's happening in a scene.”

One of AWS’s earliest customers, Fox Sports Digital, reinforced how important vertical video is to the network, Rossi said, adding that “90% of their content is actually consumed vertically.”

Rossi emphasized that unlike earlier efforts in this space, AWS Elemental’s AI architecture is not just about “following the ball” during live play. The company has tested the technology on a variety of sports and found that a lot of them actually lend themselves well to a vertical format, according to Rossi.

“Ones that might be harder are things like tennis, where you have a ball that's traveling really fast between opposite sides of the court. But even then, one of the things that we do is we make adjustments to some of the foundation models that we use,” she said. “So as an example, we're not doing just object tracking. We're not just following a ball back and forth. What we're doing is actually creating a saliency map, so understanding where the action is happening in a scene, and so we're able to then follow the action, not just the ball.”
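To make the distinction Rossi describes concrete, here is a minimal sketch of how a saliency map could drive a vertical crop. It is not AWS's implementation: the function name `vertical_crop_window`, the representation of the saliency map as a 2D list of non-negative weights, and the choice to center the crop on the saliency-weighted centroid are all assumptions made for illustration.

```python
import math


def vertical_crop_window(saliency, aspect_w=9, aspect_h=16):
    """Pick a full-height vertical crop from a landscape frame.

    `saliency` is a hypothetical 2D list (rows x columns) of non-negative
    weights, one per pixel, where larger values mean "more action here".
    Returns (x_left, crop_width) in pixels.
    """
    height = len(saliency)
    width = len(saliency[0])

    # A full-height 9:16 window is height * 9 / 16 pixels wide.
    # floor(x + 0.5) rounds halves up, avoiding banker's rounding.
    crop_width = min(width, math.floor(height * aspect_w / aspect_h + 0.5))

    # Sum the saliency in each column, then take the weighted mean column.
    col_mass = [sum(row[x] for row in saliency) for x in range(width)]
    total = sum(col_mass)
    if total == 0:
        center = (width - 1) / 2  # nothing salient: fall back to frame center
    else:
        center = sum(x * m for x, m in enumerate(col_mass)) / total

    # Center the window on that column, then clamp it inside the frame.
    x_left = math.floor(center + 0.5 - crop_width / 2 + 0.5)
    x_left = max(0, min(width - crop_width, x_left))
    return x_left, crop_width


if __name__ == "__main__":
    # Toy 16x32 frame with all of the "action" in column 20.
    frame = [[1.0 if x == 20 else 0.0 for x in range(32)] for _ in range(16)]
    print(vertical_crop_window(frame))
```

For a 1920x1080 frame this yields a window about 608 pixels wide. Because the window follows the weighted center of the whole map rather than a single tracked object, a fast-moving ball with little surrounding saliency would pull it less than a cluster of players would. A production system would presumably also smooth the window position across frames, which this sketch leaves out.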

Key Features at Launch

- Vertical video creation – AI-powered cropping intelligently transforms landscape broadcasts into vertical formats (9:16 aspect ratio) optimized for social and mobile platforms. The service tracks subjects and keeps key action visible, maintaining broadcast quality while automatically reformatting content for mobile viewing.

- Clip generation with advanced metadata analysis – Automatically detects and extracts clips from live content highlights for real-time distribution. For live broadcasts, this means identifying game-winning plays, touchdowns, and emotional peaks with precise start and end points—reducing manual editing from hours to minutes.

Now Available

AWS Elemental Inference is available today in four AWS Regions: US East (N. Virginia), US West (Oregon), Europe (Ireland), and Asia Pacific (Mumbai). It can be activated through the AWS Elemental MediaLive console or integrated into workflows using the APIs.

Customers pay only for the features used and video processed, with no upfront costs or commitments, meaning that customers can scale during peak events and optimize costs during quieter periods. More features and capabilities will be introduced throughout this year, including tighter integration with core Elemental services and features to help customers monetize their video content better.

Big Blue Marble has been named a launch partner, with AWS Elemental Inference integrated into Big Blue Marble’s cloud-native Cloud Video Kit platform.

The company will demo AWS Elemental Inference at its booth (W1701) in the West Hall during the 2026 NAB Show, April 18-22 in Las Vegas.

To learn more about AWS Elemental Inference, visit the AWS Elemental Inference product page.

Tom has covered the broadcast technology market for the past 25 years, including three years handling member communications for the National Association of Broadcasters followed by a year as editor of Video Technology News and DTV Business executive newsletters for Phillips Publishing. In 1999 he launched digitalbroadcasting.com for internet B2B portal Verticalnet. He is also a charter member of the CTA's Academy of Digital TV Pioneers. Since 2001, he has been editor-in-chief of TV Tech (www.tvtech.com), the leading source of news and information on broadcast and related media technology and is a frequent contributor and moderator to the brand’s Tech Leadership events.