Better media search through phonetics

Despite advances in the media asset management systems on the market today, quickly searching for and retrieving specific pieces of content stored in large archives with hundreds of hours of material continues to be challenging at best. The success of a keyword search for video clips depends heavily on how much metadata was attached to the asset when it was logged into the system, and on whether metadata was assigned at all.

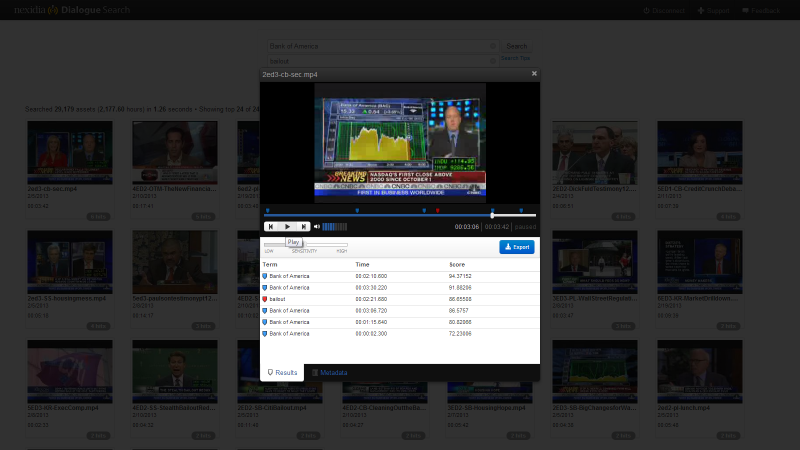

A company called Nexidia, based in Atlanta, has developed specialized software called “Dialogue Search” that finds audio or video clips not by keywords but by the phonetic sounds in the clip. Don’t confuse this with the various speech-to-text applications that broadcast media organizations, and even Microsoft and Google, have used with limited success. Most agree that this type of technology, if you can get it to work to your requirements, is about 60 percent accurate most of the time.

That’s not good enough, said Drew Lanham, senior vice president and general manager of Nexidia’s Media & Entertainment division. Since 2008, the company has also served the military and the retail call center industry.

“What we’re trying to replace is that high initial cost associated with database searches with a solution that does not rely on metadata, but the spoken word,” he said. “The software searches the database for phonetic key phrases, instead of key words. That makes it faster and much more accurate than what is currently available today.”

In only seconds, Nexidia Dialogue Search can comb through hundreds of thousands of hours of audio and video to find and preview any spoken word or phrase. This dramatically reduces logging and transcription costs, and uncovers valuable assets that traditional metadata could never find. Users can further narrow or broaden their results by using standard search operators, or filter dialogue-based search with standard metadata.

Lanham said Nexidia’s Dialogue Search software searches a database at 50 times real time per CPU core, meaning you can peruse an archive with 100,000 hours of content in 5 seconds or less. The company has a demo where they grabbed a million assets and found specific clips in a matter of seconds.
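To get a feel for the scale of these figures, the arithmetic can be worked through directly. The sketch below uses only the numbers quoted above; the variable names are illustrative. The article does not say which processing stage the 50-times-real-time figure refers to, so the comparison at the end is a rough check rather than a description of how the product works.

```python
# Figures quoted in the article.
archive_hours = 100_000     # size of the archive
search_seconds = 5          # quoted upper bound on search time
per_core_speedup = 50       # "50 times real time per CPU core"

archive_seconds = archive_hours * 3600

# Overall speed-up relative to playing the whole archive in real time.
implied_speedup = archive_seconds / search_seconds

# Time a single core would need to pass over the archive at 50x real time.
single_core_hours = archive_hours / per_core_speedup

print(f"implied speed-up: {implied_speedup:,.0f}x real time")
print(f"one core at {per_core_speedup}x: {single_core_hours:,.0f} hours")
```

A 5-second search over 100,000 hours works out to 72,000,000 times real time, while one core at 50 times real time would need 2,000 hours for a single pass. One plausible reading (an assumption, not something the article states) is that the per-core figure describes a separate pass over the audio, such as building the index, and the 5-second figure describes querying it afterward.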

Search results can also be filtered by how confident the match is. For example, you can ask only for matches scored at 70 percent or higher, meaning there’s a strong likelihood that the word or phrase you are looking for is in that specific clip. When working with extremely large databases, like the one at Turner Broadcasting System in Atlanta, this makes a huge difference.
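Filtering by score is simple to picture in code. The following is a minimal sketch, not Nexidia’s API: the `Hit` record, its field names, and the `filter_hits` helper are all hypothetical, and scores are assumed to run from 0 to 100.

```python
from dataclasses import dataclass


@dataclass
class Hit:
    """One candidate match (hypothetical record layout)."""
    clip_id: str
    offset_seconds: float  # where in the clip the phrase was heard
    confidence: float      # 0-100, higher means a more likely match


def filter_hits(hits, min_confidence=70.0):
    """Keep hits at or above the threshold, best matches first."""
    kept = [h for h in hits if h.confidence >= min_confidence]
    return sorted(kept, key=lambda h: h.confidence, reverse=True)


hits = [
    Hit("clip_a", 12.5, 91.0),
    Hit("clip_b", 340.0, 64.0),
    Hit("clip_c", 7.2, 70.0),
]

for hit in filter_hits(hits):
    print(hit.clip_id, hit.offset_seconds, hit.confidence)
```

Raising the threshold trades recall for precision: fewer clips to review, at the risk of dropping a genuine but poorly recorded mention.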

Customers license the Dialogue Search software per seat and then load it onto a standard Microsoft Windows Server 2008 machine. Nexidia also recommends installing a small SSD to allow fast database searches. Users can preview results in a video player window without having to scroll through numerous clips to find a specific sound bite.

Facilities with a media asset management (MAM) system can also make use of Dialogue Search as an additive tool, said KJ Kandell, Senior Director, Media & Entertainment Product Management, at Nexidia. Dialogue Search software can automatically access any files stored on any third-party MAM system. It also allows the export of search results as time-coded markers to MAM systems and video editing applications. Thus far the company has written APIs for Square Box’s CatDV, Apple Final Cut Pro 7 and Adobe Premiere Pro 5.5 (and newer). Avid Interplay and Dalet’s newsroom system are next on the company’s list of media management systems to support, with more to come.
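To illustrate what a time-coded marker involves, here is a minimal sketch that converts a hit’s offset in seconds into an `HH:MM:SS:FF` timecode. It assumes a 25 fps, non-drop-frame timeline; the function names and the marker layout are hypothetical and do not reflect the import format of any particular MAM or editing application.

```python
def seconds_to_timecode(seconds: float, fps: int = 25) -> str:
    """Convert an offset in seconds to non-drop-frame HH:MM:SS:FF."""
    total_frames = round(seconds * fps)
    frames = total_frames % fps
    total_seconds = total_frames // fps
    hours = total_seconds // 3600
    minutes = (total_seconds % 3600) // 60
    secs = total_seconds % 60
    return f"{hours:02d}:{minutes:02d}:{secs:02d}:{frames:02d}"


def hits_to_markers(hits, fps: int = 25):
    """Turn (label, offset_seconds) pairs into simple marker records."""
    return [
        {"label": label, "timecode": seconds_to_timecode(offset, fps)}
        for label, offset in hits
    ]


markers = hits_to_markers([("banana", 3723.48), ("banana", 59.96)])
for marker in markers:
    print(marker["timecode"], marker["label"])
```

Drop-frame timecode, used with 29.97 fps material, needs extra frame-number adjustments that this sketch leaves out.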

“It does depend on what the customer has in terms of existing metadata,” Kandell said. “If they have extensive metadata information and word-for-word scripts that can be searched among thousands of digital files, it lessens the need for Dialogue Search. But we’re finding that most media organizations do not have the best solutions out there to find and repurpose material in their archives. They simply do not have the money or manpower to do it comprehensively. Dialogue Search eliminates those barriers and helps broadcasters repurpose those assets and generate new revenue.”

Kandell came to work for Nexidia in August of 2012, after 10 years managing the MAM archives at CNN and Turner.

“I saw first-hand the problems people have with media searches and the resources needed to do a good job,” he said. “At CNN they have dozens of people dedicated to logging in metadata, at a cost of millions of dollars per year. Not many broadcasters have those types of resources at hand. Dialogue Search is a much more cost-effective way to get the same, or better search results. We regularly run demos where the MAM finds certain files, and we find the same files, but we also find other files that the MAM didn’t pick up.”

With Dialogue Search, media organizations can make better use of their existing media libraries by easily, precisely and quickly finding unique assets without being overwhelmed by irrelevant results.

“Dialogue Search is really a different way to attack this problem, and on a scale and accuracy no one else has been able to come close to,” Kandell said. “We don’t try to figure out what the exact word was that was spoken and store that because we think that’s a slow and highly inaccurate way to do it. We create a phonetic index. It’s just the sounds we’re after. If you ask, ‘When did someone say banana?’ we say, ‘Well, banana sounds like this,’ so we pull up every reference to that sound and we give you a confidence score. Then you can decide that you don’t want to see anything below 60 percent, or whatever number your organization is most comfortable with using to find content quickly. You can decide, ‘Don’t even show me anything below 60 percent.’”
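The idea Kandell describes, matching sounds rather than transcribed words and attaching a confidence score, can be sketched with a toy example. This is not Nexidia’s algorithm, which the article does not detail. The pronunciation table, the hand-written phoneme streams, and the scoring rule (percentage of phonemes that line up) are all assumptions made for illustration; a real system would derive its phonetic index from the audio itself.

```python
# Hypothetical pronunciations, written in ARPAbet-style symbols.
PRONUNCIATIONS = {
    "banana": ["B", "AH", "N", "AE", "N", "AH"],
}

# Toy phonetic index: per clip, a stream of (phoneme, time in seconds).
# Hand-written here; a real index would be computed from the audio.
INDEX = {
    "clip_a": [("DH", 0.0), ("AH", 0.1), ("B", 0.3), ("AH", 0.4),
               ("N", 0.5), ("AE", 0.6), ("N", 0.7), ("AH", 0.8)],
    "clip_b": [("B", 1.0), ("AH", 1.1), ("N", 1.2), ("AA", 1.3),
               ("N", 1.4), ("AH", 1.5), ("Z", 1.6)],
}


def search(index, word, min_confidence=60.0):
    """Slide the query's phonemes over each stream and score the overlap."""
    query = PRONUNCIATIONS[word]
    hits = []
    for clip_id, stream in index.items():
        for start in range(len(stream) - len(query) + 1):
            window = stream[start:start + len(query)]
            matched = sum(q == p for q, (p, _time) in zip(query, window))
            confidence = 100.0 * matched / len(query)
            if confidence >= min_confidence:
                hits.append((clip_id, window[0][1], confidence))
    # Best matches first.
    return sorted(hits, key=lambda hit: hit[2], reverse=True)


for clip_id, start_time, confidence in search(INDEX, "banana"):
    print(f"{clip_id} at {start_time:.1f}s, confidence {confidence:.1f}")
```

Here `clip_a` contains the exact sound sequence, while `clip_b` differs by one vowel and still clears the 60 percent threshold with a lower score, which is the kind of near match a transcript-based keyword search would miss.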