Metadata interchange

Metadata has been a popular topic for several years, but in many cases, the discussions have fallen short. Frequently, the topic of preserving metadata during file transfer is discussed, but this use case does not encompass a facility-wide view, nor does it address critical differences in types of metadata and how this metadata can be used outside of simple file transfer.

Metadata interchange must go beyond simple exchange of information about a piece of video or audio in a file. It must become a part of a higher level system workflow.

In 1998, an EBU/SMPTE Task Force directly addressed the issue of metadata. While SMPTE and other organizations have done a lot of work regarding metadata, there is still much to be done.

Essential metadata

Let's begin with some important concepts. Perhaps you want to send a 30-second spot from one place to another as a file. In this scenario, there are some things that the receiving equipment must know to play the spot correctly. I am not talking about the name of the spot. You could play the spot without any difficulty even if you did not know its name. I am talking about technical information about the content, such as the frame rate or compression parameters. While you might be able to guess at these parameters, it would be easier to play the spot if this information were included when you sent the file.

Metadata critical for proper content reproduction at the receiving end is known as essential metadata. It is important to keep this metadata tightly bound to the content. To achieve this, essential metadata is frequently included in the data area of the video or audio format (this area is known as the ancillary data space in serial digital video), or it is sent as part of the data stream in the case of compressed packetized content (as with the program clock reference in an MPEG transport stream, for example). Some system information may also be essential, such as time code or unique material identifiers (UMIDs).
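To make the distinction concrete, here is a minimal sketch of how a receiver might model essential metadata separately from descriptive information such as the spot's name. The class names, field names and sample values are all hypothetical, chosen only for illustration; they are not taken from any SMPTE standard or file format.

```python
from dataclasses import dataclass
from fractions import Fraction


@dataclass(frozen=True)
class EssentialMetadata:
    """Technical facts the receiver needs before it can play the content."""
    frame_rate: Fraction   # frames per second, e.g. 30000/1001
    codec: str             # compression scheme (illustrative label)
    bit_rate_mbps: float   # one example of a compression parameter
    start_timecode: str    # system information, "HH:MM:SS:FF"
    umid: str              # unique material identifier (placeholder string)


@dataclass
class Spot:
    essential: EssentialMetadata  # must stay bound to the content
    title: str = ""               # descriptive only; playback does not need it


def can_play(spot: Spot) -> bool:
    """Playback depends on the essential fields, never on the title."""
    e = spot.essential
    return e.frame_rate > 0 and bool(e.codec) and e.bit_rate_mbps > 0


# A 30-second spot that arrives without a name is still playable.
spot = Spot(
    EssentialMetadata(
        frame_rate=Fraction(30000, 1001),
        codec="MPEG-2",
        bit_rate_mbps=50.0,
        start_timecode="01:00:00:00",
        umid="umid-placeholder",
    )
)
print(can_play(spot))
```

The point of the split is that the title can be missing or wrong without affecting playback, while a missing frame rate or codec cannot.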

Data essence

Essential metadata should not be confused with data essence. Data essence is a piece of content that appears in the form of data, such as closed captioning or interactive cues. Just like video and audio, it is part of the program. Data essence is not essential metadata because it is not information about the program, but rather a part of the program itself.

Compositional metadata

Another common type of metadata is compositional metadata. This metadata, which is frequently encountered in editing environments, describes how various pieces of video, audio and data essence are related in time, so as to create a finished program.

Compositional metadata is not as critical as essential metadata because it is not required to view a piece of video. It is, however, important when describing how various pieces of video and audio should be edited together to make the final version of a program.
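As a rough illustration, compositional metadata can be pictured as a list of entries that place pieces of essence on a program timeline. The sketch below assumes a simple frame-based model; the `Clip` fields and the source names are hypothetical and much simpler than what a real editing format stores.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Clip:
    source_id: str   # which piece of essence is used (hypothetical name)
    track: str       # "video", "audio" or "data"
    source_in: int   # first frame taken from the source
    length: int      # number of frames used
    record_in: int   # frame on the program timeline where the clip starts


def program_length(clips: List[Clip]) -> int:
    """Length of the finished program, in frames."""
    return max((c.record_in + c.length for c in clips), default=0)


def active_at(clips: List[Clip], frame: int) -> List[Clip]:
    """Clips that contribute to the program at a given timeline frame."""
    return [c for c in clips if c.record_in <= frame < c.record_in + c.length]


# Two video shots cut together over one audio mix and one caption file.
composition = [
    Clip("cam_a", "video", source_in=100, length=300, record_in=0),
    Clip("cam_b", "video", source_in=0, length=600, record_in=300),
    Clip("mix", "audio", source_in=0, length=900, record_in=0),
    Clip("captions", "data", source_in=0, length=900, record_in=0),
]
print(program_length(composition))
print([c.source_id for c in active_at(composition, 450)])
```

None of this is needed to view `cam_a` on its own, which is why compositional metadata is less critical than essential metadata; it matters only when the pieces are assembled.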

Standardized wrappers

The Task Force recognized that there was a need for a standardized wrapper to hold various pieces of content and metadata. It would be much simpler to handle a 30-second spot as a single file rather than as four separate files (a video file, an audio file, a closed-captioning file and a metadata file).

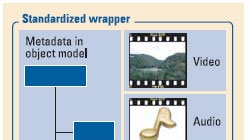

The wrapper is a container that can hold any of the many different video, audio and data types in common use today, along with essential metadata, compositional metadata and other metadata. Examples of standardized wrappers include the Material eXchange Format (MXF) and the Advanced Authoring Format (AAF).

These wrappers include the concept of a common object model. (See Figure 1.) A common object model means that there is a universal way of storing metadata within these wrapper files and that the place where the metadata is stored conveys information about the metadata. In other words, a time code associated with a particular video track is stored in a specific location in the data model so that someone looking at this time code knows that it is associated with a video track and not an audio track that might also be contained in the wrapper.
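The idea that location conveys meaning can be sketched in a few lines. The toy model below is an assumption made for illustration and is not the MXF or AAF data model; `Wrapper`, `Track` and their fields are hypothetical names. A time code is stored on the track it belongs to, so a reader never has to guess which essence it describes.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Track:
    kind: str            # "video", "audio" or "data"
    start_timecode: str  # stored on the track it describes


@dataclass
class Wrapper:
    tracks: List[Track] = field(default_factory=list)

    def timecodes_for(self, kind: str) -> List[str]:
        """Time codes found under tracks of the requested kind only."""
        return [t.start_timecode for t in self.tracks if t.kind == kind]


wrapper = Wrapper(
    tracks=[
        Track("video", "01:00:00:00"),
        Track("audio", "00:59:58:00"),
    ]
)
print(wrapper.timecodes_for("video"))
```

Because every application reads the same structure, the video time code cannot be mistaken for the audio one, even though both live in the same file.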

All of this work is well advanced, and the industry is now focusing on how to use these wrappers and metadata to develop solutions in particular application areas.

The next step: application workflows

Exchanging files that contain video, audio and metadata is certainly a critical application in broadcast facilities, but it is important to recognize that this only scratches the surface of where broadcasters want to go. The next critical area of work involves moving metadata and passing messages at the system level.

For example, let's say that a broadcaster is ingesting several commercials at the same time. Wouldn't it be beneficial if there were a common way to tell the automation system that each ingest was complete?

Here is another example: A station group has several servers loaded with MXF files. A program management system needs to know what programs are available on the servers. It sends a message to a Web service, which returns a list of the available programs.

The first example involves the exchange of event-related metadata or messaging across a network. The second example involves extracting metadata from the MXF files and sending it across a network to be stored in a database. These are two examples of processes associated with application workflows. The power of these processes extends well beyond simple file transfer, and I contend that this is the level of functionality that users want when converting from analog- to IT-based facilities. Fortunately, work is now under way to deliver this functionality.
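The first example, an ingest-complete notification, might look something like the sketch below. The message layout is invented for illustration and is not BXF or any other standardized schema; the function names, field names and the JSON encoding are all assumptions.

```python
import json
from datetime import datetime, timezone
from typing import Optional, Set


def ingest_complete_message(material_id: str, server: str,
                            when: Optional[datetime] = None) -> str:
    """Build an event message an ingest device could send (hypothetical schema)."""
    when = when or datetime.now(timezone.utc)
    return json.dumps(
        {
            "event": "ingest_complete",
            "material_id": material_id,
            "server": server,
            "timestamp": when.isoformat(),
        },
        sort_keys=True,
    )


def handle_message(raw: str, ready: Set[str]) -> None:
    """Automation side: mark material as ready when its ingest finishes."""
    msg = json.loads(raw)
    if msg.get("event") == "ingest_complete":
        ready.add(msg["material_id"])


# Several commercials finish ingesting; automation tracks each one.
ready_for_air: Set[str] = set()
for spot_id in ("SPOT-0001", "SPOT-0002"):
    handle_message(ingest_complete_message(spot_id, "server-a"), ready_for_air)
print(sorted(ready_for_air))
```

What matters is not the encoding but the agreement: if every ingest device emits the same message, the automation system needs only one handler.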

If broadcasters want to build application workflows, such as those based on automated commercial delivery or automated content repurposing, then they must establish metadata pipelines that are consistent throughout their facility. Metadata must be available at different steps throughout the workflow process. This metadata should be available through standardized means.

Imagine how difficult and expensive it would be to develop the connections described above between a program management system and server systems if each one of these components spoke a different language. One potential solution is to develop standardized software interfaces to provide the functionality needed to support a particular workflow.

Frameworks

There is good news. The software industry has developed frameworks that help engineers develop and deploy standardized interfaces. One of these frameworks is called service-oriented architecture (SOA).

Another important concept is Web services. These are services available on a network that perform specific tasks on behalf of other systems. For example, a Web service might automatically contact all MXF servers on a network and prepare a list of content available on that network.

In the example above, the program management system could contact the Web service and ask it what content was available without having to contact each server itself. Furthermore, it could make this query through standardized software calls. Other systems could make the same inquiry of the same Web service using the same software function calls. This is an extremely powerful concept.
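The shape of that arrangement can be sketched without any networking at all. In the illustration below, ordinary method calls stand in for the network requests, and `MxfServer`, `ContentDirectory` and `available_programs` are hypothetical names rather than part of any real product or standard.

```python
from typing import Dict, Iterable, List


class MxfServer:
    """Stands in for one server holding MXF files."""

    def __init__(self, name: str, programs: Iterable[str]) -> None:
        self.name = name
        self._programs = list(programs)

    def list_programs(self) -> List[str]:
        return list(self._programs)


class ContentDirectory:
    """Stands in for the Web service that queries every server for its callers."""

    def __init__(self, servers: Iterable[MxfServer]) -> None:
        self._servers = list(servers)

    def available_programs(self) -> Dict[str, List[str]]:
        """Map each program title to the servers that hold it."""
        catalog: Dict[str, List[str]] = {}
        for server in self._servers:
            for title in server.list_programs():
                catalog.setdefault(title, []).append(server.name)
        return catalog


directory = ContentDirectory(
    [
        MxfServer("server-a", ["News at 6", "Spot 0001"]),
        MxfServer("server-b", ["Spot 0001"]),
    ]
)
# The program management system makes one call instead of one per server.
print(directory.available_programs())
```

Any other system, such as traffic or archive, could call `available_programs` in exactly the same way, which is where the savings in integration effort come from.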

Conclusion

SMPTE's S22-10 group is standardizing the Broadcast Exchange Format (BXF), which embodies many of these concepts. (To learn more about BXF, read “Modern automation” on page 44.) The Media Dispatch Group is developing the Media Dispatch Protocol (MDP) to standardize system functions to allow devices to request delivery of content and monitor the progress of the transfer at a system level.

This is a great new area of study for the industry. While it may take some time for the benefits of this work to reach the broadcaster, when they do, they will have a profound effect on workflows and facilities.

Brad Gilmer is executive director of the Advanced Media Workflow Association, executive director of the Video Services Forum and president of Gilmer & Associates.

Send questions and comments to: brad.gilmer@penton.com