AI Is Becoming the Operating Layer for Media and Entertainment

How broadcasters can move from task-level wins to agentic, trust-centric operations

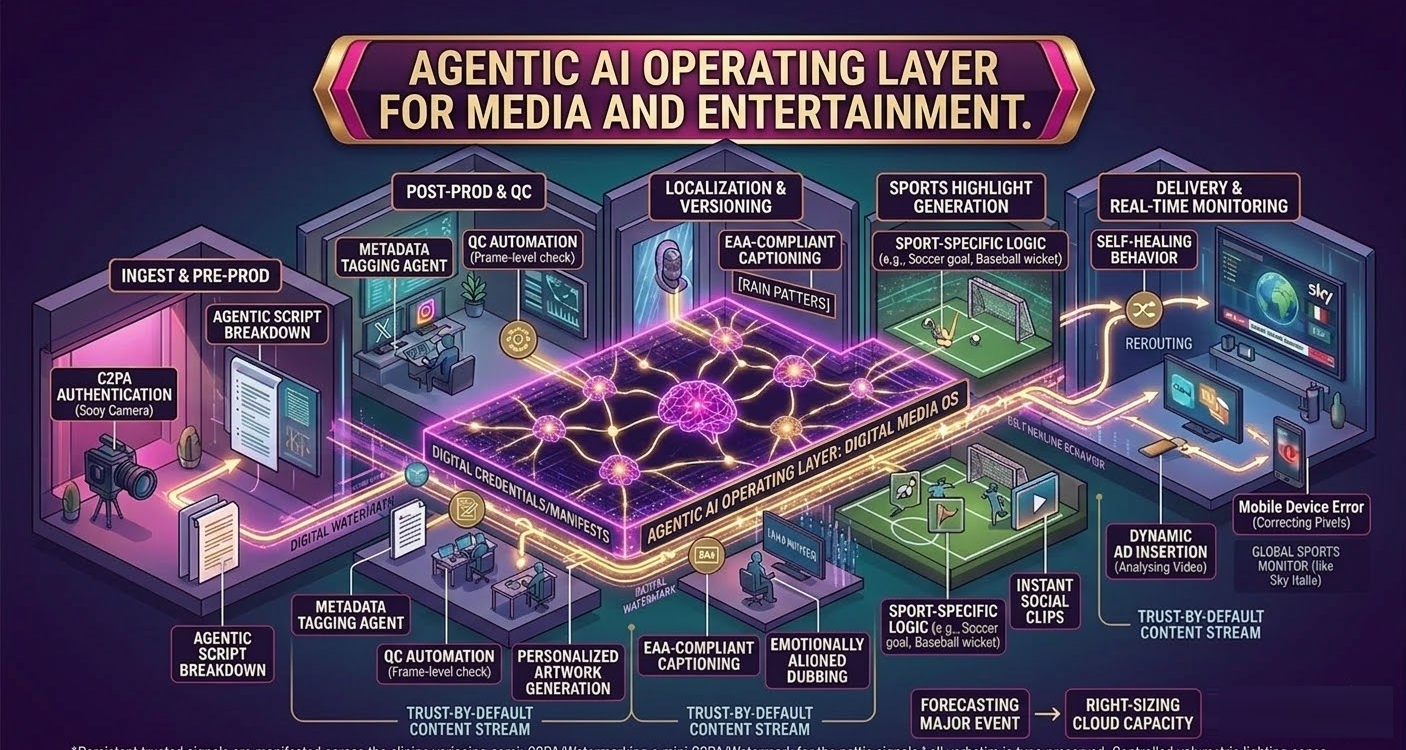

Artificial intelligence in media has moved well beyond a collection of tools. Capabilities such as metadata tagging, QC automation, transcript generation, and recommendation engines now shape decisions across the content chain. As these systems grow more connected, AI increasingly acts as the operating layer that routes content, applies policy, and manages routine tasks.

The industry is shifting from narrow automation toward agentic systems that can understand context, pursue goals, and execute multi-step processes within clear editorial and policy boundaries. Routine content can flow automatically while sensitive material is held for human review. The result is faster, more consistent operations — paired with new expectations for transparency and trust.
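
To make "routine content flows, sensitive content waits" concrete, here is a minimal sketch of a policy router. It assumes a hypothetical upstream classifier that attaches tags and a confidence score to each asset. The tag list, the 0.85 confidence floor, and all names are illustrative, not any vendor's API. Every decision returns a reason string, which is what makes the system's choices auditable.

```python
from dataclasses import dataclass, field
from enum import Enum


class Route(Enum):
    AUTO_PUBLISH = "auto_publish"
    HUMAN_REVIEW = "human_review"


# Hypothetical editorial policy: tags that always need an editor, plus a confidence floor
SENSITIVE_TAGS = {"graphic_violence", "minors", "legal_hold", "breaking_news"}
MIN_CONFIDENCE = 0.85


@dataclass
class Asset:
    asset_id: str
    tags: set[str] = field(default_factory=set)
    # Confidence reported by the (hypothetical) upstream classifier, 0.0 to 1.0
    classifier_confidence: float = 1.0


def route(asset: Asset) -> tuple[Route, str]:
    """Return the route plus a human-readable reason so every decision can be audited."""
    hits = asset.tags & SENSITIVE_TAGS
    if hits:
        return Route.HUMAN_REVIEW, f"sensitive tags: {sorted(hits)}"
    if asset.classifier_confidence < MIN_CONFIDENCE:
        # The system is unsure, so a human decides
        return Route.HUMAN_REVIEW, f"low confidence {asset.classifier_confidence:.2f}"
    return Route.AUTO_PUBLISH, "routine content within policy"
```

For example, `route(Asset("a3", set(), 0.6))` returns `(Route.HUMAN_REVIEW, "low confidence 0.60")`. Keeping the policy in plain data such as `SENSITIVE_TAGS` lets editorial teams refine the rules without changing the routing logic.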

How End-to-End AI Workflows Work Today — and Why They’re Now Essential

Broadcasters tend to begin one of two ways: solving individual pain points or linking teams and systems into continuous workflows. Both paths have reshaped operations.

Individual High‑ROI Tasks

For many organizations, the first gains come from targeted use cases. Smarter metadata tagging improves archive access, personalized recommendations and artwork help keep viewers engaged, churn prediction sharpens retention efforts, and load forecasting prepares systems for major events without guesswork. Automated compliance checks flag inappropriate content, while QC tools catch frame-level issues.

Crucially, AI now reaches upstream into pre-production, where agentic systems orchestrate automated script breakdowns, generate storyboards, and optimize complex production schedules before a single frame is shot.

Shift to Workflow Orchestration

As organizations connect individual AI tasks, the operating layer starts to take shape. Instead of siloed workflows, agentic systems act as the connective tissue between the newsroom, production, advertising, and operations. For example, AI now orchestrates contextual advertising by analyzing video frame by frame to drive hyper-targeted dynamic ad insertion (DAI). Content moves according to policy rather than manual handoffs, and the system improves as teams refine rules and review outputs.

These orchestration models can even show “self‑healing” behavior, with agents monitoring traffic patterns, detecting early signs of congestion, and adjusting routes automatically. Human teams retain oversight for judgment calls and editorial nuance, guided by clear governance on when to intervene.
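
The "self-healing" loop described above can be sketched as: smooth each delivery path's latency with an exponentially weighted moving average (EWMA), and fail over when the active path crosses a congestion threshold. This is a simplified illustration, not how any particular platform works. The smoothing factor, the 120 ms threshold, and the path names are assumptions. When no healthier path exists, the sketch deliberately does nothing, leaving the decision to human operators.

```python
from typing import Dict, List, Optional

ALPHA = 0.3            # EWMA smoothing factor (assumed value)
CONGESTION_MS = 120.0  # hypothetical latency threshold that counts as congestion


class SelfHealingRouter:
    """Tracks smoothed latency per delivery path and fails over when the active one degrades."""

    def __init__(self, paths: List[str], initial_ms: float = 50.0) -> None:
        self.latency: Dict[str, float] = {p: initial_ms for p in paths}
        self.active = paths[0]

    def observe(self, path: str, sample_ms: float) -> None:
        # EWMA: recent samples weigh more, while older history smooths out noise
        self.latency[path] = ALPHA * sample_ms + (1 - ALPHA) * self.latency[path]

    def maybe_reroute(self) -> Optional[str]:
        if self.latency[self.active] <= CONGESTION_MS:
            return None  # active path is healthy; nothing to do
        best = min(self.latency, key=self.latency.get)
        if best == self.active:
            return None  # no healthier path exists; leave it to human operators
        previous, self.active = self.active, best
        return f"rerouted {previous} -> {best}"
```

After a few slow samples on `"primary"`, `maybe_reroute()` switches traffic to `"backup"` and returns a log line describing the change, which gives human teams a record to review.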


Some broadcasters have already taken orchestration further. Sky Italia uses an AI-driven delivery platform that routes video data dynamically across its network, ensuring buffer-free 4K streams for millions of viewers. By anticipating demand spikes, the system reduces egress and storage costs while improving viewer experience.

As distribution expands across regions, platforms, and accessibility requirements, manual versioning and monitoring cannot scale. Three domains show how AI has become foundational:

- Real-time Monitoring and Operational Intelligence

Operations teams oversee thousands of feeds at once, and AI helps surface issues that would otherwise go unnoticed: mis-triggered graphics, muted audio, compliance violations, subtle sync drift. In one recent global sports broadcast, AI detected graphic rendering errors on mobile devices early, prompting an automatic switch to a backup encoder before viewers noticed anything. AI‑driven forecasting also helps teams scale resources for major events, reducing the need to over‑provision and improving resilience during peak demand.

- Localization, Accessibility, and Dubbing

Localization remains one of the most labor‑intensive parts of media operations. AI accelerates translation, subtitling, compliance edits, metadata generation, and platform-specific packaging while preserving sync and keeping output consistent across formats and languages. With accessibility expectations rising, AI systems can automatically identify non-speech audio cues such as "[rain patters]" or "[door creaks]" and support high‑volume production of Subtitles for the Deaf and Hard-of-Hearing (SDH).

Dubbing has also improved significantly. Newer models preserve tone, pacing, and emotional nuance rather than merely converting dialogue. Netflix has seen completion rates for global titles increase after adopting emotionally aligned dubbing, demonstrating how performance‑aware tools can improve viewer engagement. Humans still guide cultural context and oversee less‑common languages, but AI now handles much of the repetitive work that slows production.

- Sports Logic, Highlight Generation, and Resource Optimization

Sports broadcasting shows how quickly AI is evolving. Instead of generic highlight packages, AI now identifies sport‑specific moments (a goal, a three-pointer, a slapshot) and assembles clips for social distribution in near real time. This logic also powers generative personalization, as seen when NBCUniversal used AI orchestration to create millions of highly personalized daily Olympic recaps. These same systems forecast audience surges for major matches and adjust cloud and network resources accordingly, helping maintain stream quality while cutting infrastructure costs.
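
Forecast-driven scaling, mentioned for both live monitoring and major matches, comes down to two steps: estimate peak demand, then size capacity with a safety margin instead of over-provisioning by default. The sketch below uses a deliberately naive baseline (the mean peak of comparable past events, scaled by an expected growth factor) where a production system would use a trained model. The per-instance capacity, 20% headroom, and minimum pool size are assumptions.

```python
import math
from typing import List


def forecast_peak(past_peaks: List[int], growth: float = 1.0) -> int:
    """Naive baseline: mean peak audience of comparable past events, scaled by expected growth."""
    if not past_peaks:
        raise ValueError("need at least one comparable event")
    return round(sum(past_peaks) / len(past_peaks) * growth)


def instances_needed(forecast_viewers: int, viewers_per_instance: int,
                     headroom: float = 0.2, min_instances: int = 2) -> int:
    """Size a (hypothetical) delivery pool from the forecast plus a safety margin."""
    if viewers_per_instance <= 0:
        raise ValueError("viewers_per_instance must be positive")
    target = forecast_viewers * (1 + headroom)
    # Round up: a fractional instance still needs a whole machine
    return max(min_instances, math.ceil(target / viewers_per_instance))
```

With made-up inputs, `forecast_peak([1_800_000, 2_200_000], growth=1.1)` returns 2,200,000, and `instances_needed(2_200_000, 50_000)` returns 53 instances: enough for the forecast plus 20% headroom, rather than a fixed worst-case fleet.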

Securing AI-Native Operations: Risks and the Trust Stack

As AI becomes central to production, the risks grow too. Synthetic anchors, fabricated promos, tampered clips, and impersonations of public figures can erode credibility. Another challenge comes from contaminated or synthetic content entering production pipelines, where it becomes harder to detect and more costly to fix.

A stronger approach builds trust into each asset. A practical trust stack includes:

- Digital watermarking — durable, invisible identifiers that survive editing, compression, and screen capture.

- Provenance frameworks — cryptographically signed manifests capturing an asset’s origin and transformations. Broadcasters such as France Télévisions and ARD have begun daily use of C2PA protocols to safeguard VOD authenticity.

- Authentication — hardware-backed proof at capture that confirms material comes from a trusted source, as seen in Sony’s latest C2PA-enabled camera systems.

For this to work, trust signals must be added at ingest and persist through localization, editing, transcodes, and multi‑partner distribution. Challenges remain, including metadata stripped along the way, uneven adoption across partners, social platforms that discard provenance data on upload, and the burden of key management. Without these layers, however, AI-native operations carry significant brand and legal risk.
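
The core idea behind a signed provenance manifest is that each processing step (ingest, edit, transcode) is recorded with a hash of the resulting content and linked to the previous step's signature, so removing, reordering, or altering any step breaks verification. The sketch below illustrates only that chaining idea. It uses an HMAC with a shared secret as a stand-in for the certificate-based signatures that C2PA actually uses, and it does not produce C2PA-format manifests. The key and function names are hypothetical.

```python
import hashlib
import hmac
import json

SIGNING_KEY = b"demo-key-not-for-production"  # hypothetical shared secret


def _sign(payload: dict) -> str:
    # Canonical JSON so the same entry always serializes to the same bytes
    body = json.dumps(payload, sort_keys=True, separators=(",", ":")).encode()
    return hmac.new(SIGNING_KEY, body, hashlib.sha256).hexdigest()


def add_step(manifest: list, action: str, content: bytes) -> list:
    """Append a signed record of one transformation and return the new manifest."""
    entry = {
        "action": action,
        "content_sha256": hashlib.sha256(content).hexdigest(),
        "prev_sig": manifest[-1]["sig"] if manifest else "",  # links to the prior step
    }
    entry["sig"] = _sign(entry)
    return manifest + [entry]


def verify(manifest: list, content: bytes) -> bool:
    """Check every signature and link, then confirm the last step matches the content held."""
    prev_sig = ""
    for entry in manifest:
        unsigned = {k: v for k, v in entry.items() if k != "sig"}
        if unsigned["prev_sig"] != prev_sig:
            return False  # chain broken: a step was removed or reordered
        if not hmac.compare_digest(entry["sig"], _sign(unsigned)):
            return False  # entry was altered after signing
        prev_sig = entry["sig"]
    return bool(manifest) and manifest[-1]["content_sha256"] == hashlib.sha256(content).hexdigest()
```

Swapping in a different file, editing an earlier entry, or dropping the ingest record all cause `verify` to return `False`. This is why signals must be attached at ingest: a chain that starts mid-pipeline cannot vouch for where the material came from.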

A Pragmatic 12-Month Plan

Adopting AI effectively starts with a focus on viewer impact and measurable outcomes. Choose one or two high-value problems — manual bottlenecks, missed QC anomalies, dubbing throughput, churn — and link them to clear KPIs such as time-to-air reductions, versioning-throughput targets, or improvements in detection-to-resolution times.
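
A KPI such as detection-to-resolution time only guides decisions if it is measured the same way before and after a pilot. A minimal sketch, assuming incidents are exported as ISO-8601 (detected_at, resolved_at) pairs (a hypothetical format), follows. It compares medians rather than means so a single long outage does not dominate the result.

```python
from datetime import datetime
from statistics import median
from typing import List, Tuple


def resolution_minutes(incidents: List[Tuple[str, str]]) -> List[float]:
    """Detection-to-resolution time, in minutes, for each (detected_at, resolved_at) pair."""
    durations = []
    for detected_at, resolved_at in incidents:
        delta = datetime.fromisoformat(resolved_at) - datetime.fromisoformat(detected_at)
        durations.append(delta.total_seconds() / 60)
    return durations


def median_reduction_pct(baseline: List[float], pilot: List[float]) -> float:
    """Percentage drop in median resolution time; positive means the pilot is faster."""
    before, after = median(baseline), median(pilot)
    return (before - after) / before * 100
```

With made-up sample durations of 30, 45, and 60 minutes before a pilot and 10, 15, and 20 minutes after, the median falls from 45 to 15 minutes, a reduction of about 66.7%.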

A brief workflow audit will surface quick wins, especially where AI already functions as an informal orchestrator. From there, lightweight governance helps clarify risk ownership, document human-override paths for agentic systems, and anticipate rising expectations for explainability. Procurement should include questions about provenance and authentication support so integrity signals travel with each asset. Finally, investing in skills helps editorial and technical teams shape and evaluate outputs rather than carry out repetitive work.

What Success Looks Like in 3–5 Years

Recent moves, like Netflix’s acquisition of Interpositive AI, signal that tier-1 media companies are embedding AI directly into their core infrastructure as an operating layer. Broadcasters that thrive won’t bolt AI onto legacy workflows; they’ll operate inside agentic, policy-driven systems that learn from outcomes, route work fluidly between humans and machines, and embed trust by default.

As these systems mature, each output improves the next, KPIs guide decisions, and consistency scales globally. The earliest deployments already show these benefits, and they will increasingly define industry expectations in the years ahead.

Einat Kahana is VP, Product Solutions at Viaccess-Orca