SMPTE ST 2110-20: Pass the Pixels, Please

This is the second installment in a series of articles about the newly published SMPTE standard covering elementary media flows over managed IP networks. This month, the focus is on video transport, specifically the packet format that will replace the uncompressed SDI (Serial Digital Interface) signals currently in widespread use for many different kinds of video production.

UNCOMPRESSED ACTIVE VIDEO

The full title of SMPTE ST 2110-20 is “Professional Media Over Managed IP Networks: Uncompressed Active Video,” and that is a good indication of what is contained in the standard. Specifically, uncompressed video images are transported (just like SDI), but only the data that make up the “active” portion of each video frame. In other words, just the image samples (also called pixels) that comprise the pictures to be delivered to viewers are transported within the new IP video format.

This method significantly reduces the amount of data required to represent each frame of video. For example, a 59.94 Hz 720p video signal that uses a 1.483 Gbps SDI signal can be carried as an IP packet stream at less than 1.18 Gbps, for a savings of about 20 percent.
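The arithmetic behind that comparison can be sketched in a few lines of Python. This is a rough illustration, not a calculation taken from the standard: it assumes 4:2:2 10-bit sampling (20 bits per pixel on average), an exact frame rate of 60000/1001 Hz, and an SDI carrier of 1.485/1.001 Gbps, and it counts only the active samples. Packet headers add to the result, which is why the on-the-wire figure is somewhat higher than the number computed here.

```python
from fractions import Fraction

def active_video_bps(width, height, bits_per_pixel, frame_rate):
    """Bit rate of the active picture only, before any packet headers."""
    return width * height * bits_per_pixel * frame_rate

# Assumption: 720p at 60000/1001 Hz (nominally 59.94 Hz), 4:2:2 10-bit.
# Two pixels share four 10-bit samples, so the average is 20 bits per pixel.
frame_rate = Fraction(60000, 1001)
payload_bps = active_video_bps(1280, 720, 20, frame_rate)

# Assumption: HD-SDI carrier rate of 1.485 Gbps divided by 1.001.
sdi_bps = Fraction(1_485_000_000 * 1000, 1001)

print(f"active video: {float(payload_bps) / 1e9:.3f} Gbps")
print(f"SDI carrier:  {float(sdi_bps) / 1e9:.3f} Gbps")
print(f"active share: {float(payload_bps / sdi_bps):.1%}")
```

Under these assumptions the active samples come to roughly 1.10 Gbps, or about three quarters of the SDI rate, which leaves room for header overhead within the figure of less than 1.18 Gbps quoted above.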

However, this method has side effects. It eliminates the HANC (Horizontal Ancillary) space commonly used for embedded audio signals, as well as the VANC (Vertical Ancillary) space frequently used for transporting related information such as time code, format description, advertising triggers and captions. Audio and other non-video data types are transported as separate IP streams as defined in other parts of the ST 2110 series (which will be discussed in future installments of this article series).

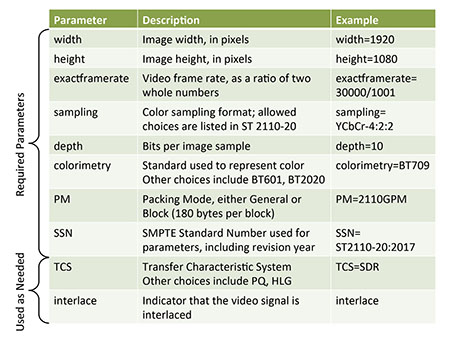

Fig. 1: Table of SDP Parameters Defined in SMPTE ST 2110-20

To avoid wasting bandwidth, data samples from adjacent pixels are packed directly next to each other, without any interspersed header information. The packing is organized through the use of pixel groups (also called “pgroups”) whose size and data layout vary depending on the specific video format being transported. Tables inside ST 2110-20 define a wide range of different video sampling formats that can be transported, including most (if not all) of the formats that are used in professional video production, such as RGB, Y’C’BC’R, ICTCP, XYZ, video key and other varieties. Since the pgroup size varies with the number of bits per sample and with the color subsampling format (4:2:2, 4:2:0, etc.), each allowed format is specified in the document.

The order of the various color samples in each pgroup is also specified, to enable interoperability. For example, the pgroup for 4:2:2 10-bit video (normal HD-SDI) would be 5 bytes (40 bits) long and contain four 10-bit samples representing two pixels in the order C’B Y0’ C’R Y1’. Up to 286 such pgroups can fit into one standard UDP datagram, carrying 572 pixels. Other sampling formats will have different numbers of pgroups and pixels per datagram.
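A minimal Python sketch of the 4:2:2 10-bit pgroup described above follows. The function names are hypothetical, and the bit layout is an assumption: samples are placed most-significant-bit first in network (big-endian) byte order, in the order C’B, Y0’, C’R, Y1’. The datagram budget is also an assumption, reached by taking a 1460-octet UDP size limit and subtracting an 8-octet UDP header, a 12-octet RTP header, a 2-octet extended sequence number and one 6-octet line header; the standard itself is the authority on those sizes.

```python
def pack_pgroup_422_10(cb, y0, cr, y1):
    """Pack four 10-bit samples (Cb, Y0, Cr, Y1) into one 5-byte pgroup."""
    for sample in (cb, y0, cr, y1):
        if not 0 <= sample < 1024:
            raise ValueError("samples must fit in 10 bits")
    # Assumption: first sample occupies the most significant bits.
    word = (cb << 30) | (y0 << 20) | (cr << 10) | y1
    return word.to_bytes(5, "big")

def unpack_pgroup_422_10(pgroup):
    """Recover (Cb, Y0, Cr, Y1) from a 5-byte pgroup."""
    if len(pgroup) != 5:
        raise ValueError("a 4:2:2 10-bit pgroup is exactly 5 bytes")
    word = int.from_bytes(pgroup, "big")
    return tuple((word >> shift) & 0x3FF for shift in (30, 20, 10, 0))

def pgroups_per_datagram(payload_budget, pgroup_size=5):
    """Whole pgroups that fit in the given number of payload octets."""
    return payload_budget // pgroup_size

# Assumed header sizes in octets (see the paragraph above).
UDP_SIZE_LIMIT = 1460
UDP_HEADER = 8
RTP_HEADER = 12
EXT_SEQUENCE = 2
LINE_HEADER = 6

budget = UDP_SIZE_LIMIT - UDP_HEADER - RTP_HEADER - EXT_SEQUENCE - LINE_HEADER
count = pgroups_per_datagram(budget)
print(count, "pgroups,", count * 2, "pixels")
```

With these assumed header sizes the budget is 1432 octets, which yields the 286 pgroups and 572 pixels cited above. Because pgroups are never split with header data in between, any leftover octets in the budget simply go unused.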


DEFINING IMAGES IN FEWER THAN A THOUSAND WORDS

Most of the remainder of the ST 2110-20 document is devoted to defining the exact format of the video signal that is being transported. Session Description Protocol (SDP), as specified in RFC 4566, provides a machine-readable layout for this information.
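To give a feel for what "machine-readable" means here, the Python sketch below parses a format-parameter (`a=fmtp:`) line of the kind an SDP file for an ST 2110-20 stream might contain. The example line is illustrative only: the parameter names and values shown are assumptions written from memory of typical ST 2110-20 SDP files, the payload type 96 is arbitrary, and `parse_fmtp` is a hypothetical helper rather than part of any library. Fig. 1 and the standard itself remain the authority on which parameters exist and which are required.

```python
def parse_fmtp(line):
    """Split an SDP 'a=fmtp:' attribute into (payload_type, params dict)."""
    prefix = "a=fmtp:"
    if not line.startswith(prefix):
        raise ValueError("not an fmtp attribute")
    payload_type, _, rest = line[len(prefix):].partition(" ")
    params = {}
    for item in rest.split(";"):
        item = item.strip()
        if not item:
            continue
        key, sep, value = item.partition("=")
        # A parameter with no '=' is treated as a flag and stored as True.
        params[key] = value if sep else True
    return int(payload_type), params

# Illustrative line only; names and values are assumptions, not quoted
# from the standard.
example = (
    "a=fmtp:96 sampling=YCbCr-4:2:2; width=1280; height=720; "
    "exactframerate=60000/1001; depth=10; colorimetry=BT709; "
    "PM=2110GPM; SSN=ST2110-20:2017"
)

payload_type, params = parse_fmtp(example)
for name, value in params.items():
    print(f"{name} = {value}")
```

A receiver built along these lines could compare the parsed width, height, depth and frame rate against what it supports before joining the stream, instead of inferring the format from the video data.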

THE RIGHT PICTURE

One benefit of the full complement of video format specifications defined in ST 2110-20 is the elimination of the ambiguities that have been prevalent in many industry documents. For example, it doesn’t take too much searching to find spec sheets for some devices listing “1080i59.94” and other devices listing “1080i/29.97,” sometimes from one manufacturer! With a complete set of SDP parameters required for every video signal, it will be easier to get the right picture.

Wes Simpson is President of Telecom Product Consulting, an independent consulting firm that focuses on video and telecommunications products. He has 30 years of experience in the design, development and marketing of products for telecommunication applications. He is a frequent speaker at industry events such as IBC, NAB and VidTrans, the author of the book Video Over IP and a frequent contributor to TV Tech. Wes is a founding member of the Video Services Forum.