OTT Video Demands New Test Techniques

Sometime during the past five years, streaming video on demand from the Internet became a respectable delivery method.

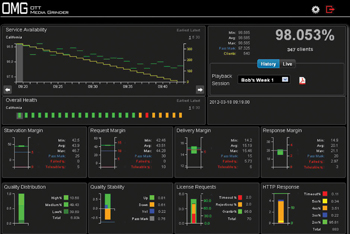

Pixelmetrix OMG dynamic dashboard gives an overview of OTT infrastructure performance.

Today, traditional broadcasters, networks and many upstarts deliver a breathtaking number of online playbacks every day, and that volume created a sudden need for methods of monitoring, testing and measuring the stability and reliability of this new delivery medium. There’s even a new name for video that’s delivered online on demand to viewers: over-the-top (OTT) video.

Broadcasters are great at creating high-quality content, but delivering that content online has been a puzzle, particularly when it comes to ensuring that viewers get an experience as good as the broadcast signal. Fortunately, several companies have responded with a variety of products that allow just about every facet of online video to be tested and measured.

“The traditional methods of video delivery over IP are fundamentally different from OTT,” said Danny Wilson, president and CEO of Pixelmetrix. “OTT video delivery uses regular HTTP servers and network infrastructure for delivering video as small files over reliable TCP connections, as opposed to streaming packets over unreliable UDP connections.”

Being a continuous stream separates video from most other Internet traffic.

“The traditional metrics used to measure HTTP traffic, such as the delivery of HTML-like files, are not enough because they lack visibility into the continuity aspect of video,” Wilson said. “This calls for a new approach to monitoring such delivery mechanisms.”
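To illustrate the continuity problem Wilson describes, here is a minimal Python sketch, not any vendor's method. It replays a list of per-segment download times against a very simple player model and reports the stalls a viewer would see. The function name `simulate_playback`, the `PlaybackReport` fields and the 4-second segment length are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class PlaybackReport:
    startup_delay: float  # seconds before the first frame plays
    stall_count: int      # rebuffering events after startup
    stall_time: float     # total seconds spent rebuffering


def simulate_playback(download_times, segment_duration=4.0):
    """Replay per-segment download times against a simple player model.

    Assumptions (illustrative only): segments are fetched one after
    another, playback starts when the first segment arrives, and the
    player stalls whenever its buffer runs empty.
    """
    if not download_times:
        return PlaybackReport(0.0, 0, 0.0)

    startup_delay = download_times[0]
    buffer_level = segment_duration  # seconds of video ready to play
    stall_count = 0
    stall_time = 0.0

    for fetch_time in download_times[1:]:
        if fetch_time > buffer_level:
            # The buffer drains before the next segment arrives: a stall.
            stall_count += 1
            stall_time += fetch_time - buffer_level
            buffer_level = 0.0
        else:
            buffer_level -= fetch_time
        buffer_level += segment_duration

    return PlaybackReport(startup_delay, stall_count, stall_time)
```

With download times of 1, 2, 9 and 1 seconds and 4-second segments, every HTTP request in this model completes successfully, yet the sketch reports one stall lasting 3 seconds. That is the kind of impairment a per-request metric would not show.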

Tyler Deneui, a sales engineer at Sencore, a signal processing and test and measurement provider in Sioux Falls, S.D., said that understanding the basics of OTT explains how it is different from other forms of streaming video.

“The concept for OTT is that the end user is able to view continuous video no matter what network bandwidth is available or how it changes,” Deneui said. “The OTT protocols overcome many of the negative characteristics that plagued previous generations of streaming video such as rebuffering and loss of video, but they are still susceptible to many of the same network-related issues that can affect any network traffic.”
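The adaptation Deneui describes can be sketched in a few lines of Python. This is a simplified illustration and not the logic of any particular player or protocol: the function name `pick_variant`, the bit-rate ladder and the 0.8 safety factor are assumptions chosen for the example.

```python
def pick_variant(variant_kbps, measured_kbps, safety=0.8):
    """Return the highest bit rate that fits within a share of throughput.

    Falls back to the lowest variant so playback can continue even when
    the network cannot comfortably sustain any of them.
    """
    if not variant_kbps:
        raise ValueError("at least one variant is required")
    budget = measured_kbps * safety  # leave headroom for throughput dips
    affordable = [v for v in variant_kbps if v <= budget]
    return max(affordable) if affordable else min(variant_kbps)
```

For a hypothetical ladder of 400, 1,200, 2,500 and 5,000 kb/s, a measured throughput of 4,000 kb/s selects the 2,500 kb/s variant, while a drop to 300 kb/s selects the 400 kb/s variant instead of stopping playback.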

POINTS OF FAILURE

The traditional broadcast chain and the impairments that can occur at each step are well known, but OTT video has its own chain with its own points of failure.

“OTT service could go wrong at multiple points in the delivery chain, starting from the origin of the content,” Wilson said. “The encoding quality at the origin is very important. Media quality must be maintained at the best possible level for each bit-rate variant generated at the origin.

Tektronix Sentry monitors the output of the master encoders.

“During delivery, the availability, distribution and performance of cache servers, usable network bandwidth and transit time all contribute to the final video quality,” Wilson added. “The way in which the client works could also affect the viewer’s experience, particularly the management of player buffer, pre-fetch of content before playing, dynamic management of consumed media quality, as well as the timeliness of fetching the next block.”

Although the recent growth of OTT may have caught some off-guard, some of the underlying technology—and its failure modes—is familiar to the digital video universe.

“Broadly speaking, OTT video is sensitive to the same types of impairment as broadcast video using the H.264/MPEG-4 Part 10 or AVC video codec,” said Paul Robinson, chief technology officer for video at Tektronix in Beaverton, Ore. “That is macroblocking caused by elementary stream syntax errors and visible compression artifacts caused by over compression of the original content.”

HIGH-DEMAND VIDEO

It’s a given that some content will have explosively high viewing demand for a short time, such as a repeat of the Super Bowl’s infamous “wardrobe malfunction” or a hot “Saturday Night Live” skit.

“In the case of high-demand content, viewer experience is always going to be limited by the bandwidth available to the servers and the number of total viewers the servers are designed to handle,” Deneui said. “It’s imperative that content creators understand these limitations and prepare accordingly for the release of content that may be high-demand.”

Manufacturers have responded with a variety of test and measurement solutions, many of which focus on a specific part of the signal chain. Tektronix has two T&M products: Sentry, which is designed to test “linear” content, and Cerify, which tests file-based content prepared for use in on-demand applications. Sentry monitors the output of the master encoders for video and audio impairments that might impact the viewers’ Quality of Experience, and Cerify is used to verify that on-demand (file-based) content will be correctly decoded by the playing device.

“Sentry and Cerify are designed from the outset to test and monitor the actual video and audio content, rather than relying on network, transport layer or other statistics to estimate content quality,” Robinson said. “Although this is difficult to do, it is the only way to ensure that the viewers’ Quality of Experience is accurately represented by the measurement results.”

Pixelmetrix, which has a history of giving its products witty names, recently developed the OTT Media Grinder… OMG, for short.

“Pixelmetrix has developed a comprehensive set of metrics, called VideoMargin, to monitor OTT video delivery,” Wilson said. “OMG is the first product in the family of OTT technology solutions that Pixelmetrix is developing.”

OMG can emulate thousands of OTT clients with widely different behaviors, such as iPhones, smart TVs, home computers and tablets. These simulated devices consume content in a preprogrammed manner, while reporting the VideoMargin parameters and any statistically significant deviations from the expected values.

“VideoMargin metrics provide a single ‘five-nines’ type of measurement to indicate the overall efficacy of the service,” Wilson said.
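The article does not say how VideoMargin is calculated, so the following Python sketch shows only what a generic “five-nines” (99.999 percent) figure means in practice. The function name `allowed_downtime_minutes` is an assumption made for the example.

```python
def allowed_downtime_minutes(availability, period_days=365):
    """Minutes of downtime permitted at a given availability level.

    `availability` is a fraction, for example 0.99999 for five nines.
    """
    if not 0.0 <= availability <= 1.0:
        raise ValueError("availability must be between 0 and 1")
    minutes_in_period = period_days * 24 * 60
    return (1.0 - availability) * minutes_in_period
```

At five nines, a service measured this way could be unavailable for only a little over five minutes in a 365-day year.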

Sencore’s VideoBRIDGE product line includes a family of devices to provide statistical QoS metrics for the smallest-to-largest networks.

“The entire collection of products has been created to easily scale with the size of the network and provide a clear ‘at-a-glance’ understanding of current and historical OTT network performance,” Deneui said.

Sencore’s VideoBRIDGE products monitor the network delivery from the origin server, through the content delivery networks (CDNs), to the end devices. The company also has the CMA1820 compressed media analyzer and MPEGScan media file verification system, which are used to monitor and analyze content prior to its entry into the OTT signal chain.

DIFFERENT SKILLS

At this early point in the life of OTT video, it’s clear that the skills and knowledge necessary to ensure the cleanest possible viewer experience are in many ways very different from those needed for traditional broadcasting.

“If content providers continuously educate themselves on the technology and troubleshooting techniques of OTT video, they will be able to provide the highest-quality product and user experience,” Deneui said. “The OTT video world contains a variety of standards, both proprietary and open, that are ever-changing and require continuous study and awareness by content creators. There are also many adjacent pieces of the OTT puzzle that require content creator awareness and understanding, such as transcoding, fragmentation, packaging, origin server roles and CDNs.”

Tektronix’s Robinson agrees. “Operators are still learning about OTT video, and widespread experience of using streaming video for ‘broadcast’ applications is still evolving.”

A decade ago, one of the buzzwords in the industry was “convergence,” meaning that the worlds of television and computers were someday going to merge into a single, more powerful entity. Although no one then could have predicted exactly what we have today with streaming video and OTT, it’s clear we are now converged.

Bob Kovacs is a television engineer and video producer/director. He can be reached at bob@bobkovacs.com.

Bob Kovacs is the former Technology Editor for TV Tech and editor of Government Video. He is a long-time video engineer and writer, who now works as a video producer for a government agency. In 2020, Kovacs won several awards as the editor and co-producer of the short film "Rendezvous."