NAB: What’s New in the Field of Play

LAS VEGAS—Another NAB means another few trips around the North and Central Halls and both levels of the South Hall to sample the new technologies leading us to the future of sports broadcasting. A big trend this year was the continued emergence of products that combine the power of AI with metadata to enhance and expand the fan experience.

HIGHER RESOLUTION, HIGHER FRAME RATES

On the image capture front, Emergent Vision Technologies (EVT), based in Maple Ridge, B.C., released a 25GigE version of its Bolt camera, which it claims is the world’s first 25GigE camera. 25GigE is the successor to 10GigE, a rapidly growing interface for machine vision applications. It provides the same benefits as 10GigE, but with a 2.5x increase in data rate, which allows a 2.5x increase in frame rate at the same resolution.
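The 2.5x relationship between data rate and frame rate can be checked with a little arithmetic. The sketch below is illustrative only: the sensor resolution and bit depth are hypothetical values rather than Bolt specifications, and protocol overhead is ignored unless an `efficiency` factor is supplied.

```python
def max_frame_rate(link_gbps, width, height, bits_per_pixel, efficiency=1.0):
    """Upper bound on frames per second that fit through a link.

    link_gbps      -- nominal link speed in gigabits per second
    width, height  -- image size in pixels (hypothetical values below)
    bits_per_pixel -- bit depth of the uncompressed image
    efficiency     -- fraction of the link left after protocol overhead
    """
    bits_per_frame = width * height * bits_per_pixel
    usable_bits_per_second = link_gbps * 1e9 * efficiency
    return usable_bits_per_second / bits_per_frame


# Hypothetical 4096 x 3000 sensor at 8 bits per pixel (not a Bolt spec).
fps_10 = max_frame_rate(10, 4096, 3000, 8)
fps_25 = max_frame_rate(25, 4096, 3000, 8)

# For a fixed image size, the ceiling scales linearly with link speed.
print(f"10GigE ceiling: {fps_10:.1f} fps")
print(f"25GigE ceiling: {fps_25:.1f} fps")
print(f"ratio: {fps_25 / fps_10:.2f}x")
```

Because the image size is the same in both calls, the ratio of the two ceilings depends only on the link speeds.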

Like 10GigE, 25GigE is a cross-industry standard that has been in use for many years and is maintained by the IEEE 802.3 working group. It is used in sports applications, among others.

The 25GigE Bolt will be in full production in about two months, according to John Ilett, executive vice president for EVT. He added that the new camera “will take sports television to the next level after we got [to] this point with our HR 10GigE cameras. The Bolt will be an advantage for our customers who need to take a camera into a stadium and connect it with long optical fiber runs, [who also need] higher resolution and higher speeds.”

NATURAL MOVEMENT

Also in focus at NAB was the high-speed Polycam Player, a software solution that integrates MRMC Motion Control, the ChyronHego TRACAB and Nikon cameras. Clubs, leagues and venues need an automated, high-mounted and wide-angle video analysis solution at game time, and this robotic video capture system offers a high level of automation, flexibility and low-light image quality.


Using the TRACAB player tracking solution, Polycam Player physically moves the camera and adjusts the zoom and focus to automatically keep the team or the player in the frame. Unlike other systems, which pan and scan footage from very wide-angle camera arrays, the Polycam Player mimics the natural movement of a camera operator from locations where it would be impossible to place a human shooter.
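As a rough illustration of how tracking data can drive a robotic head, the sketch below converts a tracked position into pan, tilt and focus-distance targets. It is not the Polycam Player's or TRACAB's actual interface: the function names, the coordinate system (meters, with z pointing up) and the pinhole zoom approximation are all assumptions made for this example.

```python
import math


def aim_camera(camera_xyz, target_xyz):
    """Return (pan_deg, tilt_deg, distance_m) to point a camera at a target.

    Both positions are (x, y, z) in meters in a shared, hypothetical
    venue coordinate system with z pointing up.
    """
    cx, cy, cz = camera_xyz
    tx, ty, tz = target_xyz
    dx, dy, dz = tx - cx, ty - cy, tz - cz

    ground_distance = math.hypot(dx, dy)
    pan = math.degrees(math.atan2(dy, dx))                # rotation about the vertical axis
    tilt = math.degrees(math.atan2(dz, ground_distance))  # negative means looking down
    distance = math.sqrt(dx * dx + dy * dy + dz * dz)     # usable as a focus distance
    return pan, tilt, distance


def focal_length_mm(distance_m, scene_width_m, sensor_width_mm=36.0):
    """Pinhole approximation of the focal length that frames scene_width_m."""
    return sensor_width_mm * distance_m / scene_width_m


# Camera mounted 10 m up, player on the ground 10 m away along the x axis.
pan, tilt, distance = aim_camera((0.0, 0.0, 10.0), (10.0, 0.0, 0.0))
zoom = focal_length_mm(distance, scene_width_m=10.0)
print(f"pan={pan:.1f} deg, tilt={tilt:.1f} deg, focus={distance:.2f} m, zoom={zoom:.1f} mm")
```

A real system would also smooth these targets over time so the robotic move resembles an operator's rather than jumping with every tracking sample.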

“The Polycam Player gives analysts and broadcasters a definite advantage at game time. The system uses Nikon cameras, which offer superior image quality, optics and low-light capture ability,” said Mark Suban, senior manager of Nikon Professional Services. “These cameras are housed inside MRMC robotic pods which, unlike other systems, actually move, zoom and focus the camera for maximum precision. The combination of [the various] technologies working together helps ensure that there is no other automated system that can offer this level of flexibility and performance.”

TAP A FINGER

Sportscasters have been able to roll the videotape for more than 40 years, but Capticast SHARE, a Microsoft Azure-based broadcast solution, allows a presenter to take a sportscast in directions previously unattainable. Its charm is that, while managed by producers in the control room, it leverages the Surface Hub as a large format, on-air delivery platform.

The technology allows the sportscaster to deliver the news on-air through an intuitive, broadcast-centric, camera-ready front-end, complete with telestration, direct manipulation and navigation tools. Images, videos and maps are delivered in a single application experience to tell a cohesive story. SHARE comes standard with neo 360 AIR, which allows for full control of the speed, direction and frame-by-frame playback of video with just a swipe or the tap of a finger. Capticast has partnered with Fox Sports to enable fans to play slow-motion video of live events on their smartphones.

“For the first time ever, sportscasters (and newscasters) have full control of the video content shown on-air,” said Capticast CEO David Borish. “This comes at a time when broadcast news organizations are fighting to maintain market share in the face of online competition, and need more engaging storytelling that capitalizes on the live nature of the on-air experience.”

TOUCH & PINCH

In a similar vein is Xeebra, from EVS, which allows not only the gang in the truck but also game officials to easily review a play from numerous camera angles, then select the most relevant on the system’s touchscreen or via a dedicated controller. Users can also zoom into selected images with a simple touch-and-pinch gesture to review every angle in detail quickly, and in complete synchronization.

Xeebra’s client/server architecture guarantees flexibility and allows the user to deploy it wherever needed: on the sidelines, in a venue production control room or a remote officiating facility. The system also uses machine learning, enabled by EVS’s new VIA Mind, to calibrate the field of play; that allows users to accurately overlay an offside line for video assistant referee operations in soccer, for instance.

The flexible client interface supports multiple display screens and keeps content synchronized while browsing up to 16 HD cameras. Xeebra also supports the ingest and browsing of high-frame-rate (SuperMotion) cameras.

“Xeebra brings replay technology to video refereeing for the likes of VAR workflows in soccer,” said Sebastien Verlaine, marketing and communications manager at EVS. “Users benefit from the system’s ability to integrate with broadcast-centric workflows already in place, and its built-in artificial intelligence helps operators overlay graphics, like an offside line, with the highest level of precision.

“The machine learning technology implemented into Xeebra 2.0 automatically calibrates the field of play―something that’s time-consuming and subject to error, if not done carefully,” Verlaine continued. “This doesn’t require any pre-game setup or the deployment of additional sensors or cameras; it uses only the images from the live broadcast camera feeds. As a result, operators can confidently add an offside line to show the referee when a player is offside, making sure the right call is made.”
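To see why field calibration matters for an offside line, consider the minimal sketch below. It assumes calibration has already produced a 3x3 homography that maps pitch coordinates in meters to image pixels, then projects a line across the pitch at the defender's position. This is not EVS's implementation: the matrix values, the function names and the 68-meter pitch width are assumptions chosen for illustration.

```python
def project(H, x, y):
    """Map a pitch point (meters) to image pixels using homography H."""
    u = H[0][0] * x + H[0][1] * y + H[0][2]
    v = H[1][0] * x + H[1][1] * y + H[1][2]
    w = H[2][0] * x + H[2][1] * y + H[2][2]
    return u / w, v / w  # divide by w to apply the perspective


def offside_line(H, defender_x, pitch_width=68.0):
    """Image-space endpoints of the line across the pitch at defender_x."""
    near_touchline = project(H, defender_x, 0.0)
    far_touchline = project(H, defender_x, pitch_width)
    return near_touchline, far_touchline


# Hypothetical calibration result; a real one is estimated from the camera feed.
H = [
    [10.0, 2.0, 100.0],
    [0.0, 8.0, 50.0],
    [0.0, 0.005, 1.0],
]

# Second-to-last defender standing 30 m from the goal line (assumed value).
start, end = offside_line(H, defender_x=30.0)
print("draw line from", start, "to", end)
```

Any error in the homography shifts both endpoints, which is why a careless calibration can put the drawn line in the wrong place.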

A SMART APPROACH TO LIVE

Artificial intelligence, machine learning’s cousin, plays an important role in the debut of a new platform from Tedial, which launched its SMARTLIVE live sport solution at the show. SMARTLIVE marries AI to an innovative metadata engine to tighten integration between the back office and archives. This allows for more highlights, during or after an event, and allows a specific story to be delivered to a targeted audience via multiple platforms including social media, increasing the potential for significant growth in fan engagement.

SMARTLIVE is 100 percent compatible with production asset management (PAM) providers such as SAM or EVS, which means all business processes can be orchestrated on top of an existing PAM, including the use of historical archives in live production. It can then manage the media life cycle or media movements between different sites.

SMARTLIVE brings historical archives into PAM logs live, in a format the PAM can use immediately, which substantially reduces production preparation time. Thanks to its automatic highlight engine and AI integration, it also allows broadcasters, rights holders, clubs and federations to significantly increase the number of highlights created during or after a sports event with less staff.

“The people in the truck, they’re trying to make 10-15 highlight clips per game,” said Jay Batista, general manager, U.S. for Tedial. “We’re going to try to make that 75-100 so they can use them over and over and create more fan engagement. It takes less than a second to quickly assemble 15 clips. That speed and that nearness to the live production is what separates us from the other vendors.”

THE FUTURE IS NOW

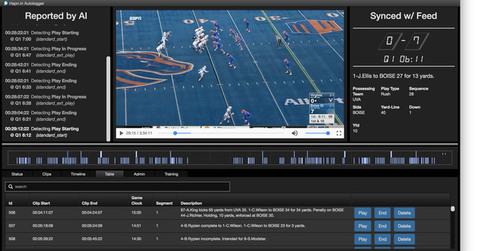

Another company that is taking a similar approach to sports production is Pasadena, Calif.-based Stainless Code, which has developed AI that can watch and understand sports media, extracting metadata automatically, in real time.

The software, Stainless Autologger, automatically processes video by training neural networks to identify and extract metadata from the visual content, while simultaneously accessing external data sources describing the content (for example, sports play-by-plays, Twitter and Facebook feeds, robot sensor data logs or even law enforcement reports). The system can then combine the data with the video automatically, providing rich, time-indexed, semantic metadata.
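The idea of combining visual detections with an external feed can be sketched as a simple time alignment. The example below is not Stainless Code's method: the data shapes, the `merge_metadata` name and the single `clock_offset` correction are assumptions. Each external event is attached to the most recent visual detection at or before its time.

```python
from bisect import bisect_right


def merge_metadata(detections, external_events, clock_offset=0.0):
    """Attach each external event to the latest detection at or before it.

    detections      -- list of (video_time_seconds, label), sorted by time
    external_events -- list of (event_time_seconds, description)
    clock_offset    -- seconds added to event times to convert to video time
    """
    times = [t for t, _ in detections]
    merged = [{"time": t, "label": label, "events": []} for t, label in detections]
    for event_time, description in external_events:
        # Index of the last detection whose time is <= the adjusted event time.
        i = bisect_right(times, event_time + clock_offset) - 1
        if i >= 0:  # events before the first detection are dropped
            merged[i]["events"].append(description)
    return merged


# Hypothetical detections from the video and entries from a play-by-play feed.
detections = [(0.0, "kickoff"), (12.5, "shot"), (30.0, "replay")]
play_by_play = [(13.0, "Shot on goal by No. 9"), (31.2, "Replay of the save")]

timeline = merge_metadata(detections, play_by_play)
for entry in timeline:
    print(entry["time"], entry["label"], entry["events"])
```

A production system would need a more careful clock alignment, since broadcast delay and feed latency rarely reduce to one constant offset.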

The company has one patent and a second pending. “This approach provides a depth and accuracy for video metadata that is not achievable manually,” said Dan Stieglitz, CEO of Stainless Code. “With the explosion of video content that is happening online, some measure of automation is necessary to fully extract the value of the video for publishers and consumers.”

TWO DOZEN ANGLES

With the acceptance of video assistance in making calls on close plays across the sporting world, the pressure to “get it right” is more intense than ever. Even when game officials have access to multiple game feeds, however, that advantage doesn’t always result in the correct call.

Enter the videoReferee, from Torrance, Calif.-based Slomo.tv. The technology can share up to 24 angles to meet even the Fédération Internationale de Football Association’s (FIFA) tight requirements. The system records via multiple cameras, allowing referees to analyze controversial moments frame by frame, concurrently from several cameras; images can also be magnified. The slow-motion playback can also be included in the television broadcast.

The system works by combining multichannel recording with an instant replay system that can obtain scoreboard information to create an interface for video judging. In addition, events can be marked live or recorded, making them instantly available for review. It is used in basketball, soccer, football and many other sports.

“We have a full range of sports video judging solutions, from a budget four cameras to the most powerful 24 channels system,” said CEO Michael Gilman. “We also have HD and 4K systems for broadcasts including special servers that are dedicated to super motion cameras, such as Panasonic’s 4X AK-HC5000.”

Mark R. Smith has covered the media industry for a variety of industry publications, with his articles for TV Technology often focusing on sports. He’s written numerous stories about all of the major U.S. sports leagues.

Based in the Baltimore-Washington area, Smith has also served as the longtime editor-in-chief of The Business Monthly, Columbia, Md. His byline first appeared in TV Technology and in another Futurenet publication, Mix, in the late ’90s. His work has also appeared in numerous other publications.