HPA 2016: The Internet for the Solar System

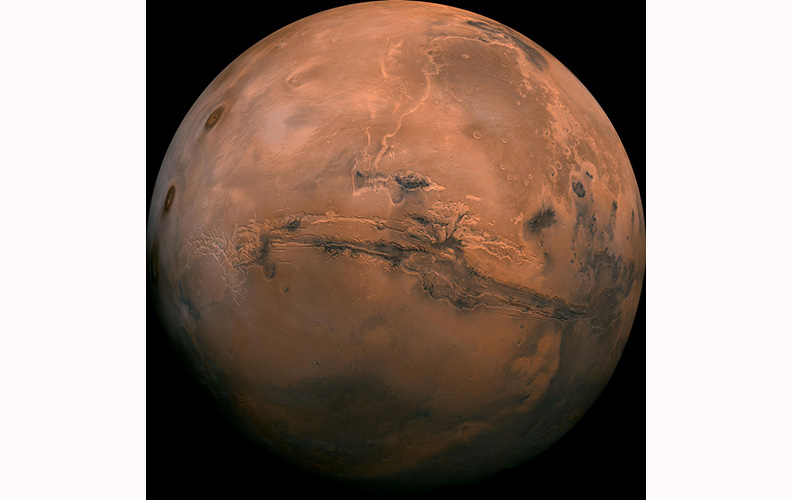

INDIAN WELLS, CALIF. -- NASA is now making preliminary plans for deep space human exploration, likely starting with development of a presence at a Lagrange point (a sort of parking place in space) and then, after sorties to the Moon or asteroids, embarking on a crewed mission to Mars or one of its moons, said Rodney Grubbs of NASA’s Marshall Space Flight Center in Huntsville, Ala.

Grubbs was on hand at the HPA Technology Retreat to reach out to the imaging community, making space geeks swoon in the process.

“NASA and its international partners would of course want to virtually take everyone on Earth along for the ride,” he said. “But the space environment presents many challenges for the use of commercial motion-image technologies.”

Radiation, operation in a vacuum, and extreme temperatures are just the obvious ones. And then there’s getting the imagery from Mars to the public.

With regard to radiation, Grubbs said high-resolution camera sensors on the ISS have been highly susceptible to ionizing radiation damage. Some cameras can have seven to 10 pixels damaged a day. NASA replaces them about once a year.
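For a rough sense of scale, the quoted damage rate can be turned into a yearly total. The sketch below is back-of-the-envelope arithmetic only. It assumes damage accumulates linearly, takes "about once a year" as 365 days, and uses a 1920x1080 sensor purely for comparison. None of those assumptions come from the talk.

```python
# Rates quoted in the talk: seven to 10 damaged pixels per day.
LOW_PER_DAY = 7
HIGH_PER_DAY = 10

# Assumption: "about once a year" is treated as 365 days in service.
DAYS_IN_SERVICE = 365

# Assumption for scale only: a 1920x1080 HD sensor (not stated in the talk).
SENSOR_PIXELS = 1920 * 1080


def damaged_pixels(per_day: int, days: int) -> int:
    """Total damaged pixels, assuming damage accumulates linearly."""
    return per_day * days


low = damaged_pixels(LOW_PER_DAY, DAYS_IN_SERVICE)
high = damaged_pixels(HIGH_PER_DAY, DAYS_IN_SERVICE)

print(f"Damaged pixels after one year: {low} to {high}")
print(f"Share of an HD sensor: {low / SENSOR_PIXELS:.2%} to {high / SENSOR_PIXELS:.2%}")
```

Under those assumptions, a camera picks up a few thousand bad pixels before it is swapped out, which is a small fraction of the sensor but still visible in the picture.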

“JPL spends a lot of money on cameras,” he said.

There have been efforts to create radiation-hardened cameras.

NASA used a Panasonic 3DA1 on the last ISS flight. It had fewer dead pixels.

“We have absolutely no idea why that camera behaved particularly better than other cameras,” he said.

VR/360 cameras offer the advantage of no moving parts. A Red camera was taken to the ISS. NASA hadn’t really played with Bayer-patterned professional cameras before, Grubbs said.

They’ve found that CMOS sensors are less susceptible than CCDs. The Japanese space agency had an HD camera on a moon probe, Selene. The camera didn’t suffer as much as was expected, possibly because of its proximity to the fuel tanks.

The camera glass can also begin to fade or turn yellow.

Cameras mounted outside also have to work in a vacuum, where heat dissipation is a problem because fans have no air to move.

And talk about fickle temperatures: in orbit, everything is exposed to more than 260 degrees Fahrenheit in daylight and falls below minus 260 degrees in darkness.

Also, there are bandwidth constraints, and video requires orders of magnitude more bandwidth than all other communications. Conventional radio frequency transmission takes power and large antennas. Optical offers more bandwidth in bursts, but has problems with availability and aiming of antennas.

With regard to link integrity, conventional two-way IP connections are not practical due to breaks in links and latency between nodes, Grubbs said.

“If you’re in space, you can have all sorts of disruptions. Solar flares, moons… other planets… all sorts of things,” he said.

On the command and control front, ground command of remote cameras, encoders and related systems typically requires two-way communications. Think latency between here and Mars.
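To put a rough number on that latency, the signal delay is simply distance divided by the speed of light. The sketch below uses approximate closest and farthest Earth-Mars distances (about 54.6 million and 401 million kilometers). Those figures are commonly cited values, not numbers from the talk, and the function names are illustrative.

```python
# Speed of light in vacuum, in kilometers per second (exact by definition).
SPEED_OF_LIGHT_KM_S = 299_792.458

# Assumption: approximate Earth-Mars distances, not figures from the talk.
EARTH_MARS_MIN_KM = 54.6e6   # near closest approach
EARTH_MARS_MAX_KM = 401e6    # near maximum separation


def one_way_delay_s(distance_km: float) -> float:
    """Seconds for a radio or optical signal to cover the distance."""
    return distance_km / SPEED_OF_LIGHT_KM_S


def round_trip_delay_s(distance_km: float) -> float:
    """Seconds for a command to go out and its acknowledgment to return."""
    return 2 * one_way_delay_s(distance_km)


for label, km in (("closest", EARTH_MARS_MIN_KM), ("farthest", EARTH_MARS_MAX_KM)):
    one_way_min = one_way_delay_s(km) / 60
    round_trip_min = round_trip_delay_s(km) / 60
    print(f"{label}: one-way {one_way_min:.1f} min, round trip {round_trip_min:.1f} min")
```

With these inputs the one-way delay runs from roughly three minutes to more than 20, so a ground operator adjusting a camera on Mars would wait somewhere between about six and 45 minutes to see the result of a single command.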

“This mission will include relay satellites that pass the data and buffer it. It’s designed with long space links in mind. It’s billed as the Internet for the solar system,” Grubbs said.
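The "pass the data and buffer it" idea can be illustrated with a toy store-and-forward relay. This is only a minimal sketch of the general concept, not the actual protocol or software Grubbs was describing. The class names, the single-hop topology and the frame labels are all hypothetical.

```python
from collections import deque


class GroundStation:
    """Hypothetical final destination that records what it receives."""

    def __init__(self):
        self.delivered = []

    def receive(self, bundle):
        self.delivered.append(bundle)


class RelayNode:
    """Hypothetical relay that holds data until its outbound link is up."""

    def __init__(self, name, next_hop):
        self.name = name
        self.next_hop = next_hop
        self.buffer = deque()   # data waiting for a working link
        self.link_up = False    # assume the link starts out unavailable

    def receive(self, bundle):
        # Always store first, then forward if the link allows it.
        self.buffer.append(bundle)
        self.flush()

    def set_link(self, up):
        # A link coming back (for example, after an occultation) triggers a flush.
        self.link_up = up
        self.flush()

    def flush(self):
        # Forward buffered data in arrival order while the link is up.
        while self.link_up and self.buffer:
            self.next_hop.receive(self.buffer.popleft())


earth = GroundStation()
relay = RelayNode("mars-relay", next_hop=earth)

relay.receive("frame-1")       # link is down, so the frame is held
relay.receive("frame-2")
held_while_down = len(relay.buffer)

relay.set_link(True)           # link restored, buffered frames are forwarded
print(held_while_down, earth.delivered)
```

The point of the design is that a broken link delays delivery rather than dropping the data, which is what a conventional two-way connection cannot promise over interplanetary distances.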
