Few things in our industry have changed as much as prompting. Until the 1970s, prompting was a mechanical, not electronic, process. Sometimes text was laid up on menu boards — which some readers have likely never seen — by hand, one letter at a time.

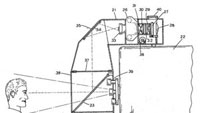

When technology arrived, it was in the form of a continuous script typed on a large mechanical typewriter. The concept was allegedly developed to help Lucille Ball read commercials on television, a claim backed up by a patent issued to the producer of the program in 1959. To quote the patent, “The present invention relates to a novel apparatus for visual presentation of program or speech material to speakers, actors, and in general to individuals who appear in public or before television and movie cameras.” (See Figure 1.)

This invention was novel indeed, and innovation continued, with the paper rolls replaced by a CRT display fed by a camera shooting down at text rolling by on a flatbed track. Though not very portable, it was a major improvement over rolls of paper. Eventually, this progress led to the arrival in 1982 of the first computer-based system, from Compu=Prompt. The system ran on an Atari 800 PC. Its innovation was to use the graphics output of a computer, so that prompting text could be written in word processing software instead of on manual typewriters.

Many variations on the general theme exist. Systems vary in complexity and size to suit field and permanent studio usage. Small, portable, battery-powered systems with lightweight LCD monitors suitable for HDV or similarly sized cameras are sometimes used for wraparounds by documentary units. Larger systems suitable for cameras sitting a considerable distance away from presenters are equally important in the market. Though early systems suffered from internal reflections and flare caused by the lenses and mirrors available at that time, modern systems present much less of a problem in normal environments.

The connection between captioning and prompting

Today, prompting has become a fixture in all broadcast newsrooms, as well as in almost all venues where public speaking is done. The half-silvered mirror of the first patent is still the dominant display method, though CRT monitors have been replaced by LCD monitors that can run off batteries in the field, fed by laptop software packages.

At least one company has put the software in the display, essentially using an integrated PC and display, allowing the text to be delivered by USB thumb drives or even Wi-Fi access. Controls have evolved as well, including wireless handheld units. For example, picture a PDA displaying the text with a simple scroll function controlled from a touch screen. The original innovators would be jealous of the flexibility and capability that has evolved.

More importantly, a symbiotic relationship has developed between prompting and closed-captioning software. The reason is simple: Why type the same text twice, once for captioning and once for prompting? The script, usually written for news programming in a newsroom automation system and fed to the prompting system as a file, can be delivered at the same time to a closed-captioning encoder. This replaces a manual step with an automated and more accurate electronic workflow. Otherwise, a captioning operator would have to listen to the presenter and type the text again, live into a captioning system.
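The "type it once" idea can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not how any particular newsroom or prompting product works: the function names, the 24-character prompter width and the plain-text script are all hypothetical, and the 32-character caption width is assumed to match a CEA-608 caption row.

```python
import textwrap

# Assumed display widths (not from the article): 32 characters matches a
# CEA-608 caption row; 24 is an arbitrary, hypothetical prompter width.
CAPTION_WIDTH = 32
PROMPTER_WIDTH = 24


def format_script(script: str, width: int) -> list[str]:
    """Wrap one script into display lines of at most `width` characters."""
    lines: list[str] = []
    for paragraph in script.splitlines():
        if paragraph.strip():  # skip blank lines between paragraphs
            lines.extend(textwrap.wrap(paragraph, width=width))
    return lines


def fan_out(script: str) -> dict[str, list[str]]:
    """Produce prompter lines and caption lines from a single source script."""
    return {
        "prompter": format_script(script, PROMPTER_WIDTH),
        "captions": format_script(script, CAPTION_WIDTH),
    }
```

Both outputs come from the same text, so a correction made in the script reaches the presenter and the caption encoder alike. A real system would hand the caption lines to encoder hardware or software, which this sketch leaves out.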

It takes unbelievable concentration to transcribe someone's words accurately and without break. It is no wonder that people trained as court reporters often do this work. The keyboards used for captioning are essentially the same as the transcription keyboards used in courts of law. Companies specializing in the stenographic market also supply software that can output the live stream needed to feed a closed-captioning encoder.

Two standards

Modern captioning requires straddling two worlds, one of which will become less important after Feb. 17, 2009, when analog TV broadcasting stops. Of course, I am referring to the two standards for captions, CEA-608-B and CEA-708-B. (Other standards defining creation, carrying and delivery of closed captions include, in part, SMPTE 333, SMPTE 334, SMPTE EG 43, ATSC A/65 and A/53, SCTE 43 and SCTE 54.)

Although both CEA standards carry the same kind of data, the systems operate differently. The 608 captions cannot be controlled to any great extent beyond simply on/off capability. The history of the FCC's regulatory statements on closed captioning almost doesn't matter at this point. Suffice it to say, essentially all programming must be delivered with captions, and all set-top boxes and televisions must support 708 captions. The 708 captions, in a fully implemented receiver, allow the viewer to select how the captions will be presented, including, for example, transparency on-screen and color. The broadcaster can choose how the text is presented, including animation options such as rollup, scroll-on and pop-on. This aids with captions that are done live, allowing options that do not delay the delivery to the screen until a full sentence is complete.

Caption complications

Captions can vastly complicate the business of delivering alternate versions of content. For example, content that originates in the United States in English is almost always resold overseas or into Spanish language stations in the United States. Caption data must be added to programs in perhaps many languages on DVDs and other package media. Keeping all of this in sync is not a trivial matter. Synchronizing the insertion of caption data to time code can largely eliminate the need to caption content live. Using time code as a reference, a closed-caption system can pull the data and synchronize it automatically. Of course, this means that a significant amount of metadata must be carried with the essence (content).

As all broadcast systems become more “metadata aware,” there will likely be many instances of precisely this problem. Open titles — such as those “burned in” on-screen, as well as closed captions, hidden or displayed as a user's choice, closing credits, underwriting messages, popup ads, voice-over content and many other program variables — require the same structured data storage and synchronization to effect usage in multiple markets. The data is usually carried in VANC data space in SMPTE 259 and SMPTE 292 Serial Digital Video, and bridged to other transport methods when encoded for compressed transmission.

One last comment

Although closed captions were created by Congress to enhance the viewing experience for the hearing impaired, one of their most important uses is for people whose first language is not the language spoken in a program. Closed captions make content accessible to immigrants as well as those with hearing loss. In emergency situations, this could mean the difference between loss of life and safety for viewers with limited language skills.

John Luff is a broadcast technology consultant.

Send questions and comments to: john.luff@penton.com