Performance Tuning


Digital video and audio need to be handled in the environment best suited to them. By its very nature, A/V content has an affinity for being recorded or played back at its real-time rate.

If a system could identify the data's structure while content was being received, copied or moved, then that data could be arranged to best fit the form of storage on which it will reside next.

Systems could be laid out so that the data structure addresses certain chunk sizes. Then, when that data is accessed, it is already optimized for the way the system reads it.

Under the hood, what the system is actually being optimized for is its ability to respond based upon "I/O operations per second" (IOPS). This is where the real battleground is shaping up in media operations.
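As a rough illustration of why chunk size matters, the same sustained data rate translates into very different IOPS figures depending on how much data each operation moves. The sketch below is only back-of-the-envelope arithmetic: the function name `required_iops`, the 100 MB/s data rate and the chunk sizes are illustrative assumptions, not measurements from any particular system.

```python
def required_iops(bytes_per_second: float, chunk_bytes: int) -> float:
    """Return the I/O operations per second needed to sustain a data rate
    when every operation moves exactly one chunk of chunk_bytes."""
    if chunk_bytes <= 0:
        raise ValueError("chunk_bytes must be positive")
    return bytes_per_second / chunk_bytes


MB = 1_000_000
stream_rate = 100 * MB  # hypothetical sustained data rate, in bytes per second

# Hypothetical chunk sizes: small, medium and large I/O requests.
for chunk in (4_000, 64_000, 4 * MB):
    iops = required_iops(stream_rate, chunk)
    print(f"{chunk:>9} B chunks -> {iops:,.1f} IOPS")
```

Under these assumptions, moving the data in small chunks demands orders of magnitude more operations per second than moving it in multi-megabyte chunks, which is why matching chunk size to the workload matters as much as raw bandwidth.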

ALLEVIATING BOTTLENECKS

Today's all-encompassing shared storage environments are tuned to address the number of IOPS needed for the application(s).

This is not the routine record or playout of baseband A/V. Here, optimization really addresses the number of devices that access storage during operations such as edit-in-place, file transcoding, graphics and digital audio post.

The challenge of meeting IOPS demands has recently been met with a new tool kit that may help alleviate the bottlenecks in moving data between device and storage. You may have been wondering what the real focus of the latest storage technologies is, or why there is a push toward the solid-state drive (SSD).

The answer is not in "greening up" or reducing the risk of disk drive failures. The justification is that solid-state drives allow short-term data to be moved very quickly so it can be repeatedly accessed in smaller chunks during production workflows.

Nonlinear editing applications are handled quite differently from large bulk transfers of sequential data, such as ingest or playout. The NLE is an ideal candidate for an SSD front end.

The problem is that SSDs raise issues that have yet to be worked out. The operating system, peripheral short-term storage, long-term storage, and the management of transfers between the SSD and peripheral or long-term storage are just a few of the architectural areas involved. But work is underway; expect to see faster SSD caches coming to an NLE near you.
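To make the idea of an SSD front end more concrete, the toy model below keeps recently read chunks in a small, fast tier and falls back to slower storage on a miss. It uses a least-recently-used eviction policy, which is an assumption chosen for illustration; nothing here describes how any real product manages its cache. The class `ChunkCache` and the `backing_read` callback are hypothetical names.

```python
from collections import OrderedDict
from typing import Callable, Hashable


class ChunkCache:
    """Toy read cache standing in for a fast tier in front of slower storage.

    Eviction is least-recently-used (an assumption for this sketch).
    """

    def __init__(self, capacity: int, backing_read: Callable[[Hashable], bytes]):
        self.capacity = capacity
        self.backing_read = backing_read  # hypothetical read from slow storage
        self._chunks: "OrderedDict[Hashable, bytes]" = OrderedDict()
        self.hits = 0
        self.misses = 0

    def read(self, chunk_id: Hashable) -> bytes:
        if chunk_id in self._chunks:
            # Served from the fast tier; mark the chunk as most recently used.
            self._chunks.move_to_end(chunk_id)
            self.hits += 1
            return self._chunks[chunk_id]
        # Miss: fetch from slow storage, then keep a copy in the fast tier.
        self.misses += 1
        data = self.backing_read(chunk_id)
        self._chunks[chunk_id] = data
        if len(self._chunks) > self.capacity:
            self._chunks.popitem(last=False)  # evict the least recently used
        return data


# An editing session tends to revisit the same chunks of a clip.
cache = ChunkCache(capacity=2, backing_read=lambda cid: f"chunk-{cid}".encode())
for cid in [1, 2, 1, 3, 2]:
    cache.read(cid)
print(cache.hits, cache.misses)
```

The hit and miss counters give a crude picture of how much repeated access the fast tier absorbs for a given access pattern and capacity.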

CONTENT AWARENESS

Modern storage systems need to possess "content awareness" capabilities. By this we mean that through the use of structural metadata, chunks of data can be categorized and placed onto optimized storage sets that best address the system IOPS requirements.

Storage systems are then "tuned" to meet the various read and write affinities. If your system is agile enough to address the placement of data based upon structural metadata, then the hidden management of the data types can be optimized without the user or the administrator continually shuffling the data between storage containers.
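A minimal sketch of this kind of metadata-driven placement might look like the following. Everything named here is hypothetical: the `StructuralMetadata` fields, the pool names and the 1 MB threshold are assumptions made for illustration, not features of any specific file system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StructuralMetadata:
    media_type: str   # e.g. "video", "audio", "graphics"
    chunk_bytes: int  # typical size of one read or write for this content


# Hypothetical storage sets, each assumed to be tuned for one access pattern.
POOL_BY_MEDIA_TYPE = {
    "video": "large-block-pool",
    "audio": "small-block-pool",
    "graphics": "infrequent-access-pool",
}

LARGE_CHUNK_THRESHOLD = 1_000_000  # assumed cutoff, in bytes


def choose_pool(meta: StructuralMetadata) -> str:
    """Pick a storage set from structural metadata alone, so neither the user
    nor the administrator has to move the data by hand."""
    pool = POOL_BY_MEDIA_TYPE.get(meta.media_type)
    if pool is not None:
        return pool
    # Unknown media type: fall back on chunk size.
    if meta.chunk_bytes >= LARGE_CHUNK_THRESHOLD:
        return "large-block-pool"
    return "small-block-pool"


for meta in (
    StructuralMetadata("video", 4_000_000),
    StructuralMetadata("audio", 4_096),
    StructuralMetadata("subtitles", 512),
):
    print(meta.media_type, "->", choose_pool(meta))
```

The point of the sketch is only that placement follows from the metadata itself; a real system would weigh many more factors than media type and chunk size.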

Through buffering and IOPS tuning, controlled by the structural metadata that the file system obtains, users can adjust system performance either to meet the needs of the workgroups that access the data or to modify bandwidth according to what their current workflow or tasks require.

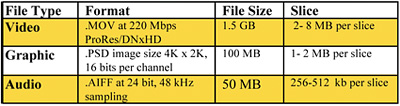

Fig. 1: These three A/V file types have three distinct and radically different dimensions.

To help understand this, Fig. 1 shows three A/V file types with distinct and radically different dimensions.

Placing all of these files into the same storage bucket, sized for the largest of them, would not give the best system performance for each media type. Instead, the storage architecture and the system IOPS would be arranged to meet the requirements of each media type, allowing the specifics of each file to be optimized for the workflow it addresses.

This means audio files would be segmented to the digital audio workstation clients, possibly on their own VLAN, so that these smaller slices are not bogged down by, for example, the 2-8 MB slices in full motion video. The same goes for the graphics system, where access is far less frequent.

Here, workstations running Adobe Photoshop would get their best IOPS performance based upon file size and access periods.

Understanding these particulars, and then applying that knowledge to improve system performance, is the job of the "new" media-centric IT administrator. Yet these are just two of the evolving concerns surrounding how to build and operate a collaborative shared storage system.

Next we'll look at the concerns surrounding resiliency, bandwidth and latency.

The author would like to thank Matthew Rehrer of Omneon—now a part of Harmonic—for the background and inspiration of this article.

Karl Paulsen is a technologist and consultant to the digital media and entertainment industry who is with Diversified Systems. Karl can be contacted at kpaulsen@divsystems.com.


Karl Paulsen recently retired as a CTO and has regularly contributed to TV Tech on topics related to media, networking, workflow, cloud and systemization for the media and entertainment industry. He is a SMPTE Fellow with more than 50 years of engineering and managerial experience in commercial TV and radio broadcasting. For over 25 years he has written on featured topics in TV Tech magazine—penning the magazine’s “Storage and Media Technologies” and “Cloudspotter’s Journal” columns.