Examining Video Server Configurations

Karl Paulsen

ANOTHER DISNEY CRUISE— Video servers are becoming commodity-like components for many applications, whether for a traditional television station play-to-air system or as an element in a content delivery platform. Broadcast servers, sometimes referred to as “transmission” servers, primarily collect and replay program, commercial and interstitial content stored as files.

Regardless of the application, the parameters assessed in the design/selection process are similar. The basic parameters start with the number of concurrent record/play operations expected. Codec types, wrappers, bit-rates and profiles must also be considered, along with any peripheral operations outside of the rec/play functions, such as file transfer, transcoding, QC or archive.

Lastly, the usable program storage hours or terabytes of usable disk space readily accessible to all of the previously mentioned operations must be calculated.
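The relationship between usable disk space and program hours can be sketched in a few lines. This is a minimal illustration, not a vendor sizing tool: the function names are hypothetical, decimal units are assumed (1 TB = 10^12 bytes, 1 Mbps = 10^6 bits per second), and the bit-rate is taken to be the total rate written to disk, so audio, ancillary data and wrapper overhead would have to be folded into that number.

```python
def storage_hours(usable_terabytes: float, bitrate_mbps: float) -> float:
    """Hours of content that fit in the usable capacity at a given bit-rate.

    Assumptions (not from any vendor spec): decimal units, and
    bitrate_mbps is the total rate actually written to disk.
    """
    if usable_terabytes < 0 or bitrate_mbps <= 0:
        raise ValueError("capacity must be >= 0 and bit-rate must be > 0")
    total_bits = usable_terabytes * 1e12 * 8       # terabytes -> bits
    seconds = total_bits / (bitrate_mbps * 1e6)    # bits / (bits per second)
    return seconds / 3600.0


def terabytes_needed(hours: float, bitrate_mbps: float) -> float:
    """Usable terabytes required to hold a number of program hours."""
    if hours < 0 or bitrate_mbps <= 0:
        raise ValueError("hours must be >= 0 and bit-rate must be > 0")
    total_bits = hours * 3600 * bitrate_mbps * 1e6
    return total_bits / 8 / 1e12                   # bits -> terabytes


if __name__ == "__main__":
    # One usable terabyte at 50 Mbps holds roughly 44.4 hours.
    print(f"{storage_hours(1, 50):.1f} hours per TB at 50 Mbps")
    # 1,000 program hours at 50 Mbps need about 22.5 usable terabytes.
    print(f"{terabytes_needed(1000, 50):.1f} TB for 1,000 hours at 50 Mbps")
```

Either direction of the calculation may be useful: start from a required number of program hours to size the storage, or start from a fixed chassis capacity to see how many hours it yields at the house bit-rate.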

DIFFERENT CONFIGURATIONS

Professional video server platforms offer modules packaged as record-only, play-only or a combination of play-and-record. The modules are enabled as a hardware set (in a blade or slot-based configuration) or as software-based codecs. Various configurations allow for the distribution of services across different chassis make-ups, I/O formats and server components. This further provides for varying operational uses based upon workflows.

For example, a central ingest area needs more record-only ports than a configuration that serves a live-production or a play-to-air environment. The central ingest area may also review ingested content for visual and audio quality, check timing and trim the tops and tails of clips, so some playout capability is necessary.

In these instances, some server ports would include play-and-record capabilities controlled by the facility’s automation or program preparation system.

Presentation or transmission areas (aka “master control”) mainly employ play-only functions, in contrast to the record-only ports found in central ingest. With the server’s modular port capabilities, a user would configure mostly play-only ports. However, master control may need “just-in-time” record capabilities for those periods when all other ingest systems are in use, or when a live program needs to be delayed due to a breaking news event that supersedes the regularly scheduled program.

Studio production systems usually have a mixture of both record and playback functions. Editorial needs a similar complement of rec/play operations, although often on dedicated servers tailored specifically for post production and video editing.

Editing demands an entirely different set of capabilities from transmission and ingest. Those requirements are not addressed here because they are unique and rely on special features not usually found in transmission servers.

Studio production may need “clip-servers” for the rec/play of short segments, bumpers or animations. Production switchers provide those capabilities using solid-state storage with motion-JPEG (or similar) files and will seldom burden production or transmission servers, which are then dedicated to longer-form content.

CODEC SELECTION

Codec selection is governed mainly by the field record medium (e.g., P2, XDCAM HD, AVC-Intra) or by a given house standard. As users trend more towards high-definition formats, the choices become geared to how the content is used in post production or editorial.

For news applications, a bit-rate of 35 Mbps is acceptable for most over-the-air or cable broadcast operations. For higher-end production, such as action sports or documentary, many select 50 Mbps and up (possibly AVC-Intra 100).

When the editing platform is designed around a specific codec (e.g., Avid DNxHD or Final Cut ProRes), then the transmission codec may be based on that format, often necessitating a format transcode (to XDCAM or similar) prior to release or transmission. As production tends toward more and better pixels, the ability to scale up to 1080p60 may be worth calculating before selecting a particular video server configuration. Servers for UHDTV or 4K images are considered “specialty” devices and for production purposes are offered only by a few select manufacturers.

Next, users need to consider all the other anticipated peripheral requirements, such as FTP into or out of the storage or server systems. Support for tasks such as transcoding, quality assurance (file checking) or contributions to any “out of the house” operations (for example, sending to a CDN, a hosting service or the cloud) must be accounted for. If an archive system (tape or other nearline storage platform) is called for, its transfers must be included in the bandwidth calculations for peripheral activities.

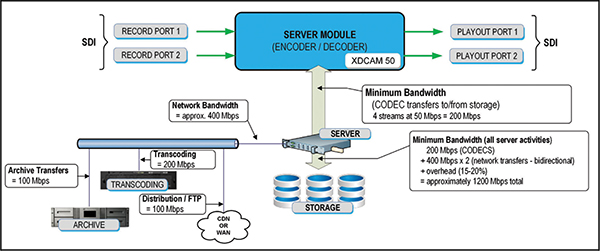

Once all the usage data is accumulated and the ins and outs are accounted for (bits ingested into the system, content restored from an archive, bits sent to the playout chain or the archive, and so on), a diagram (Fig. 1) is created that describes the overall system bandwidth the primary components must serve.

Fig. 1: Example bandwidth calculations for a hypothetical server system with peripheral activities including 50 Mbps record/play, archiving, transcoding and content distribution (worst case).

These components include (a) the server management system, an IT-centric component that regulates or throttles all the activities; and (b) the data capacity requirements of the storage system relative to the amount of bits flowing to/from the disk drives.

Finally, a figure of merit (i.e., overhead) associated with the overall number of IOPS needed to keep up with peak demands (and still not drop frames or miss cues) must be added.
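The tally of record, play and peripheral traffic, plus an overhead margin, can be sketched as a simple sum. The stream counts, per-stream rates and the 25 percent overhead below are hypothetical values chosen for illustration; they are not taken from Fig. 1, and the `Activity` class and function names are likewise assumptions.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class Activity:
    name: str
    streams: int            # concurrent operations at the worst-case peak
    mbps_per_stream: float  # sustained rate of each operation, in Mbps


def raw_bandwidth_mbps(activities: Iterable[Activity]) -> float:
    """Sum of all concurrent traffic before any safety margin."""
    return sum(a.streams * a.mbps_per_stream for a in activities)


def peak_bandwidth_mbps(activities: Iterable[Activity],
                        overhead: float = 0.25) -> float:
    """Raw bandwidth scaled up by an overhead fraction (0.25 = 25 percent)."""
    if overhead < 0:
        raise ValueError("overhead must be >= 0")
    return raw_bandwidth_mbps(activities) * (1.0 + overhead)


# Hypothetical worst-case mix for a small play-to-air system.
EXAMPLE_ACTIVITIES = [
    Activity("record", streams=4, mbps_per_stream=50.0),
    Activity("play", streams=8, mbps_per_stream=50.0),
    Activity("archive transfer", streams=2, mbps_per_stream=100.0),
    Activity("transcode", streams=2, mbps_per_stream=100.0),
    Activity("CDN delivery", streams=1, mbps_per_stream=50.0),
]

if __name__ == "__main__":
    print(f"raw:  {raw_bandwidth_mbps(EXAMPLE_ACTIVITIES):.1f} Mbps")
    print(f"peak: {peak_bandwidth_mbps(EXAMPLE_ACTIVITIES):.1f} Mbps")
```

File-based transfers such as archive and transcode often run faster than real time, which is why the example gives them a higher per-stream rate than the 50 Mbps record/play channels. The right overhead figure depends on the storage system and should come from the server provider.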

Once the total bandwidth and IOPS are known, the engineer calculates the minimum number of spindles (i.e., the number of physical disk drives) necessary to meet the bandwidth of the system. This is best done by analyzing the drive configurations in terms of the RAID-set type, drive count, the speed of the disk drives, the number of logical unit numbers (LUNs) needed and the drive capacity (terabytes per drive) multiplied by the fraction of space that remains usable after RAID overhead.
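A rough first pass at the spindle count might look like the sketch below. It is a bandwidth-only estimate under stated assumptions: each data drive is credited with a fixed sustained throughput (a hypothetical figure that in practice depends on drive type, access pattern and RAID level), parity drives are assumed to add no usable bandwidth or capacity, and IOPS, LUN layout and rebuild penalties are not modeled. The `plan_raid` function and its parameters are illustrative names only.

```python
import math
from typing import NamedTuple


class RaidPlan(NamedTuple):
    raid_sets: int
    total_drives: int
    usable_tb: float


def plan_raid(required_mbps: float,
              per_drive_mbps: float,
              drives_per_set: int,
              parity_per_set: int,
              drive_tb: float) -> RaidPlan:
    """Smallest whole number of RAID sets that meets a bandwidth target.

    Bandwidth-only estimate: IOPS and rebuild penalties are ignored.
    """
    if required_mbps < 0 or per_drive_mbps <= 0 or drive_tb <= 0:
        raise ValueError("rates and capacities must be positive")
    if not 0 <= parity_per_set < drives_per_set:
        raise ValueError("parity_per_set must be in [0, drives_per_set)")

    data_per_set = drives_per_set - parity_per_set
    # Data drives needed to carry the load, rounded up to whole drives.
    data_drives = math.ceil(required_mbps / per_drive_mbps)
    # Drives are deployed in whole RAID sets; always build at least one.
    raid_sets = max(1, math.ceil(data_drives / data_per_set))
    return RaidPlan(
        raid_sets=raid_sets,
        total_drives=raid_sets * drives_per_set,
        usable_tb=raid_sets * data_per_set * drive_tb,
    )


if __name__ == "__main__":
    # Hypothetical: 5,000 Mbps peak, 400 Mbps per drive,
    # RAID 6 sets of 6 drives (4 data + 2 parity), 4 TB drives.
    print(plan_raid(5000, 400, drives_per_set=6, parity_per_set=2, drive_tb=4.0))
```

With those hypothetical inputs the plan comes out to four RAID sets, 24 drives and 64 usable terabytes. If the usable capacity falls short of the program hours required, the drive count is set by capacity rather than bandwidth, and the larger of the two results governs.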

Drive systems can include both flash (solid-state) and spinning magnetic media. The overall performance figures of merit for the transfer interface (e.g., Fibre Channel, SAS or SATA) and for the applications expected must also be factored in.

Professional video server system providers have all the resources necessary to figure these items out based upon their specific systems. The engineer’s task then becomes knowing how to specify each of the functional parameters necessary to support their operations.

And of course, if you want to “grow your own” video server system, you need to thoroughly understand all these issues—and probably a whole lot more!

Karl Paulsen, CPBE and SMPTE Fellow, is the CTO at Diversified Systems. Read more about other storage topics in his book “Moving Media Storage Technologies.” Contact Karl at kpaulsen@divsystems.com.

Karl Paulsen recently retired as a CTO and has regularly contributed to TV Tech on topics related to media, networking, workflow, cloud and systemization for the media and entertainment industry. He is a SMPTE Fellow with more than 50 years of engineering and managerial experience in commercial TV and radio broadcasting. For over 25 years he has written on featured topics in TV Tech magazine—penning the magazine’s “Storage and Media Technologies” and “Cloudspotter’s Journal” columns.