The professional video industry's #1 source for news, trends and product and tech information. Sign up below.

SAN ANTONIO, TEXAS—With immersive audio knocking on the door of broadcast television, it seems like a good time to investigate some ways to create content for the format. According to some in the industry, a look at the future of immersive audio requires us to charge up the flux capacitor, hop in the DeLorean, and travel back to 1975.

At the 2015 NAB Show, Pro Sound Effects demonstrated an Ambisonics sound effects library of London ambiences. Initially I was skeptical about the utility of these sounds, but, after previewing them and realizing the amount of control available, I walked away intrigued by the prospect of creating and using sound effects that were immersive all the way from capture to consumer.

Testing was done with the SurroundZone 2 software provided with the sound effects library to decode the B-format audio and recreate the captured sound field.

Considered by some to be a dead format, Ambisonics has resurfaced as a possible immersive format, especially since it was created to capture and reproduce 3D audio from the outset. While it’s important to look at how Ambisonics works as an immersive format, we also need to see how it holds up when listening is non-immersive. Though the London sounds previewed at the NAB Show are not yet available, a library of New York City Ambisonic sounds was released and used as test material for this column, along with some B-format recordings I made several years ago.

While we will look specifically at Ambisonics technology in this column, there are other methods of capturing multichannel sound.

COINCIDENT PAIRS

All microphones designed for capturing sound fields and ambiences are composed of some array of capsules, either spaced at a calculated distance or based on some form of coincident pair, where two microphones are placed nearly touching, facing the sound source, with their capsules angled (typically) 90 degrees to each other. X/Y, Mid-Side (M-S), and Blumlein Stereo are coincident pairs, and it was, in fact, Alan Blumlein, the famed English electronics engineer, who developed this technique in the 1930s.

The benefit of coincident pairs is that timing and phase errors are largely eliminated because the source sound arrives at both capsules at essentially the same time, regardless of direction. A source directly in front arrives with equal amplitude at both capsules, while a source to either side arrives with differing amplitude, so the stereo image is carried by level differences rather than arrival-time differences. The elimination of timing and phase errors also means that the sound captured with coincident pairs does an excellent job of folding down to a single channel.
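To make the fold-down argument concrete, here is a minimal Python sketch comparing the mono sum of an idealized coincident pair with that of a spaced pair. Everything in it is an illustrative assumption rather than a measurement: the 48 kHz sample rate, the 1 kHz test tone, the 0.5 ms inter-capsule delay for the spaced pair, and the helper names `tone`, `mono_sum` and `peak`. The coincident pair is modeled as identical arrival time with different levels; the spaced pair as equal levels with a half-period delay.

```python
import math

SAMPLE_RATE = 48_000  # Hz (assumed for illustration)


def tone(freq_hz, n_samples, delay_s=0.0, gain=1.0):
    """Return a sine tone with an optional arrival delay and gain."""
    return [
        gain * math.sin(2 * math.pi * freq_hz * (n / SAMPLE_RATE - delay_s))
        for n in range(n_samples)
    ]


def mono_sum(left, right):
    """Fold two channels down to one by simple addition."""
    return [l + r for l, r in zip(left, right)]


def peak(signal):
    """Largest absolute sample value."""
    return max(abs(s) for s in signal)


n = 480          # 10 ms of audio at 48 kHz
freq = 1000.0    # 1 kHz test tone

# Idealized coincident pair: same arrival time, level difference only.
co_left = tone(freq, n, gain=1.0)
co_right = tone(freq, n, gain=0.5)
coincident_peak = peak(mono_sum(co_left, co_right))

# Spaced pair: equal level, right capsule hears the tone 0.5 ms later
# (half a period at 1 kHz), the worst case for a mono sum.
sp_left = tone(freq, n)
sp_right = tone(freq, n, delay_s=0.0005)
spaced_peak = peak(mono_sum(sp_left, sp_right))

print(f"coincident mono peak: {coincident_peak:.3f}")
print(f"spaced mono peak:     {spaced_peak:.3e}")
```

In this contrived case the coincident sum simply adds the two levels, while the spaced sum cancels almost completely at the test frequency. Real spaced arrays produce comb filtering across many frequencies rather than one clean null, but the mechanism is the same.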

SoundField microphones, designed specifically for Ambisonics, are based on the coincident pair technique, with four capsules close together as opposed to two, in order to provide height to supplement front/back and side to side. Microphones from other manufacturers are available with different coincident pair implementations, essentially some expansion of M-S, including the Schoeps Double M-S and the Sanken WMS-5.

Researchers are experimenting with other microphones and placement options for capturing 3D sound fields, but all recordings tested here were made with the SoundField ST450 microphone system.

SoundField microphones capture raw audio (A-format) from the four capsules (Lb, Lf, Rf, Rb) in their array, which then goes into a processor that converts it to B-format signals for recording. B-format matrixes the raw audio into a more or less 360-degree sound field by recombining channel content to create one omnidirectional channel and three bidirectional channels.

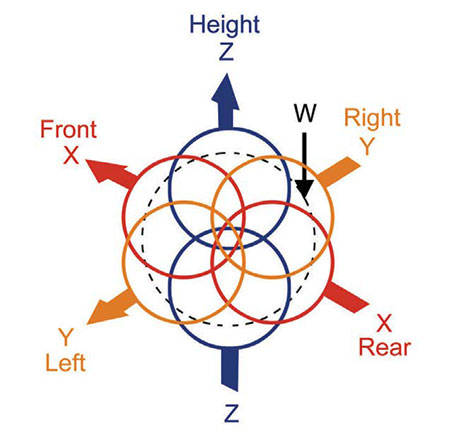

The omni channel, W, combines audio from the four capsules (Lb+Lf+Rf+Rb) and acts as a sound pressure channel. Of the bidirectional channels, X supplies front/rear imaging (–Lb+Lf+Rf–Rb); Y provides side-to-side imaging (Lb+Lf–Rf–Rb); and Z gives us up and down imaging for the all-important height dimension (–Lb+Lf–Rf+Rb).
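The sums and differences described above can be sketched in a few lines of Python. This is only the bare matrix as stated in the text. The function name `a_to_b` is hypothetical, and it is an assumption of this sketch that no gain scaling or capsule-spacing correction is applied; an actual SoundField processor can be expected to do more than this.

```python
def a_to_b(lb, lf, rf, rb):
    """Matrix four A-format capsule signals into B-format (W, X, Y, Z).

    Each argument is an equal-length sequence of samples. The signs follow
    the sums given in the text. No normalization or filtering is applied
    (an assumption of this sketch, not a description of real hardware).
    """
    w, x, y, z = [], [], [], []
    for b_l, f_l, f_r, b_r in zip(lb, lf, rf, rb):
        w.append(b_l + f_l + f_r + b_r)    # omni / pressure
        x.append(-b_l + f_l + f_r - b_r)   # front minus back
        y.append(b_l + f_l - f_r - b_r)    # left minus right
        z.append(-b_l + f_l - f_r + b_r)   # up minus down
    return w, x, y, z


# Equal pressure on all four capsules: only W should respond.
diffuse = a_to_b([1.0], [1.0], [1.0], [1.0])

# Signal on the left-front capsule only: every channel picks it up.
left_front_only = a_to_b([0.0], [1.0], [0.0], [0.0])

print(diffuse)
print(left_front_only)
```

When all four capsules see the same pressure, the three bidirectional channels cancel to zero and only W carries signal, which is what one would expect from an omnidirectional channel plus three figure-of-eight channels.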

TESTING SOFTWARE

Hardware processors or software can be used to decode the B-format audio and recreate the captured sound field. My testing was all done with the SurroundZone 2 software provided with the sound effects library. Since B-format recordings consist of four channels of audio (BWF), it seems natural to place them on a four-channel-wide track, but they actually need to be placed on a 5.1 track in order to get a 5.1 output (the software currently supports up to 7.1).

Once the decoding software is inserted onto the multichannel track, you have the ability to steer the decoded channels around in the mix as well as change microphone orientation, tilt, width and other parameters. The amount of control you have as the mixer is quite substantial, which gives you the ability to create some very dramatic shifts in the image. The most dramatic of all for mixers may be that, despite the fact that we are working with a captured 3D sound field, it collapses perfectly to stereo and mono.
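One common way to think about steering and fold-down is the "virtual microphone": a weighted mix of W, X and Y that behaves like a directional microphone pointed wherever you choose. The Python sketch below illustrates that general idea only; it is not a description of how SurroundZone 2 works internally. It assumes the traditional B-format convention in which W is recorded 3 dB down relative to X and Y, it ignores height (Z), and the function names `virtual_cardioid`, `decode_stereo`, `decode_mono` and `encode_source` are hypothetical.

```python
import math

SQRT2 = math.sqrt(2)


def virtual_cardioid(w, x, y, azimuth_deg):
    """Derive a horizontal virtual cardioid aimed at azimuth_deg (0 = front,
    positive = left). Assumes W carries a -3 dB gain relative to X and Y."""
    az = math.radians(azimuth_deg)
    return [
        0.5 * (SQRT2 * wi + xi * math.cos(az) + yi * math.sin(az))
        for wi, xi, yi in zip(w, x, y)
    ]


def decode_stereo(w, x, y, half_angle_deg=45.0):
    """Stereo fold-down as two virtual cardioids angled left and right."""
    left = virtual_cardioid(w, x, y, +half_angle_deg)
    right = virtual_cardioid(w, x, y, -half_angle_deg)
    return left, right


def decode_mono(w):
    """Mono fold-down: the omni channel alone, with its 3 dB restored."""
    return [SQRT2 * wi for wi in w]


def encode_source(samples, azimuth_deg):
    """Test helper: pan a mono signal into horizontal B-format."""
    az = math.radians(azimuth_deg)
    w = [s / SQRT2 for s in samples]
    x = [s * math.cos(az) for s in samples]
    y = [s * math.sin(az) for s in samples]
    return w, x, y


# A source hard left, decoded with virtual cardioids facing hard left/right.
w, x, y = encode_source([1.0], azimuth_deg=90.0)
left, right = decode_stereo(w, x, y, half_angle_deg=90.0)
mono = decode_mono(w)

print(left, right, mono)
```

Changing `half_angle_deg` widens or narrows the stereo image after the fact, and adding an offset to both azimuths rotates the virtual pair, which is a simplified picture of the orientation and width controls described above. Because every output is derived from signals captured at a single point, the mono version involves no arrival-time differences to cause cancellation.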

There are certainly some issues with the SoundField system if the goal is to use it for truly immersive projects. First, the decoding hardware and software support only up to 7.1, and there are currently no provisions for audio object placement. For post work, there is already plenty of overhead involved in creating immersive audio mixes, and few workstations are equipped to handle the workflow out of the box.

Even though three dimensions of audio are captured in the B-format recording, I was unable to locate any currently available tools that give us the ability to recreate those dimensions in an immersive production environment.

If there was ever a technology begging to be used for immersive audio, this is it. In a 1990 AES paper on Ambisonics, author Roger Furness stated that, “The original sound field, whether it was a live sound or one created at the mixing console, has an infinite number of possible sound directions.” That sounds more like the immersive technology coming with ATSC 3.0 than one already 40 years old.

More testing and development of Ambisonics are certainly in order, especially when incorporating the technology into immersive workflows, but downmix compatibility is already there. Expanded decoding tools are needed. And although 3D audio can be captured with other microphone configurations, many of those setups are not portable, making them useful only in controlled indoor settings and unsuitable for live events, which are increasingly important as broadcasters seek to differentiate themselves from OTT services.

Jay Yeary is a broadcast engineer and consultant specializing in audio. He is an AES Fellow and a member of SBE and SMPTE. He can be contacted through TV Technology magazine or at transientaudiolabs.com.