SMPTE ST 2110-21: Taming the Torrents

This is the third installment in a series of articles about the newly published SMPTE standard covering elementary media flows over managed IP networks. This month, the focus is again on video transport, specifically the rules that help ensure that high-bitrate video streams are well-behaved and neither overwhelm the IP networks used to transport them nor overflow receiver buffers.

KEEPING STREAMS FROM OVERFLOWING

The full title of SMPTE ST 2110-21 is “Professional Media Over Managed IP Networks: Traffic Shaping and Delivery Timing for Video.” Both of these terms refer to the same basic topic: how are packets transmitted over the network, from the perspective of both the sender and the receiver? In other words, how should packet flows be sent into the network “pipes” so that they don’t flood them with packets? Answering these questions is crucial for provisioning network connections to carry as many signals as possible without causing packet congestion, which can lead to packet loss.

To really understand the potential issue, it helps to deal with some actual numbers. Consider a 1080p signal with a 50 Hz (European) frame rate, which occupies 3 Gbps on an SDI cable. At 50 Hz, a new frame of video is created every 20 milliseconds. Using constant-size packets of 480 pixels each, the 1920 by 1080 active pixels of each frame would require 4320 packets. With 10-bit 4:2:2 sampling (an average of 20 bits per pixel), 480 pixels require 1200 bytes of data; adding 90 bytes of overhead gives a total of 1290 bytes per packet.
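
The packet arithmetic above can be checked with a short Python sketch. It uses the values stated in the text (1920 by 1080 active pixels, 480 pixels per packet, 90 bytes of overhead) and assumes 10-bit 4:2:2 sampling, which averages 20 bits per pixel; the variable names are illustrative only.

```python
# Packetization arithmetic for the 1080p50 example.
# Assumption: 10-bit 4:2:2 sampling, i.e. each pixel carries a 10-bit Y
# sample plus one 10-bit Cb or Cr sample, for 20 bits per pixel on average.
ACTIVE_WIDTH = 1920
ACTIVE_HEIGHT = 1080
PIXELS_PER_PACKET = 480
BITS_PER_PIXEL = 20
OVERHEAD_BYTES = 90          # per-packet overhead, as given in the text

pixels_per_frame = ACTIVE_WIDTH * ACTIVE_HEIGHT
packets_per_frame = pixels_per_frame // PIXELS_PER_PACKET
payload_bytes = PIXELS_PER_PACKET * BITS_PER_PIXEL // 8
packet_bytes = payload_bytes + OVERHEAD_BYTES

print(packets_per_frame, payload_bytes, packet_bytes)  # 4320 1200 1290
```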

One way to transmit this video signal would be with evenly spaced packets. Sending 4320 packets every 20 milliseconds means one packet every 4.63 microseconds. On a 10 Gbps link, each 1290-byte packet requires 10,320 (1290*8) bit-times to transmit, or 1.032 microseconds, followed by a gap of roughly 3.6 microseconds.
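
A minimal sketch of the evenly spaced timing, assuming an otherwise idle 10 Gbps link and the 1290-byte packets from the previous paragraph:

```python
# Timing of evenly spaced packets for the 1080p50 example.
FRAME_PERIOD_S = 1 / 50          # 20 ms per frame at 50 Hz
PACKETS_PER_FRAME = 4320
PACKET_BITS = 1290 * 8           # 10,320 bits per packet
LINK_RATE_BPS = 10e9             # assumed 10 Gbps link

packet_interval_s = FRAME_PERIOD_S / PACKETS_PER_FRAME   # start-to-start spacing
wire_time_s = PACKET_BITS / LINK_RATE_BPS                # time to serialize one packet
idle_gap_s = packet_interval_s - wire_time_s             # silence between packets

print(f"interval {packet_interval_s * 1e6:.2f} us, "
      f"wire time {wire_time_s * 1e6:.3f} us, "
      f"gap {idle_gap_s * 1e6:.2f} us")
# interval 4.63 us, wire time 1.032 us, gap 3.60 us
```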

Another way to send this video signal would be to transmit all of the pixels in a frame at once. (This could hypothetically be the case for a device that was, say, generating graphics.) In this case, the sender would generate a burst of packets lasting 4320*10,320 bit times, or about 4.458 milliseconds. This burst would be followed by a gap of 15.542 (20-4.458) milliseconds until the next video frame was ready to transmit.
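
The burst figures follow from the same per-packet size. This sketch assumes the whole frame is sent back-to-back at the full 10 Gbps line rate with no inter-packet gaps:

```python
# Duration of a whole-frame burst sent back-to-back at line rate.
FRAME_PERIOD_S = 1 / 50
PACKETS_PER_FRAME = 4320
PACKET_BITS = 1290 * 8
LINK_RATE_BPS = 10e9             # assumed 10 Gbps link

burst_bits = PACKETS_PER_FRAME * PACKET_BITS    # 44,582,400 bits per frame
burst_s = burst_bits / LINK_RATE_BPS            # link fully occupied
idle_s = FRAME_PERIOD_S - burst_s               # quiet until the next frame

print(f"burst {burst_s * 1e3:.3f} ms, idle {idle_s * 1e3:.3f} ms")
# burst 4.458 ms, idle 15.542 ms
```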

So which of these packet transmission schemes is better? Both streams have the same long-term average bit rate of about 2.23 Gbps. However, the first stream, with evenly spaced packets, will be much easier for receivers, switches and other network devices to handle. Why? Because the second stream fully occupies a 10 Gbps link for a significant period of time, forcing any lower-priority data that needs to cross that link to wait in a buffer until the link becomes free. If higher-priority data arrives during the burst, then the video packets must be buffered instead. Since network devices typically have a limited amount of buffer space that is shared by multiple physical data channels, any signal that places heavy demands on buffers can cause problems, such as dropped packets when the buffers fill up.
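
The buffering difference can be illustrated with a simplified leaky-bucket model, loosely in the spirit of the leaky-bucket model ST 2110-21 uses for network compatibility but with none of the standard’s actual parameters. Here each arriving packet adds one to the bucket, and the bucket drains continuously at the stream’s average rate of one packet per 4.63 microseconds (an assumption chosen for illustration); the function name `peak_occupancy` is hypothetical.

```python
# Simplified leaky-bucket comparison of the two transmission schemes.
FRAME_PERIOD_S = 1 / 50
PACKETS_PER_FRAME = 4320
PACKET_BITS = 1290 * 8
LINK_RATE_BPS = 10e9

def peak_occupancy(arrivals, drain_interval):
    """Largest bucket level, in packets, seen at any arrival time."""
    level = 0.0
    peak = 0.0
    prev_t = arrivals[0]
    for t in arrivals:
        # Drain for the time elapsed since the previous arrival, never below 0.
        level = max(0.0, level - (t - prev_t) / drain_interval)
        level += 1.0                     # the new packet enters the bucket
        peak = max(peak, level)
        prev_t = t
    return peak

drain = FRAME_PERIOD_S / PACKETS_PER_FRAME        # average packet spacing
wire = PACKET_BITS / LINK_RATE_BPS                # back-to-back spacing
even = [i * drain for i in range(PACKETS_PER_FRAME)]
burst = [i * wire for i in range(PACKETS_PER_FRAME)]

even_peak = peak_occupancy(even, drain)
burst_peak = peak_occupancy(burst, drain)
print(f"evenly spaced: {even_peak:.1f} packets, burst: {burst_peak:.0f} packets")
# evenly spaced: 1.0 packets, burst: 3357 packets
```

Under these assumptions, the evenly spaced stream never queues more than the packet in flight, while the burst leaves more than 3,300 packets (over 4 MB at 1290 bytes each) waiting at its peak.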

This issue becomes even worse when multiple senders transmit bursts of data at the same time, as frequently happens in applications where several video sources are locked to a common clock. Therefore, to prevent network problems and to make signal receivers easier to design, it makes sense to limit the size and duration of packet bursts. Setting such limits is often called “traffic shaping” or “delivery timing” in networking jargon.
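
To put rough numbers on synchronized bursts, here is a sketch of a hypothetical scenario: several genlocked senders each burst a whole 1080p50 frame at the 10 Gbps line rate at the same instant, all toward one 10 Gbps switch output port. The scenario and the function `queued_bytes_after_burst` are illustrative assumptions, not taken from the standard.

```python
# Hypothetical: k senders burst one frame each, simultaneously, into a
# single 10 Gbps egress port that drains at line rate.
PACKETS_PER_FRAME = 4320
PACKET_BITS = 1290 * 8
LINK_RATE_BPS = 10e9

def queued_bytes_after_burst(senders):
    """Bytes still waiting in the egress queue when the bursts end."""
    burst_s = PACKETS_PER_FRAME * PACKET_BITS / LINK_RATE_BPS
    arrived_bits = senders * PACKETS_PER_FRAME * PACKET_BITS
    sent_bits = LINK_RATE_BPS * burst_s      # what the port forwarded meanwhile
    return max(0.0, arrived_bits - sent_bits) / 8

for k in (1, 2, 4):
    print(k, f"{queued_bytes_after_burst(k) / 1e6:.1f} MB")
# 1 0.0 MB
# 2 5.6 MB
# 4 16.7 MB
```

By contrast, four evenly spaced streams of this type average about 8.9 Gbps combined, which stays under the port’s capacity.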

TYPE N, NL AND W VIDEO SOURCES

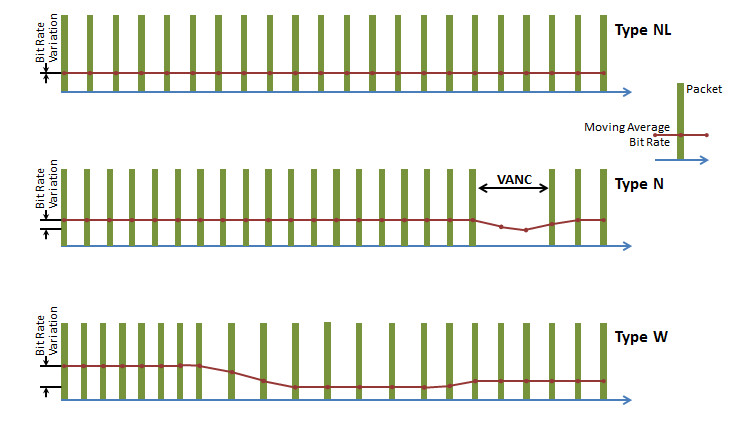

The ST 2110-21 standard defines three types of senders: N (for Narrow), NL (for Narrow Linear) and W (for Wide). These types define limits on the amount of packet delay variation (i.e., the burstiness) that a sender is allowed to exhibit in its output stream.

Type NL is the easiest to understand, and corresponds to a stream where all the packets of a video frame are evenly spaced across the entire frame period (i.e., a stream like the first example in the preceding section). The SDP (Session Description Protocol) for this type of stream must include the parameter “TP=2110TPNL.”

Type N is similar to Type NL, except that the sender doesn’t send packets during the time that corresponds to the VBI (Vertical Blanking Interval) or VANC (Vertical Ancillary data space) of the equivalent traditional SDI video signal. Thus, a Type N stream has a noticeable gap in packets once during each video frame period. For example, in a 720p signal running at 50 frames per second, with a VANC equal in duration to 30 lines of video (750 total lines, 720 of them active), the sender would deliver packets for 19.2 (720/750*20) milliseconds out of every 20 milliseconds, and have a 0.8 millisecond gap when no packets are sent. This is the behavior that is easiest to implement when an incoming SDI signal is simply converted to ST 2110 packets as active video samples arrive. The SDP for this type of stream must include the parameter “TP=2110TPN.”
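
The 720p50 example can be sketched as follows. It takes the 720 active lines out of 750 total from the text; the `peak_to_average` ratio is an added illustration of how much faster a Type N sender must send during active video to deliver the same data in less time.

```python
# Sending window of a Type N sender for the 720p50 example.
FRAME_PERIOD_MS = 20.0     # 50 frames per second
ACTIVE_LINES = 720
TOTAL_LINES = 750          # 720 active lines + 30 lines of blanking

active_fraction = ACTIVE_LINES / TOTAL_LINES
sending_ms = FRAME_PERIOD_MS * active_fraction   # packets flow only here
silent_ms = FRAME_PERIOD_MS - sending_ms         # gap during the VANC
peak_to_average = 1 / active_fraction            # rate boost while sending

print(f"send for {sending_ms:.1f} ms, silent for {silent_ms:.1f} ms, "
      f"rate x{peak_to_average:.3f} while sending")
# send for 19.2 ms, silent for 0.8 ms, rate x1.042 while sending
```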

Type W senders are allowed significantly greater burstiness. This category was included in the ST 2110-21 standard to accommodate software-based senders, such as a graphics generator. For Type W flows, senders can have at least four times the burstiness allowed for Type N or Type NL senders, and in many cases much more. While this looser tolerance makes things easier for a sender (particularly one implemented on a virtual machine), it has consequences for receivers, which need correspondingly larger input buffers. This can be costly, both in the raw amount of memory required and in the delay added to an incoming signal. Type W streams will also tend to consume more buffer space within network switches and other devices; applications with significant numbers of Type W senders will need network devices with sufficiently large internal memories. The SDP for this type of stream must include the parameter “TP=2110TPW.”
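
Since each sender type is signaled through the TP parameter, a receiver or control system could read it from the SDP. The sketch below pulls TP out of an `a=fmtp` line and maps it to a sender type. The example line is illustrative only (a real ST 2110-20 SDP carries further required parameters), and `sender_type` is a hypothetical helper, not part of any library.

```python
# Map the TP values named in the text to ST 2110-21 sender types.
SENDER_TYPES = {"2110TPN": "N", "2110TPNL": "NL", "2110TPW": "W"}

def sender_type(fmtp_line):
    """Return 'N', 'NL' or 'W' from an SDP a=fmtp line, or None if no TP."""
    # Everything after the first space is a list of key=value pairs
    # separated by semicolons.
    _, _, params = fmtp_line.partition(" ")
    for item in params.split(";"):
        key, sep, value = item.strip().partition("=")
        if sep and key == "TP":
            return SENDER_TYPES.get(value.strip())
    return None

example = ("a=fmtp:96 sampling=YCbCr-4:2:2; width=1920; height=1080; "
           "exactframerate=50; depth=10; TP=2110TPNL;")
print(sender_type(example))  # NL
```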

Fig. 1

Fig. 1 compares the three sender types. Each type is shown with a simplified representation of its packet flow, along with a moving average of the bit rate. Note that for Type NL the moving average bit rate is flat, showing that these flows are the best behaved. Type N shows an increase in average bit rate during active video and a decline during the VANC. For Type W, the average rate shows even larger surges and drops.
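
The NL and N curves can be approximated numerically with a windowed bit-rate average over one frame of send times. This sketch reuses the 1080p50 packet numbers from earlier and assumes a Type N sender confines its packets to the active 1080 of 1125 total lines (the standard raster for 1080-line formats); the 0.5 ms window and the helper names are arbitrary choices. Type W is left out because its burst pattern varies from sender to sender.

```python
import bisect

FRAME_PERIOD_S = 1 / 50
PACKETS_PER_FRAME = 4320
PACKET_BITS = 1290 * 8
ACTIVE_FRACTION = 1080 / 1125   # assumed active share of the frame for Type N
WINDOW_S = 0.5e-3               # averaging window (arbitrary)

def schedule(spread_s):
    """Evenly spaced send times for one frame's packets over spread_s seconds."""
    step = spread_s / PACKETS_PER_FRAME
    return [i * step for i in range(PACKETS_PER_FRAME)]

def window_rate(times, start, width):
    """Bit rate of packets sent in [start, start + width); times must be sorted."""
    count = bisect.bisect_left(times, start + width) - bisect.bisect_left(times, start)
    return count * PACKET_BITS / width

nl_times = schedule(FRAME_PERIOD_S)                   # Type NL: whole frame
n_times = schedule(FRAME_PERIOD_S * ACTIVE_FRACTION)  # Type N: active lines only

rates = {
    start_ms: (window_rate(nl_times, start_ms / 1e3, WINDOW_S),
               window_rate(n_times, start_ms / 1e3, WINDOW_S))
    for start_ms in (0.0, 10.0, 19.3)   # 19.3 ms falls in Type N's gap
}
for start_ms, (nl_rate, n_rate) in rates.items():
    print(f"t={start_ms:4.1f} ms  NL {nl_rate / 1e9:.2f} Gbps  N {n_rate / 1e9:.2f} Gbps")
```

The NL rate stays near 2.23 Gbps in every window, while the N rate runs a few percent higher during active video and drops to zero in the blanking gap.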

Receivers are also categorized in ST 2110-21, but this information is not required to be transmitted in the SDP. A Type N receiver should be able to correctly receive a flow originating from either a Type N or a Type NL sender (but not a Type W), provided that the receiver is locked to the same clock as the sender and that the sender is aligned to the SMPTE ST 2059-1 Epoch. A Type W receiver should be able to receive a stream from an N, NL or W sender, provided the receiver is locked to the same clock as the sender. A Type A (for Asynchronous) receiver should be capable of receiving a stream from any type of sender, regardless of clock source or signal phase.
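
The compatibility rules in the previous paragraph can be written as a small decision function. It encodes only the summary given here, not the standard’s full conformance conditions, and `can_receive` is a hypothetical name.

```python
SENDERS = {"N", "NL", "W"}

def can_receive(receiver, sender, same_clock, epoch_aligned):
    """Whether a receiver type can take a sender type, per the summary above.

    same_clock: receiver is locked to the same clock as the sender.
    epoch_aligned: sender is aligned to the SMPTE ST 2059-1 Epoch.
    """
    if sender not in SENDERS:
        raise ValueError(f"unknown sender type: {sender}")
    if receiver == "A":
        return True                       # any sender, any clock or phase
    if receiver == "W":
        return same_clock                 # N, NL or W senders
    if receiver == "N":
        return sender != "W" and same_clock and epoch_aligned
    raise ValueError(f"unknown receiver type: {receiver}")

print(can_receive("N", "W", same_clock=True, epoch_aligned=True))    # False
print(can_receive("A", "W", same_clock=False, epoch_aligned=False))  # True
```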

PRACTICAL CONSIDERATIONS

For time-sensitive flows within a media facility, such as a live broadcast, Type N or NL senders will tend to dominate, because they are the easiest to multiplex together, require the least buffering in the network and at receivers, and therefore introduce the least end-to-end delay. If Type W senders are present in a network, receivers will need to be Type W (or Type A) to receive these flows. For non-frame-accurate applications, such as monitors and multiviewers, or when senders and receivers are not synchronized, Type A receivers can be used to accommodate any type of ST 2110 uncompressed video flow.

Wes Simpson is the president of Telecom Product Consulting. He can be reached via TV Technology.

Wes Simpson is President of Telecom Product Consulting, an independent consulting firm that focuses on video and telecommunications products. He has 30 years of experience in the design, development and marketing of products for telecommunication applications. He is a frequent speaker at industry events such as IBC, NAB and VidTrans, the author of the book Video Over IP and a frequent contributor to TV Tech. Wes is a founding member of the Video Services Forum.