Trends in Storage Resource Management

No organization or individual is immune to the problems of limited storage capacity. However, many are finding new ways to adapt their storage resources and ease the impact of not having enough storage to meet their operational and business needs. Some have moved to cloud storage for their archives, as mid-tier storage silos or for temporary “bursty” needs. Others have looked to virtualization technologies to support cross-utilization of both storage and compute resources.

Virtualization is bringing the industry new methods for allocating services across pools of resources, ranging from servers (for compute and processing) to high-performance storage (for live work) to near-line or deep archive. Storage resource management processes help users decide which of these resources should hold which data. Applications vary from real-time storage environments to short-term “slow” storage to long-term “deep” storage for seldom-accessed archive purposes.

When organizations choose not to use public cloud services for storage or compute resources, they have likely either built their own “private” cloud or have already carved out a portion of their datacenter for on-prem storage. Storage management and virtualization can be applied to your on-prem resources in the same way as cloud solutions, but usually on a smaller scale.

DISSIMILAR STORAGE

No organization purchases “all the storage they’d ever need” at one time. The probability that there are differing sets of storage systems in any one datacenter or equipment room is high. In these cases, the groups of storage were probably acquired at different times, for different needs, and are most assuredly of different capacities, performance and drive denominations or configurations.

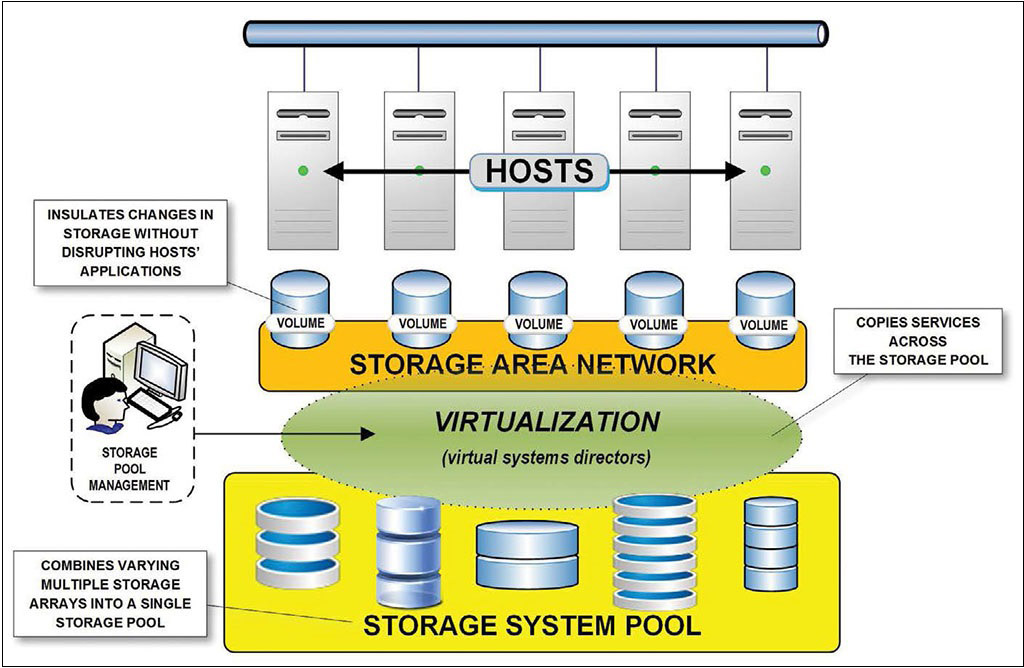

Fig. 1: Conceptualization of a storage system pool with surrounding components. The “pool” consists of various sets of existing disk arrays, which are combined into a single storage pool and managed through the virtual systems director.

Varying assortments of storage (see Fig. 1, yellow box) create sharing problems that aren’t easily managed by administrators.

In broadcast media and entertainment, post-production editing systems continue to grow in performance and demand. Storage and server vendors have recently made a renewed push toward uncompressed editing and UHDTV/4K shooting and posting, driving storage capacities higher than ever before. Each of these activities implies that storage systems will need continual updates, with newer, faster arrays and more capacity to support these higher resolutions.

The question of what to do with legacy storage systems, besides abandoning them, involves many factors. Are the systems so old they can no longer be supported? Can older storage systems be repurposed when new, higher-performance storage is added to address new workflows? Is there enough life remaining in the existing storage systems for them to retain value as a “pooled” resource?

Answers vary, and they deserve fresh consideration as storage management solutions make retaining existing storage systems more viable.

As virtualization capabilities become easier to deploy and manage, or more cost-effective to implement, it makes sense to review opportunities for pooling individual storage systems into consolidated sets of storage resources.

POOLS AND POLICIES

A storage system pool enables the grouping of similar storage subsystems as a managed, accessible set of storage, i.e., the “storage pool.” Using virtual systems directors (Fig. 1, green oval), users create a storage system pool of selected, available and appropriate storage subsystems. The virtual director, essentially a resource manager, lets authorized and authenticated users add storage to a storage system pool, permanently delete a storage system pool or edit a storage system “pool policy.” The storage system pool policy determines how the storage volumes within the storage system pool are allocated. Allocations are based on RAID level, bandwidth and storage pool preference settings.
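To make the idea of a pool policy concrete, here is a minimal sketch of how a resource manager might choose which subsystem in a pool receives a new volume, filtering on RAID level and bandwidth and then honoring a preference setting. The class names, fields and tie-breaking rule are hypothetical illustrations, not the behavior of any particular virtual systems director.

```python
from dataclasses import dataclass
from typing import List, Optional, Set


@dataclass
class Subsystem:
    name: str
    raid_level: int        # e.g. 5, 6 or 10
    bandwidth_mbps: int    # sustained throughput the array can deliver
    free_gb: int           # unallocated capacity remaining
    preference: int        # lower number = preferred by the pool policy


@dataclass
class PoolPolicy:
    allowed_raid_levels: Set[int]
    min_bandwidth_mbps: int


def allocate_volume(pool: List[Subsystem], policy: PoolPolicy, size_gb: int) -> Optional[str]:
    """Carve a volume from the best-matching subsystem; return its name or None."""
    candidates = [
        s for s in pool
        if s.raid_level in policy.allowed_raid_levels
        and s.bandwidth_mbps >= policy.min_bandwidth_mbps
        and s.free_gb >= size_gb
    ]
    if not candidates:
        return None  # no subsystem in the pool satisfies the policy
    # Honor the preference setting first; break ties by most free space.
    chosen = min(candidates, key=lambda s: (s.preference, -s.free_gb))
    chosen.free_gb -= size_gb
    return chosen.name
```

A live-editing policy of this kind might admit only RAID 6 or RAID 10 arrays above a bandwidth floor, leaving an older RAID 5 array in the same pool available for slower, near-line work.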

Basic storage system pool management begins with discovering the available storage systems and resources, sometimes called the “audit.” Before beginning any automated process (e.g., the audit), users and administrators should be sure they’ve purged any latent files from the systems. MAM systems likely have built-in garbage collection procedures that clean up duplicate, expired or scratch files. By contrast, simpler desktop editing systems usually won’t have this sophistication.
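Where no MAM-style garbage collection exists, a simple pre-audit sweep can at least flag candidates for cleanup. The sketch below, under stated assumptions, reports byte-identical duplicates (by SHA-256 content hash) and scratch files older than a cutoff. The scratch-file suffixes and the 30-day age limit are hypothetical site conventions, and the function only lists files for review rather than deleting anything.

```python
import hashlib
import os
import time
from typing import List, Optional, Tuple

# Hypothetical naming convention for scratch/render files; adjust per site.
SCRATCH_SUFFIXES = (".tmp", ".scratch")


def _sha256(path: str) -> str:
    """Hash a file in 1 MiB chunks so large media files never load whole."""
    h = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(1 << 20), b""):
            h.update(chunk)
    return h.hexdigest()


def find_purge_candidates(root: str, max_age_days: float = 30,
                          now: Optional[float] = None) -> Tuple[List[str], List[str]]:
    """Return (duplicate_paths, expired_scratch_paths) found under root.

    Nothing is deleted; the lists are for an administrator to review.
    """
    now = time.time() if now is None else now
    first_seen = {}  # content hash -> first path holding that content
    duplicates, expired = [], []
    for dirpath, dirnames, filenames in os.walk(root):
        dirnames.sort()  # deterministic traversal order
        for name in sorted(filenames):
            path = os.path.join(dirpath, name)
            if name.endswith(SCRATCH_SUFFIXES):
                age_days = (now - os.path.getmtime(path)) / 86400
                if age_days > max_age_days:
                    expired.append(path)
                    continue
            digest = _sha256(path)
            if digest in first_seen:
                duplicates.append(path)
            else:
                first_seen[digest] = path
    return duplicates, expired
```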

SCREEN AND ALLOCATE

Administrative procedures, whether manual or automated, should screen all the storage subsystems for any of the hundreds of file types that may be written to servers or libraries, such as executables (.exe), MP3s, GIFs, gaming files or other files held in quarantine. Once these are purged, the storage system pool process takes inventory of all the storage systems and reviews the applicable licenses (e.g., installed virtual machine control licenses, or the time left in a complimentary evaluation period, if applicable). If needed, the inventory process may let users purchase licenses for additional storage and may check for updates to the pool management application.
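The screening and inventory steps can be sketched as two small functions: one flags files whose type is on a disallowed list, the other compares each subsystem's capacity against its license and reports any evaluation days remaining. The suffix list, the `License` record and the report layout are hypothetical; real pool managers hold this data in their own formats.

```python
from dataclasses import dataclass
from datetime import date
from pathlib import PurePath
from typing import Dict, List, Optional

# Hypothetical screening list drawn from the file types named above.
DISALLOWED_SUFFIXES = {".exe", ".mp3", ".gif"}


def screen(paths: List[str]) -> List[str]:
    """Return the paths whose file type is on the disallowed list."""
    return [p for p in paths if PurePath(p).suffix.lower() in DISALLOWED_SUFFIXES]


@dataclass
class License:
    subsystem: str
    licensed_tb: float
    evaluation_ends: Optional[date] = None  # None means a permanent license


def inventory(capacities_tb: Dict[str, float], licenses: List[License],
              today: date) -> Dict[str, dict]:
    """Report unlicensed capacity and evaluation days left per subsystem."""
    by_name = {lic.subsystem: lic for lic in licenses}
    report = {}
    for name, capacity in capacities_tb.items():
        lic = by_name.get(name)
        licensed = lic.licensed_tb if lic else 0.0
        days_left = None
        if lic and lic.evaluation_ends:
            days_left = max((lic.evaluation_ends - today).days, 0)
        report[name] = {"unlicensed_tb": max(capacity - licensed, 0.0),
                        "eval_days_left": days_left}
    return report
```

A nonzero `unlicensed_tb` is the point at which the inventory process would prompt for an additional license purchase.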

Next comes the allocation process. Once systems are appropriately configured, allocation may be a manual segmentation of storage groups or, in a software-controlled environment, a “real-time” storage management solution provided by a vendor or a virtualization solution.

TEMPLATED OR REAL TIME

Storage in a virtual environment is usually managed in one of two ways: (a) through templates (or wizards), which are “activated” at a later time; or (b) in real time, where changes take effect immediately or at the next restart.

When configuring storage using templates, you only create storage “definitions”—otherwise referred to as “configuration settings.” When creating “storage device definitions” as templates, no storage device is actually configured or altered. Before the definitions can take effect, they must first be “deployed.”

How the configurations are deployed depends on how systems administrators have set up their deployment activities. By delaying deployment until the definitions are qualified, administrators prevent unintended activities, storage misallocations and unauthorized changes.
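The template approach separates describing a change from carrying it out. In this minimal sketch, a `StorageDefinition` records the intended volume but touches nothing, and a `Deployer` refuses to act on any definition that an authorized administrator has not qualified. Both classes are hypothetical, and the `deployed` list merely stands in for real device changes.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Set, Tuple


@dataclass
class StorageDefinition:
    """A template: describes a volume but alters no device until deployed."""
    volume: str
    size_gb: int
    target: str
    approved_by: Optional[str] = None


@dataclass
class Deployer:
    authorized: Set[str] = field(default_factory=set)
    # Stands in for actual configuration changes on the storage devices.
    deployed: List[Tuple[str, str, int]] = field(default_factory=list)

    def qualify(self, definition: StorageDefinition, approver: str) -> None:
        if approver not in self.authorized:
            raise PermissionError(f"{approver} may not approve deployments")
        definition.approved_by = approver

    def deploy(self, definition: StorageDefinition) -> None:
        # Deferred deployment: unqualified definitions are rejected outright.
        if definition.approved_by is None:
            raise RuntimeError(f"{definition.volume} has not been qualified")
        self.deployed.append((definition.target, definition.volume, definition.size_gb))
```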

Conversely, when configuring in real time, the storage device is already active and its connectivity has already been established. All included devices must already be discovered, and no device the storage attaches to may be locked or made unavailable. When changes to the storage device are ready, the user opens a “panel,” and the changes take effect immediately upon clicking “deploy” or “apply.”
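By contrast, a real-time change has no staging step, so the preconditions are checked at the moment of applying. This hypothetical sketch refuses the change if the storage device or any device attached to it is undiscovered or locked, and otherwise updates the live settings at once. The `Device` class and `apply_now` function are illustrative names only.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Device:
    name: str
    discovered: bool = False
    locked: bool = False
    settings: Dict[str, int] = field(default_factory=dict)


def apply_now(storage: Device, attached: List[Device], changes: Dict[str, int]) -> Dict[str, int]:
    """Apply changes to a live storage device immediately (the "apply" click)."""
    for dev in [storage, *attached]:
        if not dev.discovered:
            raise RuntimeError(f"{dev.name} has not been discovered")
        if dev.locked:
            raise RuntimeError(f"{dev.name} is locked or unavailable")
    storage.settings.update(changes)  # takes effect at once; nothing is staged
    return storage.settings
```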

AUTOMATED ONLINE STORAGE

When your internal/on-prem storage allocation reaches capacity, then alternatives will be needed. One alternative is “automated online storage.”

Traditionally, online storage was managed by “time interval scanning” of the overall workspace. At each interval, the system ran automated checks to determine how much storage remained and how much had been consumed since the last check, along with additional safety checks for unexpected situations.
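One pass of such an interval scan might look like the sketch below, which a scheduler would call once per interval with the current and previous usage figures. The 10 percent reserve threshold and the alert wording are assumptions chosen for illustration; the "may fill before next scan" test simply assumes the next interval consumes as much as the last one did.

```python
from typing import Dict, List, Union


def interval_check(capacity_gb: int, used_now_gb: int, used_last_gb: int,
                   reserve_fraction: float = 0.10) -> Dict[str, Union[int, List[str]]]:
    """One scan: remaining space, consumption since the last scan, safety alerts."""
    remaining = capacity_gb - used_now_gb
    consumed = used_now_gb - used_last_gb
    alerts: List[str] = []
    if consumed < 0:
        # Usage shrank: a purge, or an unexpected deletion worth verifying.
        alerts.append("usage dropped since last scan")
    if remaining < capacity_gb * reserve_fraction:
        alerts.append("below reserve")
    if consumed > 0 and remaining < consumed:
        # At the same rate, the space would run out before the next scan.
        alerts.append("may fill before next scan")
    return {"remaining_gb": remaining, "consumed_gb": consumed, "alerts": alerts}
```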

Today, statistical data derived from such activities is pushed to integral databases and trending applications that watch current and past activities, then predict what may be coming. APIs may leverage third-party products, e.g., schedulers or calendars, to help analyze upcoming needs and predict future storage requirements.
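A simple version of that trending can be written as an ordinary least-squares line through daily usage samples, projected forward to the point where usage meets capacity. In this sketch, `scheduled_gb` represents demand already known from a scheduler or calendar (for example, a booked 4K shoot) and is reserved out of capacity before projecting. The linear model and the parameter names are assumptions; production trending tools may use quite different models.

```python
from typing import List, Optional, Tuple


def days_until_full(samples: List[Tuple[float, float]], capacity_gb: float,
                    scheduled_gb: float = 0.0) -> Optional[float]:
    """Fit used_gb = a + b * day to (day, used_gb) samples by least squares.

    Returns the days after the last sample until storage is full, or None
    if usage is flat or shrinking. scheduled_gb is hypothetical calendar-
    booked demand that is subtracted from capacity before projecting.
    """
    n = len(samples)
    if n < 2:
        raise ValueError("need at least two samples")
    mean_x = sum(x for x, _ in samples) / n
    mean_y = sum(y for _, y in samples) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in samples)
    if sxx == 0:
        raise ValueError("samples must span at least two different days")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in samples)
    slope = sxy / sxx  # average growth in GB per day
    if slope <= 0:
        return None
    intercept = mean_y - slope * mean_x
    day_full = (capacity_gb - scheduled_gb - intercept) / slope
    return max(day_full - samples[-1][0], 0.0)
```

With usage growing a steady 100 GB a day from 1,000 GB on a 2,000 GB volume, the projection after day 3 is seven more days; reserving 300 GB for a booked event shortens it to four.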

With today’s unpredictable demands placed on storage, we must ensure there is sufficient storage to handle unexpected events or anomalies, which could occur between scans. Should a need for storage exceed the available allocated storage volume, resource managers will quickly add the needed storage—plus any overflow buffer—in real time. Calls to outside resource templates that stand “ready for deployment” may be enabled automatically, either on a local basis or through remote activation into the cloud.
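The expansion step can be sketched as a function that, when a request exceeds the current allocation, adds the shortfall plus an overflow buffer and decides whether a local template can cover it or a remote (cloud) template must be activated. The 20 percent buffer, the `local_free_gb` figure and the "local"/"cloud" labels are hypothetical placeholders for whatever templates a site keeps ready for deployment.

```python
import math
from typing import Tuple


def expand_if_needed(allocated_gb: int, requested_gb: int, buffer_pct: int = 20,
                     local_free_gb: int = 0) -> Tuple[int, str]:
    """Return (new_allocation_gb, source) where source is "none", "local" or "cloud"."""
    if requested_gb <= allocated_gb:
        return allocated_gb, "none"  # current allocation already suffices
    shortfall = requested_gb - allocated_gb
    # Add the missing capacity plus an overflow buffer, rounded up to whole GB.
    to_add = shortfall + math.ceil(shortfall * buffer_pct / 100)
    # Prefer a local template; fall back to remote activation in the cloud.
    source = "local" if to_add <= local_free_gb else "cloud"
    return allocated_gb + to_add, source
```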

Real-time “management implementation” may help reduce costs by reducing the need for repeated environment scans and by providing up-to-date analysis of data utilization, which is then applied to maximize storage utilization rates.

Making the most of storage resources is now a routine part of overall systems management. Effective storage management requires that the correct technologies be blended with information technology practices applicable to the business’ activities (e.g., content collection and ingest, MAM, editorial and long-term productions or playout).

With the right tools, IT managers can then optimize the storage; plan steps to safeguard the data; and in turn, help reduce operational and long-term capital expenditures.

Karl Paulsen is CTO at Diversified (www.diversifiedus.com) and a SMPTE Fellow. Read more about this and other storage topics in his book “Moving Media Storage Technologies.” Contact Karl at kpaulsen@diversifiedus.com.

Karl Paulsen recently retired as a CTO and has regularly contributed to TV Tech on topics related to media, networking, workflow, cloud and systemization for the media and entertainment industry. He is a SMPTE Fellow with more than 50 years of engineering and managerial experience in commercial TV and radio broadcasting. For over 25 years he has written on featured topics in TV Tech magazine—penning the magazine’s “Storage and Media Technologies” and “Cloudspotter’s Journal” columns.