The Reality of Virtual Production

Making the unreal look real while delivering cost and time savings


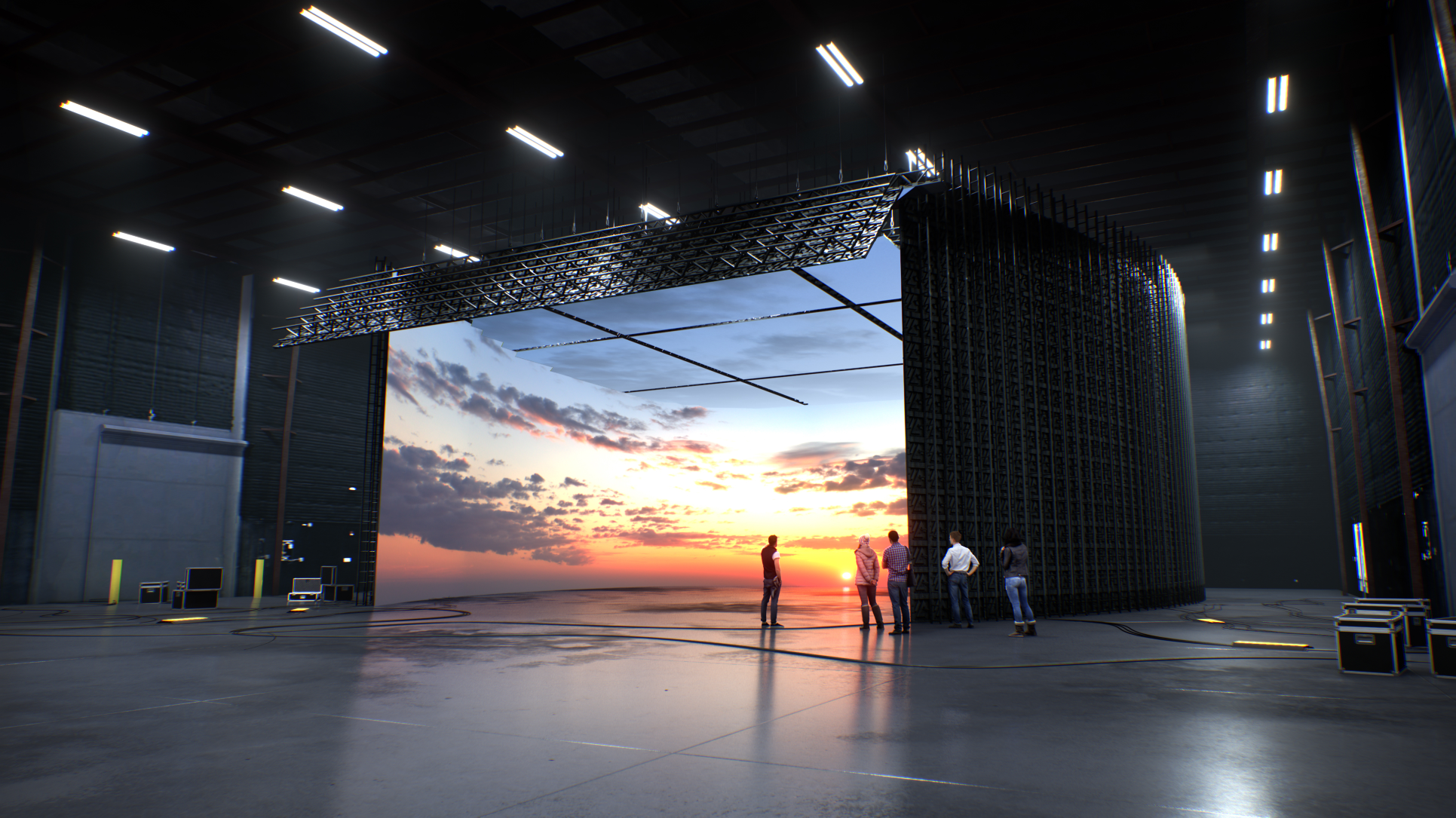

Virtual production has been around for years in the form of rear/front projection, matte painting, blue/green screen, chromakey and motion capture. But it took “The Mandalorian” to bring its latest iteration to the forefront: in-camera VFX virtual production (aka “Mando Style”), which uses LED panels to display a background that not only reflects on actors and foreground scenery but that actors can also see and interact with. It’s a seamless transition between the real and unreal.

Research firm Research and Markets reported that the global virtual production market was valued at $2.4 billion in 2021 and would grow to $3.1 billion by 2026, a compound annual growth rate (CAGR) of 14.3%. But that forecast dates from February 2021. By December 2021, the firm had raised its CAGR estimate to 17.6%, and by October 2022, in a forecast extending to 2027, to 18.7%.
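As a quick check on growth figures like these, CAGR is the constant yearly rate r that satisfies end = start × (1 + r)^years. The minimal Python sketch below (the function names are illustrative) computes that rate and projects a value forward. Plugging in $2.4 billion and $3.1 billion over the five years from 2021 to 2026 gives roughly 5.3% a year, so the 14.3% rate presumably rests on a different base value or period in the underlying report.

```python
def cagr(start_value: float, end_value: float, years: float) -> float:
    """Compound annual growth rate: the constant yearly rate r with
    end_value == start_value * (1 + r) ** years."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1.0 / years) - 1.0


def project(start_value: float, rate: float, years: float) -> float:
    """Value after compounding start_value at `rate` for `years` years."""
    return start_value * (1.0 + rate) ** years


if __name__ == "__main__":
    # Figures quoted in the February 2021 forecast, in billions of dollars.
    implied = cagr(2.4, 3.1, 5)
    print(f"Implied CAGR 2021-2026: {implied:.1%}")
    # What the quoted 14.3% rate would imply for 2026 from a $2.4B base.
    print(f"2026 value at 14.3%: ${project(2.4, 0.143, 5):.1f}B")
```

Because `project` is the inverse of `cagr`, compounding the start value at the computed rate recovers the end value, which makes it easy to sanity-check any published forecast.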

Non-Virtual Savings

A term to know is “volume,” the space where virtual production happens. Volumes, combined with everything else virtual production offers, are a cost- and time-saving juggernaut.

According to A.J. Wedding, co-founder & director of virtual production at Orbital Studios, the big LED wall is what amazes most people, but virtual reality headsets let all the department heads scout locations together from one place, saving both travel and time.

From a production cost standpoint, consider the FX series “Snowfall,” where Wedding served as virtual production supervisor for seasons five and six. The series saved $1 million per season.

While a 62x14-foot LED wall was used for a penthouse set, Wedding explained that the savings didn’t end there. “The car process used to mean one car, one scene and one location. But for ‘Snowfall,’ it meant multiple scenes with multiple cars. That’s saving money and it looks so good. We also used a 20x12-foot LED wall on casters and moved it from set to set to show Paris or Detroit, so the production didn’t have to pay for two LED walls, just the single portable one.”


With virtual production, the set is typically ready before the actors, and turnaround is sometimes 30-50% faster, according to Erik Weaver, head of virtual and adaptive production at the Entertainment Technology Center (ETC) at USC, a think tank working on industry standards and on standardizing education and curricula in the field.


Until recently, virtual production was limited to complex, outlandish and science fiction productions. “There’s been a radical change in who uses virtual production that will continue,” said Weaver. “The shift is from large ‘Mando’ volumes to places like Stargate Studios that purchased LEDs from Costco to use as side panels outside of train windows.”

According to Weaver, tighter pixel pitch (the distance between pixels, in millimeters) will mean cameras can get closer to the LED wall. “‘Mando’ used a 2.84 pitch, so the camera was 16 feet away, while a pitch of 1.5 means you can get the camera 3 feet from the wall. That’s what Orbital does. This makes virtual production economically feasible for mid-sized and smaller stages.”
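Because pixel pitch is simply the center-to-center spacing of the LEDs, it maps directly to how many pixels a wall carries. The minimal Python sketch below compares the 2.84mm and 1.5mm pitches Weaver mentions, applying both to the 62-foot width of the “Snowfall” penthouse wall purely for illustration (that wall’s actual pitch isn’t stated). It models density only; the real minimum camera distance also depends on lens, focus and moiré behavior, which this sketch does not attempt to capture.

```python
MM_PER_FOOT = 304.8  # exact by definition


def pixels_per_foot(pitch_mm: float) -> float:
    """Pixels along one foot of wall, given center-to-center pixel pitch in mm."""
    return MM_PER_FOOT / pitch_mm


def pixels_across(wall_width_ft: float, pitch_mm: float) -> int:
    """Whole pixels that fit across a wall of the given width in feet."""
    return int(wall_width_ft * MM_PER_FOOT // pitch_mm)


def areal_density_ratio(coarse_pitch_mm: float, fine_pitch_mm: float) -> float:
    """How many times more pixels per unit area the finer pitch packs in."""
    return (coarse_pitch_mm / fine_pitch_mm) ** 2


if __name__ == "__main__":
    for pitch in (2.84, 1.5):
        print(f"{pitch} mm pitch: {pixels_per_foot(pitch):.0f} px/ft, "
              f"{pixels_across(62, pitch)} px across a 62-ft wall")
    print(f"1.5 mm packs {areal_density_ratio(2.84, 1.5):.1f}x "
          "the pixels per area of 2.84 mm")
```

Density scales with the square of the pitch ratio, which is why a seemingly modest move from 2.84mm to 1.5mm nearly quadruples the pixel count (and the processing load) for the same wall area.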

For the new History Channel original series “History’s Greatest Heists with Pierce Brosnan,” virtual production significantly compressed Brosnan’s time on set. “This is a really cool crime reenactment show that places Brosnan as though he were in the locations,” said Wedding. “They created the virtual environments based on all the re-creations and shot Brosnan for the entire season in three days, with 11 setups a day and working no more than six hours a day.”

Familiar Names

Last December, Amazon Studios opened Stage 15, its new volume, and formed the new Amazon Studios Virtual Production (ASVP) department. The 34,000-square-foot stage, with 46-foot ceilings, accommodates a near-wraparound LED wall 80 feet in diameter, with two additional floating walls 26 feet tall.

Stage 15 is fully connected to the AWS cloud and is an integrated part of the production-in-the-cloud ecosystem. The facility provides a camera-to-cloud workflow, with a direct connection from Stage 15 to AWS S3 storage for cameras, post-production, remote global collaboration and compute power.

Ken Nakada, head of Virtual Production Operations for Amazon Studios, says what’s learned during a project stays with ASVP. “With normal productions, the experience, crew and key learnings wrap at the end of each given project. ASVP aims to retain and build on these learnings, thoroughly document them, and share them across projects to elevate the industry’s use of this new technology.”

In August 2021, NEP Group launched NEP Virtual Studios through its acquisition of Prysm Collective, Lux Machina and Halon Entertainment. Stage 22 at Trilith Studios outside Atlanta was designed and commissioned by Lux Machina for Prysm and is powered by Lux Machina’s real-time 3D engines. The fully enclosed 80-by-90-by-29.5-foot volume sits in an 18,000-square-foot purpose-built stage; it is built to accommodate large set pieces and is wrapped 360 degrees in LED panels, including an LED ceiling.

“Stage 22 incorporates new LED, volumetric capture and structural technologies with amazing image processing capabilities to allow the utmost flexibility for today’s filmmakers,” said Wyatt Bartel, vice president of Production for Lux Machina. “We’ve seen virtual production grow significantly in recent years. This is partially due to the increasing capabilities and affordability of virtual production technology, broader understanding from filmmakers of its benefits and capabilities, and the growing number of virtual production companies worldwide.”

Last October, Sony Pictures Entertainment (SPE) announced its first volume, located at Sony Innovation Studios. It uses Sony’s high-brightness, wide-color-gamut Crystal LED “B-Series” display, a fine-pitch LED system co-developed by Sony Electronics and SPE for use in virtual production.

Other studios also work with LED manufacturers to customize their walls: Orbital Studios works closely with Planar, and ETC with a variety of manufacturers.

Live Broadcast Virtual Production

“Mo-Sys has been involved in the evolution of in-camera visual effects [ICVFX] from the very beginning,” said Mike Grieve, Mo-Sys Commercial Director. “We’ve been selling solutions for ICVFX almost as long as we’ve been selling broadcast virtual set and augmented reality solutions.”

Mo-Sys sees companies using its ICVFX solutions because the real-world alternative is either too costly, too dangerous or technically impossible. “ICVFX is normally associated with LED virtual production, but it’s also used with blue/green screen,” said Grieve. “LED ICVFX is popular for commercials and drama, blue screen ICVFX for cinematic drama and green screen ICVFX for general entertainment.”

Then there’s multicamera switching. “When the director switches cameras, it takes five frames for the wall to update with the new camera’s background with the correct perspective based on the camera/lens tracking data,” said Grieve. “This delay has to be compensated for, and that’s what Mo-Sys’ Multi-Cam Switching does.”
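One simple way to picture the compensation Grieve describes is a fixed delay line on the program cut: hold the switch for the same five frames the wall needs, so program output changes cameras on the frame the wall shows the new perspective. The Python sketch below is a hypothetical illustration of that idea, not Mo-Sys’ Multi-Cam Switching implementation; the function names and the frame rates in the usage note are assumptions.

```python
from __future__ import annotations

from collections import deque

# From Grieve's description: the wall needs about five frames to re-render
# the background for a newly selected camera.
WALL_LATENCY_FRAMES = 5


def latency_ms(frames: int, fps: float) -> float:
    """Convert a latency measured in frames into milliseconds."""
    return frames * 1000.0 / fps


def delayed_program(selections: list[str], latency: int = WALL_LATENCY_FRAMES) -> list[str]:
    """Camera shown on program output for each frame (hypothetical sketch).

    `selections` holds the director's chosen camera per frame. Each choice
    passes through a FIFO delay line, so the program cut lands `latency`
    frames later: the frame on which the wall shows the background
    rendered for the new camera.
    """
    if not selections:
        return []
    # Prime the delay line with the opening camera so early frames are defined.
    line = deque([selections[0]] * latency)
    program = []
    for cam in selections:
        line.append(cam)
        program.append(line.popleft())
    return program
```

With the default five-frame latency, a director’s cut from camera A to camera B on frame 3 reaches program on frame 8. At 25 fps, five frames is 200 ms; at 24 fps, it is about 208 ms.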

For Vizrt/NewTek’s Martin Klampferer, R&D manager and product owner of Viz Engine, virtual production is all about live. “It’s virtual studios, blue/green screen and Viz Engine delivering the graphics,” he said. “And, increasingly, it’s about live talent in front of video walls. Viz Engine combines everything, even with multiple cameras. Viz Engine gets the camera tracking data in 3D space, the key of the talent and the graphics, and does the complete compositing, including placing elements in front of and behind the talent.”
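Placing graphics in front of or behind keyed talent comes down to drawing layers in depth order. The following Python sketch shows that idea for a single pixel, using a painter’s algorithm (far layers first) and the standard Porter-Duff “over” operator. The `Layer` type, depth values and colors are hypothetical, and this is not a description of how Viz Engine works internally.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Layer:
    name: str
    depth_m: float                      # distance from the tracked camera (hypothetical units: meters)
    color: tuple[float, float, float]   # RGB, 0.0-1.0
    alpha: float                        # coverage at this pixel, 0.0-1.0


def over(src_rgb, src_a, dst_rgb):
    """Porter-Duff 'over': straight-alpha source onto an opaque destination."""
    return tuple(s * src_a + d * (1.0 - src_a) for s, d in zip(src_rgb, dst_rgb))


def composite_pixel(background_rgb, layers):
    """Painter's algorithm: draw layers far-to-near over the virtual background."""
    out = tuple(background_rgb)
    for layer in sorted(layers, key=lambda l: l.depth_m, reverse=True):
        out = over(layer.color, layer.alpha, out)
    return out
```

Moving a semi-transparent graphic from 2 m to 5 m (behind a talent layer keyed at 3 m) is enough to hide it behind the opaque presenter, because the talent is then drawn after it.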

Non-Virtual Hurdles

The biggest hurdles come down to education and misinformation.

“This is not an LED rental, it’s a workflow,” said Wedding. “Producers say ‘let’s rent the LED wall, let me get the individual companies needed,’ but there’s no synergy. No department head on top of it. Something always falls through the cracks, and I’ve seen it multiple times. We want to be the one you fire; that way we can control it all and tell people what’s possible.”

“Mo-Sys opened a facility in Los Angeles where people could learn, train and experiment,” said Grieve. “We also started the Mo-Sys Academy in London where on-set virtual production could be taught to students, as well as cross-training experienced production people.”

Perhaps Weaver put it best: “We’re at virtual production 1.0. It’s the difference between having a nice pasta dinner delivered to you from a restaurant and getting the ingredients and learning how to make it from scratch.”

Michael Silbergleid serves as president of Silverknight Consulting in Fort Myers, FL, a strategic communications firm serving the broadcast and media industries since 1999. Previously, he was editor of Television Broadcast magazine and Sports TV Production, the first sports-dedicated trade magazine for the industry. He was manager of Educational Television and Telecommunication Engineering for the Huntsville City School System in Alabama and has been a producer, director, video editor, chief engineer and facility designer. He holds an MA degree in Telecommunication and Film Management from the University of Alabama, and two BA degrees in Dramatic Arts & Dance and in Speech Communications from SUNY Geneseo.