French building new ultra HD encoding system

A French project starts this week to develop a new end-to-end encoding scheme for future ultra HD services, with the aim of reducing the bandwidth they consume.

This has been driven by concerns over the huge amount of bandwidth that ultra HD would consume using currently available encoding schemes such as H.264. Called 4EVER, the project involves a consortium of research laboratories including France Telecom’s Orange Labs, the Télécom ParisTech and INSA-IETR University labs, as well as transcoding vendor ATEME, state broadcaster France Télévisions, GlobeCast, TeamCast, Technicolor and Doremi.

The objective is to apply the emerging High Efficiency Video Coding (HEVC) standard for encoding ultra HD, but also to go further by optimizing the whole end-to-end video transmission cycle from contribution to final consumption. The consortium believes this will achieve further efficiency savings and bandwidth reductions beyond those possible through compression alone.

HEVC is the likely successor to H.264 and is under joint development by ISO/MPEG (Moving Picture Experts Group) and the ITU-T VCEG (Video Coding Experts Group). Currently available as a complete draft, it is due for final ratification as an international standard in January 2013, offering improved ability to trade between the key parameters of encoding complexity, compression rate, robustness against transmission errors, bandwidth, and video quality. It is designed to exploit the dramatic increases in computational power that have arrived since H.264 was conceived, so greater complexity is assumed, and it is expected to halve the bandwidth consumed by video at a given quality. This is roughly the same improvement that H.264/MPEG4 achieved over the preceding MPEG2 standard, sufficient to make the effort of development and deployment worthwhile.

The principal technical improvement lies in replacing the relatively rigid macroblocks that served as the compression units under H.264 and MPEG2 with more flexible, variable-sized coding units (CUs) that allow individual frames to be partitioned into smaller sections. This, combined with new prediction algorithms, improves compression within frames as well as achieving higher levels of inter-frame compression. Put simply, a greater proportion of a frame can be compressed.
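The idea of variable-sized coding units can be illustrated with a small quadtree sketch. This is not how an HEVC encoder decides its partitions (real encoders weigh bit cost against distortion); the split rule here, the 64-sample starting block, the 8-sample minimum, the threshold value, and the function names are all assumptions chosen for illustration.

```python
def block_range(frame, x, y, size):
    """Spread between the brightest and darkest sample in a square block."""
    values = [frame[row][col]
              for row in range(y, y + size)
              for col in range(x, x + size)]
    return max(values) - min(values)


def partition(frame, x, y, size, min_size=8, threshold=16):
    """Return a list of (x, y, size) units that together cover the block.

    A block is kept whole when it is flat enough (hypothetical rule) or
    already at the minimum size; otherwise it is split into four quadrants.
    """
    if size <= min_size or block_range(frame, x, y, size) <= threshold:
        return [(x, y, size)]
    half = size // 2
    units = []
    for dy in (0, half):
        for dx in (0, half):
            units.extend(partition(frame, x + dx, y + dy, half,
                                   min_size, threshold))
    return units


# Synthetic 64 x 64 frame: flat grey, with fine detail in the top-left corner.
frame = [[100] * 64 for _ in range(64)]
for row in range(16):
    for col in range(16):
        frame[row][col] = (row * 16 + col) % 256

units = partition(frame, 0, 0, 64)
for size in sorted({s for _, _, s in units}, reverse=True):
    count = sum(1 for _, _, s in units if s == size)
    print(f"{count} unit(s) of {size} x {size}")
```

In this toy frame the flat areas stay as large units while the detailed corner is broken into small ones, which is the flexibility that a fixed macroblock grid lacks.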

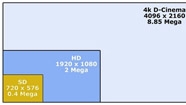

The implications for future HD services will be significant. The ITU has defined two UHDTV standards, 4K and 8K, as multiples of the existing 1080p format defined in the ITU-R Rec. 709 standard. HD 1080p, at present referred to as full HD, displays at a resolution of 1920 pixels wide by 1080 high in progressive scan, corresponding to a widescreen aspect ratio of 16:9.

4K is defined simply by doubling 1080p in each direction to yield pictures with four times the spatial resolution, at 3840 pixels wide by 2160 high, which is about 8 megapixels. 8K then doubles again to a resolution of 7680 wide by 4320 high, spatially 16 times 1080p, or about 33 megapixels. The bandwidth implications of these standards have alarmed some operators and broadcasters, since an 8K program running at the maximum allowed 120 frames per second would require 320 times the bit rate of many current HD transmissions, given that these are often not yet even 1080p, but either 720p or interlaced 1080i.
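The pixel arithmetic behind these formats is easy to check. The short sketch below uses only the resolutions quoted in this article; the function names are illustrative.

```python
# Resolutions as quoted in the article: (width, height) in pixels.
FORMATS = {
    "720p": (1280, 720),
    "1080p": (1920, 1080),
    "4K": (3840, 2160),
    "8K": (7680, 4320),
}


def pixels(name):
    """Total pixels per frame for a named format."""
    width, height = FORMATS[name]
    return width * height


def spatial_ratio(name, baseline="1080p"):
    """How many times more pixels a format has than the baseline."""
    return pixels(name) / pixels(baseline)


for name in FORMATS:
    print(f"{name}: {pixels(name) / 1e6:.1f} megapixels, "
          f"{spatial_ratio(name):.2f}x 1080p")
```

This covers spatial resolution only. Frame rate and bit depth, which also feed into the bit-rate figures quoted above, are not modeled.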

4K will arrive first, but even that at a normal frame rate could easily consume around 10x the bandwidth of current 1080i or 720p HD services. Use of H.264 reduces the bit rate by around 50 percent compared with MPEG2, and the new HEVC promises to halve that again.

While 4EVER has not given any precise targets, it is likely to be aiming to achieve a further 50-percent efficiency gain through optimizing the whole delivery chain, and if so would bring the bit rate required for 4K HD down to manageable proportions, around double that required for current 720p or 1080i HD. Given that 4K services are unlikely to arrive for at least five years, prospective increases in network bandwidth should be able to absorb that.
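As a rough check on that estimate, the successive savings can be chained together. The sketch below assumes that the 10x figure compares 4K with current HD under the same codec, that HEVC halves the bit rate, and that 4EVER's end-to-end optimization halves it again. The last figure is this article's speculation, not a published target, and the function name is illustrative.

```python
def relative_bit_rate(raw_multiple, savings):
    """Apply a sequence of fractional savings to a starting bit-rate multiple."""
    rate = raw_multiple
    for saving in savings:
        rate *= 1.0 - saving
    return rate


FOUR_K_MULTIPLE = 10.0  # article's estimate: 4K versus current 720p/1080i HD
HEVC_SAVING = 0.5       # HEVC expected to halve bit rate at a given quality
CHAIN_SAVING = 0.5      # assumed further gain from end-to-end optimization

after_hevc = relative_bit_rate(FOUR_K_MULTIPLE, [HEVC_SAVING])
after_chain = relative_bit_rate(FOUR_K_MULTIPLE, [HEVC_SAVING, CHAIN_SAVING])

print(f"4K with HEVC alone: {after_hevc:.1f}x current HD")
print(f"4K with HEVC plus chain optimization: {after_chain:.1f}x current HD")
```

Under these assumptions the result is 2.5 times the current HD bit rate, in line with the "around double" estimate.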

The 4EVER project is not just about bandwidth reduction, as it aims also to improve the whole enhanced HDTV experience for viewers, even when consuming content on small devices that would not benefit directly from ultra HD. So, initially a large part of the research effort will go into evaluation of the overall improvement in TV experience that can be provided not purely from higher spatial resolutions, but also higher frame rates, increased color depth, and surround sound.

Another objective is to make deployment of HEVC efficient and affordable. Here, ATEME will play an important role, having already won a number of major transcoding contracts worldwide for H.264 at both the contribution and distribution stages. ATEME’s strategy is to start from the best video quality possible at a given bit rate at the contribution stage, using its 10-bit H.264 technology for example. It then cascades down to lower bit rates in an ordered tree-like structure, so that distribution to different target screens does not require going back to the source each time. This is enshrined in the latest version of its transcoding software EAVC4, which is likely to serve as a blueprint for 4EVER.

Most multiscreen encoding platforms exploit hardware parallelism to encode for different screen formats simultaneously. But, for each of the formats, the encoding process has to begin from the contributed source and compress right down to the target resolution. ATEME, though, has improved efficiency by observing that once video has been compressed to a given level, this can then serve as the source for all outputs at different lower levels if the encoding is done correctly.

Suppose, for example, a video stream has already been downscaled from 1080p full HD at 1920 x 1080 resolution to 720p HD for main screen TVs at 1280 x 720. Then, the operator wants to produce a standard-definition stream for a smartphone at 640 x 360. With ATEME’s EAVC4, it would not be necessary to go back to the 1080p source; the encoder could work from the 720p stream instead.
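The cascading idea in this example can be sketched in a few lines. This is not ATEME's patented method, whose details are not public. It only illustrates choosing, for each target, the smallest already-produced resolution that is still large enough to serve as input. The function names and the use of input pixels per frame as a crude measure of work are assumptions.

```python
def pixel_count(resolution):
    """Pixels per frame for a (width, height) pair."""
    width, height = resolution
    return width * height


def cascade_plan(source, targets):
    """Map each target resolution to the input it would be derived from.

    Targets are handled from largest to smallest, so that each finished
    output becomes a candidate input for the smaller ones that follow.
    """
    plan = {}
    available = [source]
    for target in sorted(targets, key=pixel_count, reverse=True):
        candidates = [r for r in available
                      if r[0] >= target[0] and r[1] >= target[1]]
        if not candidates:
            raise ValueError(f"no input large enough for {target}")
        plan[target] = min(candidates, key=pixel_count)
        available.append(target)
    return plan


def input_pixels(plan):
    """Total pixels read per frame across all encodes in a plan."""
    return sum(pixel_count(parent) for parent in plan.values())


SOURCE = (1920, 1080)
TARGETS = [(1280, 720), (640, 360)]

cascaded = cascade_plan(SOURCE, TARGETS)
independent = {target: SOURCE for target in TARGETS}  # always restart from source

for target, parent in cascaded.items():
    print(f"{target[0]} x {target[1]} is derived from {parent[0]} x {parent[1]}")
print(f"input pixels per frame, cascaded: {input_pixels(cascaded)}")
print(f"input pixels per frame, independent: {input_pixels(independent)}")
```

The pixel totals only indicate the direction of the saving. They say nothing about the encoding speed an actual product achieves.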

ATEME has published test results using standard benchmarks showing that the resulting saving in computational power translates into an average threefold increase in performance at all video quality (VQ) settings. This means that operators can either encode three times faster at their current VQ level, or increase VQ significantly at the prevailing encoding speed, or a combination of both.

The main benefit for many operators will be the potential to increase capacity without having to upgrade their infrastructure. For example, VOD output could be tripled, or three times as many IPTV channels could be delivered by a given processor.

ATEME has called the new software “intelligent parallelization,” although it does not actually involve parallel processing as such. It is more of a pipelining approach, in effect encoding in a series of steps, none of which needs to be repeated. The encoding, running on an Intel Sandy Bridge chip, uses a new patented approach, which, as the company points out, avoids the dependency on external intellectual property that has bedeviled the MPEG movement over the years. This also gives the 4EVER project an advantage over any other ultra HD transcoding project, assuming it does use this ATEME technology.