Audio Consoles Integrate AES67 Advances

NEW YORK—Thanks to the adoption of the SMPTE ST 2110 standard, 2018 promises to be the year when integration of audio and video becomes easier and faster. Because all streams are synchronized, it becomes simpler to record content, pull audio and video apart to work on each component individually, and then bind them back together. How will console manufacturers respond to the new requirements?

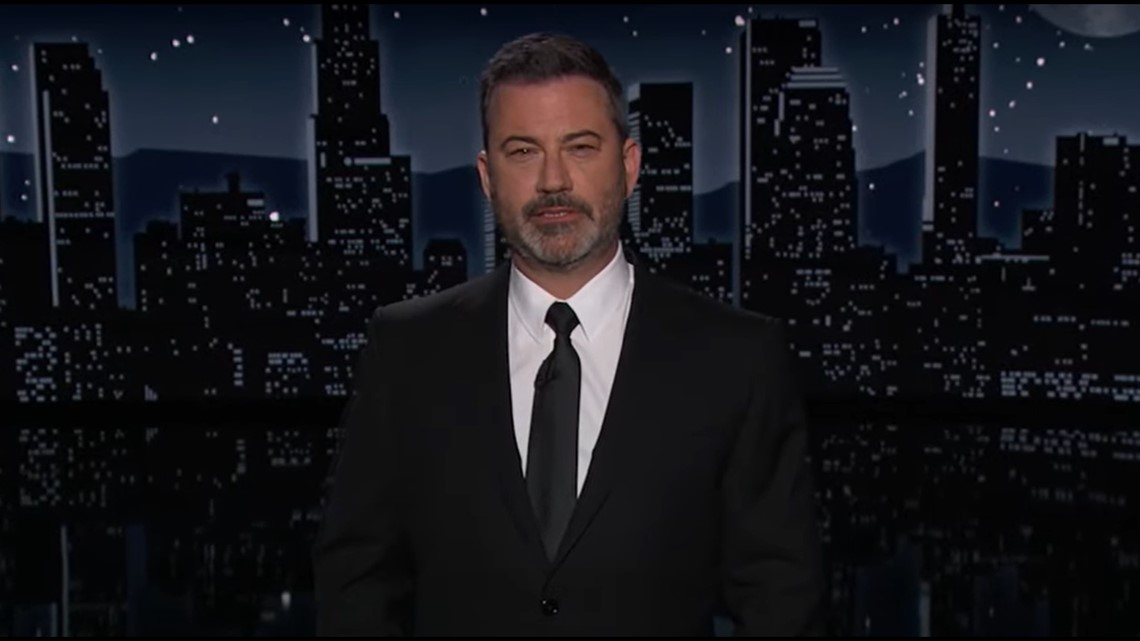

Phil Owens, senior sales engineer, Wheatstone

AES67 will be the required audio format. Every packet in an AES67 stream carries a timestamp referenced to a shared network clock (IEEE 1588 Precision Time Protocol, or PTP), making it possible to resynchronize audio and video. Phil Owens, senior sales engineer at Wheatstone in New Bern, N.C., says that the new SMPTE standard will be good for his company.
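To make the timing point concrete, here is a minimal Python sketch. It assumes the common ST 2110 practice of deriving RTP timestamps from PTP time, with a 48 kHz media clock for AES67 audio and a 90 kHz clock for video; the function names are illustrative and do not come from any product or library. An audio packet and a video frame captured at the same instant carry different raw timestamps, but a receiver locked to the same PTP clock can map both back to that instant and re-align them.

```python
from fractions import Fraction

# Assumed media clock rates (common ST 2110 practice): 48 kHz for AES67
# audio, 90 kHz for video. RTP timestamps are 32 bits and wrap around.
AUDIO_CLOCK_HZ = 48_000
VIDEO_CLOCK_HZ = 90_000
RTP_WRAP = 2 ** 32


def rtp_timestamp(ptp_seconds: Fraction, clock_hz: int) -> int:
    """Sender side: stamp a packet with the media clock count derived
    from PTP time (seconds since the PTP epoch), modulo 2**32."""
    return int(ptp_seconds * clock_hz) % RTP_WRAP


def unwrap_to_seconds(rtp_ts: int, clock_hz: int, now_ptp: Fraction) -> Fraction:
    """Receiver side: recover the absolute PTP time of a timestamp, using the
    receiver's own PTP-locked clock to decide how many times it has wrapped."""
    now_ticks = int(now_ptp * clock_hz)
    wraps = (now_ticks - rtp_ts + RTP_WRAP // 2) // RTP_WRAP
    return Fraction(wraps * RTP_WRAP + rtp_ts, clock_hz)


# One audio packet and one video frame captured at the same PTP instant.
capture = Fraction(1_700_000_000) + Fraction(1, 50)
audio_ts = rtp_timestamp(capture, AUDIO_CLOCK_HZ)
video_ts = rtp_timestamp(capture, VIDEO_CLOCK_HZ)

# The receiver sees them a few milliseconds later. Both timestamps map
# back to the same instant, so the two streams can be re-aligned.
now = capture + Fraction(3, 1000)
offset = (unwrap_to_seconds(audio_ts, AUDIO_CLOCK_HZ, now)
          - unwrap_to_seconds(video_ts, VIDEO_CLOCK_HZ, now))
print(f"audio/video offset: {float(offset)} s")
```

Exact fractions are used so the example has no floating-point rounding; a real receiver would work in integer clock ticks.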

“In the past, with HD-SDI, audio was embedded in the video stream,” he said. “You had to de-embed it—which required a bunch of hardware—then re-embed it, which required even more hardware. It’s going to take a while for stations to adopt AES67, but when they are looking at new systems they will have to make sure they support the SMPTE ST 2110 requirements.

“For us, it’s good because we have a whole IP infrastructure we’ve developed over the last decade, which has been used in a large number of radio and TV facilities,” Owens continued. “Our Wheatstone Blade network is an IP audio network that’s perfectly suited to supporting the SMPTE ST 2110 spec. If a station is looking at IP-based systems, the Blade offers great connectivity between various systems.”

Owens pointed to a second benefit to AES67 adoption. “All of the different platforms that are in use, such as house routers, audio systems and intercoms, will now have a common protocol,” he said. “We’re constantly adding new features to the Blade network. The most recent is a utility that can be built in that will let a Blade connect to a Dante network. We’re continuing to develop control surfaces—what we used to call audio boards—that take advantage of our Blade network and use it as their backbone.”

MOVING AWAY FROM THE PHYSICAL

Logitek Electronic Systems is banking on a continued migration away from physical consoles, according to Frank Grundstein, director of sales for the Houston-based company. “The world has become accustomed to touchscreens,” he said. “Whether they are in a mobile phone, on a tablet or part of an automation system, touch devices are now the norm for running applications and making menu choices. Consequently, in the audio world for local market television, we have increasingly seen a move away from large mixing consoles.”


Stations that rely heavily on automation tend to prefer having no physical audio console at all, according to Grundstein. “A ‘virtual’ console presented on a touchscreen supplies all the controls needed for local takeover of audio. Most control is handled via the program automation system,” he said. “While some users still prefer the convenience of a physical board, they don’t need or want an audio console that takes up half a room.”

To address these changing needs, Logitek introduced its Helix line of consoles in 2017.

The Helix line incorporates a “glass cockpit” approach to audio management as well as commonly requested physical components. Two models for television production are available: Helix Studio, a full-featured touchscreen console with multitouch capability; and Helix TV, a physical board with a mix of touch-sensitive automated faders and touchscreens for source selection, metering and other functions.

The physical console, available in configurations ranging from 6 to 36 faders, is compact yet provides all the audio control needed for local market TV operations. As a virtual device, Helix Studio can be configured to meet any station’s requirement for faders, source selection, monitoring, metering and more. Both products work in tandem with the Logitek JetStream Plus AoIP router, which addresses another TV market need, minimal rack space, with 240 channels of I/O in only four rack units.

The cost of a system is based on the number of faders and how much audio I/O will be needed for the router, according to Grundstein.

“Most TV facilities have been buying a physical console with 24 faders,” he said. “They sometimes go up to 36 but generally don’t need that many faders. Some station groups have determined that they don’t want any physical control surfaces in their studios.”

Some operators are not comfortable working without physical faders, Grundstein added. “They may buy a physical console, but because the consoles are router-based, they buy one that has fewer faders than the ones on the board they’re replacing,” he said. “Other engineers feel that everything is going into automation and simply do not need any physical faders. Our virtual console, the Helix Studio, connects to the JetStream engine. When the automation system tells the audio engine to do something, the moves are reflected on the displayed faders.”
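The arrangement Grundstein describes, in which the automation system drives the audio engine and the touchscreen simply mirrors the result, can be sketched as an observer pattern. This is a minimal Python sketch under that assumption; `AudioEngine`, `VirtualConsoleView` and the channel name are hypothetical and do not represent Logitek's JetStream or Helix software.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# Hypothetical names throughout: an illustration of shared engine state
# driving a touchscreen view, not any vendor's actual API.
FaderListener = Callable[[str, float], None]


@dataclass
class AudioEngine:
    """Holds the authoritative fader levels (in dB) for every channel."""
    levels: Dict[str, float] = field(default_factory=dict)
    listeners: List[FaderListener] = field(default_factory=list)

    def subscribe(self, listener: FaderListener) -> None:
        self.listeners.append(listener)

    def set_level(self, channel: str, level_db: float) -> None:
        # Any controller (automation, touchscreen, physical surface) calls
        # this; the engine updates its state and notifies every attached view.
        self.levels[channel] = level_db
        for notify in self.listeners:
            notify(channel, level_db)


class VirtualConsoleView:
    """A touchscreen console that only mirrors the engine's state."""

    def __init__(self, engine: AudioEngine) -> None:
        self.displayed: Dict[str, float] = {}
        engine.subscribe(self.on_fader_change)

    def on_fader_change(self, channel: str, level_db: float) -> None:
        # A real UI would redraw the on-screen fader here.
        self.displayed[channel] = level_db


engine = AudioEngine()
view = VirtualConsoleView(engine)
engine.set_level("anchor_mic", -6.0)  # e.g. a cue issued by automation
```

Keeping the engine as the single source of truth means a physical surface could subscribe the same way, so every control point shows the same fader positions.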

Dave Letson, vice president of sales, Calrec

REMOTE POSSIBILITIES

U.K.-based audio console developer Calrec is targeting remote broadcasting. “Traditional broadcasters are seeing huge competition from on-demand web streaming services,” said Dave Letson, vice president of sales. “To counter this, they are looking to cover more live events, and some are moving into niche programming to provide a broader choice and a more tailored viewing experience. To achieve this, more versatility is being demanded from the audio console.”

Calrec’s Apollo console is a natural fit for this market, according to Ian Cookson, communications manager. “The real benefit of remote production is not really size reduction but the exact opposite,” he said. “It allows the broadcaster to do more with their resources for a nominal expenditure when compared to using traditional methods.”

For example, an Apollo console based at a sports broadcaster’s HQ can now cover multiple games from multiple locations, allowing providers to offer less popular sports that may not pull the viewing figures to warrant the expenditure of a full OB truck, Cookson noted. “As viewers are changing the ways they consume TV, there is an expectation to be able to watch whatever they desire,” he said. “Broadcasters need to keep up with this demand but don’t necessarily have the budget to use traditional methods. This way the output increases and the quality doesn’t suffer.”

Calrec addresses this need for remotely controlled audio with the RP1, which is essentially a mixing console in a 2U box, according to Cookson. The RP1 allows the broadcaster to overcome the three main hurdles for audio in a remote production workflow: latency, control and transport.

“The RP1 has DSP inside, so it can mix locally at the venue, which eradicates any latency for monitoring and IFBs,” he said. “These DSP resources are controlled from the main console at the centralized facility.”

Because this control data is a very small amount of information, the delay from a fader on the surface to the DSP in the RP1 is negligible. In addition, the RP1 integrates with any of the backhaul technologies broadcasters use, from a traditional embedded SDI stream to emerging AoIP technologies.
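For a rough sense of scale, the sketch below compares a continuous stream of fader-move messages with a single uncompressed audio channel. The 32-byte message size and 100 updates per second are hypothetical placeholders, not Calrec figures, and 48 kHz, 24-bit audio is assumed.

```python
# Hypothetical figures for illustration only; the article gives no
# message sizes or update rates for the console-to-RP1 control link.
CONTROL_MSG_BYTES = 32          # assumed size of one fader-move message
CONTROL_UPDATES_PER_SEC = 100   # assumed update rate while a fader is moving

# Assumed audio format: 48 kHz, 24-bit linear PCM.
SAMPLE_RATE_HZ = 48_000
BITS_PER_SAMPLE = 24


def control_bits_per_sec(msg_bytes: int, updates_per_sec: int) -> int:
    """Bit rate of a steady stream of control messages."""
    return msg_bytes * 8 * updates_per_sec


def pcm_bits_per_sec(channels: int) -> int:
    """Raw PCM payload rate for uncompressed audio, ignoring packet headers."""
    return channels * SAMPLE_RATE_HZ * BITS_PER_SAMPLE


control = control_bits_per_sec(CONTROL_MSG_BYTES, CONTROL_UPDATES_PER_SEC)
one_channel = pcm_bits_per_sec(1)
print(f"control: {control} bit/s, one audio channel: {one_channel} bit/s")
print(f"one audio channel carries {one_channel // control}x the control data")
```

Under these assumptions, even a continuously moving fader generates about one forty-fifth of the data of a single audio channel.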

“Calrec has an AoIP solution—a 1RU rackmount box that connects to Calrec’s consoles via the proprietary Hydra2 protocol like any other I/O box,” Cookson said. “This acts as a gateway from Hydra2 to AoIP using the AES67 standard.”

These boxes can accommodate one or two cards, giving either 256 or 512 bidirectional signals, which makes them one of the highest-density AoIP solutions currently available, according to Cookson.

“To the Calrec user, the AoIP ports appear on the console like any other I/O box, so there is no learning curve, and sources can be patched to and from the AoIP box quickly and easily.”
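To put the 256 and 512 bidirectional signals Cookson cites into network terms, the sketch below estimates the bandwidth they would occupy in one direction. It assumes 48 kHz, 24-bit audio packed as eight-channel AES67 streams with a 1 ms packet time (a common AES67 configuration, not a documented Calrec setting), and it counts RTP, UDP and IPv4 headers but ignores Ethernet framing.

```python
# Assumptions (a common AES67 configuration, not a documented Calrec setting):
SAMPLE_RATE_HZ = 48_000
BYTES_PER_SAMPLE = 3             # 24-bit linear PCM
PACKET_TIME_MS = 1               # 1 ms packets -> 48 samples per channel
CHANNELS_PER_STREAM = 8
HEADER_BYTES = 12 + 8 + 20       # RTP + UDP + IPv4; Ethernet framing ignored


def one_direction_bits_per_sec(signals: int) -> int:
    """Approximate network load for `signals` channels sent one way."""
    if signals % CHANNELS_PER_STREAM:
        raise ValueError("sketch assumes full eight-channel streams")
    streams = signals // CHANNELS_PER_STREAM
    samples_per_packet = SAMPLE_RATE_HZ * PACKET_TIME_MS // 1000
    payload_bytes = CHANNELS_PER_STREAM * samples_per_packet * BYTES_PER_SAMPLE
    packets_per_sec = 1000 // PACKET_TIME_MS
    return streams * packets_per_sec * (payload_bytes + HEADER_BYTES) * 8


for cards, signals in ((1, 256), (2, 512)):
    mbps = one_direction_bits_per_sec(signals) / 1e6
    print(f"{cards} card(s), {signals} signals: ~{mbps:.1f} Mbit/s each way")
```

On these assumptions, a fully loaded two-card box moves roughly 610 Mbit/s in each direction before Ethernet framing overhead.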