Virtualizing Storage

Managing storage is receiving growing emphasis. Beyond simply keeping track of where the files are in a system, managing both future and legacy storage systems now means being able to see the content from one view, regardless of where that content resides. Newer file-based products add to the task, since each reaps the benefits of its own dedicated and intelligent storage platform.

In the past it was relatively straightforward to manage each of these entities within its own domain, figuratively speaking. Information was often interchanged between systems at the baseband level, or by using a file-transfer protocol to move files from one system to another.

Users who couldn't manage a network transfer would pop in a USB thumb drive, copy the file, and sneakernet it over to the next device's storage island. This method does not support collaborative workflow processes now aimed at using the same content and repackaging it for another service or delivery.

CENTRALIZED STORAGE

Mapping various media server platforms to a converged network is no easy task. It involves countless hours of configuration, ongoing attention to software updates and drivers, and dealing with those pesky new products that other departments want to add to their workflows.

One solution that has been discussed, promoted and, in some cases, actually implemented is centralized storage. In a perfect world we would want a central repository everyone can access, which can harmoniously share files between all the servers and platforms in the enterprise, and requires little attention or management. However, this is unlikely without having a true commonality of all the devices in the system.

Another approach that users are turning to is an up-and-coming technology called "virtualization."

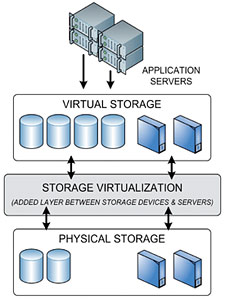

Fig. 1: Storage virtualization inserts an additional layer between the storage devices and the application servers, which serves as the interface between 'virtual storage' and 'physical storage'.

The topic of virtualization, like most other technologies, can take on many different meanings, especially when used in varying contexts and for diverse applications.

Any technology that is camouflaged behind an interface that masks the details of how that technology is actually implemented could be called "virtual."

Virtualization is not new to computing environments. It can be applied to individual disk drives, clusters, storage area networks (SANs), tape libraries, and computers. Virtualization is routinely implemented in different locations and for different functions.

The basic idea behind storage virtualization (Fig. 1) is to take the storage management functions out of the servers (the volume manager and file systems) and out of the disk subsystems (caching, RAID, remote mirroring and LUN masking), and put them into the storage network. This repositioning creates a new virtualization entity that spans all the servers and storage systems.

One might see this as centralized storage; however, virtualization need not depend on a single storage entity serving as the overall repository for all files and all applications going forward.

STORAGE VIRTUALIZATION

Instead, storage virtualization becomes those processes and tool sets that let users easily find their files, logically, without caring about where those files are located physically. In storage virtualization, media files may be on local storage platforms, on a centralized storage system, or remotely located in spinning disk farms or tape libraries. Metadata files might be located in a different serving environment from the media content files, or associated with proxy storage in yet another storage environment.
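The separation of logical names from physical locations described above can be illustrated with a small sketch. This is not any vendor's implementation; the `VirtualCatalog` and `Location` names, the tier labels, and the paths are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Location:
    """Where a file physically lives (all values here are hypothetical)."""
    tier: str   # e.g. "local", "central", "tape"
    path: str   # physical path or tape identifier on that tier


class VirtualCatalog:
    """Maps logical names to physical locations so callers never see the tier."""

    def __init__(self):
        self._entries = {}

    def register(self, logical_name, location):
        self._entries[logical_name] = location

    def resolve(self, logical_name):
        # Raises KeyError if the logical name was never registered.
        return self._entries[logical_name]

    def migrate(self, logical_name, new_location):
        # The physical location changes; the logical name stays the same.
        if logical_name not in self._entries:
            raise KeyError(logical_name)
        self._entries[logical_name] = new_location


catalog = VirtualCatalog()
# Media essence and its metadata may live in different storage environments.
catalog.register("news/clip_001.mxf", Location("local", "/srv/edit1/clip_001.mxf"))
catalog.register("news/clip_001.xml", Location("central", "/meta/clip_001.xml"))

# Moving the media to a tape archive does not change how users ask for it.
catalog.migrate("news/clip_001.mxf", Location("tape", "LTO-0042:clip_001.mxf"))
print(catalog.resolve("news/clip_001.mxf").tier)
```

Users keep asking for `news/clip_001.mxf` before and after the migration; only the catalog entry knows that the file moved.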

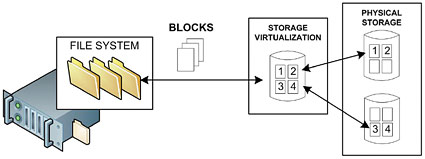

Fig. 2: Block-level virtualization: storage is made available to the operating system or the applications (servers) by means of a virtual hard disk that is formed by the virtualization entity.

From the perspective of servers needing to address various physical storage systems, virtualization is then used to abstract a view of the storage, its location(s) and its resources. Storage virtualization can be implemented in disk and tape devices, at the server, in the fabric switches and directors, or through the use of appliances, which function much like network-attached storage (NAS) file sharing.

The latter, appliance-based storage virtualization for open system environments, can be either in-band, where the appliance sits in the data path, or out-of-band, where the appliance sits outside the data path, as a metadata server does.
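The difference between the two appliance placements can be sketched in a few lines. This is a toy model under stated assumptions, not a real product's behavior: the `StorageDevice`, `InBandAppliance`, and `OutOfBandMetadataServer` classes and the `placement` dictionary are hypothetical names invented for this illustration.

```python
class StorageDevice:
    """Stand-in for a physical storage system (hypothetical)."""

    def __init__(self, name):
        self.name = name
        self.blobs = {}

    def read(self, key):
        return self.blobs[key]


class InBandAppliance:
    """Sits in the data path: every byte flows through the appliance."""

    def __init__(self, placement):
        self.placement = placement      # logical name -> StorageDevice
        self.bytes_forwarded = 0

    def read(self, name):
        data = self.placement[name].read(name)
        self.bytes_forwarded += len(data)
        return data


class OutOfBandMetadataServer:
    """Sits outside the data path: it only answers 'where is it?'."""

    def __init__(self, placement):
        self.placement = placement
        self.lookups = 0

    def locate(self, name):
        self.lookups += 1
        return self.placement[name]


disk = StorageDevice("array-a")
disk.blobs["clip.mxf"] = b"\x00" * 1024
placement = {"clip.mxf": disk}

# In-band: the server asks the appliance for the data itself.
in_band = InBandAppliance(placement)
data_a = in_band.read("clip.mxf")

# Out-of-band: the server asks where the data is, then reads it directly.
out_of_band = OutOfBandMetadataServer(placement)
device = out_of_band.locate("clip.mxf")
data_b = device.read("clip.mxf")
```

Both paths return the same bytes. In this sketch the in-band appliance forwards all of the payload, while the out-of-band server handles only a lookup and the payload moves directly between the server and the storage device.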

Regardless of which level of the storage network (the server, network or the actual storage device) the virtualization entity is located on, there are two basic types of virtualization—one at the block level and one at the file level.

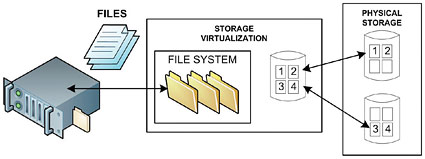

Fig. 3: File-level virtualization: the virtualization entity provides virtual storage to the operating system or the applications (servers) by means of files and directories.

At the block level (Fig. 2), storage capacity is made available to the operating system (OS) or the applications (APPS) in the form of virtual disks, and both OS and APPS then work with the blocks of that virtual disk. By contrast, at the file level (Fig. 3) the virtualization entity provides virtual storage to the OS and APPS in the form of files and directories.
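A minimal sketch of the block-level case may help: a virtual disk that concatenates two physical disks and translates each logical block address into a device and a physical block. The `PhysicalDisk` and `VirtualDisk` classes, the device names, and the 512-byte block size are assumptions made for illustration; real virtualization entities use more elaborate mappings such as striping or mirroring.

```python
class PhysicalDisk:
    """In-memory stand-in for a physical disk (hypothetical)."""

    def __init__(self, name, num_blocks, block_size=512):
        self.name = name
        self.block_size = block_size
        self.blocks = [bytes(block_size) for _ in range(num_blocks)]


class VirtualDisk:
    """Concatenates several physical disks into one logical block range."""

    def __init__(self, disks):
        self.disks = disks

    @property
    def num_blocks(self):
        return sum(len(d.blocks) for d in self.disks)

    def _map(self, lba):
        # Translate a logical block address to (disk, physical block index).
        if lba < 0:
            raise IndexError("logical block address out of range")
        for disk in self.disks:
            if lba < len(disk.blocks):
                return disk, lba
            lba -= len(disk.blocks)
        raise IndexError("logical block address out of range")

    def write_block(self, lba, data):
        disk, pba = self._map(lba)
        if len(data) != disk.block_size:
            raise ValueError("data must be exactly one block")
        disk.blocks[pba] = data

    def read_block(self, lba):
        disk, pba = self._map(lba)
        return disk.blocks[pba]


legacy = PhysicalDisk("legacy-array", num_blocks=4)
newer = PhysicalDisk("new-array", num_blocks=8)
vdisk = VirtualDisk([legacy, newer])

# Logical block 5 lies past the 4 blocks of the legacy array,
# so it lands on block 1 of the newer array.
vdisk.write_block(5, b"\x01" * 512)
```

The OS or application addresses only the 12 logical blocks of `vdisk`; which physical array holds a given block is hidden behind `_map`. A file-level entity would expose files and directories instead of block addresses.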

Storage virtualization can be applied to many operational models. It can reduce costs, space and cooling requirements, and it can extend the life of legacy storage systems that would otherwise have been displaced as obsolete. When considering that next upgrade or when looking toward expanding a storage model using dedicated storage platforms (scale-out NAS, for example), also take a look at virtualization to see how it might apply to your working environment.

Karl Paulsen is a technologist and consultant to the digital media and entertainment industry. Karl recently joined Diversified Systems as a Senior Engineer. He is a SMPTE Fellow, member of IEEE, SBE Life Member and Certified Professional Broadcast Engineer. Contact him at kpaulsen@divsystems.com.

Karl Paulsen recently retired as a CTO and has regularly contributed to TV Tech on topics related to media, networking, workflow, cloud and systemization for the media and entertainment industry. He is a SMPTE Fellow with more than 50 years of engineering and managerial experience in commercial TV and radio broadcasting. For over 25 years he has written on featured topics in TV Tech magazine—penning the magazine’s “Storage and Media Technologies” and “Cloudspotter’s Journal” columns.