Practicalities of Object Storage

In my last column, we looked at the fundamentals of object storage as it applies to the media industry and to archiving. Object stores have become a principal solution for long-term data preservation, especially in cloud-based environments, whether on-prem or private clouds. Object storage is also used heavily in public cloud storage solutions, especially when the data is geographically dispersed for protection and accessibility purposes.

Furthermore, where tiered storage has been a trend for more than a decade, object storage brings new perspectives—particularly when addressing disk replacement management. Object storage may now be overshadowing even some of the earlier approaches to tiered storage.

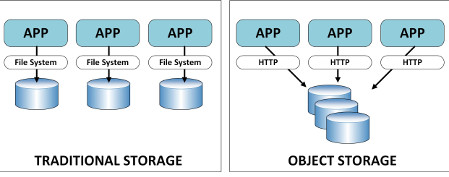

A comparison of how traditional versus object storage is implemented

Tiered stores were, for many years, an integrated approach in which different drives and physical media were arranged so that high-performance drives (those at the top tier) served applications needing immediate access, while lower-performing storage solutions (e.g., tape) were used for longer-term storage.

HIGH-PERFORMANCE, TOP-TIER STORAGE

Applications such as post-production video editing or graphics compositing require data to be delivered extremely fast and with minimal latency. Often these top-tier storage devices were Fibre Channel based (i.e., both the drives and the network switch configurations are Fibre Channel). These systems would be coupled with specific file-system controllers (aka “metadata” servers), which are designed to manage the high-volume/high-throughput transfers necessary for very rapid data movement to and from the base system application servers, local workstations or the compositing, rendering or effects servers.

The mid-tier storage was often referred to as “slow-disk” or “near-line” storage. The content data held at this level were usually the completed works (finished edits), as well as complementary versions of the first master completed clips, stories or commercials. In addition, various “b-roll” pieces might be held in the same near-line storage since fast accessibility was not necessarily needed during content approval periods or lulls during the post-production periods. Often this storage tier was also used as the holding location for content which would ultimately be readied for archive or much deeper, long-term storage.


The drives used at this tier need not be expensive, top-tier (Fibre Channel) drives. Even the types of drives used in consumer-level PCs or laptops would suffice for this level of storage.

ARCHIVE NEEDS AND CONSIDERATIONS

The lowest-tier storage was usually a linear tape storage solution or possibly cloud storage. If stored to tape, sometimes the workflows would require that a local copy be retained in a tape library, and that an off-site copy (e.g., an “Iron Mountain” version) be created and physically shipped to another location.

It is this lowest tier, the archive tier, which is steadily being replaced by disk-based object storage. The reasons for using object storage vary: how much the archive provider has already invested in tape-based storage; how the organization feels about shifting to newer technologies (i.e., disk storage either on-prem or offsite); or whether the organization feels tape storage is too costly, or has never used tape-based library solutions and doesn’t want to pay for private or public cloud archive.

In the latter case of cloud storage costs, the rationale will depend on which form of cloud you choose and on whether the timeline for data restoration (recovery) can be predicted. These factors change the equation in terms of both the cost of data recovery (i.e., getting the data back to your own mid-tier storage) and how quickly you might need that data, since some archives use storage that cannot easily be called up and returned to the user.

Deep archives are meant to take data in at very low cost and charge you much more to return that data. How quickly you want the data recovered and how much data you need back can both seriously impact the costs of storing (and recovering) that data.
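To make that trade-off concrete, here is a minimal sketch of the cost arithmetic. The `ArchivePricing` class, the speed-class names and every rate are hypothetical placeholders chosen only to show the shape of the calculation; they are not drawn from any provider's price sheet.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ArchivePricing:
    """Hypothetical price sheet; every rate is an assumption, not a vendor quote."""
    store_per_tb_month: float  # cost to keep 1 TB for one month
    retrieve_per_tb: dict      # retrieval speed class -> cost to return 1 TB


def archive_cost(pricing, stored_tb, months, retrieved_tb, speed):
    """Total cost = storage over time + retrieval at the chosen speed class."""
    if speed not in pricing.retrieve_per_tb:
        raise ValueError(f"unknown retrieval speed: {speed!r}")
    storage = pricing.store_per_tb_month * stored_tb * months
    retrieval = pricing.retrieve_per_tb[speed] * retrieved_tb
    return storage + retrieval


# Placeholder rates: cheap to store, increasingly expensive to recover quickly.
example = ArchivePricing(
    store_per_tb_month=1.0,
    retrieve_per_tb={"bulk": 5.0, "standard": 20.0, "expedited": 100.0},
)

# Same archive, same 10 TB recovered; only the urgency differs.
slow = archive_cost(example, stored_tb=100, months=12, retrieved_tb=10, speed="bulk")
fast = archive_cost(example, stored_tb=100, months=12, retrieved_tb=10, speed="expedited")
```

With these placeholder rates the storage portion is identical in both cases, so the entire difference between `slow` and `fast` comes from how urgently the data is needed back.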

FAST DATA RECOVERY IMPACTS

Some organizations simply don’t know when they’ll want the data back or how fast (e.g., disaster recovery versus occasional retrieval). For this reason alone, building an archive which allows for the rapid return of the data (whether from offsite or onsite tape or from the cloud) becomes a business-level trigger to consider object storage and to exclude tape or cloud, except in a few situations.

Another capability inherent in object storage-based solutions is the cataloging methodologies utilized in the store itself. Metadata is key to search and paramount to getting just the right data back from any store. In most non-object cases, the metadata is held in an external media asset management system, which needs its own database, application servers, and integration into the entire storage and workflow solution set.

When the key metadata can be held within the object (store) itself and be searchable or modifiable by an external solution (that is, the object’s indexer), the need for an archive-centric MAM changes. Objects are essentially “wrappers” or “containers” that hold both the content data and the rich metadata together as a single entity, so only keywords and locations need to be addressed by these external MAM-like applications. This reduces hardware and software overhead and allows the systems to be rapidly accessible. In many cases the object store solution will take less physical space (a smaller footprint) than the tape library and cassette storage needed in more traditional archive applications.
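The idea of an object as a container for both content and metadata can be sketched in a few lines. This is a toy in-memory model, not any vendor's API; the `StoredObject` and `ObjectStore` names, the clip IDs and the metadata fields are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class StoredObject:
    """One object: the content bytes plus the metadata that describes them."""
    object_id: str
    data: bytes
    metadata: dict = field(default_factory=dict)


class ObjectStore:
    """Toy in-memory store; a real system would persist and distribute objects."""

    def __init__(self):
        self._objects = {}

    def put(self, object_id, data, **metadata):
        self._objects[object_id] = StoredObject(object_id, data, dict(metadata))

    def get(self, object_id):
        return self._objects[object_id]

    def update_metadata(self, object_id, **changes):
        # Metadata can be modified without rewriting the content itself.
        self._objects[object_id].metadata.update(changes)

    def search(self, **criteria):
        """Return the IDs of objects whose metadata matches every criterion."""
        return sorted(
            oid for oid, obj in self._objects.items()
            if all(obj.metadata.get(k) == v for k, v in criteria.items())
        )


store = ObjectStore()
store.put("clip-001", b"<video essence>", title="News open", kind="master", year=2019)
store.put("clip-002", b"<video essence>", title="City hall", kind="b-roll", year=2019)
store.update_metadata("clip-002", approved=True)

b_roll = store.search(kind="b-roll")  # ["clip-002"]
from_2019 = store.search(year=2019)   # ["clip-001", "clip-002"]
```

Because the search runs against metadata held with each object, an external MAM-like application in this sketch would only need to supply the keywords and keep track of the returned IDs.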

LONG-TERM COSTS AND BUDGETS

While this point is arguable depending on when, what or how much the user has invested in a tape solution, the long-term costs of object storage, once implemented, can be lower than those of tape. Why? Because tape has a finite life (both physically and technically) that mandates the data be migrated from older formats to more current, higher-density formats. If you have a large library, this means the older tapes (which hold far less data) will need to be replaced with the most current solutions on a recurring basis. This replacement and renewal cycle may come every few years. Both the physical media (tapes) and the tape-drive mechanisms will need replacement to leverage the newer, higher-density/higher-capacity tape storage solution. And don’t forget, if the physical media is damaged, the data is lost forever unless a second copy is held elsewhere.

In object storage solutions, multiple drives protect the data (in similar fashion to RAID) by spreading it across many sets of drives. In some object store chassis, which may, for example, consist of 20 to 40+ drives in each group set, as many as 4 to 10 drives can fail and all the data can still be recovered. In large data centers (or clouds), disk drives are seldom replaced since the labor to remove and replace a single drive, per instance of failure, cannot be justified. When an object group gets to the point where the risk to data recovery becomes too high (say, 6 of the 8 drives that a 20-drive group can afford to lose have failed), a decision can be made to update that chassis, or maybe retire the chassis altogether.
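The retire-or-refresh decision can be expressed as a simple margin check. The sketch below assumes one reading of the example above: a 20-drive group that can tolerate 8 failures. The `DriveGroup` class and the `min_margin` policy threshold are hypothetical and are not taken from any particular product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DriveGroup:
    total_drives: int        # drives in the group set
    tolerated_failures: int  # failures the group can absorb with data still recoverable
    failed_drives: int = 0

    def data_recoverable(self):
        return self.failed_drives <= self.tolerated_failures

    def remaining_margin(self):
        """How many more drives can fail before the data can no longer be recovered."""
        return self.tolerated_failures - self.failed_drives

    def status(self, min_margin=2):
        """min_margin is a hypothetical policy threshold, not a standard value."""
        if not self.data_recoverable():
            return "data loss"
        if self.remaining_margin() <= min_margin:
            return "refresh or retire chassis"
        return "healthy"


# Assumed reading of the example: 20 drives, 8 tolerable failures.
early = DriveGroup(total_drives=20, tolerated_failures=8, failed_drives=1)
worn = DriveGroup(total_drives=20, tolerated_failures=8, failed_drives=6)

early_status = early.status()  # "healthy": seven more failures can be absorbed
worn_status = worn.status()    # "refresh or retire chassis": margin is down to two
```

The point of the sketch is that failed drives are simply counted rather than swapped one at a time; action is taken on the whole group only once the remaining margin drops to the chosen threshold.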

MAKING BEST SENSE

Object storage makes sense when rapid access to data is necessary; when the risk of not being able to recover the data quickly is unacceptable for your operations; or if a cloud solution is not financially beneficial (coupled with either of the other two reasons). And since object storage systems allow you to replace older, smaller drives (e.g., 2 or 3 TB drives) with higher-capacity drives (mixing drives of multiple sizes in the same group set or chassis), the migration issues associated with tape vanish.

Next time you need to look at archiving content without the complications of tape or cloud, consider how an object-based system might fit into your organization’s workflows and budget.

Karl Paulsen is CTO at Diversified (www.diversifiedus.com) and a SMPTE Fellow. Read more about this and other storage topics in his book “Moving Media Storage Technologies.” Contact Karl at kpaulsen@diversifiedus.com.

Karl Paulsen recently retired as a CTO and has regularly contributed to TV Tech on topics related to media, networking, workflow, cloud and systemization for the media and entertainment industry. He is a SMPTE Fellow with more than 50 years of engineering and managerial experience in commercial TV and radio broadcasting. For over 25 years he has written on featured topics in TV Tech magazine—penning the magazine’s “Storage and Media Technologies” and “Cloudspotter’s Journal” columns.