This month I’m continuing my coverage of the 2016 IEEE Broadcast Symposium, with a focus on presentations about ATSC 3.0 and the transition from ATSC 1.0 to ATSC 3.0. As expected, ATSC 3.0 received much attention during the conference, both in the presentations and in discussions between the sessions.

In the tutorial session “The Practical Side of ATSC 3.0,” Mark Earnshaw, senior engineer at Coherent Logix, presented an excellent overview of the ATSC 3.0 Physical Layer. Earnshaw knows the ATSC 3.0 system inside and out, and I strongly encourage readers to take advantage of any opportunity they might have to catch one of his presentations. This tutorial would provide an excellent basis for a webinar or, more likely, a series of webinars. The presentation is in the IEEE Broadcast Symposium Proceedings.

‘WHY ATSC 3.0?’

If you’re not yet convinced there is a need for ATSC 3.0, the presentation “Why ATSC 3.0?” by Dave Siegler, vice president of technical operations at Cox Media Group, provided some arguments for making the transition. He outlined what focus group studies show consumers want, but today’s TV can’t deliver: better pictures, better sound quality with more tracks and customization, targeted advertisements, enhanced emergency alerts, and coverage on mobile devices.

Siegler also pointed to business trends, including cord-cutting/cord-shaving, an increase in over-the-air viewing and increased usage of over-the-top services. He showed how, with ATSC 3.0, broadcasters can meet consumer wants and take advantage of the business trends. Why ATSC 3.0? “Opportunity!”

While I don’t focus on content creation in this column, I know many readers are involved with it. Skip Pizzi and others at NAB are leaders in the effort to define the framework required for delivery of ATSC 3.0 content over IP and to enable new features such as localized insertion of ads, hybrid broadcast/internet services, interactivity and more. If you haven’t been following the development of ATSC 3.0 upstream of the transmitter, try to secure a copy of Pizzi’s presentation “Content Creation for ATSC 3.0” from IEEE BTS or NAB.

Rich Chernock, CTO at Triveni Digital and chair of ATSC Technology Group 3, presented an update on the ATSC 3.0 status, noting some products should be available in 2017 with a commercial launch in 2018–2020.

Chernock cautioned that to get around the “chicken and egg” scenario—no consumer devices because no content being broadcast; no content being broadcast because of no consumer devices—broadcasters should start putting ATSC 3.0 on the air.

Many of the emails I receive about ATSC 3.0 from viewers and broadcasters express concern over loss of ATSC 1.0 service. In the petition for rulemaking to allow broadcast of ATSC 3.0, the proponents (broadcasters, CTA, NAB) committed to keeping stations available in ATSC 1.0 format during the transition. Without new spectrum, this will require channel-sharing for both ATSC 1.0 and ATSC 3.0 content.

OPTIMIZING ATSC 1.0

Several presentations in Friday afternoon’s “Next Gen TV” session examined the practical side of the transition to ATSC 3.0. I found the presentation “Optimisation of ATSC 1.0, an essential tool for the ATSC Transition” by Guy Bouchard, CBC senior manager for new broadcast technologies, particularly interesting. It covered a topic that will be essential not only for a successful ATSC 3.0 transition, but also for any station sharing an ATSC 1.0 channel.

Engineers who have had to add more channels to their 19.392 Mbps ATSC 1.0 stream have probably employed many of the techniques Bouchard described, including statistical multiplexing, minimizing overhead and PSIP, and potentially reducing resolution. Bouchard explained the tradeoffs between these techniques and their impact on TV receivers, and offered some suggestions for optimizing ATSC 1.0 bandwidth.

Statistical multiplexing (“stat-mux”) can cause problems for some receivers. Bouchard said some legacy TV sets that worked well with video streams with a minimum rate of 5 Mbps, an average rate of 7 Mbps and a maximum rate of 9 Mbps showed glitches when the excursion was higher. Recent sets had no issue with these streams. Obviously, using such a low excursion in a stat-mux pool leaves little room for adding additional streams.
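To put rough numbers on that last point, here is a minimal Python sketch that counts how many streams fit in the 19.392 Mbps ATSC 1.0 stream at the 7 Mbps average rate mentioned above. It is a simplification of my own, not something from Bouchard's presentation: it ignores audio, PSIP and the null packet reserve, and the function name is hypothetical.

```python
ATSC1_STREAM_MBPS = 19.392  # ATSC 1.0 transport stream rate
AVG_MBPS = 7.0              # average video rate legacy sets handled well


def streams_that_fit(pool_mbps, avg_mbps):
    """Count whole streams that fit in the pool at their average rate.

    Simplification: audio, PSIP and null packets are ignored here.
    """
    return int(pool_mbps // avg_mbps)


count = streams_that_fit(ATSC1_STREAM_MBPS, AVG_MBPS)
leftover = round(ATSC1_STREAM_MBPS - count * AVG_MBPS, 3)
print(f"{count} streams at {AVG_MBPS} Mbps average, {leftover} Mbps left over")
```

Even before overhead is subtracted, what is left over after two such streams is less than the average rate of a third.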

Reducing the null packet reserve is an important part of optimization. It requires making sure encoders don’t occasionally output higher bit rates than they should. This is primarily a problem with older encoders. After all optimizations and testing on multiple receivers, Bouchard was able to reduce null packet allocation from 0.5 Mbps to 0.1 Mbps.

This matches my experience. I’ve found 125 kbps (+/–25 kbps) of null packets works fine with encoders made in the last three years, good PSIP rate control and statistical multiplexing.
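The effect of trimming the null packet reserve can be sketched with simple arithmetic. The 19.392 Mbps stream rate and the 0.5 Mbps and 0.1 Mbps reserves come from the figures above; the PSIP and overhead allocation (`psip_overhead_mbps`) is a hypothetical placeholder, not a number from Bouchard's presentation.

```python
ATSC1_STREAM_MBPS = 19.392  # ATSC 1.0 transport stream rate


def service_pool_mbps(null_reserve_mbps, psip_overhead_mbps=0.3):
    """Return the bit rate left for video and audio services.

    psip_overhead_mbps is a hypothetical placeholder for PSIP and other
    overhead; substitute the value measured on your own multiplex.
    """
    pool = ATSC1_STREAM_MBPS - null_reserve_mbps - psip_overhead_mbps
    if pool < 0:
        raise ValueError("reserve and overhead exceed the stream rate")
    return round(pool, 3)


before = service_pool_mbps(0.5)  # null packet reserve before optimization
after = service_pool_mbps(0.1)   # null packet reserve after optimization
gained = round(after - before, 3)
print(f"Service pool: {before} -> {after} Mbps (gain {gained} Mbps)")
```

Whatever the overhead figure, the gain from the reduced reserve is the same 0.4 Mbps, which is a meaningful amount when two stations are squeezing into one channel.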

Stations’ TSIDs could cause channel-sharing problems. Each station has a unique TSID (Transport Stream Identifier) assigned by the FCC.

Bouchard found some TV sets allow multiple occurrences of the same TSID, while others disallow a second occurrence. With several stations on the same transport stream, there will be one TSID in the PAT, but several TSIDs in the VCT. Bouchard said most receivers took the change well, but some were confused and some even had to be reset to factory settings.

Bouchard’s presentation contained useful tips and techniques for any stations considering channel-sharing as part of the ATSC 1.0 to ATSC 3.0 transition or as a result of the spectrum auction.

COVERAGE AREA

Channel-sharing on ATSC 3.0 involves more than dividing up bits, as the number of bits will impact coverage. The presentation “Coverage of Various ATSC 3.0 Transmission Modes” by Bill Meintel and Dennis Wallace at Meintel, Sgrignoli and Wallace described the many options and range of performance available for ATSC 3.0 transmission. They also provided practical examples showing population coverage with different service combinations compared with ATSC 1.0.

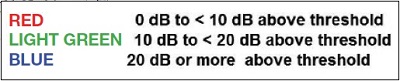

Most ATSC 1.0 coverage studies use the FCC outdoor planning factors based on a directional outdoor antenna mounted 30 feet above ground. Determining service within the coverage area is more difficult, more so when the different thresholds for different ATSC 3.0 modes are considered. In these situations, a field strength plot isn’t particularly useful. I like the paper’s approach: show coverage based on the difference between the field strength and the threshold for reception. The paper used three levels: 20 dB or more above threshold, 10–20 dB above threshold and 0–10 dB above threshold.
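The three-level idea is easy to express in code. The Python sketch below is my own illustration, not the authors' software: the function name is hypothetical, and how a location sitting exactly on a 10 dB or 20 dB boundary is assigned is an assumption, since the levels as described don't settle it.

```python
def margin_band(field_strength_db, threshold_db):
    """Classify a location by its margin above the reception threshold.

    Both arguments must use the same unit (for example dBuV/m).
    Boundary handling (exactly 10 or 20 dB) is an assumption.
    """
    margin = field_strength_db - threshold_db
    if margin >= 20:
        return "20 dB or more above threshold"
    if margin >= 10:
        return "10-20 dB above threshold"
    if margin >= 0:
        return "0-10 dB above threshold"
    return "below threshold"


# Hypothetical field strengths checked against a hypothetical threshold.
for strength in (65.0, 53.0, 44.0, 38.0):
    print(strength, "->", margin_band(strength, threshold_db=41.0))
```

Because each ATSC 3.0 mode has its own threshold, the same field strength can land in a different band for each service, which is why a margin map says more than a plain field strength plot.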

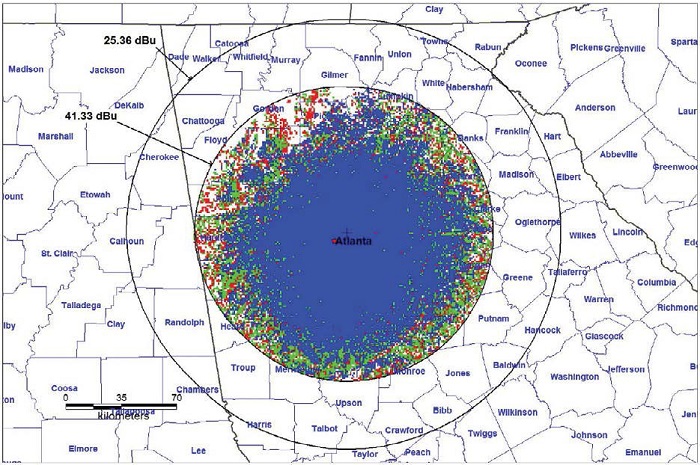

Fig. 1: PLP1 16.5 dB SNR 14.92 Mbps enhancement layer LDM

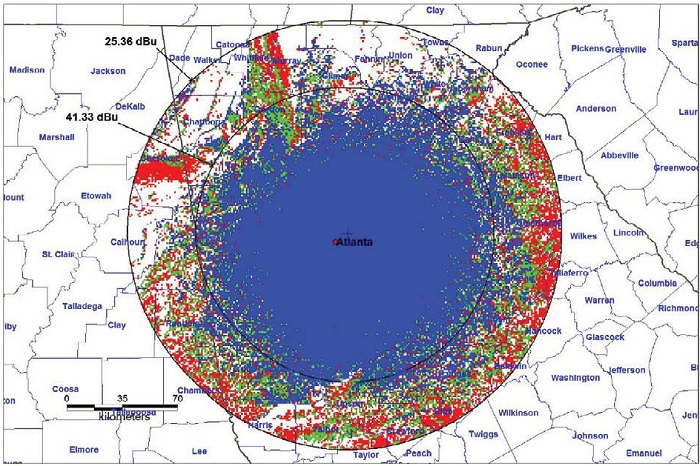

Fig. 2: PLP2 0.53 dB SNR 3.1 Mbps core layer LDM

I’ll describe one of the scenarios presented, which used Layered Division Multiplexing (LDM) and represents a likely early ATSC 3.0 transition scenario. The enhancement layer had one PLP providing ~14.9 Mbps of capacity and an AWGN threshold of 16.5 dB. With HEVC, it should be capable of carrying three or four HD+ services. Coverage is mapped in Fig. 1. The robust core layer had one PLP providing ~3.1 Mbps at an AWGN threshold of 0.5 dB, enough for two to four robust/mobile SD services plus audio. Robust core coverage is shown on the map in Fig. 2.
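For a feel of what those capacities mean per service, here is a rough Python sketch that splits each PLP evenly among its services. The even split is my simplification: a real multiplex would use statistical multiplexing and set aside bits for audio and signaling, and the function name is hypothetical.

```python
def per_service_mbps(plp_capacity_mbps, service_count):
    """Evenly divide a PLP's capacity among its services (a simplification)."""
    if service_count < 1:
        raise ValueError("service_count must be at least 1")
    return round(plp_capacity_mbps / service_count, 3)


# Capacities and service counts taken from the LDM scenario above.
for label, capacity, counts in (
    ("Enhancement layer", 14.9, (3, 4)),
    ("Core layer", 3.1, (2, 4)),
):
    for n in counts:
        rate = per_service_mbps(capacity, n)
        print(f"{label}: {n} services at about {rate} Mbps each")
```

The enhancement layer works out to roughly 3.7 to 5 Mbps per HD+ service, and the core layer to roughly 0.8 to 1.6 Mbps per robust service.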

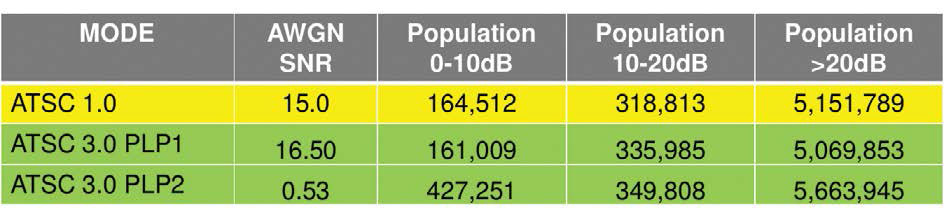

Table 1 shows the predicted service comparison for this configuration. Note that, although the loss is small, not all of the population predicted to receive ATSC 1.0 service will be able to receive ATSC 3.0 HD+ service. However, a significantly larger population will get the robust SD and audio ATSC 3.0 service than the ATSC 1.0 service. Thanks to Bill Meintel and Meintel, Sgrignoli and Wallace for letting me use their slides.

Table 1: Predicted service comparison fixed and mobile LDM scenario

If you are interested in copies of the presentations, contact the authors or IEEE Broadcast Technology Society (http://bts.ieee.org/about-bts/contact-bts.html) to check on availability. If you are not a member of IEEE BTS, consider joining!

I welcome your comments and questions. Email me at dlung@transmitter.com.

Doug Lung is one of America's foremost authorities on broadcast RF technology. As vice president of Broadcast Technology for NBCUniversal Local, H. Douglas Lung leads NBC and Telemundo-owned stations’ RF and transmission affairs, including microwave, radars, satellite uplinks, and FCC technical filings. Beginning his career in 1976 at KSCI in Los Angeles, Lung has nearly 50 years of experience in broadcast television engineering. Beginning in 1985, he led the engineering department for what was to become the Telemundo network and station group, assisting in the design, construction and installation of the company’s broadcast and cable facilities. Other projects include work on the launch of Hawaii’s first UHF TV station, the rollout and testing of the ATSC mobile-handheld standard, and software development related to the incentive auction TV spectrum repack. A longtime columnist for TV Technology, Doug is also a regular contributor to IEEE Broadcast Technology. He is the recipient of the 2023 NAB Television Engineering Award. He also received a Tech Leadership Award from TV Tech publisher Future plc in 2021 and is a member of the IEEE Broadcast Technology Society and the Society of Broadcast Engineers.