Do We Need More Audio? A Primer on Immersive (3D) Sound

So you are “hearing” about new audio codecs that promise better bit efficiency along with high quality, and the ability to deliver the “true” cinema sound experience in the home. You are probably thinking, “Didn’t AC-3 5.1 audio do all that?” It has certainly been in use for more than 15 years in ATSC 1.0, so why add a new audio codec in ATSC 3.0? Before answering that question, I thought I’d begin by explaining what is behind this new audio experience.

IMMERSIVE AUDIO

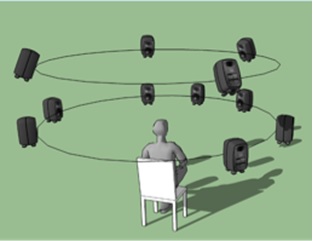

While 5.1 audio envelops the listener, its sound field has only a horizontal dimension (Fig. 1). This can be compelling, but the limitations of 5.1 have meant only a “feeling” of being there at the event or in the movie. Because of stereo compatibility issues (including matrix approaches), the surround channels are not used to their full extent. So although 5.1 delivers a richer sound experience than stereo, it has not been able to deliver a sound program with immersive spatial dimensionality.

Fig. 1 (Courtesy, Fraunhofer IIS)

Immersive audio goes beyond 5.1 surround sound and uses more channels to create the sensation of height (sound from above). Where this gets interesting is the question of how many more channels. Even more interesting is an approach called “Higher Order Ambisonics” (HOA), a format that does not rely on channel assignments but instead represents a sound field as a set of component signals (spherical-harmonic coefficients) that can be decoded to whatever speaker configuration is desired.

But before we leap ahead to HOA, let’s get some definitions out of the way. First, you may have heard about audio objects. Maybe you have experienced them in a theater showing a movie mixed for Dolby Atmos or Auro-3D sound. Similar to stereoscopic 3D movies, 3D movie sound is reproduced so that sound effects can be placed at specific, localized positions relative to each theater patron. Audio objects may be static or dynamic, and dynamic objects can be panned around the theater space. One can imagine the creative effects possible with such a system.

AUDIO OBJECTS FOR TV


Audio objects can be much more than special effects, however. They can be elements of the sound program that the listener controls, such as alternate language tracks, coaches’ microphones, or race-car radio traffic. As in the theater, these objects can be static or dynamic. The interesting feature, from a viewer’s perspective, is the ability to choose as well as modify the base audio program, adding or removing objects and controlling their level or spatial position, which enables interactive audio features in a television program.

You may be thinking, “Doesn’t our current 5.1/AC-3 system provide for multiple languages as well as descriptive video tracks?” Yes, but with AC-3 these alternative elements have to be embedded within a separate audio program, so the viewer can choose to listen to the Spanish version, but only as a pre-mixed stereo program, not full 5.1. Likewise, a visually impaired viewer who wants the descriptive track has to settle for a mono mix-down of the 5.1 program.

Audio objects are coded separately from the primary program mix. They can be rendered (yes, rendered, much as graphics are rendered for your TV’s on-screen menu/guide) along with the full program mix and placed in the appropriate speaker channels, at a level the viewer can adjust.
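To make the rendering idea concrete, here is a minimal Python sketch of mixing one mono audio object into a stereo bed at a viewer-chosen level and position. The function names, the stereo-only bed and the constant-power (sine/cosine) pan law are illustrative assumptions, not how the MPEG-H or AC-4 renderers are specified; a real renderer works from the object’s position metadata and the listener’s full speaker layout.

```python
import math
from typing import List, Tuple


def pan_gains(pan: float) -> Tuple[float, float]:
    """Constant-power pan law: pan=-1 is full left, 0 is center, +1 is full right."""
    angle = (pan + 1.0) * math.pi / 4.0  # map [-1, 1] onto [0, pi/2]
    return math.cos(angle), math.sin(angle)


def render_object(
    bed_left: List[float],
    bed_right: List[float],
    obj: List[float],
    gain_db: float = 0.0,
    pan: float = 0.0,
) -> Tuple[List[float], List[float]]:
    """Mix a mono object (hypothetical, e.g. a dialogue track) into a stereo bed.

    All three sample lists are assumed to be the same length; gain_db is the
    viewer's level adjustment and pan is the viewer's chosen position.
    """
    g = 10.0 ** (gain_db / 20.0)  # viewer gain converted from dB to linear
    gl, gr = pan_gains(pan)
    out_left = [b + g * gl * s for b, s in zip(bed_left, obj)]
    out_right = [b + g * gr * s for b, s in zip(bed_right, obj)]
    return out_left, out_right
```

The constant-power law keeps the object’s perceived loudness roughly steady as the viewer moves it; calling `render_object(bed_l, bed_r, dialog, gain_db=6.0)` would raise a centered dialogue object by 6 dB without touching the rest of the mix.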

SPEAKER CONFIGURATIONS

I mentioned above that immersive audio expands the number of speaker channels to provide a truly immersive experience. How many? Well, NHK, in its 8K UHDTV system, defines the sound program as 22.2 channels.

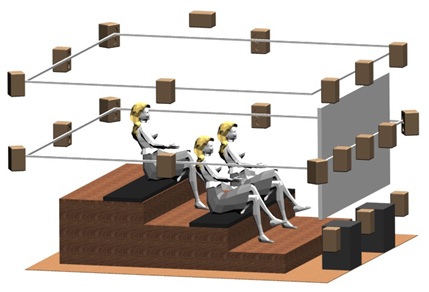

Fig. 2 (Courtesy, Fraunhofer IIS)

The speakers are placed at three levels (high, mid, and low) and include two subwoofers, the “.2” in the designation (Fig. 2). This can provide theatrical realism, but it is certainly not a living room or kitchen type of experience for TV viewers. More practical are variations on 5.1 and 7.1 that add four overhead speakers, giving immersive presentations in 9.1 or 11.1 speaker configurations (often written 5.1.4 and 7.1.4). The decoder/renderer in these new audio codecs can create downmix versions of the full immersive program to fit conventional 5.1 or stereo (and yes, mono, too) speaker arrangements. If programmed with the actual speaker locations, the renderer can also compensate for non-ideal speaker placement. And there are other novel solutions, such as the 3D soundbar (an advanced soundbar that can create the effect of surround plus height speakers) and “up-firing” speakers that use the ceiling as an acoustic reflector.
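The downmix step can be sketched with fixed coefficients. This Python example folds 5.1 into stereo using the familiar ITU-R BS.775-style gains (center and surrounds at -3 dB, LFE dropped). Those coefficients and the channel names are assumptions for illustration; an actual decoder may take its downmix coefficients from program metadata and handle far larger layouts.

```python
import math
from typing import Dict, List, Tuple

# Assumed -3 dB (1/sqrt(2)) gain for center and surrounds, as in the widely
# used ITU-R BS.775 stereo downmix. The LFE channel is simply discarded here.
MINUS_3DB = 1.0 / math.sqrt(2.0)


def downmix_51_to_stereo(ch: Dict[str, List[float]]) -> Tuple[List[float], List[float]]:
    """Fold a 5.1 program into stereo.

    ch maps 'L', 'R', 'C', 'LFE', 'Ls', 'Rs' to equal-length sample lists.
    """
    n = len(ch["L"])
    lo = [ch["L"][i] + MINUS_3DB * (ch["C"][i] + ch["Ls"][i]) for i in range(n)]
    ro = [ch["R"][i] + MINUS_3DB * (ch["C"][i] + ch["Rs"][i]) for i in range(n)]
    return lo, ro
```

With full-scale signals on L, C and Ls, the left output reaches about 2.41, so a practical implementation would add headroom or limiting before playback.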

HOA

Higher Order Ambisonics is a technique used to capture a sound field without concern for the final speaker configuration. The precision with which the sound field can be captured depends on the order of the HOA representation. For order N, the number of audio signals required to convey the sound field is (N+1)^2. Typically the order is 4, 5 or 6, resulting in 25, 36 or 49 HOA signals. Within the MPEG-H codec, these signals are further reduced (usually to six or eight signals) by a very low-latency linear spatial compression stage ahead of the core MPEG-H audio codec (USAC, Unified Speech and Audio Coding). The decoder re-expands the spatially compressed signals and formats them for the desired speaker configuration.
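The (N+1)^2 relationship is easy to check, and a first-order example shows what “not relying on channel assignments” means in practice: a source’s direction becomes a set of component gains rather than speaker feeds. This Python sketch assumes the AmbiX convention (ACN channel order, SN3D normalization, azimuth measured counterclockwise from the front); it does not model MPEG-H’s higher-order encoding or its spatial compression.

```python
import math
from typing import List


def hoa_signal_count(order: int) -> int:
    """Signals needed for a full-sphere HOA representation of order N: (N+1)^2."""
    return (order + 1) ** 2


def foa_encode_gains(azimuth_deg: float, elevation_deg: float) -> List[float]:
    """First-order encoding gains for a point source (ACN order, SN3D normalization)."""
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    w = 1.0                          # ACN 0: omnidirectional component
    y = math.sin(az) * math.cos(el)  # ACN 1: left/right figure-of-eight
    z = math.sin(el)                 # ACN 2: up/down figure-of-eight
    x = math.cos(az) * math.cos(el)  # ACN 3: front/back figure-of-eight
    return [w, y, z, x]
```

Orders 4, 5 and 6 give 25, 36 and 49 signals, matching the figures above. A source hard left (azimuth 90 degrees) lands entirely in the omnidirectional and left/right components, and only a decoder that knows the speaker layout turns those components into speaker feeds.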

ADVANCED AUDIO CODECS

AC-3 has been around since the early ’90s, and a lot has happened in audio since then. MP3 caused a revolution in the music world, not because of better quality, but because it reduced audio file sizes to the point where portable players, at first with hard drives and then with solid-state memory, could hold thousands of songs. The follow-up to MP3 was the Advanced Audio Coding (AAC) family. There are many variants, some with very high quality, some with low latency, but all very efficient at reducing the bit rate of the audio signal.
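The file-size argument is simple arithmetic. This Python sketch compares CD-quality PCM with an MP3 stream at 128 kbps; that bit rate is an assumed typical value, not a figure from this article.

```python
def pcm_bitrate_kbps(sample_rate_hz: int, bits_per_sample: int, channels: int) -> float:
    """Uncompressed PCM bit rate in kilobits per second."""
    return sample_rate_hz * bits_per_sample * channels / 1000.0


def minutes_per_gigabyte(bitrate_kbps: float) -> float:
    """Approximate minutes of audio that fit in 1 GB (10^9 bytes) at a given bit rate."""
    bytes_per_second = bitrate_kbps * 1000.0 / 8.0
    return 1e9 / bytes_per_second / 60.0


cd_rate = pcm_bitrate_kbps(44_100, 16, 2)  # CD-quality stereo: 1411.2 kbps
mp3_rate = 128.0                           # assumed typical MP3 bit rate
ratio = cd_rate / mp3_rate                 # roughly an 11:1 reduction
```

At these rates, 1 GB holds roughly 94 minutes of CD-quality audio versus roughly 1,040 minutes at 128 kbps, which is the difference that let portable players carry whole music libraries.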

The latest codecs proposed for use by the ATSC are MPEG-H and AC-4. MPEG-H was developed by three companies (Fraunhofer IIS, Technicolor and Qualcomm) and has become an ISO/IEC standard. AC-4, developed by Dolby Labs, has been standardized by ETSI. Both codecs offer similar features, such as audio objects, personalization and rendering at the player, with high compression efficiency (lower bit rates for high-quality audio). MPEG-H also has a speech coding mode as part of its USAC-based core codec, the ability to transmit a sound program in HOA, and a layered coding option. AC-4 can carry Atmos movie soundtracks in a broadcast program by grouping sets of objects for efficient encoding. Both systems have rich program metadata covering channel definitions, loudness parameters, advanced dynamic range control settings and the steering of dynamic objects.
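As one example of how the loudness parameters might be used, a receiver can compute a normalization gain from the program’s signaled loudness. This Python sketch assumes a -24 LKFS target (the value in ATSC A/85) and uses hypothetical function names; it is not the MPEG-H or AC-4 metadata syntax, and real decoders combine this step with the dynamic range control settings.

```python
def normalization_gain_db(program_loudness_lkfs: float, target_lkfs: float = -24.0) -> float:
    """Gain (dB) that would bring a program with the signaled loudness to the target."""
    return target_lkfs - program_loudness_lkfs


def db_to_linear(gain_db: float) -> float:
    """Convert a gain in decibels to a linear amplitude multiplier."""
    return 10.0 ** (gain_db / 20.0)
```

A program signaled at -20 LKFS would get -4 dB of gain, about 0.63 in linear terms, so switching between programs does not produce a jump in volume.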

FINAL WORDS

It’s an exciting time for television, and immersive audio can provide a richer, fuller experience for viewers, regardless of how they choose to listen. Immersive audio can be rendered to whatever speakers are available and gives viewers interactive control to tailor the program to their taste. With full immersive sound and 4K (or 8K) displays that cover the full field of view, TV viewers will not only be immersed but will also enjoy features unavailable today.

Editor's Note: In an early version of the story we had the equation for the sound field as (N-1)^2 instead of (N+1)^2. The equation and subsequent results have been changed.

Jim DeFilippis is CEO of TMS Consulting, Inc., in Los Angeles, and former EVP Digital Television Technologies and Standards for FOX. He can be reached at JimD@TechnologyMadeSimple.pro.