Part One

The IEEE Broadcast Technology Society (BTS) focused its attention on ATSC 3.0 Single Frequency Networks and virtualization during four sessions that kicked off its IEEE BTS Pulse online conference.

The session block examined the basics of ATSC 3.0 SFNs, the virtualization of 3.0 broadcast gateways, the use of stream multiplexing to extend 3.0 SFN functionality to transmitter sites, and the broadcast core network.

“ATSC 3 Single Frequency Networks and Virtualization,” chaired by Merrill Weiss, consultant and owner of Merrill Weiss Group, also included Benoit Bui Do, technical leader for terrestrial solutions at ENENSYS Technologies; Mark Corl, Triveni Digital senior vice president of Emergent Technology Development; and Ali Dernaika, solution architect at HPE America, who addressed gateways, stream multiplexing and the core network, respectively. The session block was moderated by Joel Walsh, director of Education at SMPTE.

THE FUNDAMENTALS

Weiss kicked off the event by examining the fundamentals of ATSC 3.0 and how SFNs can be used, including an explanation of the 3.0 physical layer, framing and the bootstrap, as well as several 3.0 SFN-related technologies and techniques.

Weiss then explained that the transmission characteristics of 3.0 are “about the best there are in the world in terms of the range and the proximity” to the Shannon limit, the theoretical maximum rate at which data can be carried error-free over a channel of a given bandwidth and signal-to-noise ratio.
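The Shannon limit Weiss referred to comes from the Shannon-Hartley theorem, C = B log2(1 + S/N). The short sketch below computes it and shows how a system's actual throughput could be expressed as a fraction of that limit; the channel width and SNR values are illustrative assumptions, not ATSC 3.0 operating figures.

```python
import math


def shannon_capacity_bps(bandwidth_hz: float, snr_db: float) -> float:
    """Shannon-Hartley limit C = B * log2(1 + S/N), in bits per second."""
    snr_linear = 10 ** (snr_db / 10)  # convert dB to a linear power ratio
    return bandwidth_hz * math.log2(1 + snr_linear)


def fraction_of_limit(actual_bps: float, bandwidth_hz: float, snr_db: float) -> float:
    """How close a real system's throughput comes to the theoretical limit."""
    return actual_bps / shannon_capacity_bps(bandwidth_hz, snr_db)


if __name__ == "__main__":
    # Illustrative numbers only: a 6 MHz channel at 15 dB SNR.
    print(f"{shannon_capacity_bps(6e6, 15.0) / 1e6:.1f} Mb/s")
```

A system "close to the Shannon limit" is one whose ratio from `fraction_of_limit` approaches 1 at a given SNR.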

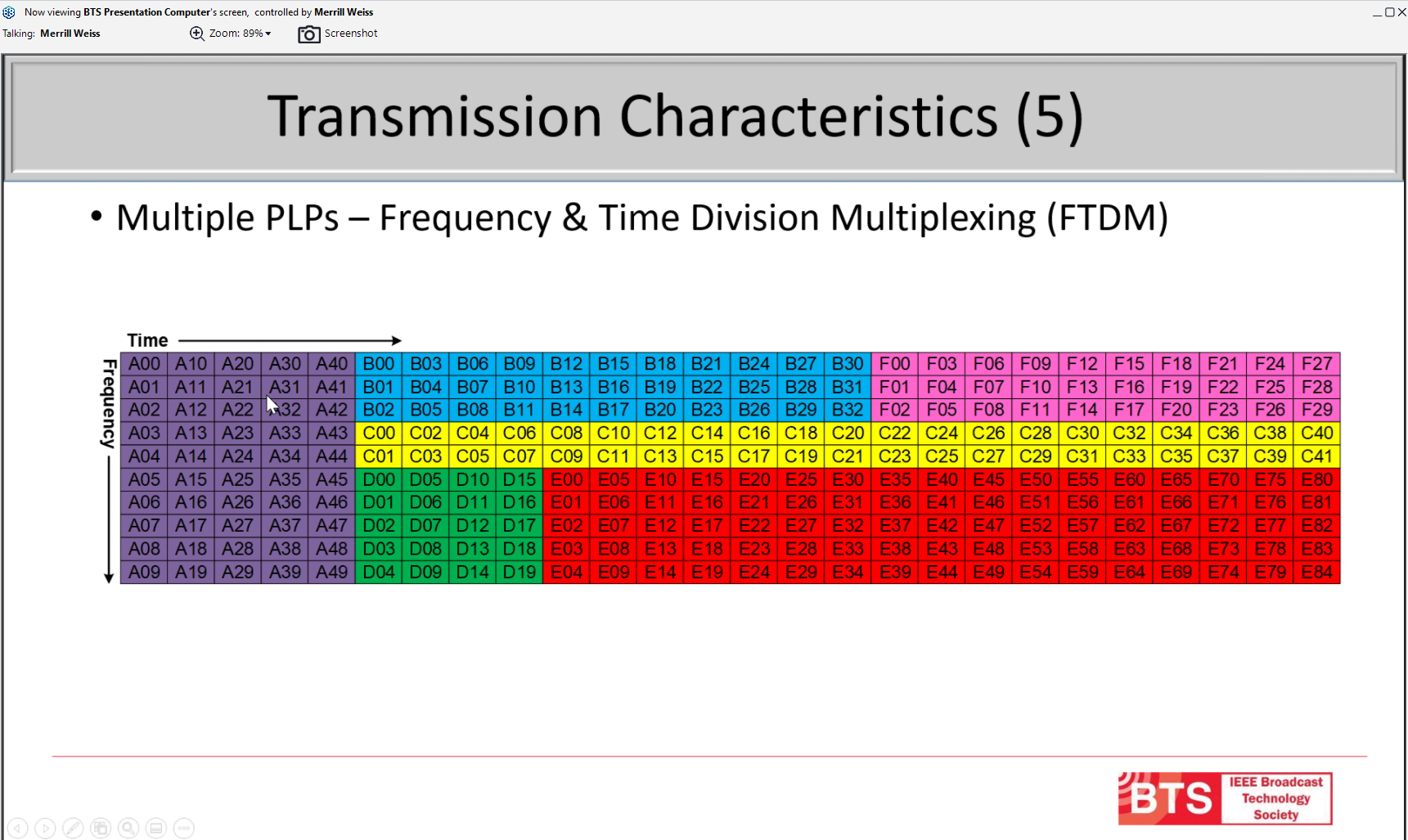

Next he reviewed 3.0 Physical Layer Pipes (PLPs), which are “essentially combinations of a number of carriers” and of times within a 3.0 subframe. “So, every one of these intersections of frequency and time, you can think of as a cell … [that] carries a certain amount of information,” he said.


The cells can be organized in different ways, enabling frequency division multiplexing, time division multiplexing, a combination of the two, and layered division multiplexing (LDM). With LDM, the layers are transmitted at different power levels, which makes it possible for each layer to have its own modulation and coding.
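To make the cell picture concrete, here is a toy model of a subframe as a grid of time-frequency cells, with PLPs assigned either by frequency (FDM) or by time (TDM). The grid sizes and PLP names are hypothetical, and the sketch ignores pilots, interleaving and LDM; it only illustrates that both schemes can give a PLP the same number of cells laid out differently.

```python
from collections import Counter

Grid = list[list[str]]  # grid[symbol][carrier] -> name of the PLP occupying that cell


def fdm_grid(num_symbols: int, carrier_split: dict[str, int]) -> Grid:
    """Frequency division: each PLP owns a band of carriers in every symbol."""
    row: list[str] = []
    for plp, width in carrier_split.items():
        row.extend([plp] * width)
    return [list(row) for _ in range(num_symbols)]


def tdm_grid(num_carriers: int, symbol_split: dict[str, int]) -> Grid:
    """Time division: each PLP owns every carrier for a run of symbols."""
    grid: Grid = []
    for plp, length in symbol_split.items():
        grid.extend([[plp] * num_carriers for _ in range(length)])
    return grid


def cells_per_plp(grid: Grid) -> Counter:
    """Count how many time-frequency cells each PLP occupies."""
    return Counter(cell for symbol in grid for cell in symbol)


if __name__ == "__main__":
    # Toy sizes; a real ATSC 3.0 subframe has thousands of carriers.
    fdm = fdm_grid(num_symbols=4, carrier_split={"PLP0": 6, "PLP1": 2})
    tdm = tdm_grid(num_carriers=8, symbol_split={"PLP0": 3, "PLP1": 1})
    print(cells_per_plp(fdm), cells_per_plp(tdm))
```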

“Depending on how you choose to set the modulation encoding, you can have more or less robustness and more or less data capacity,” he said.
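The trade-off Weiss describes can be roughed out per cell: a constellation with M points carries log2(M) coded bits, and the code rate determines how many of those are payload. The sketch below assumes that simple model and hypothetical cell counts and settings; it ignores pilots, headers and other overhead, and it does not model the robustness side, where denser settings need a higher SNR.

```python
import math
from fractions import Fraction


def bits_per_cell(constellation_points: int, code_rate: Fraction) -> Fraction:
    """Information bits per cell: log2(M) coded bits times the code rate."""
    coded_bits = int(math.log2(constellation_points))
    if 2 ** coded_bits != constellation_points:
        raise ValueError("constellation size must be a power of two")
    return coded_bits * code_rate


def plp_payload_bits(num_cells: int, constellation_points: int, code_rate: Fraction) -> Fraction:
    """Rough payload for a PLP, ignoring pilots, headers and other overhead."""
    return num_cells * bits_per_cell(constellation_points, code_rate)


if __name__ == "__main__":
    cells = 10_000  # hypothetical cell count for one PLP
    robust = plp_payload_bits(cells, 4, Fraction(4, 15))   # QPSK, low code rate
    dense = plp_payload_bits(cells, 256, Fraction(10, 15))  # 256QAM, higher code rate
    print(int(robust), int(dense))
```

The same cells carry roughly ten times more data with the denser setting, at the cost of requiring a stronger signal to decode.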

Weiss then discussed how data from sources enters a 3.0 system via the Data Source Transport Protocol (DSTP). The data is packed into ATSC 3 Link Layer Protocol (ALP) packets and transferred to the Broadcast Gateway, where the Scheduler packs the ALP packets into PLPs. This ensures the packets use the available PLP space efficiently, he said.
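The talk did not describe the Scheduler's packing algorithm, so the following is only a sketch of why efficient packing matters. It compares filling fixed-size baseband packet payloads when ALP packets may straddle packet boundaries (a simplifying assumption here) against a naive scheme that never splits a packet; headers and pointer fields are not modeled, and all sizes are hypothetical.

```python
def pack_with_splitting(alp_sizes: list[int], payload_bytes: int) -> tuple[int, int]:
    """ALP packets may continue into the next baseband packet.

    Returns (baseband_packets_used, padding_bytes); the only waste is the
    unused tail of the last baseband packet.
    """
    if payload_bytes <= 0:
        raise ValueError("payload_bytes must be positive")
    total = sum(alp_sizes)
    used = -(-total // payload_bytes)  # ceiling division
    return used, used * payload_bytes - total


def pack_without_splitting(alp_sizes: list[int], payload_bytes: int) -> tuple[int, int]:
    """Naive alternative: a packet that doesn't fit starts a new baseband packet."""
    packets, free = 0, 0
    for size in alp_sizes:
        if size > payload_bytes:
            raise ValueError("packet larger than a baseband payload must be split")
        if size > free:
            packets += 1
            free = payload_bytes
        free -= size
    return packets, packets * payload_bytes - sum(alp_sizes)


if __name__ == "__main__":
    sizes = [250, 250, 250, 250]  # hypothetical ALP packet sizes in bytes
    print(pack_with_splitting(sizes, 400), pack_without_splitting(sizes, 400))
```

With these numbers, allowing packets to span boundaries needs three baseband packets instead of four and wastes a third as much space on padding.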

Three transport protocols are used to get data to the transmitter: DSTP, ALPTP (ALP Transport Protocol) and STLTP (Studio-to-Transmitter Link Transport Protocol). They enable data to be transferred between layers while guarding against what are known as “layer violations,” said Weiss. For DSTP and ALPTP, this is accomplished with information carried in a header that precedes the packet. For the STLTP, it is done with an RTP (Real-time Transport Protocol) header.
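Because the STLTP relies on RTP headers, it may help to see what such a header contains. The sketch below decodes the 12-byte fixed RTP header as defined in RFC 3550; any ATSC-specific interpretation of these fields is not modeled here.

```python
import struct
from dataclasses import dataclass


@dataclass
class RtpHeader:
    version: int
    padding: bool
    extension: bool
    csrc_count: int
    marker: bool
    payload_type: int
    sequence_number: int
    timestamp: int
    ssrc: int


def parse_rtp_header(packet: bytes) -> RtpHeader:
    """Decode the 12-byte fixed RTP header (RFC 3550), network byte order."""
    if len(packet) < 12:
        raise ValueError("RTP fixed header is 12 bytes")
    b0, b1, seq, ts, ssrc = struct.unpack("!BBHII", packet[:12])
    return RtpHeader(
        version=b0 >> 6,              # top two bits
        padding=bool(b0 & 0x20),
        extension=bool(b0 & 0x10),
        csrc_count=b0 & 0x0F,
        marker=bool(b1 & 0x80),
        payload_type=b1 & 0x7F,
        sequence_number=seq,          # lets the receiver spot loss and reordering
        timestamp=ts,
        ssrc=ssrc,                    # identifies the stream source
    )
```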

All of these protocols provide for tunneling, which enables a number of multiplexed streams to be carried inside another stream. In the case of ATSC 3’s studio-to-transmitter link, there can be up to 67 different streams, all of which are carried as a single stream by the Common Tunneling Protocol (CTP), he said.
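Tunneling in general can be illustrated with a minimal sketch: several inner streams are serialized into one outer byte stream and separated again at the far end. The 4-byte header used here (a stream ID plus a payload length) is a hypothetical stand-in; it is not the CTP format, which carries complete inner packets whose own headers identify their streams.

```python
import struct
from collections import defaultdict

HEADER = struct.Struct("!HH")  # hypothetical tunnel header: stream ID, payload length


def encapsulate(packets: list[tuple[int, bytes]]) -> bytes:
    """Serialize (stream_id, payload) pairs into one tunnel byte stream."""
    out = bytearray()
    for stream_id, payload in packets:
        out += HEADER.pack(stream_id, len(payload))
        out += payload
    return bytes(out)


def decapsulate(tunnel: bytes) -> dict[int, list[bytes]]:
    """Split a tunnel byte stream back into its inner streams, preserving order."""
    streams: dict[int, list[bytes]] = defaultdict(list)
    offset = 0
    while offset < len(tunnel):
        stream_id, length = HEADER.unpack_from(tunnel, offset)
        offset += HEADER.size
        if offset + length > len(tunnel):
            raise ValueError("truncated tunnel payload")
        streams[stream_id].append(tunnel[offset:offset + length])
        offset += length
    return dict(streams)
```

To the network in between, the result is a single stream; only the endpoints know how many inner streams it carries.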

CTP provides for additional services such as forward error correction, as well as security to prevent “man-in-the-middle” attacks similar to the “Captain Midnight” attack on HBO’s delivery of its signal to cable headends several decades ago, he added. ATSC 3.0 provides for authentication and encryption to guard against such attacks.
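The mechanisms ATSC 3.0 uses for STL authentication were not detailed in the session. As a generic illustration of how authentication defeats tampering in transit, the sketch below attaches an HMAC-SHA256 tag from Python's standard library to each packet; HMAC is a stand-in chosen for the example, and encryption is not shown.

```python
import hashlib
import hmac

TAG_LEN = 32  # SHA-256 digest size in bytes


def sign(key: bytes, packet: bytes) -> bytes:
    """Append an HMAC-SHA256 tag so the far end can detect any alteration."""
    return packet + hmac.new(key, packet, hashlib.sha256).digest()


def verify(key: bytes, signed_packet: bytes) -> bytes:
    """Return the payload if the tag checks out; raise if the packet was altered."""
    if len(signed_packet) < TAG_LEN:
        raise ValueError("packet too short to carry a tag")
    payload, tag = signed_packet[:-TAG_LEN], signed_packet[-TAG_LEN:]
    expected = hmac.new(key, payload, hashlib.sha256).digest()
    if not hmac.compare_digest(tag, expected):  # constant-time comparison
        raise ValueError("authentication failed: packet altered or wrong key")
    return payload
```

An intruder who injects or modifies packets without the key cannot produce a valid tag, so the transmitter can reject the substituted content.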

ATSC 3.0 provides for two types of time. Upstream of the broadcast gateway, UTC (Coordinated Universal Time) is used. Atomic time is also used, for synchronization of time and frequency and as a parameter in the security system, Weiss explained.
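Assuming "atomic time" here refers to International Atomic Time (TAI), the two time scales differ by the accumulated leap seconds: TAI has been 37 seconds ahead of UTC since 1 January 2017. The sketch below converts between them for dates from that point on; a production system would use a full leap-second table.

```python
from datetime import datetime, timedelta, timezone

# TAI - UTC has been 37 s since 2017-01-01; valid until any future leap second.
TAI_MINUS_UTC = timedelta(seconds=37)
VALID_FROM = datetime(2017, 1, 1, tzinfo=timezone.utc)


def utc_to_tai(utc: datetime) -> datetime:
    """Return a naive datetime on the TAI scale for an aware UTC datetime."""
    if utc.tzinfo is None:
        raise ValueError("expected a timezone-aware UTC datetime")
    if utc < VALID_FROM:
        raise ValueError("this sketch's leap-second table starts in 2017")
    return (utc.astimezone(timezone.utc) + TAI_MINUS_UTC).replace(tzinfo=None)
```

TAI runs without leap-second jumps, which makes it a convenient basis for synchronizing transmitters.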

Having discussed 3.0 fundamentals, Weiss turned to SFNs, which consist of multiple transmitters delivering the same signal on the same frequency to a given service area. “In order to do that,” Weiss said, “the frequencies have to be matched and the emission times have to be precisely adjusted.”

All transmitters must share the same reference bootstrap emission time, but each transmitter’s actual emission time may be offset from that reference to shape how the network delivers the signal, he added.
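One reason to offset emission times is to keep signals from different transmitters arriving at a receiver within the OFDM guard interval. The sketch below checks that condition for a single receiver location. The distances, offsets and guard interval value are hypothetical, and real SFN planning also weighs signal strength, which is ignored here.

```python
SPEED_OF_LIGHT_M_PER_US = 299.792458  # metres per microsecond


def arrival_time_us(emission_offset_us: float, distance_km: float) -> float:
    """Arrival time relative to the common reference emission time."""
    return emission_offset_us + distance_km * 1000 / SPEED_OF_LIGHT_M_PER_US


def within_guard_interval(sites: list[tuple[float, float]], guard_interval_us: float) -> bool:
    """sites holds (emission_offset_us, distance_km) for each transmitter heard.

    If the spread of arrival times fits inside the guard interval, the
    echoes can be absorbed without inter-symbol interference.
    """
    arrivals = [arrival_time_us(offset, dist) for offset, dist in sites]
    return max(arrivals) - min(arrivals) <= guard_interval_us


if __name__ == "__main__":
    # Receiver 10 km from site A and 80 km from site B; hypothetical 200 us guard interval.
    print(within_guard_interval([(0.0, 10.0), (0.0, 80.0)], 200.0))
    # Delaying site A by 200 us pulls the two arrivals back within the guard interval.
    print(within_guard_interval([(200.0, 10.0), (0.0, 80.0)], 200.0))
```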

Weiss also examined one way to localize datacasting in an SFN. It involves having a “normal” SFN deliver “mostly the same data on the same frequency into the overall service area but to allow certain PLPs to carry different data.”

These specific PLPs are configured the same way at every transmitter, so all transmitters send them during the same transmission period, each to its own specific area. Because different transmitters place different data in the same cells, at the same times and on the same frequencies, those cells will interfere with one another where coverage from SFN sites overlaps, Weiss said.

“Doing this becomes an exercise in carefully adjusting the properties of the transmission. … [B]y making good choices of what the parameters are, that interference can be minimized, and these smaller service areas can be enlarged to the maximum extent possible [to enable localized data delivery],” he said.

EXTENDING SFN FUNCTIONALITY

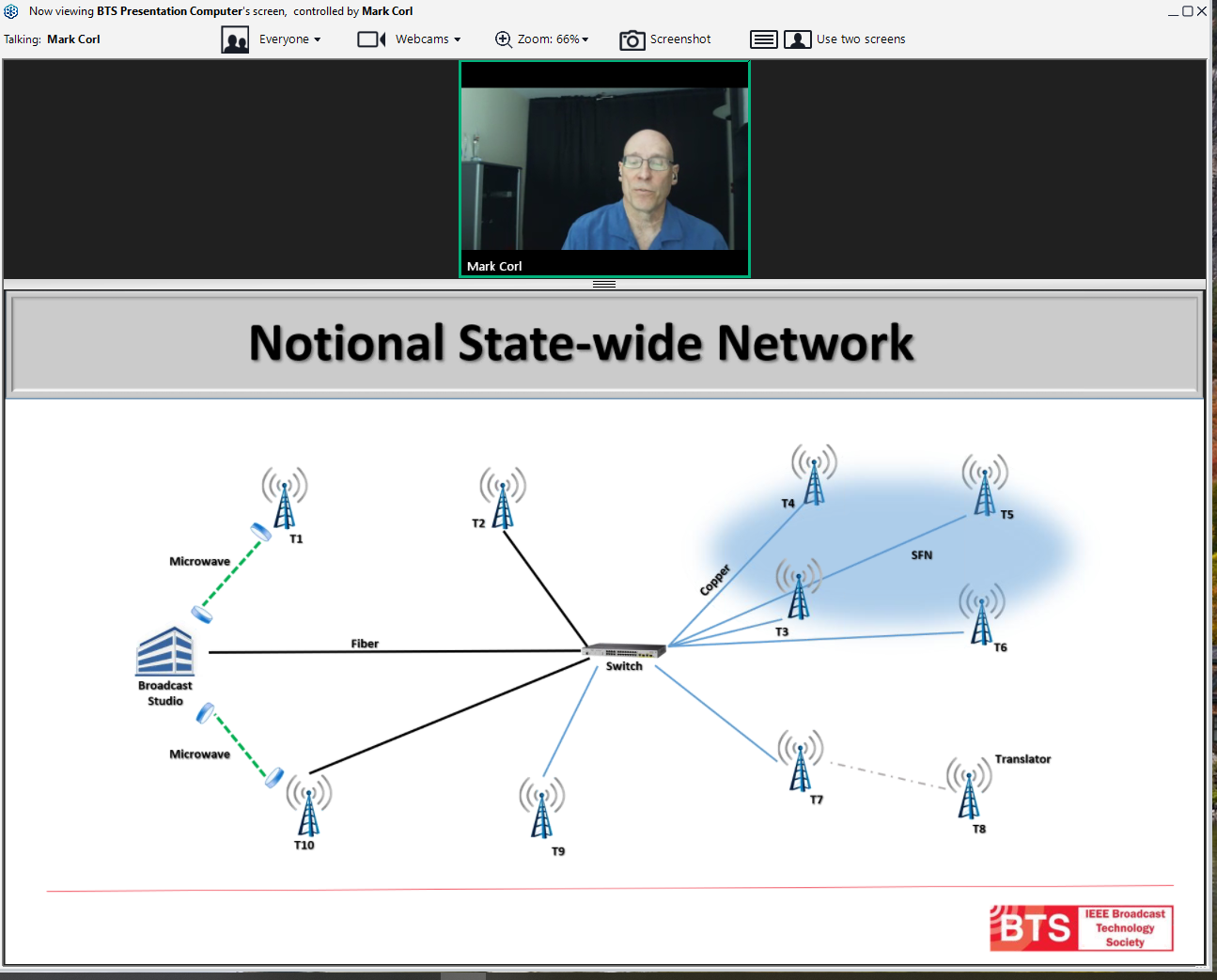

Triveni’s Corl examined the use of a 3.0 SFN as a statewide network. For the presentation, Corl looked at a hypothetical network with a broadcast studio (in some cases, multiple regionalized studios across the state) connected to a set of transmitters. Some might be connected via microwave, others by fiber through a switch, and there might even be an SFN at the edge.

“In the ATSC 1 world, that [the described network] wasn’t a problem, but in the ATSC 3 world there’s a lot of signing and authentication going on,” said Corl. “[S]ending the same stream to all the transmitters is somewhat problematic because all that signaling and interactive content and all the things you might want at these different transmitters has to be signed.”

Having private keys “floating around” at the transmitter sites where there may be no one to attend to them isn’t a good idea. “So, you can’t actually regionalize things unless you have multiple STLs—separated all the information out into multiple STLTP streams,” he said.

This would be necessary because at the exciter level it’s not possible to “crack open” the STLTP and “tear things apart or add things,” Corl explained.

Another consideration is that in many statewide networks, especially those of public broadcasters, most of the data is redundant. There are some primary channels and occasionally some tertiary channels, but the broadcaster might want to do region-specific, ancillary text crawls, he said.

Bandwidth restrictions in the network can be a problem. “Sending a couple—maybe three or four—STLTPs to transmitters over fiber is no big deal … [where] they typically have a gig of bandwidth,” he said. “But when … the connections are only, say, 10BASE-T … you really have to start paying attention to the network’s capabilities.”
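Corl's point about constrained links comes down to a simple budget check. The sketch below sums hypothetical STLTP stream rates, adds an assumed fractional overhead (for example, for FEC packets), and compares the total with a margin of the link's nominal rate. The stream rates and the 80% headroom figure are assumptions for illustration.

```python
LINK_CAPACITY_BPS = {
    "10BASE-T": 10_000_000,
    "100BASE-TX": 100_000_000,
    "1000BASE-T": 1_000_000_000,
}


def fits_on_link(stream_rates_bps: list[float], link: str,
                 overhead: float = 0.0, headroom: float = 0.8) -> bool:
    """Check whether the STLTP streams fit on a link.

    overhead: assumed fractional extra traffic (e.g. FEC packets).
    headroom: assumed fraction of the nominal link rate that is usable.
    """
    required = sum(stream_rates_bps) * (1 + overhead)
    return required <= LINK_CAPACITY_BPS[link] * headroom


if __name__ == "__main__":
    streams = [4e6, 4e6, 4e6]  # three hypothetical STLTP streams
    print(fits_on_link(streams, "1000BASE-T"), fits_on_link(streams, "10BASE-T"))
```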

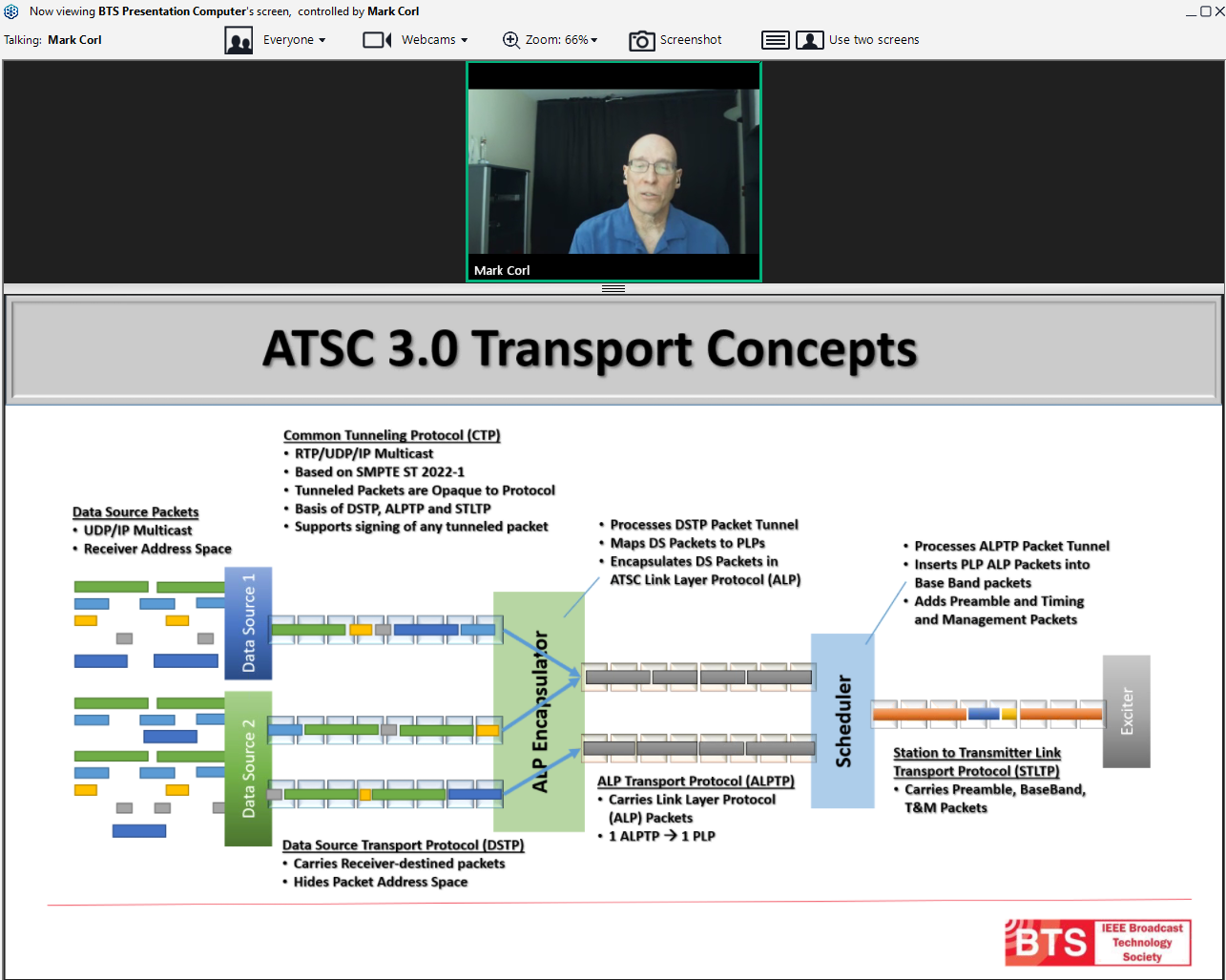

Before laying out the statewide SFN network solution in detail, Corl reviewed basic ATSC 3.0 transport concepts, illustrated with a diagram that started on the right with Data Source Packets and ended on the left at the exciter.

In between was a visual representation of the Common Tunneling Protocol (CTP) and Data Source Transport Protocol packets feeding the ALP Encapsulator. The CTP uses SMPTE ST 2022, which enables use of forward error correction and a fixed packet size. “They’re all RTP, UDP IP multicast packets, but they are completely opaque to what they are carrying,” said Corl.
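The fixed packet size matters because SMPTE ST 2022-1 style FEC computes parity by XOR-ing packets together. The sketch below shows column parity only: packets are laid out row by row in a matrix `columns` wide, one parity packet is computed per column, and a single lost packet per column can be rebuilt. Real ST 2022-1 FEC packets carry their own headers, and whether CTP uses row parity, column parity or both is not modeled.

```python
from functools import reduce
from typing import Optional


def xor_bytes(a: bytes, b: bytes) -> bytes:
    if len(a) != len(b):
        raise ValueError("XOR FEC needs fixed-size packets")
    return bytes(x ^ y for x, y in zip(a, b))


def column_parity(packets: list[bytes], columns: int) -> list[bytes]:
    """XOR every `columns`-th packet together: one parity packet per column."""
    if len(packets) % columns:
        raise ValueError("packet count must fill whole rows")
    return [reduce(xor_bytes, packets[c::columns]) for c in range(columns)]


def recover(received: list[Optional[bytes]], parity: list[bytes], columns: int) -> list[bytes]:
    """Rebuild at most one missing packet per column from that column's parity."""
    out = list(received)
    for c in range(columns):
        idx = list(range(c, len(out), columns))
        missing = [i for i in idx if out[i] is None]
        if len(missing) > 1:
            raise ValueError(f"column {c} lost more than one packet")
        if missing:
            present = [out[i] for i in idx if out[i] is not None]
            # parity XOR all surviving packets in the column = the lost packet
            out[missing[0]] = reduce(xor_bytes, present, parity[c])
    return out
```

Because parity is a byte-wise XOR, packets of unequal length would leave bytes uncovered, which is why a fixed packet size is required.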

One important quality of the DSTP—which carries receiver-destined packets—is that the packet address space is hidden. This is important because it allows duplicate Data Source Packets to be sent without conflicting with one another or needing a receiver to understand there are differences, he explained.

The ALP Encapsulator processes the DSTP packet tunnel, strips away the tunnel encapsulation and puts the inner packets carried through the tunnel into ALP packets, which are data packets with a particular header defined by the ATSC Link Layer Protocol (ALP). This allows the packets to be hidden on the receiver side and in the Scheduler. At this point, the ALP Encapsulator maps Data Source Packets to PLPs, with one ALPTP stream per PLP. The encapsulator then sends the ALP packets to the Scheduler, he said.
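A small sketch can show why a hidden address space lets identical addresses coexist: DSTP tunnels are grouped by their assigned PLP into one ALPTP stream per PLP, so two regional tunnels can carry packets to the same inner address without colliding. The tunnel names, addresses and PLP numbers are hypothetical, and ALP framing itself is not modeled.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InnerPacket:
    dest: str       # inner destination address:port, hidden inside the tunnel
    payload: bytes


def build_alptp_streams(tunnels: dict[str, list[InnerPacket]],
                        plp_for_tunnel: dict[str, int]) -> dict[int, list[InnerPacket]]:
    """Group DSTP tunnels by assigned PLP, producing one ALPTP stream per PLP.

    Packets are kept per PLP, so identical inner addresses in different
    PLPs never collide.
    """
    streams: dict[int, list[InnerPacket]] = {}
    for tunnel_id, packets in tunnels.items():
        streams.setdefault(plp_for_tunnel[tunnel_id], []).extend(packets)
    return streams


if __name__ == "__main__":
    dest = "239.255.10.1:5000"  # hypothetical multicast address used by both regions
    tunnels = {
        "regional_a": [InnerPacket(dest, b"crawl A")],
        "regional_b": [InnerPacket(dest, b"crawl B")],
        "common": [InnerPacket("239.255.20.1:5000", b"HD service")],
    }
    print(build_alptp_streams(tunnels, {"common": 0, "regional_a": 1, "regional_b": 2}))
```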

The Scheduler, in turn, processes the ALPTP packet tunnel, inserts each PLP’s ALP packets into baseband packets, and adds the preamble and timing and management packets. It then turns them into STLTP packets, which carry the preamble, baseband, and timing and management packets to the exciter. In each phase, packets are signed, he added.

Corl emphasized that because ATSC 3.0 is IP-based, the stream need not carry only data intended for receivers, such as audio, video and closed captioning. It is also possible to put other data into the stream that is not intended for regular off-the-shelf receivers.

Corl laid out different scenarios supporting the hypothetical statewide SFN, beginning with in-band distribution to individual SFN transmitter sites. “Essentially what you’re doing is carrying a backhaul for the SFN,” he said. “You’re carrying the STLTP in the actual broadcast.”

The STLTP can be carried in a separate PLP, LDM may be used, and the carriage is announced as a private data stream in the Low Level Signaling (LLS). Each SFN site uses a dedicated antenna tuned to receive this signal from the source transmitter. This setup also provides a redundancy mechanism if the network is lost. “The exciter doesn't actually have to be connected to a network or as well, it can be connected to the network and actually see this other STLTP carriage as a backup,” he explained.

The second scenario leverages regionalized PLPs. Here ALPTP is sent over the broadcast with common AV services, such as SD and HD, and some NRT (non-real time) data carrying configuration data. There is also a regional AV service in a secondary PLP. Receivers in the broadcast coverage area won’t know what to do with the secondary streams and will ignore them.

At the SFN receive site, the configuration data will be used to program two functions that “essentially are equivalent to a Broadcast Gateway,” said Corl. This approach enables ALPTP streams and NRT to be privately signaled and carried in another PLP. Another ALPTP stream could be carried to supply a PLP to remove ALP encapsulation.

A third scenario allows for regionalized PLPs, but focuses on the content. “In each stage … we’re basically taking and carrying different aspects and different control information to the point where we can fine tune it down to the point where we have different signaling for each of the transmitters,” he said, adding that the signal doesn’t need to be signed at the regional SFN transmitter because it was signed at the studio and passed to the SFN transmitters.

“We can actually have very specific transmitters and essentially build an entirely new broadcast at each stage simply by taking very selective IP streams out of the network and carrying this information,” he added.

In all three scenarios, everything coming over the air can be signed and validated to make sure a nefarious actor is not replacing content or changing any of the signaling. Additionally, with physical security in place at SFN sites—locks on transmitter buildings—there is no need for a private key for the entire network at these remote sites. “You just need it to be able to decode whatever’s coming in,” he said.

Finally, Corl laid out a backup plan to reach all SFN sites, which is already in place with the 3.0 SFN deployment.

“Backup information could be running all of the time, and you could be sending out the information all of the time, even though your networks were there,” he said. “So failure of the network or even partial failure of the network would easily be just switching over to the other mechanisms and carriage.”
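Because both the networked and off-air feeds run continuously, switching over reduces to choosing a healthy feed. The sketch below shows one way a site could make that choice, treating a feed as healthy if a packet arrived within a timeout. The class, feed names and timeout value are hypothetical and do not describe any particular exciter's behavior.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class FeedMonitor:
    """Tracks when each STLTP feed last delivered a packet (hypothetical helper)."""
    timeout_s: float
    last_seen: dict[str, float] = field(default_factory=dict)

    def packet_received(self, feed: str, now: float) -> None:
        self.last_seen[feed] = now

    def healthy(self, feed: str, now: float) -> bool:
        seen = self.last_seen.get(feed)
        return seen is not None and now - seen <= self.timeout_s


def select_feed(monitor: FeedMonitor, now: float, priority: list[str]) -> Optional[str]:
    """Return the highest-priority healthy feed, or None if every feed is down."""
    for feed in priority:
        if monitor.healthy(feed, now):
            return feed
    return None


if __name__ == "__main__":
    monitor = FeedMonitor(timeout_s=0.5)
    monitor.packet_received("network", 0.0)
    monitor.packet_received("off_air", 0.0)
    print(select_feed(monitor, 0.2, ["network", "off_air"]))
```

Since the backup feed is already flowing, a network failure costs only the detection timeout rather than the time to bring up a new path.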

(Editor’s note: Part two will examine the Broadcast Gateway and Core Network presentations.)

Phil Kurz is a contributing editor to TV Tech. He has written about TV and video technology for more than 30 years and served as editor of three leading industry magazines. He earned a Bachelor of Journalism and a Master’s Degree in Journalism from the University of Missouri-Columbia School of Journalism.