A new, more efficient standard for MPEG systems


Widely adopted video-coding standards are subject to obsolescence that follows a different form of “Moore’s Law.” Although silicon performance roughly doubles every 18 months, video-coding efficiency doubles only about every ten years. The longer time span is influenced by other factors, such as the time needed to replace a vast and expensive content-delivery infrastructure. MPEG-2 video compression (essentially an update of MPEG-1) was first released in 1995, with digital satellite delivery a major application, followed soon afterwards by deployment on DVDs and digital terrestrial service. Although MPEG-4 AVC (aka MPEG-4 Part 10 or H.264) followed in 2003 and yielded about a 50 percent bit-rate savings, it took well into the 2000s for the codec to become entrenched in professional and consumer applications on satellite, cable and Blu-ray discs.

And now, the next codec is nearly upon us: High-efficiency Video Coding (HEVC). This next-generation video standard is currently being developed by the JCT-VC team, a joint effort between MPEG and the Video Coding Experts Group (VCEG). The finalized HEVC standard is expected to bring another 50-percent bit-rate savings, compared to equivalent H.264/AVC encoding. HEVC should be ready for ratification by ISO and ITU — ISO/IEC 23008-2 MPEG-H Part 2 and ITU-T Rec. H.265 — by the end of January 2013. HEVC codecs are then expected to be adopted quickly in many devices, such as camcorders, DSLRs, digital TVs, PCs, set-top boxes, smartphones and tablets.
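Combining the two 50 percent figures gives a rough sense of the cumulative gain. As a back-of-the-envelope estimate, assuming both savings hold at equal picture quality and simply multiply, with R denoting the bit rate each codec needs for a given quality:

```latex
R_{\text{HEVC}} \approx 0.5\,R_{\text{AVC}} \approx 0.5 \times \left(0.5\,R_{\text{MPEG-2}}\right) = 0.25\,R_{\text{MPEG-2}}
```

In other words, HEVC would need roughly a quarter of the MPEG-2 bit rate for the same picture quality.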

HEVC

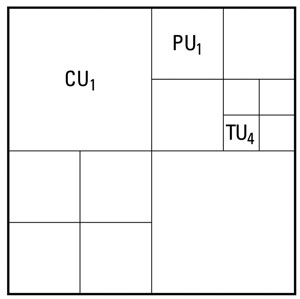

Figure 1. HEVC incorporates a new framework encompassing coding units, prediction units and transform units, which replaces the macroblock structure of previous video coding standards with one or more prediction units (PUs) and transform units (TUs).

The HEVC standard incorporates numerous improvements over AVC, including a new prediction block structure and updates to the toolkit covering intra-prediction, inverse transforms, motion compensation, loop filtering and entropy coding. A major difference from MPEG-2 and AVC is a new framework encompassing coding units (CUs), prediction units (PUs) and transform units (TUs). CUs define a sub-partitioning of a picture into square regions of variable size. The CU replaces the macroblock structure of previous video coding standards and contains one or more PUs and TUs, as shown in Figure 1. The PU is the elementary unit for intra- and inter-prediction, and the TU is the basic unit for transform and quantization.
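To make the PU idea concrete, the Python sketch below lists the prediction-unit rectangles inside one square CU of size 2N x 2N. The mode names (2Nx2N, 2NxN, Nx2N, NxN and the asymmetric 2NxnU, 2NxnD, nLx2N, nRx2N) follow the partition modes of the final HEVC text; the function name `pu_partitions` is hypothetical, and the sketch ignores the standard's rules about which modes are permitted at which CU sizes.

```python
from typing import List, Tuple

Rect = Tuple[int, int, int, int]  # (x, y, width, height) in luma samples


def pu_partitions(x0: int, y0: int, cu_size: int, mode: str) -> List[Rect]:
    """Return the PU rectangles of a square CU at (x0, y0) for a partition mode.

    Illustrative helper only: it does not check whether a mode is allowed
    for the given CU size or prediction type.
    """
    s = cu_size        # 2N
    h = s // 2         # N
    q = s // 4         # quarter of the CU, used by the asymmetric modes
    layouts = {
        "2Nx2N": [(0, 0, s, s)],
        "2NxN":  [(0, 0, s, h), (0, h, s, h)],
        "Nx2N":  [(0, 0, h, s), (h, 0, h, s)],
        "NxN":   [(0, 0, h, h), (h, 0, h, h), (0, h, h, h), (h, h, h, h)],
        "2NxnU": [(0, 0, s, q), (0, q, s, s - q)],
        "2NxnD": [(0, 0, s, s - q), (0, s - q, s, q)],
        "nLx2N": [(0, 0, q, s), (q, 0, s - q, s)],
        "nRx2N": [(0, 0, s - q, s), (s - q, 0, q, s)],
    }
    # Offset each relative rectangle by the CU's position in the picture.
    return [(x0 + dx, y0 + dy, w, ht) for dx, dy, w, ht in layouts[mode]]
```

For example, `pu_partitions(0, 0, 32, "2NxnU")` splits a 32 x 32 CU into a 32 x 8 PU on top of a 32 x 24 PU, a shape a fixed 16 x 16 macroblock grid cannot express.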

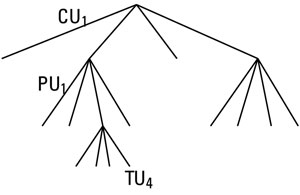

Overall, this framework describes a treelike structure in which individual branches can have different depths in different portions of a picture. Each frame is divided into largest coding units, which can be recursively split into smaller CUs using a generic quad-tree segmentation structure, as shown in Figure 2. CUs can be further split into PUs and TUs. Because the encoder can use large blocks in smooth areas and small blocks in detailed ones, this structure reduces blocking artifacts while coding picture-detail regions more efficiently.

Figure 2. With HEVC, video frames are divided into a hierarchical quad-tree coding structure that uses coding units, prediction units and transform units.
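The recursive split in Figure 2 can be sketched in a few lines of Python. The function name `split_ctb` and the `should_split` callback are hypothetical; the callback stands in for the encoder's decision, which a real encoder would typically base on rate-distortion cost rather than on a fixed rule.

```python
from typing import Callable, List, Tuple

Block = Tuple[int, int, int]  # (x, y, size) of a square CU


def split_ctb(x: int, y: int, size: int, min_cu: int,
              should_split: Callable[[int, int, int], bool]) -> List[Block]:
    """Return the leaf CUs of the quad tree rooted at the block (x, y, size)."""
    if size > min_cu and should_split(x, y, size):
        half = size // 2
        leaves: List[Block] = []
        # Visit the four quadrants in z-order: top-left, top-right,
        # bottom-left, bottom-right, recursing into each one.
        for dy in (0, half):
            for dx in (0, half):
                leaves += split_ctb(x + dx, y + dy, half, min_cu, should_split)
        return leaves
    # Leaf: this block is coded as a single CU.
    return [(x, y, size)]


# Hypothetical rule: always split the 64 x 64 root, then keep splitting only
# inside the top-left 32 x 32 quadrant (for example, a detailed region).
detail_rule = lambda x, y, size: size == 64 or (x < 32 and y < 32)
cus = split_ctb(0, 0, 64, 8, detail_rule)
```

With this rule the tree has different depths in different places: sixteen 8 x 8 CUs in the detailed top-left corner and three untouched 32 x 32 CUs elsewhere, 19 CUs in all.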

MPEG-2 employs fixed-size blocks for transform coding and motion compensation. AVC went beyond this by allowing multiple block sizes. HEVC divides the picture into coding tree blocks of up to 64 x 64 pixels, which can be hierarchically subdivided into coding units of 64 x 64, 32 x 32, 16 x 16 or 8 x 8 pixels; prediction and transform blocks can be split further, all the way down to 4 x 4. In addition, an internal bit-depth increase allows video pictures to be processed internally at a color depth higher than eight bits.
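A quick calculation shows how much coarser the top level of the HEVC grid can be than a macroblock grid. The sketch below (the function name `ctb_grid` is hypothetical) counts the blocks needed to cover a picture, with partial blocks at the right and bottom edges counted as whole ones.

```python
from typing import Tuple


def ctb_grid(width: int, height: int, block_size: int) -> Tuple[int, int, int]:
    """Columns, rows and total number of square blocks covering a picture."""
    cols = -(-width // block_size)   # integer ceiling division
    rows = -(-height // block_size)
    return cols, rows, cols * rows


print(ctb_grid(1920, 1080, 64))  # 64 x 64 coding tree blocks
print(ctb_grid(1920, 1080, 16))  # 16 x 16 blocks, the MPEG-2/AVC macroblock size
```

For a 1920 x 1080 picture, 64 x 64 coding tree blocks give a 30 x 17 grid of 510 blocks, versus 8,160 blocks on a 16 x 16 grid; the quad tree then refines only where the content needs it.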


HEVC also specifies 33 angular intra-prediction directions, which reconstruct directional structures, as well as planar and DC modes, which reconstruct smooth regions in a way that hides artifacts better.
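The two non-directional modes are simple enough to sketch. The Python below follows the planar and DC formulas as they appear in the final H.265 text, to the best of our knowledge, but omits the reference-sample smoothing and DC edge filtering the standard also applies; the function names are hypothetical. Here `top` and `left` hold the N reconstructed samples above and to the left of an N x N block, and `top_right`/`bottom_left` are the single neighbors just beyond them.

```python
from typing import List


def intra_dc(top: List[int], left: List[int]) -> List[List[int]]:
    """DC mode: fill the block with the rounded mean of the 2N neighbors."""
    n = len(top)                 # block size N (a power of two)
    shift = n.bit_length()       # log2(N) + 1
    dc = (sum(top) + sum(left) + n) >> shift
    return [[dc] * n for _ in range(n)]


def intra_planar(top: List[int], left: List[int],
                 top_right: int, bottom_left: int) -> List[List[int]]:
    """Planar mode: average of a horizontal and a vertical linear interpolation."""
    n = len(top)
    shift = n.bit_length()       # log2(N) + 1
    return [[((n - 1 - x) * left[y] + (x + 1) * top_right
              + (n - 1 - y) * top[x] + (y + 1) * bottom_left + n) >> shift
             for x in range(n)]
            for y in range(n)]
```

With all neighbors equal, both modes reproduce a flat block; with only a bright top-right neighbor, planar produces a smooth left-to-right ramp instead of a blocky step, which is why it suits smooth regions.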

Parallel processing

The picture can be divided into a grid of rectangular tiles that can be decoded independently, with new signaling that allows multi-threaded decoding. HEVC also supports a second parallel decoding structure called Wavefront Parallel Processing (WPP). With WPP, the picture is partitioned into rows of treeblocks, and each row can start decoding once the row above it is two treeblocks ahead, so decoding and prediction can still use data from neighboring rows. This allows rows of treeblocks to be decoded in parallel, with up to as many processors as the picture contains treeblock rows. The staggered start of processing looks like a wave front when represented graphically, hence the name.
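The wavefront can be modeled with a small dynamic program. This sketch assumes every treeblock takes one time unit and that a treeblock may start once its left neighbor and the treeblock above and to its right are finished, which produces the two-treeblock lag; the function name `wpp_schedule` is hypothetical, and real decoders also contend with uneven treeblock costs and memory bandwidth.

```python
from typing import List


def wpp_schedule(width_ctbs: int, height_ctbs: int) -> List[List[int]]:
    """Earliest start step of each treeblock under the simple WPP model."""
    t = [[0] * width_ctbs for _ in range(height_ctbs)]
    for r in range(height_ctbs):
        for c in range(width_ctbs):
            deps = []
            if c > 0:
                deps.append(t[r][c - 1] + 1)          # left neighbor finished
            if r > 0:
                above_right = min(c + 1, width_ctbs - 1)
                deps.append(t[r - 1][above_right] + 1)  # above-right finished
            t[r][c] = max(deps, default=0)
    return t


schedule = wpp_schedule(30, 17)       # 1080p with 64 x 64 treeblocks
total_steps = schedule[-1][-1] + 1
```

In this model, treeblock (row r, column c) starts at step c + 2r, so the 30 x 17 grid of a 1080p picture finishes in 62 steps rather than 510 sequential ones. That figure is an idealized upper bound on the speed-up, not a measured result.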

Four different inverse DCT transform sizes are specified with HEVC: 4 x 4, 8 x 8, 16 x 16 and 32 x 32. Additionally, 4 x 4 intra-coded luma blocks are transformed using a new Discrete Sine Transform (DST). Unlike AVC, the inverse transform processes columns first, followed by rows, and transform units can be hierarchically split (in a quad tree) all the way down to 4 x 4 regions. This allows encoders to adaptively assign transform blocks that minimize the occurrence of high-frequency coefficients. The availability of different transform types and sizes adds efficiency while reducing blocking artifacts.
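The column-then-row order can be seen in a 4 x 4 integer inverse transform. The matrices below are the 4 x 4 DCT-like and DST core matrices as we understand them from the final H.265 text (each row is one basis function), and the intermediate shift of 7 and final shift of 20 minus the bit depth follow the same text; treat this as a sketch to check against the specification, not a conformant decoder. The function name `inverse_4x4` is hypothetical.

```python
from typing import List

Matrix = List[List[int]]

DCT4: Matrix = [
    [64, 64, 64, 64],
    [83, 36, -36, -83],
    [64, -64, -64, 64],
    [36, -83, 83, -36],
]

DST4: Matrix = [  # used for 4 x 4 intra luma blocks
    [29, 55, 74, 84],
    [74, 74, 0, -74],
    [84, -29, -74, 55],
    [55, -84, 74, -29],
]


def clip16(v: int) -> int:
    """Clip an intermediate value to the signed 16-bit range."""
    return max(-32768, min(32767, v))


def inverse_4x4(coeffs: Matrix, m: Matrix, bit_depth: int = 8) -> Matrix:
    """Two-stage integer inverse transform: columns (vertical) first, then rows."""
    n = 4
    # Stage 1: 1-D inverse down each column, rounded right shift by 7, clipped.
    tmp = [[clip16((sum(m[k][i] * coeffs[k][c] for k in range(n)) + 64) >> 7)
            for c in range(n)]
           for i in range(n)]
    # Stage 2: 1-D inverse along each row, rounded right shift by 20 - bit_depth.
    shift = 20 - bit_depth
    rnd = 1 << (shift - 1)
    return [[(sum(m[k][j] * tmp[r][k] for k in range(n)) + rnd) >> shift
             for j in range(n)]
            for r in range(n)]
```

A lone DC coefficient of 64 passed through `DCT4` reconstructs a flat residual block of 1s, a handy sanity check on the two rounding shifts.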

A new de-blocking filter, similar to that of AVC, operates only on edges that lie on an 8 x 8 sample grid. Furthermore, all vertical edges of the entire picture are filtered first, followed by the horizontal edges. After the de-blocking filter, HEVC provides two new optional filters: Sample Adaptive Offset (SAO) and Adaptive Loop Filter (ALF). In the SAO filter, the entire picture is treated as a hierarchical quad tree. Within each sub-quadrant of the quad tree, the encoder can enable the filter by transmitting offset values that correspond either to the intensity band of the pixel values (band offset) or to each pixel's relationship to its neighbors (edge offset). ALF is designed to minimize the coding error of the decoded frame relative to the original, yielding a more faithful reproduction.
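The two SAO offset types can be sketched as follows. The edge-offset classification (local minimum, two kinds of corner, local maximum, or no offset) and the 32-band split for band offset follow the final H.265 text as we understand it; the function names and the one-row, horizontal-only simplification are assumptions for illustration. Comparisons use the de-blocked samples before SAO, which is why the code reads neighbors from `row` rather than `out`.

```python
from typing import List


def sign(v: int) -> int:
    return (v > 0) - (v < 0)


def edge_category(a: int, c: int, b: int) -> int:
    """Edge-offset category of sample c between neighbors a and b.

    0 = no offset, 1 = local minimum, 2 = concave corner,
    3 = convex corner, 4 = local maximum.
    """
    idx = 2 + sign(c - a) + sign(c - b)
    # Remap so that flat or monotonic samples (idx == 2) get category 0.
    return {0: 1, 1: 2, 2: 0, 3: 3, 4: 4}[idx]


def sao_edge_offset_row(row: List[int], offsets: List[int],
                        bit_depth: int = 8) -> List[int]:
    """Apply horizontal-class edge offset to one row; end samples are unchanged.

    offsets[k] is the offset for category k (offsets[0] is ignored).
    """
    max_val = (1 << bit_depth) - 1
    out = list(row)
    for i in range(1, len(row) - 1):
        cat = edge_category(row[i - 1], row[i], row[i + 1])
        if cat:
            out[i] = max(0, min(max_val, row[i] + offsets[cat]))
    return out


def band_index(sample: int, bit_depth: int = 8) -> int:
    """Band offset: which of 32 equal intensity bands a sample falls into."""
    return sample >> (bit_depth - 5)
```

Positive offsets for categories 1 and 2 and negative ones for 3 and 4 pull local dips up and local peaks down, which is how edge offset smooths ringing around edges without blurring the edge itself.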

Advanced motion compensation

Motion information is coded with two new methods, Advanced Motion Vector Prediction (AMVP) and Merge Mode, both of which choose from indexed lists of spatially neighboring and temporal candidates. AMVP uses the chosen candidate as a predictor and transmits the motion-vector difference, whereas Merge Mode copies the complete motion information of the chosen neighboring block, so no motion-vector difference is sent. For motion-compensated prediction, luma is interpolated to quarter-pixel accuracy using a high-precision 8-tap filter, and chroma is interpolated to one-eighth-pixel accuracy using a 4-tap filter. A motion-compensated region can be either single- or bidirectionally predicted (one or two motion vectors and reference frames), and each direction can be individually weighted.
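Fractional-pixel luma interpolation amounts to an 8-tap weighted sum. The coefficients below are the luma filter taps for the quarter-, half- and three-quarter-sample positions as we understand them from the final H.265 text (each set sums to 64). The sketch covers only the simplest case, 8-bit video with uni-directional prediction and a horizontal-only fractional offset, where the result reduces to a rounded shift by 6; real decoders filter separably at higher intermediate precision. The function name `interp_luma_row` is hypothetical, and edge padding by clamping is an assumption.

```python
from typing import Dict, List

LUMA_TAPS: Dict[int, List[int]] = {
    1: [-1, 4, -10, 58, 17, -5, 1, 0],    # quarter-sample position
    2: [-1, 4, -11, 40, 40, -11, 4, -1],  # half-sample position
    3: [0, 1, -5, 17, 58, -10, 4, -1],    # three-quarter-sample position
}


def interp_luma_row(row: List[int], x: int, frac: int) -> int:
    """Predict the 8-bit luma value at position x + frac/4 along one row."""
    if frac == 0:
        return row[x]                      # integer position: no filtering
    taps = LUMA_TAPS[frac]
    acc = 0
    # The 8 taps cover integer positions x-3 .. x+4; out-of-range
    # positions are clamped to the row ends (simple edge padding).
    for k, t in enumerate(taps):
        pos = min(max(x - 3 + k, 0), len(row) - 1)
        acc += t * row[pos]
    # Taps sum to 64: round, divide by 64 and clip to the 8-bit range.
    return max(0, min(255, (acc + 32) >> 6))


ramp = [10 * i for i in range(11)]   # 0, 10, 20, ..., 100
half_pel = interp_luma_row(ramp, 5, 2)
```

On a linear ramp the half-sample value between 50 and 60 comes out as 55, showing that the long filter reproduces smooth gradients accurately while its negative side taps keep edges sharper than simple averaging would.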

The JCT-VC team is also studying various new tools for adaptive quantization. After quantization, the last lossy coding step, lossless Context-Adaptive Binary Arithmetic Coding (CABAC) is carried out; it is similar to AVC’s CABAC but has been rearranged to allow simpler and faster hardware decoding. Currently, the low-complexity entropy-coding technique called Context-Adaptive Variable-Length Coding (CAVLC), which was available as an option in AVC, is not available in HEVC.
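Adaptive quantization generally works by varying the quantization parameter (QP) from block to block. As a sketch, the commonly cited relation shared by AVC and HEVC, in which the quantizer step size doubles for every increase of 6 in QP, is shown below. It approximates the integer scaling tables the standards actually use, and the function name `qstep` is hypothetical.

```python
def qstep(qp: int) -> float:
    """Approximate quantizer step size for a QP (step doubles every 6 QP)."""
    return 2 ** ((qp - 4) / 6)


# Lowering QP by 6 in a visually sensitive block halves its step size,
# spending more bits there; raising it by 6 doubles the step elsewhere.
fine, coarse = qstep(22), qstep(28)
```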

With all of these improvements comes a price: both encoding and decoding are significantly more complex, so we can expect more expensive processors on both sides. On the decoding side, this means more silicon or more software processing, either of which requires faster chips and higher power consumption, though Moore’s Law should help. For portable devices, it will be an interesting challenge to realize the efficiency benefits of HEVC in devices that demand ever-increasing amounts of video content.

—Aldo Cugnini is a consultant in the digital television industry.