What Is This 'Broadcast Quality' Thing Anyway? Part I

ALEXANDRIA, VA.—If you were at the Hollywood SMPTE Annual Technical Conference and Exhibition last October, you might have sat in on a paper authored by the CMO and co-founder of Archmedia Technology, Josef Marc. The paper (“What Does ‘Broadcast Quality’ Mean Now?”) examined changes in the industry during the past decade or so, especially the impact of UHD, HDR, expanded color gamut and the like, and described how evaluation of imagery can be highly subjective (“color-challenged people may be more sensitive to compression artifacts than people with normal color sensitivity, memory seems to improve image quality, etc.”). Marc noted that this also carries over into evaluation of audio.

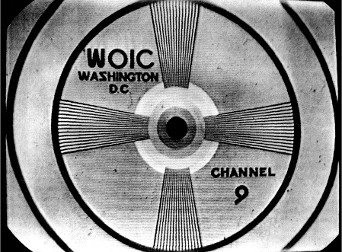

This off-air photograph of a 1940s station test pattern was included in its FCC “proof-of-performance” documentation. Under careful examination, a number of defects and shortcomings are evident, including telecine camera tube shading issues, less-than-perfect scan linearity and sweep adjustments, and a slide that’s quite dirty. However, the station obviously prided itself on offering a “broadcast quality” signal to viewers.

After hearing the presentation, I’ve been pondering the paper’s implications, especially the statement in its abstract that “‘broadcast quality’ used to be a meaningful standard for communicating a quality level sufficient to satisfy the broadest range of requirements.”

Now I’ve been involved in the broadcasting business one way or another since I was a teenager (a long time ago), and the term “broadcast quality” was one of the first things (if not the first) that I tucked away in my ever-growing list of “buzz words.” It was drilled into me by upper management, as I’m sure it was into you, that our product always had to be of “broadcast quality.” (And no, this wasn’t a reference to bleeping any of George Carlin’s forbidden words.)

NICE BUT VAGUE

So what really defines “broadcast quality” and has such a thing ever really existed?

Well, when you think it over, it’s right up there with such terms as “ample” or “adequate.” They sound nice, but are vague and their meaning can vary with circumstances. Yes, the FCC had its set of rules and regulations governing TV and radio station technical quality. Transporters of network signals such as AT&T Long Lines had their own system operating standards, and I recall (not so fondly) more than once conducting the lengthy SSOG (Satellite Systems Operating Guide) satellite path “proof of performance” to ensure that everything met spec. Now don’t get me wrong, I’m not against standards and specifications. No industry could exist without them. However, as evidenced by lots of practical experience, nothing is really set in stone.

In the 1960s, if you asked a radio station listener about “broadcast quality,” he or she might tell you that the station’s music sounded better than their phonograph records at home. There was probably a measure of truth here, as larger stations obtained multiple copies of the “top hits” from record pluggers and promptly replaced any disks that were beginning to sound worn or had gotten scratched. (Also, the station may have added just a little “processing” in its audio chain.) However, if you asked the owner of some expensive “hi-fi” gear (something of a status symbol in the ’60s), that person would likely have an entirely different (and not particularly flattering) definition of “broadcast quality.” They would likely point out that the limited audio passband, turntable rumble, and residual noise and distortion in the transmission process resulted in audio that was inferior to what their upper-end home hi-fi systems could deliver.

Ditto video. When affordable video cameras and home recording finally became available, folks were thrilled that they could preserve their children’s christenings, birthday parties, graduation ceremonies and the like for posterity, or record their favorite network television shows for future viewing. To hear owners of this technology talk, the video quality delivered by these devices was really wonderful, and curiously (or maybe not), the majority of consumers bought into the format that produced the poorest-quality video. On the other hand, we smug broadcasters looked down our noses disdainfully at the so-called “VHS quality.” We had “broadcast quality” quad and 1-inch video recorders. Anything else was, well, not worthy of consideration. We didn’t really talk much about 3/4-inch U-matic video recording, with 300-line or so resolution and really horrible color performance (remember those “plastic faces” that you saw on just about every newscast?). However, U-matic machines were sold as “broadcast” VTRs by several companies and were used by most broadcasters for ENG until something better came along.

A REALISTIC AND UNDERSTANDING FCC

Some of us may also remember the FCC’s crackdown on stations for violating established blanking intervals when U-matic machines began to be deployed in large numbers as the ENG movement took off in the 1970s. (The resulting video wasn’t exactly “broadcast quality” according to FCC regs, but there were few viewer complaints.)

After handing out a lot of “pink slips,” the Commission finally backed off, saying “we are persuaded that the strict enforcement of our blanking interval standards tends to work a severe hardship on station licensees and, to some extent, deprives the public of some otherwise valuable programming.”

Translated, this meant that “even though you’re supposed to be maintaining ‘broadcast quality’ signals, we’ll look the other way, as it’s more important to get local news video on the air than to quibble about a line or two of vertical blanking or a few microseconds of horizontal.”

It’s true; neither stations nor the Commission caught any flak for airing slightly less than “broadcast quality” signals.

In the final part of this two-part series, we’ll look at how new and emerging monitoring technologies have altered the meaning of “broadcast quality” in the 21st century.

James O'Neal is a retired broadcast engineer who worked in that field for some 37 years before joining Radio World’s sister publication, TV Technology, where he served as technology editor for nearly a decade. He is a regular contributor to both publications, as well as a number of others. He can be reached via TV Technology.