Mixed Results for National EAS Test

WASHINGTON—On Wednesday, Nov. 9 at 2 p.m. EST, the Federal Emergency Management Agency carried out a national test of the Emergency Alert System (EAS). It was the first nationwide EAS test since the program's inception 14 years ago. Prior to Nov. 9, EAS typically had been used by individual states to notify citizens of approaching severe weather such as tornadoes and flash floods.

The November test was intended to evaluate the President's ability to address the entire nation with an audio and text message via all broadcast outlets. While the system clearly would be of paramount importance should there ever be a national catastrophe, the test unfortunately could hardly be called a success.

Across the United States, viewers and listeners experienced what could be politely termed "mixed" results. While some received the correct message at the correct time, others received video or audio only, others received the message late, and some received no message at all.

Just as the results of the test were varied, so was the news coverage of how it unfolded. NBC News' Brian Williams spent only about 10 seconds explaining that the test took place with mixed results, while ABC devoted a package to explaining how and why the event went wrong.

Broadcast engineers were also quick to join the dialogue, wasting no time in analyzing what happened. From Hawaii to Maine, the industry began to weigh in on the successes and failures of the test and even the system itself.

DECODING WHAT HAPPENED

At WRAL in Raleigh, N.C., the EAS system fired off at 2:00, while several of the Capitol Broadcasting group stations' systems waited until 2:03, according to Pete Sockett, director of engineering operations for the CBS affiliate. "One station's EAS receiver rebooted itself shortly after beginning the message," he said.

WRAL's engineering department is known for its use of cutting-edge technology, and this event sent the engineering staff looking for answers. "We dug a little bit deeper and the first thing we found was that even though the test came down right at 14:00 EDT, the header of the message had a time stamp of 14:03," Sockett explained.

The discrepancy led the engineering team to analyze the group's EAS equipment, which was a mix of newer Digital Alert Systems DASDEC models and older Sage Alerting Systems ENDEC boxes. Depending on which version of software they were running and how the time settings were preselected, each unit acted differently.

A new EAS IPAWS-CAP-compatible encoder/decoder at Raycom-owned WMC, the Memphis, Tenn., NBC affiliate.

"Some units have the ability to be configured to follow strict time rules or loose time rules," said Sockett. WRAL decided early on to implement loose time rules because its engineers felt that the disparate clocks throughout the EAS could disagree. The units that were set to loose time rules fired off their alerts at 14:00 rather than 14:03.

The Raycom Media group, which has stations from Hawaii to Virginia, found that the 47 stations in its group had slightly different issues. "The older generation EAS units acted immediately because they do not have the ability to delay a message," explained Raycom Vice President and Chief Technology Officer David Folsom. His station group primarily uses newer DASDEC units, but it has kept some older units in service as backups. "The timestamp located in the metadata of the alert message has the ability to tell the newer decoders to hold a message until a specified time and then send it," Folsom said. "Most of our newer boxes played the message at three minutes past." A bigger problem, however, was that not all of them received the message, Folsom added.
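The difference between the two settings can be sketched in a few lines of Python. This is only an illustrative model of the behavior the engineers describe, not any vendor's actual logic; the function name `air_time` and the `strict_time` flag are hypothetical.

```python
from datetime import time

def air_time(received_at, header_time, strict_time):
    """Return the time a decoder would air an alert (illustrative model only).

    strict_time=True  : hold the alert until the time stamp in its header.
    strict_time=False : air it on receipt (loose rules, or an older unit
                        that cannot delay a message).
    """
    if strict_time and header_time > received_at:
        return header_time
    return received_at

received = time(14, 0)   # the test arrived at 14:00
header = time(14, 3)     # but its header carried a 14:03 time stamp

print(air_time(received, header, strict_time=True))   # 14:03:00
print(air_time(received, header, strict_time=False))  # 14:00:00
```

Under these assumptions, two correctly working units fed the same message can air it three minutes apart, which matches what the stations reported.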

INCONSISTENT RESULTS

Some state EAS directors reported that the stations depending on National Public Radio (NPR) for their message trigger never got the message. Because NPR is a primary distributor of the EAS message, the loss of a proper trigger signal from an NPR broadcaster meant many stations never ran the alert. "Our station in Alabama, which depends on Alabama Public Television for its signal, did not trigger," said Folsom.

"There are weak links in any daisy chain system," Folsom said, adding that he has two concerns with the daisy chain approach currently used to distribute the signal. The first is that stations downstream can be left out if a primary has any kind of issue. "If you have 10 participants and any one of them does not play the message, then that is where it will stop," he said.

Folsom's second concern is the "trickle down" approach, which can delay the message at the farthest ends of the chains. Overall, Folsom feels that the system is more complicated than it needs to be, with too many points of failure.
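Folsom's first concern can be illustrated with a short Python sketch. It is a simplified model under stated assumptions: a single relay chain in which each station is fed only by the one before it, a hypothetical function named `stations_airing`, and a made-up 95 percent per-station reliability figure used only to show how the odds compound.

```python
def stations_airing(relays_ok):
    """Count the stations that air the alert before the chain breaks.

    relays_ok[i] is True if station i airs the alert and passes it on.
    Hypothetical model: one chain, each station fed only by its predecessor.
    """
    count = 0
    for ok in relays_ok:
        if not ok:
            break  # this station stays silent, so every station after it is cut off
        count += 1
    return count

# Ten participants; the fourth one does not play the message.
chain = [True, True, True, False, True, True, True, True, True, True]
print(stations_airing(chain))  # 3

# Assumed figure, for illustration only: each station relays correctly 95% of the time.
per_station = 0.95
print(round(per_station ** 10, 2))  # 0.6
```

In this toy model a single failure at the fourth station silences the six behind it, and even with each link working 95 percent of the time, the tenth station in the chain gets the alert only about six times in ten.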

The quality of the message was also a big issue for the Raycom station group. Almost a quarter of its stations had garbled audio or no audio at all. "Of our 47 stations, three quarters had problems, the least serious was audio issues and the worst was no signal from the upstream primary," Folsom said.

"It seems that most stations either aired what sounded like doubled audio or had their box mute upon receiving the second set of digital tones under/over the initial announcement," said Scott Mason, CPBE, CBNT, education chairman for the Society of Broadcast Engineers and director of engineering for CBS Radio West Coast. He noted that the SBE received varied test results from its membership nationwide, with some reporting areas where issues did arise.

"Certainly there were no A's here, however numerous stations did prove their equipment was working properly and passed the information that was delivered to its input," Mason explained.

KEEP TESTING

Lee McPherson, director of engineering for KTVU, the Cox-owned Fox affiliate in San Francisco, believes that station operator inexperience is one of EAS' main problems. "Everybody is rusty on this system, it just doesn't get used much, so we need more practice," he said. McPherson feels the test was a success because it showed where the weak links in the system exist. His station's DASDEC system went off at 14:03. "People need to properly set their configurations and be aware of what they are," he added.

"This nationwide test served the purpose for which it was intended—to identify gaps and generate a comprehensive set of data to help strengthen the ability to communicate during real emergencies," wrote Damon Penn, FEMA assistant administrator for National Continuity Programs, in a blog on the agency's website just hours after the test. But Penn also conceded that although much of the country did get the message, many others did not see it or hear it.

From an engineer's standpoint, "I think it was very successful as tests go," said Sockett. "This is why you have tests." Sockett explains that when a broadcast engineer puts in a new piece of equipment, he tests it. "We have never done a presidential test [of the EAS], and we probably should have done one long ago," he said, adding that he feels the success of the test lies in the fact that it shows where the system's shortfalls are and gives the government a chance to fix it before the system is really needed.

Raycom's Folsom believes that the test demonstrates that more work needs to be done to figure out the proper distribution network. More sophisticated and robust ways of distributing alerts to more devices need to be developed.

In Michigan they are already making use of a digital delivery system which uses both satellite and the Internet for EAS alerts. The goal is for emergency communications to reach and inform residents, no matter what kind of media devices they are using. This is a goal that should be the directive for all involved in the next EAS test. The technology to improve the system is available and there is a willingness among broadcasters to retest the system until they are certain it works properly for everyone.

EAS: One Broadcaster's Experience

by Joey Gill

PADUCAH, KY.—When the FCC announced its first "nationwide" EAS test for Nov. 9, I had mixed feelings. As a chief engineer, I thought, "oh great, more paperwork," but on the other hand, it seemed like a good idea: It hadn't been done before.

In the weeks before the test, I tried to sift through all of the FCC press releases, industry news, and professional publications to learn what I needed to know as an NP (national participating) station. Following the instructions in the National EAS Test Handbook, I filed the first of three required reports on Nov. 3 (report two was required on Nov. 9, while report three is due Dec. 27, 2011).

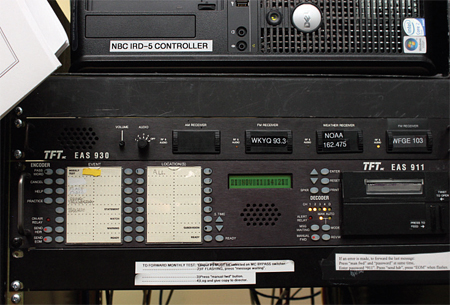

WPSD's EAS setup includes a TFT 930 receiver and EAS 911 unit.

END OF THE CHAIN

WPSD-TV sits in extreme western Kentucky, and is at the end of the state EAS chain. The PEP (Primary Entry Point) for national alerts into Kentucky is WLW, Cincinnati, which is monitored by WHAS-AM, the SP-1 (State Primary) in Louisville, Ky. At WPSD, we monitor two FM radio stations, WFGE and WKYQ, along with the National Weather Service (just for fun).

Our EAS toolset includes a TFT model 930 receiver and a TFT model 911 EAS box (vintage 1996) for reception/detection. The 911 box feeds a large Beta Brite display for MC operator notification, and also provides video image data and digital audio out to our Evertz HD9725LGA keyer (also known as "NBC NameDropper"). When an EAS event is triggered, the Evertz keyer generates the message "crawl" and embeds emergency audio onto WPSD's regular programming.

Like most broadcasters, we test our EAS equipment several times every week and run a required monthly test (RMT) each month. WPSD's TFT 911 unit was upgraded and serviced at the factory in 2010 and has been in top working order ever since. I fear no test, as I know our equipment works… (I think). According to the National EAS Test Handbook, the nationwide test could have been forwarded in either "Manual" mode or "Automatic" mode.

Because our EAS control point (master control) is monitored 24/7, we keep the unit in "Manual," so we were originally going to do a "Manual" forward to keep things familiar. However, according to our interpretation of Step 4 in the National EAS Test Handbook, WPSD would be required to interrupt programming (completely). In order to not disrupt normal programming any more than necessary, we decided to operate in "Automatic" forward on game-day.

THIS IS ONLY A TEST

A few minutes before 1 p.m. (Central) on the day of the test, the operations manager and I joined the duty operator in master control. You could cut the tension with a knife: would we receive the test? Would we be able to forward the test? To add more pressure, I had just been assigned to write this story... would our potential failure be the muse of the entire broadcast industry? With much less sincerity than the "reentry" scene from the "Apollo 13" movie, we watched the clock click off the seconds. At 1:03:42, the TFT 911 unit came alive! The unit activated the BetaBrite display, and the "Automatic" forward did function. We were transmitting the familiar tones, and the printer on the 911 unit was already spitting out its familiar "white tongue" with header information. Near the top of the WPSD broadcast screen, the white text crawled along on a red background: "A National Emergency Action Notification has been issued for the following counties/area: District of Columbia…."

After about 15 seconds, our MC operator had started our local crawl along the bottom portion of the screen with a dark gray background: "This is a test, this is only a test." From the beginning to end, the "National" part of the crawl lasted about 25 seconds, while the local crawl continued until the EOM tone, about 1:02 after the event started.

While all data and text parameters worked flawlessly, the audio portion of the test was less than pristine. The message was intelligible, but the level was low and there was much distortion. I was able to hear "This is a test of the Emergency Alert System, this is only a test, the message you are hearing..." As far as I was concerned, I could understand the message. I did note, however, that our system was not set up to properly attenuate the regular programming audio, as I heard it playing as well. That is a function of the Evertz unit, and we will be addressing that issue. After the test I went to the FCC's website and filed report number two. Over the next few days, my staff and I discussed the test, and I gathered information that I expected to need to file the last report. I was prepared to give a detailed account of the test, including all comments and observations.

When I logged onto the filing site, I was surprised to find just a few questions to answer, including "Did we receive the test?", "Which facility did we receive the test from?" and "Were we able to forward the test?" On Nov. 15, I filed the third and final report. I guess that with the large number of stations responding, simpler is better. In short, we did receive and pass through the test. I wonder whether, to test audio quality next time, the audible message should include some "authentication" words that would support a pass/fail grade on the day-of report. While many pundits jumped early on to call the test a flop, I consider the test a resounding success, even in the states where the test apparently failed. The purpose was to measure the health of the system, and it did just that.