Current Events Validate Virtualized Playout

“COVID provided a business imperative that turned out to be a proving point.”


LONDON—The COVID-19 crisis forced broadcasters and facilities operators to take decisive action so they could carry on activities by having technical-creative personnel work on projects from home.

In addition to audio and video editing, visual effects and color grading, aspects of live production have also been carried out remotely during lockdown. Less obvious, however, is that playout, the all-important but largely behind-the-scenes task of getting channels to air, has also been controlled remotely, off-premises.

The fact that channels have stayed on air would seem to validate the concept of virtualized playout but, as many of the leading manufacturers in this market observe, it was more a confirmation of what the broadcast market had been realizing already.

“You don’t need a disaster to prove a concept,” comments Adam Leah, creative director at nxtedition, a Sweden-based developer of broadcast operations software. “People hadn’t thought about VPN [virtual private network] infrastructures with fat enough pipes but once everyone got over that idea, sending everyone home was simple.”

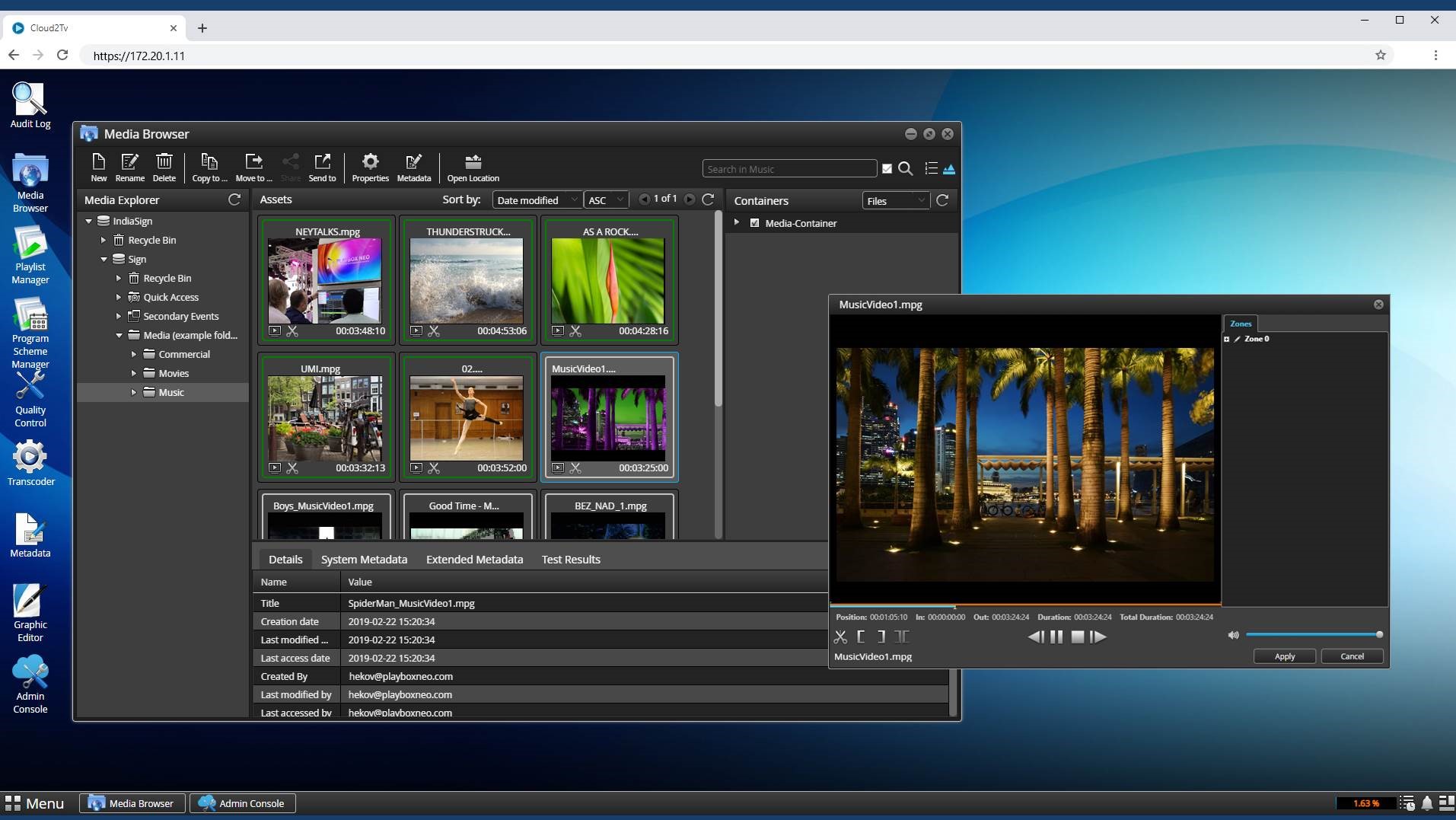

Van Duke, U.S. director of operations for PlayBox Neo, sees the pandemic as a “side issue” to what had been happening already.

“Virtual control, network-attached storage and remote working have been part of the broadcast industry for years,” he says. “Many broadcasters delegate MCR [master control room] operations to third-party playout service providers, typically in another city, another state or even another time zone.”

Steve Reynolds, president of Imagine Communications, observes that in responding to COVID-19, broadcasters reduced the number of people at their broadcast centers, retaining only essential staff.


“Operational jobs were being done remotely using cloud and IP tools,” he said. “These were all things that were going to happen anyway over time but COVID-19 provided a business imperative that turned out to be a proving point. It’s shown how everything can work and there is no turning back because it’s changed the way the industry operates.”

LEGITIMIZED THE CLOUD

While agreeing that the move to virtualization and the cloud would have happened without the pandemic, James Gilbert, chief executive of Pixel Power, thinks other factors also need to be considered.

“Remote working is not wholly dependent on virtualization,” he said. “It’s more about the capabilities of the playout system itself. Broadcasters with old automation systems that couldn’t be operated remotely had projects accelerated but probably people at the highest level had been thinking about working off-premises and the cloud from a business perspective already. The situation this year has legitimised the concept of the cloud but it won’t necessarily accelerate adoption.”

However pressing the need to rethink broadcast distribution operations after COVID-19, Gilbert adds, replacing traditional playout installations is a big project. There are also different ways to approach the move away from the traditional playout center, with several automation systems housed in physical equipment racks, toward a decentralized operation based on software-defined systems.

While the cloud is now regarded as the obvious way to remove the overhead of such rack-based installations, it is not always a key component of a virtualized playout system, according to Jan Weigner, president and chief technology officer of Cinegy.

“There is a difference between the cloud and virtualization,” he said. “The front-end can run on a thin client or a PC using a web browser, with the playout engine in a data center. Of course it could also be in the cloud but where the playout element is makes no difference. It could be in the same building or 100 miles away or 1000 miles away and you wouldn’t know the difference.”

WHAT ABOUT MICROSERVICES?

Harmonic emphasizes the concept of microservices over the blanket term of virtualization. Its microservices include ingest, search functions, branding, graphics, scheduling and live sources based on SMPTE ST 2110 or TSoIP (Transport Stream over IP).

“Software-based playout can be built up using microservices and orchestration,” explains Eric Gallier, vice president of video solutions at Harmonic. “We are seeing a shift to cloud native working, either in the public or private cloud. But as well as the software running on Microsoft Azure or Amazon native cloud platforms, it can also run on Dell, Hewlett Packard or Intel servers.”

Orchestration, observes Bea Alonso, director of product marketing at Dalet, is important for supporting hybrid infrastructures.

“Many facilities need to continue paying off existing investments and so the ability to orchestrate on-premises hardware and software tools with cloud deployments is key, at least in the medium term,” she says. “Much of the current infrastructure is still hardware-based, so there will be a transition where hybrid approaches will drive the evolution to fully virtualized playout.”
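To make the hybrid idea concrete, the following is a minimal Python sketch of placement logic an orchestrator might apply. It assumes a deliberately simple policy of our own, not Dalet's or any vendor's: fill already-owned on-premises playout capacity first, then overflow additional channels to elastic cloud capacity. The `Site` class, the `place_channels` function and the capacity figures are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Site:
    """A pool of playout capacity (hypothetical model, not a vendor API)."""
    name: str
    capacity: Optional[int]  # max channels; None means elastic, e.g. public cloud
    channels: List[str] = field(default_factory=list)

    def has_room(self) -> bool:
        return self.capacity is None or len(self.channels) < self.capacity


def place_channels(channels: List[str], sites: List[Site]) -> Dict[str, str]:
    """Assign each channel to the first site with free capacity.

    Sites are tried in list order, so listing on-premises sites first
    means paid-for hardware is used before any cloud capacity.
    """
    placement: Dict[str, str] = {}
    for ch in channels:
        for site in sites:
            if site.has_room():
                site.channels.append(ch)
                placement[ch] = site.name
                break
        else:
            # No site accepted the channel (only possible without an elastic site).
            raise RuntimeError(f"no capacity left for {ch}")
    return placement


sites = [Site("on-prem-rack", capacity=2), Site("public-cloud", capacity=None)]
print(place_channels(["News", "Sport", "Movies", "Pop-up"], sites))
# {'News': 'on-prem-rack', 'Sport': 'on-prem-rack', 'Movies': 'public-cloud', 'Pop-up': 'public-cloud'}
```

Ordering the site list is the whole policy here; a real orchestrator would also weigh cost, latency and redundancy, which this sketch leaves out.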

Duke says demand for virtualization in playout is coming from all levels of the broadcast market, although many medium-sized operations are continuing to use traditional workflows with either SDI or IP outputs.

“At the larger end, many established broadcasters appreciate the ability to introduce additional channels without having to expand existing hardware overheads. In terms of system design, the key difference is the physical location of the primary server and any supporting servers.”

...AND MASTER CONTROL?

With more remote working and cloud storage, it is conceivable that the playout center of the future could be very different from what it is today. A big question, though, is what this means for master control.

“MCRs are still necessary but the ratio of people to channels is different because there are new techniques such as monitoring by exception,” Pixel Power’s Gilbert said. “That means the system only alerts the technical operator when something is wrong, not just when it is working. There will still be a MCR in the future but where it will be physically located is immaterial. With remote systems it could be in an operator’s spare room.”
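Monitoring by exception can be sketched as a filter over per-channel health checks: rather than an operator watching every channel, the system raises an alert only for channels whose checks fail. The `ChannelStatus` fields, the `exceptions` function and the thresholds below (black video, audio silence, frozen picture) are illustrative assumptions, not any vendor's actual probes.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ChannelStatus:
    """Latest probe readings for one playout channel (hypothetical fields)."""
    channel: str
    black_seconds: float    # continuous black video
    silence_seconds: float  # continuous audio silence
    frozen_seconds: float   # continuous frozen picture


# Illustrative limits, in seconds, before a condition counts as a fault.
THRESHOLDS = {"black_seconds": 5.0, "silence_seconds": 10.0, "frozen_seconds": 5.0}


def exceptions(statuses: List[ChannelStatus]) -> List[str]:
    """Return alert messages only for channels that breach a threshold.

    Healthy channels produce nothing, so the operator's alert list stays
    empty until something actually goes wrong.
    """
    alerts = []
    for s in statuses:
        for metric, limit in THRESHOLDS.items():
            value = getattr(s, metric)
            if value >= limit:
                alerts.append(f"{s.channel}: {metric} = {value:.0f}s (limit {limit:.0f}s)")
    return alerts


statuses = [
    ChannelStatus("Channel 1", 0.0, 0.0, 0.0),
    ChannelStatus("Channel 2", 12.0, 0.0, 0.0),
    ChannelStatus("Channel 3", 0.0, 3.0, 0.0),
]
for alert in exceptions(statuses):
    print(alert)
# Channel 2: black_seconds = 12s (limit 5s)
```

Because only faults surface, one operator can in principle oversee many more channels, which is the change in people-to-channels ratio Gilbert describes.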

Imagine’s Reynolds believes the MCR will endure for some time to come, partly because of technical practicalities and partly because of the human factor. “There will always be a need for teams to work together,” he concludes. “That includes the transmission team as much as those on the creative and production sides. Collaboration is necessary but if online, remote operations work better it will happen. At the moment not all the technology is there to enable that kind of workflow but if and when it does happen, it will be a business decision, not a technical one.”

Kevin Hilton has been writing about broadcast and new media technology for nearly 40 years. He began his career as a radio journalist but moved into magazine writing during the late 1980s, working on the staff of Pro Sound News Europe and Broadcast Systems International. Since going freelance in 1993 he has contributed interviews, reviews and features about television, film, radio and new technology for a wide range of publications.