ATSC 3.0: Where We Stand

ALEXANDRIA, VA. — Technology doesn’t stand still; instead, it evolves at an ever-growing pace. This is especially true in the area of bringing media to viewers.

In the past, video entertainment in the home was relatively simple, consisting of sitting in front of the TV to watch broadcasts when they were scheduled. Today, people want the capability to watch nearly anything they want, on any device, wherever they are—with content delivered over the air, over cable or satellite, via the Internet or locally stored. It is clear that the broadcast industry must evolve to accommodate this desire.

The current work on the ATSC 3.0 next-generation broadcast standard is meant to address this issue, using advanced transmission and video/audio coding techniques to bring new and creative services to viewers.

It has become clear that to adapt to consumers’ changing habits and demands, broadcasters need a new system that supports new viewing behaviors. Such a system must be able to evolve as consumer demands change, which requires extensibility that permits future adaptation.

“Television” is now “viewed” in a variety of ways, through a growing range of media sources and delivery platforms. Among these, the Internet has become a major source of television content for consumers. Developing a new DTV system that incorporates all these elements is no longer merely desirable; it has become essential.

Flexibility in service options is a keystone of the next-generation ATSC 3.0 DTV broadcast system, including the opportunity for terrestrial broadcasters to send hybrid content services to fixed and mobile receivers seamlessly—combining both over-the-air transmission and broadband delivery. Options such as “multiview” and “multiscreen” are also important, as is the option of choosing among standard definition, HD and Ultra HD resolutions.

The ATSC 3.0 system also must adapt to future innovations. “Scalable,” “interoperable” and “adaptable” are some of the key words that describe the general principles behind ATSC 3.0.

Although work is already underway to enhance the existing ATSC TV system with Internet compatibility and caching capability for storing programs (a backwards-compatible suite of enhancements dubbed “ATSC 2.0”), the ATSC 3.0 initiative focuses on the future needs of viewers and broadcasters. Technologies developed for ATSC 2.0 are expected to be supported in the new ATSC 3.0 system.

Because ATSC 3.0 is likely to be incompatible with current broadcast systems, it must provide improvements in performance, functionality, and efficiency significant enough to warrant implementation of a non-backwards-compatible system.

It is important to remember that the original A/53 DTV standard was launched in 1996. A number of significant developments have occurred since then, notably:

• Spectrum is becoming increasingly scarce

• Major improvements have been made in video coding efficiency

• A strong desire exists for higher-resolution images

• Audio has become more efficient and immersive

• Interactivity has become expected on the part of consumers

• Delivery paths other than broadcast have become commonplace

• Mobile devices have proliferated

• Tablets are in widespread use

Taken collectively, these developments have reshaped the television landscape, and ATSC 3.0 is being developed in response. The work on ATSC 3.0 has been divided into a number of layers, as discussed below.

PHYSICAL LAYER

The physical layer is the core transmission system that underlies any over-the-air broadcast service. It focuses on modulation and coding, emission waveforms and other common system elements.

Multiple types of TV receivers, including fixed devices (such as traditional large-screen living room and bedroom TV sets), handheld devices, vehicular screens and portable receivers are being considered in the work on ATSC 3.0. A primary goal of the ATSC 3.0 physical layer is to provide TV service to both fixed and mobile devices.

Spectral efficiency and robust service are key focus areas. Higher data rates to support new services such as Ultra HD are also a priority.

Furthermore, mechanisms for extensibility of the ATSC 3.0 system are being explored so that technologies developed in the future can be accommodated without redefining the entire system. Determining mechanisms for graceful and agile evolution is an integral part of the ATSC 3.0 work.

In addition to traditional fixed services, ATSC 3.0 is intended to provide robust mobile services to devices that move, such as phones, tablets, laptops and personal televisions. Since these devices are likely to travel across borders, it is highly desirable that the specification contain core technologies that will have broad international acceptance and enable global interoperability.

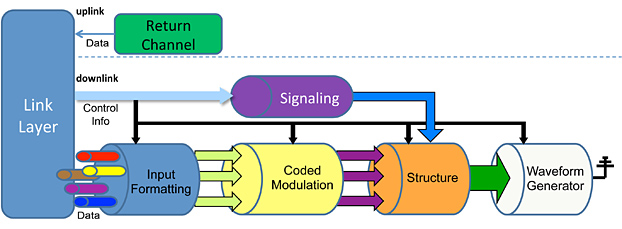

Figure 1: Physical layer skeleton architecture

The overall physical layer skeleton architecture is illustrated in Figure 1. Baseline features tentatively agreed to so far include:

• OFDM-based modulation, with a wide range of guard intervals to mitigate multipath

• LDPC-based FEC, with a wide range of code rates in two code lengths (supporting mobile and fixed)

• Wide range of constellation sizes
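Two of these baseline features translate into simple arithmetic. A guard interval absorbs echoes whose extra delay is shorter than the interval, so its duration bounds the extra path length an echo can travel. Each carrier using an M-point constellation carries log2(M) coded bits, and the code rate gives the fraction of those bits that is information. The Python sketch below illustrates both relationships. The guard-interval duration, constellation sizes and code rates it uses are hypothetical inputs, not values taken from the ATSC 3.0 specification, and it ignores pilots, signaling and other overhead.

```python
import math

SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def max_echo_path_difference_km(guard_interval_us: float) -> float:
    """Longest extra echo path (km) whose delay still fits within the guard interval."""
    if guard_interval_us < 0:
        raise ValueError("guard interval must be non-negative")
    return SPEED_OF_LIGHT_M_PER_S * guard_interval_us * 1e-6 / 1000.0


def info_bits_per_carrier(constellation_size: int, code_rate: float) -> float:
    """Information bits per carrier per OFDM symbol: log2(M) coded bits times the code rate."""
    is_power_of_two = constellation_size >= 2 and (constellation_size & (constellation_size - 1)) == 0
    if not is_power_of_two:
        raise ValueError("constellation size must be a power of two >= 2")
    if not 0.0 < code_rate <= 1.0:
        raise ValueError("code rate must be in (0, 1]")
    return math.log2(constellation_size) * code_rate


if __name__ == "__main__":
    # Hypothetical settings: one robust operating point and one high-capacity one.
    print(f"100 us guard interval: ~{max_echo_path_difference_km(100.0):.1f} km echo path")
    print(f"QPSK, rate 1/2: {info_bits_per_carrier(4, 1 / 2):.2f} bits/carrier")
    print(f"256QAM, rate 3/4: {info_bits_per_carrier(256, 3 / 4):.2f} bits/carrier")
```

A longer guard interval tolerates more distant echoes. Because the guard interval carries no new data, that tolerance costs capacity. Larger constellations and higher code rates raise bits per carrier at the cost of robustness.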

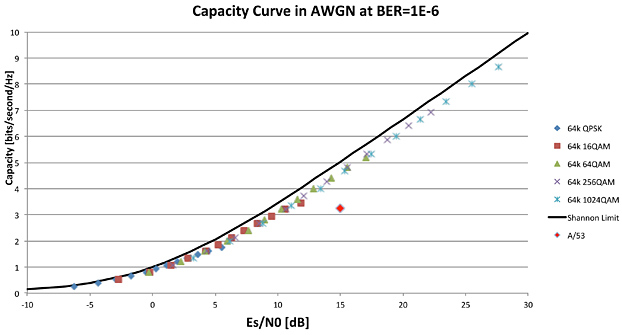

The ATSC 3.0 physical layer is expected to provide a large range of possible operating points for broadcasters, all of which are very close to the Shannon limit (the theoretical limit of how much information can be carried over a noisy channel), as illustrated in Figure 2. The basic tradeoff is between lower data capacity with more robust service and higher data capacity with less robust service, with many points in between.

Figure 2: Example capacity curve for ATSC 3.0 physical layer
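The Shannon limit mentioned above has a compact form. A channel of bandwidth B at signal-to-noise ratio SNR can carry at most C = B × log2(1 + SNR) bits per second. The sketch below computes that ceiling and its inverse: the minimum SNR at which a given spectral efficiency (bit/s/Hz) is theoretically possible. With the inverse, the "gap to Shannon" of any operating point can be expressed in dB. The nominal 6 MHz bandwidth and the example operating point (spectral efficiency and required SNR) are assumptions for illustration, not figures from ATSC 3.0 testing.

```python
import math


def shannon_capacity_bps(bandwidth_hz: float, snr_db: float) -> float:
    """Theoretical maximum error-free bit rate for an additive white Gaussian noise channel."""
    snr_linear = 10 ** (snr_db / 10)
    return bandwidth_hz * math.log2(1 + snr_linear)


def shannon_min_snr_db(spectral_efficiency: float) -> float:
    """Lowest SNR (dB) at which any code could reach the given bit/s/Hz."""
    if spectral_efficiency <= 0:
        raise ValueError("spectral efficiency must be positive")
    return 10 * math.log10(2 ** spectral_efficiency - 1)


def gap_to_shannon_db(spectral_efficiency: float, required_snr_db: float) -> float:
    """How far (dB) a real operating point sits from the theoretical limit."""
    return required_snr_db - shannon_min_snr_db(spectral_efficiency)


if __name__ == "__main__":
    bandwidth = 6e6  # assumed nominal 6 MHz channel
    for snr in (0.0, 10.0, 20.0):
        print(f"SNR {snr:4.1f} dB -> at most {shannon_capacity_bps(bandwidth, snr) / 1e6:5.1f} Mbit/s")
    # Hypothetical operating point: 2 bit/s/Hz that needs 6.0 dB SNR in practice.
    print(f"gap to Shannon: {gap_to_shannon_db(2.0, 6.0):.2f} dB")
```

An operating point with a small gap is "very close to the Shannon limit" in the sense used in this article. Moving along the curve toward lower SNR trades capacity for robustness.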

Broadcasters have the opportunity to choose operating points that support their business models. Through the use of multiple physical layer pipes (PLPs), it is possible to use different operating points simultaneously—for example, devoting a portion of the emission bandwidth to UHD services and the remainder to mobile services.
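As a rough illustration of how multiple PLPs divide an emission, the sketch below assigns each pipe a share of the channel and an operating point (spectral efficiency), then estimates each pipe's payload rate. The share-of-bandwidth model, the 6 MHz channel and the example numbers are simplifying assumptions. Real ATSC 3.0 PLP multiplexing, framing and overhead are considerably more involved.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class PipeConfig:
    name: str
    share: float        # hypothetical fraction of the emission's capacity given to this pipe
    bits_per_hz: float  # spectral efficiency of the pipe's chosen operating point


def pipe_throughputs_bps(bandwidth_hz: float, pipes: List[PipeConfig]) -> Dict[str, float]:
    """Estimate each pipe's bit rate, ignoring framing, pilot and signaling overhead."""
    if any(p.share <= 0 for p in pipes):
        raise ValueError("every pipe needs a positive share")
    if sum(p.share for p in pipes) > 1.0 + 1e-9:
        raise ValueError("pipe shares exceed the available emission")
    return {p.name: p.share * bandwidth_hz * p.bits_per_hz for p in pipes}


if __name__ == "__main__":
    plan = [
        PipeConfig("uhd_fixed", share=0.75, bits_per_hz=4.0),  # less robust, high capacity
        PipeConfig("mobile", share=0.25, bits_per_hz=1.0),     # robust, low capacity
    ]
    for name, rate in pipe_throughputs_bps(6e6, plan).items():
        print(f"{name}: {rate / 1e6:.1f} Mbit/s")
```

Each pipe keeps its own point on the capacity curve. Giving the mobile pipe a more robust operating point lowers its rate without affecting the operating point chosen for the UHD pipe.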

MANAGEMENT AND PROTOCOLS LAYER

The management and protocols layer is the plumbing connection between the physical layer and presentation layer, supporting service delivery and synchronization, service announcement and personalization, and interactive services and companion-screen services.

A consensus has been reached on the use of Internet Protocol (IP) transport for broadcast delivery of both streaming and file content. Using IP transport (instead of the MPEG-2 transport used in the previous DTV system) provides a large degree of commonality with other delivery mechanisms. Streaming content (for example, live TV) will be delivered in chunks, using the ISO Base Media File Format (ISOBMFF), rather than as a continuous stream of bits.
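Each ISOBMFF chunk is a sequence of "boxes". Every box begins with a 4-byte big-endian size followed by a 4-character type. A 64-bit size follows when the 32-bit field is 1, and a size of 0 means the box runs to the end of the data. This self-describing structure is what lets a receiver handle streaming content as discrete chunks. The Python sketch below walks the top-level boxes of one chunk. The example chunk it builds (styp, moof and mdat boxes with dummy payloads) is synthetic, and the sketch does not parse box contents.

```python
import struct
from typing import Iterator, Tuple


def iter_boxes(data: bytes) -> Iterator[Tuple[str, int, int]]:
    """Yield (box_type, offset, size) for each top-level ISOBMFF box in data."""
    offset = 0
    while offset < len(data):
        if len(data) - offset < 8:
            raise ValueError(f"truncated box header at offset {offset}")
        size, raw_type = struct.unpack_from(">I4s", data, offset)
        header_len = 8
        if size == 1:  # 64-bit 'largesize' follows the type field
            if len(data) - offset < 16:
                raise ValueError(f"truncated largesize at offset {offset}")
            (size,) = struct.unpack_from(">Q", data, offset + 8)
            header_len = 16
        elif size == 0:  # box extends to the end of the data
            size = len(data) - offset
        if size < header_len or offset + size > len(data):
            raise ValueError(f"invalid box size {size} at offset {offset}")
        yield raw_type.decode("latin-1"), offset, size
        offset += size


def make_box(box_type: str, payload: bytes) -> bytes:
    """Build a minimal box with a 32-bit size field (illustration only)."""
    return struct.pack(">I4s", 8 + len(payload), box_type.encode("latin-1")) + payload


if __name__ == "__main__":
    chunk = (make_box("styp", b"msdh\x00\x00\x00\x00")
             + make_box("moof", b"\x00" * 16)
             + make_box("mdat", b"frame"))
    for box_type, offset, size in iter_boxes(chunk):
        print(f"{box_type} at byte {offset}, {size} bytes")
```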

Again, this provides commonality with other delivery mechanisms, and it makes localized or personalized ad insertion relatively simple.
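Because the stream arrives as discrete chunks, a receiver (or an upstream device) can personalize an ad break by swapping whole chunks rather than editing a continuous bitstream. The sketch below shows the idea on a simple list of timed segments. Several details are assumptions made for illustration, not part of any ATSC 3.0 specification: the Segment type, the URIs, and the rule that an ad slot must line up exactly with segment boundaries.

```python
from dataclasses import dataclass, replace
from typing import List

EPS = 1e-6  # tolerance (seconds) for comparing segment boundaries


@dataclass(frozen=True)
class Segment:
    start_s: float     # position on the program timeline
    duration_s: float
    uri: str           # hypothetical locator for the chunk


def splice_ad(segments: List[Segment], slot_start_s: float, ads: List[Segment]) -> List[Segment]:
    """Replace the broadcast segments covering an ad slot with (re-timed) ad segments.

    Assumes `segments` is sorted and non-overlapping, so the covered segments are contiguous.
    """
    ad_total = sum(a.duration_s for a in ads)
    slot_end_s = slot_start_s + ad_total
    covered = [
        i for i, s in enumerate(segments)
        if s.start_s >= slot_start_s - EPS and s.start_s + s.duration_s <= slot_end_s + EPS
    ]
    replaced = sum(segments[i].duration_s for i in covered)
    if not covered or abs(replaced - ad_total) > EPS:
        raise ValueError("ad slot must line up with whole broadcast segments")
    # Place the ad segments on the program timeline where the replaced segments were.
    retimed, t = [], slot_start_s
    for ad in ads:
        retimed.append(replace(ad, start_s=t))
        t += ad.duration_s
    return segments[: covered[0]] + retimed + segments[covered[-1] + 1 :]


if __name__ == "__main__":
    program = [Segment(2.0 * i, 2.0, f"broadcast/seg{i}.m4s") for i in range(5)]
    local_ads = [Segment(0.0, 2.0, "local/ad1.m4s"), Segment(0.0, 2.0, "local/ad2.m4s")]
    for seg in splice_ad(program, 4.0, local_ads):
        print(f"{seg.start_s:4.1f}s {seg.uri}")
```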

ATSC 3.0 is being designed to allow broadcast and broadband to be combined seamlessly to deliver services and components of services. For example, the video and one audio language (presumably the one most viewers would choose) might be delivered by broadcast, with alternate-language audio streams delivered via broadband, allowing the viewer to select among a number of options. One enabling technology for hybrid delivery is the use of UTC (or some other form of “absolute” time) for synchronization and buffer management.
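Absolute time is what lets the two paths line up. Suppose every chunk, whether it arrives over the air or over broadband, is stamped with the wall-clock (UTC) time at which it should be presented. The receiver can then pair chunks regardless of path latency, and it can size its buffer from how early or late each path delivers. The sketch below expresses that buffer calculation. The Chunk fields (a presentation time and an arrival time, both in UTC) are hypothetical simplifications, not actual ATSC 3.0 signaling.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Iterable


@dataclass(frozen=True)
class Chunk:
    path: str                   # "broadcast" or "broadband"
    presentation_utc: datetime  # absolute time at which the chunk should be presented
    arrival_utc: datetime       # absolute time at which the receiver had the whole chunk


def slack(chunk: Chunk) -> timedelta:
    """Positive: the chunk arrived early enough; negative: it arrived too late."""
    return chunk.presentation_utc - chunk.arrival_utc


def extra_delay_needed(chunks: Iterable[Chunk]) -> timedelta:
    """Delay to add to all presentation times so that every chunk is on time.

    Shifting the whole presentation timeline (rather than single chunks) keeps
    broadcast video and broadband audio with equal UTC stamps in sync.
    """
    worst = min(slack(c) for c in chunks)
    return max(timedelta(0), -worst)


if __name__ == "__main__":
    t0 = datetime(2015, 1, 1, 12, 0, 0, tzinfo=timezone.utc)
    due = t0 + timedelta(seconds=2)
    chunks = [
        Chunk("broadcast", due, t0 + timedelta(seconds=0.5)),  # video over the air
        Chunk("broadband", due, t0 + timedelta(seconds=2.3)),  # alternate-language audio
    ]
    print(f"add {extra_delay_needed(chunks).total_seconds():.1f} s of buffering")
```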

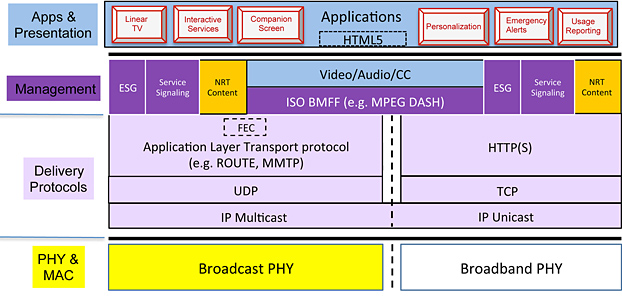

Although the details are still being worked out, an example protocol stack for ATSC 3.0 is shown in Figure 3. As can be seen, there is significant commonality between delivery via broadcast and delivery via broadband, especially above the delivery protocol layer, with complete convergence at the application layer.

Figure 3: Example protocol stack model for ATSC 3.0

In a number of situations, the receiver may have access only to uncompressed audio and video, e.g., via an HDMI cable connected to a set-top box. The additional components and services envisioned for ATSC 3.0 may not make it all the way to the receiver over that main delivery path.

Automatic content recognition (ACR) can enable the receiver to identify what is being viewed. ACR methods include fingerprinting and watermarking. ACR-aware receivers with a broadband connection could then request and retrieve additional content via broadband.
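Fingerprinting, one of the two ACR methods named above, works by reducing what is on screen to a compact signature and looking that signature up in a reference database. The sketch below uses a deliberately simple "average hash" on an 8 × 8 luminance grid and matches by Hamming distance. It is a toy stand-in chosen for illustration, not the fingerprinting (or watermarking) technique used by any ATSC 3.0 receiver. The content IDs and match threshold are hypothetical.

```python
from typing import Dict, Optional, Sequence


def average_hash(luma: Sequence[Sequence[int]]) -> int:
    """One bit per pixel: 1 if the pixel is brighter than the frame's mean luminance."""
    pixels = [p for row in luma for p in row]
    if not pixels:
        raise ValueError("empty frame")
    mean = sum(pixels) / len(pixels)
    bits = 0
    for p in pixels:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits


def hamming(a: int, b: int) -> int:
    """Number of differing bits between two fingerprints."""
    return bin(a ^ b).count("1")


def identify(query: int, reference: Dict[str, int], max_distance: int = 6) -> Optional[str]:
    """Return the closest reference content ID, or None if nothing is close enough."""
    if not reference:
        return None
    best = min(reference, key=lambda content_id: hamming(query, reference[content_id]))
    return best if hamming(query, reference[best]) <= max_distance else None


if __name__ == "__main__":
    frame = [[row * 8 + col for col in range(8)] for row in range(8)]  # synthetic gradient
    reference = {
        "program-123": average_hash(frame),
        "program-456": average_hash([[255 - p for p in row] for row in frame]),
    }
    brighter = [[p + 2 for p in row] for row in frame]  # same picture at a slightly higher level
    print(identify(average_hash(brighter), reference))
```

Once a receiver has identified the program this way, it could fetch related content over broadband, as described above.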

APPLICATIONS AND PRESENTATION LAYER

The applications and presentation layer essentially comprises the elements that the viewer experiences, including video coding, audio coding and the run-time environment. The ATSC 3.0 service model supports more complex services, giving broadcasters room to evolve their businesses. Major elements include:

• Enhanced linear TV, plus on-demand support

• Subscription and pay-per-view (PPV) support

• Conditional access and digital rights management (DRM) capabilities

• Mobile and fixed device, plus companion device support

• Hybrid delivery (broadcast and broadband), combined with pushed content

For video coding, UHD and HD enhancements are a key initial goal, with 4K support at the start and 8K support possible later via extensibility. HEVC (H.265) has been selected as the core video codec. Portable, handheld, vehicular, and fixed devices in both indoor and outdoor settings are all targeted, and hybrid integration of broadcast/broadband delivery is a required capability for broadcasters.

Physical layer pipes (PLPs) may enable a flexible trade-off of robustness vs. throughput for each component. Layered (scalable) coding is under consideration, possibly carried on multiple PLPs. That arrangement could allow an HD version of the core service to be delivered over a robust pipe, with an enhancement layer over a higher-bitrate pipe bringing the video to UHD.
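The receiver-side logic for layered coding over multiple PLPs can be sketched simply. The receiver decodes each layer whose pipe it is receiving and whose prerequisite layer it can decode, then presents the best result. The layer names, PLP numbers and two-layer HD/UHD arrangement below are hypothetical. The sketch leaves aside how layers and pipes would actually be signaled.

```python
from dataclasses import dataclass
from typing import List, Optional, Set


@dataclass(frozen=True)
class VideoLayer:
    name: str
    plp_id: int                # pipe carrying this layer (hypothetical numbering)
    depends_on: Optional[str]  # layer that must be decoded first, if any
    output: str                # picture format once this layer is applied


def decodable_layers(layers: List[VideoLayer], received_plps: Set[int]) -> List[VideoLayer]:
    """Layers the receiver can decode; `layers` is assumed to be listed base-first."""
    usable: List[VideoLayer] = []
    usable_names: Set[str] = set()
    for layer in layers:
        if layer.plp_id not in received_plps:
            continue  # pipe not being received (e.g. too weak at this location)
        if layer.depends_on is not None and layer.depends_on not in usable_names:
            continue  # enhancement without its base layer is useless
        usable.append(layer)
        usable_names.add(layer.name)
    return usable


def best_output(layers: List[VideoLayer], received_plps: Set[int]) -> Optional[str]:
    """Picture format from the highest decodable layer, or None if nothing decodes."""
    usable = decodable_layers(layers, received_plps)
    return usable[-1].output if usable else None


SERVICE = [
    VideoLayer("base", plp_id=0, depends_on=None, output="HD"),            # robust pipe
    VideoLayer("enhancement", plp_id=1, depends_on="base", output="UHD"),  # higher-bitrate pipe
]

if __name__ == "__main__":
    print(best_output(SERVICE, {0, 1}))  # e.g. fixed reception of both pipes
    print(best_output(SERVICE, {0}))     # e.g. mobile reception of only the robust pipe
```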

For audio coding, new personalization features are envisioned that include control of dialog, use of alternate audio tracks and mixing of assistive audio services, other language dialog, special commentary, and music and effects. Furthermore, normalization of content loudness and contouring of dynamic range, based on the specific capabilities of a user’s fixed or mobile device and unique sound environment, is expected.
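Loudness normalization and dynamic-range contouring reduce to level arithmetic in decibels. The sketch below computes the gain that brings a program's measured loudness to a device-specific target, then applies a simple static compression curve above a threshold. The targets, thresholds and ratios are hypothetical device settings; the -24 LKFS figure echoes the ATSC A/85 practice for current DTV. A real renderer would measure loudness per ITU-R BS.1770 rather than take it as an input.

```python
def normalization_gain_db(measured_lkfs: float, target_lkfs: float) -> float:
    """Gain (dB) that moves the program's integrated loudness to the device's target."""
    return target_lkfs - measured_lkfs


def db_to_linear(gain_db: float) -> float:
    """Convert a level change in dB to an amplitude multiplier."""
    return 10 ** (gain_db / 20)


def compress_level_db(level_db: float, threshold_db: float, ratio: float) -> float:
    """Static compression curve: above the threshold, output rises 1 dB per `ratio` dB of input."""
    if ratio < 1:
        raise ValueError("ratio must be >= 1")
    if level_db <= threshold_db:
        return level_db
    return threshold_db + (level_db - threshold_db) / ratio


# Hypothetical per-device settings: a living-room TV versus a phone in a noisy place.
DEVICE_PROFILES = {
    "living_room_tv": {"target_lkfs": -24.0, "threshold_db": -10.0, "ratio": 1.5},
    "phone_on_the_go": {"target_lkfs": -16.0, "threshold_db": -20.0, "ratio": 3.0},
}

if __name__ == "__main__":
    measured = -18.0  # hypothetical program loudness
    for device, p in DEVICE_PROFILES.items():
        gain = normalization_gain_db(measured, p["target_lkfs"])
        loud_passage = compress_level_db(-5.0 + gain, p["threshold_db"], p["ratio"])
        print(f"{device}: gain {gain:+.1f} dB (x{db_to_linear(gain):.2f}), "
              f"loud passage -> {loud_passage:.1f} dB")
```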

An enhanced immersive experience is envisioned, with high spatial resolution in sound source localization (in azimuth, elevation, and distance), for an increased sense of sound envelopment. Features will include targeted services to various devices (fixed, mobile) and speaker set-ups, support for hybrid broadcast/broadband delivery, and support for audio-only content as well as audio/video content.

The runtime (or application) environment will likely be based on HbbTV 2.0, with modifications as needed to accommodate differing needs and requirements. Some aspects from the ATSC 2.0 application environment may be used as well.

EXTENSIBILITY

Although the ATSC 3.0 standard is meant to last, technology continues to advance and consumer demands will evolve in ways that are difficult to predict. As a result, methods must be included in the ATSC 3.0 standard to facilitate a graceful evolution from the initial technologies to newer, more advanced technologies that may be developed in the future.

Signaling is being developed that will permit new receivers to take advantage of new technologies when they are available. This signaling begins at the physical layer and extends through to the application/presentation layer. The physical layer will have a very basic, highly robust form of signaling that can indicate what technology is used for the physical layer itself. At a minimum, each layer will have the ability to signal what technologies are used in the layer above.
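The layered signaling described above behaves like a chain. The highly robust physical-layer signal identifies the physical-layer technology, and each layer a receiver can decode tells it what the layer above uses. The sketch below walks that chain and stops at the first technology the receiver does not support. The layer names follow this article's three-layer breakdown. The technology identifiers are invented placeholders, not values from the standard.

```python
from typing import Dict, List, Optional, Set, Tuple

LAYERS = ["physical", "management_and_protocols", "applications_and_presentation"]

# Technologies a hypothetical first-generation receiver implements, per layer.
RECEIVER = {
    "physical": {"phy-1"},
    "management_and_protocols": {"ip-1"},
    "applications_and_presentation": {"app-1"},
}


def walk_signaling(
    bootstrap_tech: str,
    signals_above: Dict[str, str],
    supported: Dict[str, Set[str]],
) -> List[Tuple[str, str]]:
    """Return the (layer, technology) pairs the receiver can handle, bottom-up.

    bootstrap_tech: physical-layer technology announced by the robust basic signaling.
    signals_above:  for each layer, the technology it announces for the next layer up.
    supported:      technologies this receiver implements, per layer.
    """
    usable: List[Tuple[str, str]] = []
    tech: Optional[str] = bootstrap_tech
    for index, layer in enumerate(LAYERS):
        if tech is None or tech not in supported.get(layer, set()):
            break  # unknown technology: this receiver cannot go further up the stack
        usable.append((layer, tech))
        if index + 1 < len(LAYERS):
            tech = signals_above.get(layer)
    return usable


if __name__ == "__main__":
    emission = {"physical": "ip-1", "management_and_protocols": "app-2"}  # newer app layer
    print(walk_signaling("phy-1", emission, RECEIVER))
```

A receiver that stops partway up the chain still knows exactly which technology it lacks. A newer receiver reading the same signaling can go further.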

Signaling and announcement information will include the ability to indicate the capabilities necessary to successfully render services, with a distinction between those considered essential (by the content creator) and those considered optional.
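A receiver might act on that essential/optional distinction as follows. If any essential capability is missing, it does not offer the service. Otherwise it renders the service, using whichever optional capabilities it also has. The capability names in the sketch are hypothetical labels, not ATSC 3.0 signaling values.

```python
from dataclasses import dataclass
from typing import FrozenSet, Set


@dataclass(frozen=True)
class RenderDecision:
    can_render: bool
    missing_essential: FrozenSet[str]
    optional_in_use: FrozenSet[str]


def decide(essential: Set[str], optional: Set[str], receiver_caps: Set[str]) -> RenderDecision:
    """Compare announced capability requirements with what this receiver supports."""
    missing = frozenset(essential - receiver_caps)
    if missing:
        return RenderDecision(False, missing, frozenset())
    return RenderDecision(True, frozenset(), frozenset(optional & receiver_caps))


if __name__ == "__main__":
    announced_essential = {"hevc_hd", "ip_transport"}
    announced_optional = {"hevc_uhd_enhancement", "immersive_audio"}
    hd_receiver = {"hevc_hd", "ip_transport", "immersive_audio"}
    print(decide(announced_essential, announced_optional, hd_receiver))
```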

GET INVOLVED

Work on ATSC 3.0 has been underway for more than two years. Much work remains, and many decisions are yet to be made.

Participation in ATSC is open to all with a direct and material interest in the work. Organizations interested in joining ATSC will find more information on the ATSC web site (www.atsc.org).

Rich Chernock, chief science officer of Triveni Digital, holds an Sc.D. degree in nuclear materials from MIT. He is one of the principal developers of the ATSC 3.0 standard. Rich can be reached at rchernock@trivenidigital.com.
