Software-Defined Systems & Virtualization

Karl Paulsen

Of the many components found in a media-centric operating environment, storage is among the fastest-evolving systems in the overall architecture. As administrators sort out the directions and requirements for their organization’s expanding needs, technological approaches continue to change, and so do the means and methods of storage provisioning.

Operators and system technology architects often need to make a variety of decisions when it comes to storage upgrades. Continuing to support islands of storage, file systems and arbitrary naming conventions is no longer appropriate when addressing incremental changes in workflow, storage capacity, availability or accessibility. Today, with geographically diverse operations, resource sharing and changing business objectives, operators find that new storage models are needed to meet the demands for productivity, changes in workflow and requirements for acquisition and distribution.

Storage systems now must address a host of applications, databases (metadata and transactional), operating systems, platforms and file systems. In the data-centric world, software models and business applications are redefining foundational architectures, moving them from a static to a dynamic model. This shift from hardware-centric to software-centric environments, often framed around virtualization, is finding its way into both networks and storage systems. In this model, software, rather than the switches, disk drives and controllers of previous architectures, defines how a system is configured.

VIRTUALIZATION

From a computing perspective, virtualization is the creation of a virtual platform (network, resource, operating system or storage device) rather than an actual (i.e., dedicated or real) device. Software plays an extremely important role in virtualization, enabling the abstraction of a system or systems to extend beyond single-purpose, dedicated configurations. For network systems this is referred to as a “software-defined network” or SDN. For storage systems, the industry has coined the term “software-defined storage” (SDS).

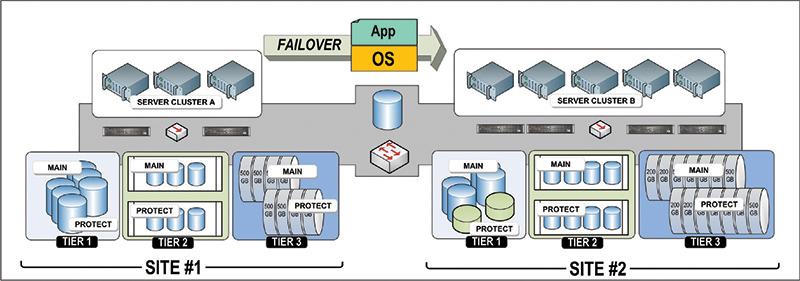

Fig. 1: Through software-defined storage and virtualization, local operations can be protected even with differing storage architectures. The concepts can be leveraged at two physical datacenters within the same location or at separate remote sites. Virtualization allows the applications and the operating systems at Site #1 to fail over to Site #2 even though the server clusters and storage system makeups are different.

At the root level, compute systems perform basic tasks based around their operating system (OS). For example, applications running on a general-purpose computer depend upon the OS to be the interface to devices such as keyboards and displays, and to handle file organization and file tracking. The OS becomes the traffic cop, i.e., the communications chain among other peripheral devices including storage and I/O. Running more than one operating system on the same device is a complex challenge, usually requiring that only one OS is fully functional at any given time. One approach that lets multiple operating systems run on the same hardware is “paravirtualization,” which is unlike “full virtualization,” where an entire system is emulated. In paravirtualization, the management module, known as the “hypervisor” or “virtual machine monitor,” operates with guest operating systems that have been modified to work in a virtual machine.

The analogies of SDN and SDS have even been extended to the “software-defined data center,” which combines storage, servers and networking into a whole that extends from the physical boundaries out into the cloud. This software-centric thinking is prevalent everywhere; it is just called by different names and understood in varying ways.

RESOURCE POOLS

Software-defined storage employs intelligent software that abstracts and transforms network switches, drive arrays (including flash memory systems) and servers into pools of resources, which are then mapped and provisioned to the applications, hosts and workflows of the organization or entity. Through virtualization, automation and the use of dynamic management toolsets, these systems become more flexible, increasing productivity and in turn improving the overall application experience.
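The pooling idea can be illustrated with a minimal Python sketch. It is not drawn from any particular SDS product: the `Device` and `StoragePool` classes, the device names and the "most free space first" placement rule are all assumptions made for illustration. The point is only that a host asks the pool for capacity and the software decides which physical devices supply it.

```python
from dataclasses import dataclass, field


@dataclass
class Device:
    """A physical resource contributing capacity to the pool (hypothetical model)."""
    name: str
    capacity_gb: int
    used_gb: int = 0

    @property
    def free_gb(self) -> int:
        return self.capacity_gb - self.used_gb


@dataclass
class StoragePool:
    """Presents many unlike devices as a single pool of capacity."""
    devices: list[Device]
    volumes: dict[str, dict[str, int]] = field(default_factory=dict)

    @property
    def free_gb(self) -> int:
        return sum(d.free_gb for d in self.devices)

    def provision(self, host: str, size_gb: int) -> dict[str, int]:
        """Map size_gb of pooled capacity to a host, spanning devices if needed."""
        if size_gb > self.free_gb:
            raise ValueError("pool has insufficient free capacity")
        placement: dict[str, int] = {}
        remaining = size_gb
        # Assumed policy: draw from the device with the most free space first.
        for dev in sorted(self.devices, key=lambda d: d.free_gb, reverse=True):
            if remaining == 0:
                break
            take = min(dev.free_gb, remaining)
            if take:
                dev.used_gb += take
                placement[dev.name] = take
                remaining -= take
        self.volumes[host] = placement  # record the host-to-device mapping
        return placement


pool = StoragePool([Device("flash-array", 100), Device("sata-raid", 400)])
placement = pool.provision("edit-suite-1", 450)
print(placement)  # {'sata-raid': 400, 'flash-array': 50}
```

The host named here sees one 450 GB volume; the fact that it spans two different arrays is hidden behind the pool.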

Physical and virtual disk configurations arrange redundant SAN paths, synchronize mirroring, manage caching and load balancing, and enable thin provisioning. Pools of storage can be arranged in tiers, ranging from very fast solid-state “hot” memory through RAID sets of SATA drives (as “warm” storage) and on to active private clouds, cloud service providers or a “cold” storage archive. Hosts can be managed across a range of operating systems such as Windows, UNIX and Linux.
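As a rough sketch of how a tiering policy might be expressed in software, the Python below assigns content to a hot, warm or cold tier by how recently it was accessed. The `choose_tier` function and its 7-day and 90-day thresholds are illustrative assumptions; real systems typically weigh many more factors, such as access frequency, file size and cost.

```python
from enum import Enum


class Tier(Enum):
    HOT = "solid-state"
    WARM = "SATA RAID"
    COLD = "archive or cloud"


def choose_tier(days_since_access: int, hot_days: int = 7, warm_days: int = 90) -> Tier:
    """Pick a tier from access recency; the thresholds are assumed, not standard."""
    if days_since_access < 0:
        raise ValueError("days_since_access must be non-negative")
    if days_since_access <= hot_days:
        return Tier.HOT
    if days_since_access <= warm_days:
        return Tier.WARM
    return Tier.COLD


for age in (1, 30, 400):
    print(age, choose_tier(age).name)
```

Because the policy is just software, changing the thresholds changes where content lands without touching the underlying hardware.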

With the variety of activities now prevalent in enterprise-class systems, tracking and metering performance becomes more complicated, yet it is critical to understanding the efficiency of the servers, storage and networks. Monitoring systems are common in virtual environments. They observe system activity on a tier-by-tier basis so as to identify hot spots, such as when excessive disk I/O operations occur. In this case, the toolsets then automatically load balance disk blocks among the active drives to prevent bottlenecks.
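The hot-spot behavior described above can be sketched in a few lines of Python. This is a simplified illustration, not any vendor's algorithm: the `rebalance` function, the 1.5x-of-mean threshold and the per-block I/O figures are assumptions. A drive counts as "hot" when its I/O load exceeds the threshold times the mean load, and the busiest block that can usefully move is shifted to the least-loaded drive.

```python
def rebalance(drives: dict[str, dict[str, int]], threshold: float = 1.5,
              max_moves: int = 100) -> list[tuple[str, str, str]]:
    """Move busy blocks off hot drives; mutates `drives` in place.

    `drives` maps drive name -> {block id: observed I/O operations per second}.
    Returns the moves made as (block, from_drive, to_drive) tuples.
    """
    moves: list[tuple[str, str, str]] = []
    if not drives:
        return moves
    for _ in range(max_moves):
        loads = {name: sum(blocks.values()) for name, blocks in drives.items()}
        mean = sum(loads.values()) / len(loads)
        hot = max(loads, key=loads.get)
        cool = min(loads, key=loads.get)
        if loads[hot] <= threshold * mean:
            break  # no drive is a hot spot
        # Only consider moves that leave the target cooler than the source was.
        candidates = [(iops, blk) for blk, iops in drives[hot].items()
                      if iops > 0 and loads[cool] + iops < loads[hot]]
        if not candidates:
            break  # no single block move would help
        iops, blk = max(candidates)
        drives[cool][blk] = drives[hot].pop(blk)
        moves.append((blk, hot, cool))
    return moves


drives = {
    "d1": {"a": 500, "b": 300, "c": 200},  # 1,000 IOPS: a hot spot
    "d2": {"d": 100},
    "d3": {"e": 100},
}
moves = rebalance(drives)
print(moves)  # [('a', 'd1', 'd2')]
```

In this example one move brings every drive under the threshold, so the loop stops; a stricter threshold would trigger further moves.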

Infrastructure functions for like and unlike devices are manageable from dashboards that reveal how each host (operating system) is attached to each tier of storage. The dashboard shows in graphic form what is happening at an overview level, so administrators can drill down into the details to reveal where systems are being overdriven, or use trending tools to diagnose how a bottleneck was generated.

SDS and SDN approaches are gaining popularity in the data center and compute-centric world; some in the media world feel these concepts will work their way into the media-centric domain. Whatever opinion is expressed, as virtualization gains momentum we’ll need more toolsets and more management abilities to keep these systems going. Watch for more development in software-defined systems in coming years.

Karl Paulsen (CPBE) is a SMPTE Fellow and the chief technology officer at Diversified Systems. Read more about these and other storage topics in his recent book “Moving Media Storage Technologies.” Contact Karl at kpaulsen@divsystems.com.

Karl Paulsen recently retired as a CTO and has regularly contributed to TV Tech on topics related to media, networking, workflow, cloud and systemization for the media and entertainment industry. He is a SMPTE Fellow with more than 50 years of engineering and managerial experience in commercial TV and radio broadcasting. For over 25 years he has written on featured topics in TV Tech magazine—penning the magazine’s “Storage and Media Technologies” and “Cloudspotter’s Journal” columns.