SMPTE ST 2110-10: A Base to Build On

This is the first installment in a series of articles that describe some of the key technical features of each section of the new SMPTE ST 2110:2017 suite of standards for “Professional Media over Managed IP Networks.”

ORANGE, CONN.—As of this writing, 2110 consists of multiple parts, with more to come in the future. The first three parts to be released are:

• ST 2110-10 System Timing and Definitions

• ST 2110-20 Uncompressed Active Video

• ST 2110-30 PCM Digital Audio

Two more parts, on video traffic shaping and ancillary data, are due in the near future.

The logical place to begin this series is ST 2110-10, which defines a set of common elements that apply to the rest of the standards in the 2110 family. In particular, ST 2110-10 defines the transport layer protocols, the datagram size limits, the requirements for using Session Description Protocol (SDP), and the clocks and their timing relationships.

DATAGRAMS FOR 2110

The Real-time Transport Protocol (RTP, as defined in IETF RFC 3550) was selected for use in 2110 applications because it includes many features needed for moving media signals over an IP network and avoids some of the undesirable aspects of TCP (Transmission Control Protocol), such as the delays caused by retransmitting lost packets. Using RTP, uncompressed media samples are grouped into datagrams, each of which has an RTP header and a UDP header attached before being passed to the IP layer for further processing. ST 2110-10 defines a standard UDP datagram size limit of 1,460 bytes (including the UDP and RTP headers), which leaves enough room for over 450 24-bit audio samples, or about 550 pixels of a 4:2:2 10-bit uncompressed video signal. ST 2110-10 also defines an extended UDP size limit of 8,960 bytes, which could be useful on networks that support Ethernet jumbo frames.
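
The arithmetic behind these figures can be checked with a short Python sketch. It follows the statement above that the size limit includes both headers, and it assumes an 8-byte UDP header (RFC 768) and a 12-byte fixed RTP header (RFC 3550) with no CSRC list or header extension; the function names are hypothetical. The raw counts it produces are upper bounds: a real ST 2110-20 video datagram also carries its own payload header, which lowers the practical pixel count somewhat.

```python
UDP_HEADER_BYTES = 8    # RFC 768 UDP header
RTP_HEADER_BYTES = 12   # RFC 3550 fixed RTP header (assumes no CSRCs or extension)

STANDARD_UDP_LIMIT = 1460  # ST 2110-10 standard UDP size limit, per the article
EXTENDED_UDP_LIMIT = 8960  # ST 2110-10 extended limit (jumbo frames)


def payload_bytes(udp_limit: int) -> int:
    """Bytes left for media samples after the UDP and RTP headers."""
    return udp_limit - UDP_HEADER_BYTES - RTP_HEADER_BYTES


def audio_samples(udp_limit: int, bits_per_sample: int = 24) -> int:
    """Whole audio samples (counted across all channels) that fit in one datagram."""
    return payload_bytes(udp_limit) // (bits_per_sample // 8)


def video_pixels(udp_limit: int, bits_per_pixel: int = 20) -> int:
    """Whole pixels that fit; 4:2:2 10-bit averages 20 bits (2.5 bytes) per pixel.

    Ignores the ST 2110-20 payload header, so this is an upper bound.
    """
    return (payload_bytes(udp_limit) * 8) // bits_per_pixel
```

With the standard limit, the sketch leaves 1,440 bytes of payload, or 480 samples and 576 pixels; with the extended 8,960-byte limit, the same functions give 2,980 samples and 3,576 pixels.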

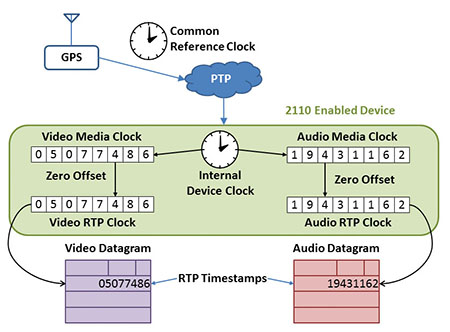

Fig. 1: Clocks defined in ST 2110-10

TIMING IS EVERYTHING

The most important aspects of ST 2110-10 are likely those related to clocks and timing. Unlike SDI and MPEG Transport Streams, which carry video, audio and ancillary data together in a single signal, 2110 carries each type of media as a separate IP packet stream. This creates the need for a mechanism to realign the signals to their proper timing relationships after they have traveled through a network. Fig. 1 shows the different clocks that are defined in the specification; each one has a specific role to play:

• Precision Time Protocol (PTP, IEEE 1588) is used to distribute a Common Reference Clock (accurate to better than a microsecond) to every device on the network;

• Every signal source maintains an Internal Device Clock, which can (and should) be synchronized to the Common Reference Clock;

• Each signal type is associated with a Media Clock that advances at a fixed rate related to the frame rate or sampling rate of that media signal, and

• An RTP Clock is used within each signal source to generate the RTP timestamp that is contained in the header of each RTP datagram for each media signal. RTP Clocks are synchronized to their associated Media Clock.

To understand how these clocks are related, consider an audio source that samples at 48 kHz. Inside the audio source (such as a network adapter for a microphone), an Internal Device Clock runs to provide an overall timebase for the device. This clock should be synchronized to the Common Reference Clock time delivered through the network by PTP, which in most cases is itself synchronized to a GPS receiver. The Internal Device Clock is then used to create a Media Clock that runs at exactly 48 kHz, so that each “tick” of the Media Clock counter corresponds to one audio sampling instant. The RTP Clock used for the audio stream is directly associated with the Media Clock and is used to generate the RTP timestamps in each datagram header.
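
The chain from PTP time to RTP timestamp in this example can be sketched in Python. This is an illustration under stated assumptions, not a reference implementation: it assumes the Media Clock count is zero at the PTP epoch and that the RTP timestamp is simply that count truncated to RTP's 32-bit timestamp field (RFC 3550). The function names are hypothetical.

```python
import math
from fractions import Fraction

RTP_TIMESTAMP_MODULUS = 2 ** 32  # RTP timestamps are 32-bit fields (RFC 3550)


def media_clock_ticks(ptp_seconds: int, ptp_nanoseconds: int, clock_rate: int) -> int:
    """Whole Media Clock ticks elapsed since the PTP epoch.

    Assumption: the Media Clock count is zero at the PTP epoch and advances
    exactly clock_rate times per second of PTP time.
    """
    # Exact rational arithmetic avoids float rounding at large PTP times.
    elapsed = Fraction(ptp_seconds) + Fraction(ptp_nanoseconds, 1_000_000_000)
    return math.floor(elapsed * clock_rate)


def rtp_timestamp(ptp_seconds: int, ptp_nanoseconds: int, clock_rate: int) -> int:
    """RTP timestamp for a sample taken at the given PTP time (wraps at 2**32)."""
    ticks = media_clock_ticks(ptp_seconds, ptp_nanoseconds, clock_rate)
    return ticks % RTP_TIMESTAMP_MODULUS
```

For instance, `rtp_timestamp(1, 500_000_000, 48_000)` gives 72000, and with the 90 kHz RTP clock used for video, `rtp_timestamp(1, 0, 90_000)` gives 90000. Because the count wraps at 2^32, a 48 kHz timestamp rolls over just under every 25 hours.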

RTP timestamps used in 2110 systems represent the actual sampling time of the media signals contained in each datagram. For audio, where a datagram normally contains more than one sample of each audio channel, the RTP timestamp represents the sampling time of the first audio sample in the datagram. For video, where each frame normally requires many datagrams, all of the datagrams carrying a frame share the same RTP timestamp, so a single timestamp could be shared by hundreds or even thousands of datagrams. The individual datagrams still carry unique packet sequence numbers, so they can be distinguished from each other for purposes such as loss detection and error correction.
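
The timestamp and sequence-number behavior described above can be sketched in Python. The helpers are hypothetical; they assume RFC 3550's 16-bit sequence numbers and 32-bit timestamps and, for simplicity, in-order arrival with short gaps. For audio, each datagram's timestamp advances by the number of samples per channel it carries (48 at 48 kHz for a 1 ms packet); for video, every datagram of a frame repeats the frame's timestamp while the sequence number keeps counting, which is what makes lost datagrams detectable.

```python
SEQ_MODULUS = 2 ** 16  # RTP sequence numbers are 16-bit (RFC 3550)
TS_MODULUS = 2 ** 32   # RTP timestamps are 32-bit (RFC 3550)


def audio_packet_headers(first_ts: int, first_seq: int,
                         samples_per_packet: int, count: int) -> list[tuple[int, int]]:
    """(sequence number, timestamp) pairs for consecutive audio datagrams.

    Each timestamp is the sampling time of the datagram's first sample,
    so it advances by samples_per_packet from one datagram to the next.
    """
    return [((first_seq + i) % SEQ_MODULUS,
             (first_ts + i * samples_per_packet) % TS_MODULUS)
            for i in range(count)]


def video_frame_headers(frame_ts: int, first_seq: int, count: int) -> list[tuple[int, int]]:
    """All datagrams of one video frame share its timestamp; sequence numbers still increase."""
    return [((first_seq + i) % SEQ_MODULUS, frame_ts) for i in range(count)]


def missing_sequence_numbers(received: list[int]) -> list[int]:
    """Sequence numbers absent between consecutive received datagrams.

    Handles 16-bit wraparound; assumes in-order arrival and gaps shorter
    than half the sequence space.
    """
    missing = []
    for prev, cur in zip(received, received[1:]):
        gap = (cur - prev) % SEQ_MODULUS  # 1 means no loss
        for k in range(1, gap):
            missing.append((prev + k) % SEQ_MODULUS)
    return missing
```

For example, if datagrams 65533, 65535 and 1 arrive, `missing_sequence_numbers` reports 65534 and 0 as lost, even though the counter wrapped between them.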

As datagrams travel through IP networks, they normally experience varying amounts of delay in transit, caused by buffering within the IP networking equipment or by the different processing steps applied to different types of media. At the destination, the timestamps in the RTP datagram headers can be used to place all of the media samples on a common timeline, so that the various audio and video signals can be properly synchronized for processing, playout or storage.
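
The realignment step can be sketched in Python as well. The function below (a hypothetical name) recovers each datagram's absolute sampling time from its wrapped 32-bit RTP timestamp, using the receiver's own PTP-locked clock. It assumes that each sender's RTP clock count was zero at the PTP epoch and that no datagram is older than one wrap period (just under 25 hours at 48 kHz, about 13.3 hours at 90 kHz).

```python
import math
from fractions import Fraction

RTP_TIMESTAMP_MODULUS = 2 ** 32  # RTP timestamps are 32-bit fields (RFC 3550)


def sampling_time(rtp_ts: int, clock_rate: int, receiver_now: Fraction) -> Fraction:
    """Absolute sampling time, in seconds since the PTP epoch, of a datagram.

    Assumptions: the sender's RTP clock count was zero at the PTP epoch, and
    the datagram was sampled less than one 2**32-tick wrap before receiver_now
    (the receiver's current PTP time in seconds).
    """
    now_ticks = math.floor(receiver_now * clock_rate)
    # Ticks by which the sample lags "now", resolved modulo the 32-bit wrap.
    lag_ticks = (now_ticks - rtp_ts) % RTP_TIMESTAMP_MODULUS
    return Fraction(now_ticks - lag_ticks, clock_rate)
```

With the receiver at PTP time 100,000 s, an audio datagram (48 kHz) with timestamp 505,032,224 and a video datagram (90 kHz) with timestamp 410,064,508 both map to 99,999.99 s, even though their raw timestamps differ. A receiver can then delay whichever stream arrived first until the two line up.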

This ability to accurately synchronize multiple media types at any point along the broadcast chain is one of the key benefits of 2110, because it allows each media stream to be processed independently. For example, a device performing audio level normalization no longer needs to de-embed the audio from an SDI video stream, process it and then re-embed it into the SDI signal. Instead, each type of media signal can follow its own path through just the processing steps it requires and still be re-synchronized with other media whenever necessary. Plus, if all the Media Clocks are referenced to a GPS grandmaster, it becomes possible with 2110 to genlock two cameras that are hundreds of meters or even hundreds of kilometers apart, without sending a sync signal from one to the other. How cool is that?

SDP (Session Description Protocol) will be covered in a future installment of this series.

Wes Simpson is President of Telecom Product Consulting, an independent consulting firm that focuses on video and telecommunications products. He has 30 years of experience in the design, development and marketing of products for telecommunication applications. He is a frequent speaker at industry events such as IBC, NAB and VidTrans, the author of the book Video Over IP and a frequent contributor to TV Tech. Wes is a founding member of the Video Services Forum.