Digital audio

Last month, we looked at some of the audio processors used in today's digital broadcast plants. This month, we'll dig a bit deeper into multichannel audio encoding for DTV transmission.

Surround is all around - isn't it?

Artificial or matrixed surround, such as Dolby Surround or Pro Logic, is an analog process that creates a multichannel experience by matrixing surround information onto a stereo pair, then decoding this at the receiver to produce additional surround channels. Matrix refers to the mathematical operation whereby three input signals (left, right and surround) are transformed into two channels, left total (Lt) and right total (Rt), for transmission. Separation between the main and surround channels varies and can be as low as 3 dB.
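To see where a figure like 3 dB comes from, consider the following minimal sketch of a three-input matrix and a passive (non-steered) decoder. It is a simplification and not Dolby's actual encoder: the 90-degree phase shift and the band-limiting normally applied to the surround signal are omitted, and the function names are hypothetical.

```python
import math

G = 1 / math.sqrt(2)  # -3 dB gain applied to the surround signal

def matrix_encode(left, right, surround):
    """Fold left, right and surround into an Lt/Rt pair.

    Simplified: the surround is added in opposite polarity to each
    channel, with no 90-degree phase shift.
    """
    lt = left + G * surround
    rt = right - G * surround
    return lt, rt

def passive_decode(lt, rt):
    """Recover left, right and surround with a fixed matrix (no steering)."""
    return lt, rt, G * (lt - rt)

def separation_db(wanted, leaked):
    """Level difference between the wanted output and the leakage, in dB."""
    return 20 * math.log10(abs(wanted) / abs(leaked))

# A source present only in the left input leaks into the decoded surround.
lt, rt = matrix_encode(1.0, 0.0, 0.0)
l_out, r_out, s_out = passive_decode(lt, rt)
left_to_surround_db = separation_db(l_out, s_out)

# A surround-only source is recovered at full level, but also leaks to left.
lt2, rt2 = matrix_encode(0.0, 0.0, 1.0)
l_out2, r_out2, s_out2 = passive_decode(lt2, rt2)
surround_to_left_db = separation_db(s_out2, l_out2)
```

In both directions the leakage sits about 3 dB below the wanted signal, which is the worst case that active steering in a Pro Logic decoder is designed to improve upon.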

True 5.1 multichannel sound is encoded by means of five full-bandwidth channels plus a low-frequency effects (LFE) channel. With Dolby AC-3 (Dolby Digital) and MPEG-2 audio compression, 5.1 is encoded onto six discrete information channels, with no matrix crosstalk between them. Often, a broadcaster will not send any of the LFE information, resulting in what is commonly called 3/2 encoding, which is three front channels plus two rear surround channels.

Due to the large amount of legacy stereo content available, many broadcasts are still sent in 2/0 mode, or regular stereo. This is also true for a large amount of new content because of the extra workflow (read: expense) involved in creating a five-channel mix. However, much stereo content has already been generated with Dolby Surround encoding, and this will benefit from appropriate decoding. Thus, broadcasters will often take existing Dolby Surround-encoded material and transmit it in 2/0 mode. ATSC A/52 includes a 2-bit field in the bit stream information (BSI) header that can indicate whether a 2/0 transmission is matrix surround encoded. Many (perhaps all) AC-3 decoders include Pro Logic decoding when 2/0 content is received, so such a transmission will be reproduced in Dolby Surround if the transmission mode is set correctly and the receiver is equipped to decode it.

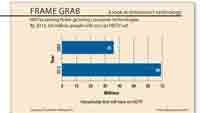

Some broadcasters will just lock the AC-3 encoder into 3/2 mode and send the stereo material in L/R. With no audio on the other channels, the encoder will allocate very few bits to them, so this is no big waste of bandwidth. However, a bigger problem exists, as can be seen in Figure 1. If surround-encoded 2/0 Lt and Rt content is put into the L/R of a 3/2 delivery, it will come out of only the L/R outputs of the five-channel decoder. The Pro Logic decode function will not activate, and the result is plain stereo with a hollow center (also known as the hole in the middle). Thus, broadcasters should always switch the transmission to 2/0 if only two channels are sourced.
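The signaling logic described above can be sketched as follows. The field names acmod (audio coding mode) and dsurmod (Dolby Surround mode) and their bit codes are taken from ATSC A/52. The function playback_path and the strings it returns are hypothetical, and the receiver behavior is modeled on the description in this article rather than on any particular product.

```python
# Audio coding mode (acmod), 3 bits. Only the two modes discussed here.
ACMOD_2_0 = 0b010  # L, R
ACMOD_3_2 = 0b111  # L, C, R, Ls, Rs

# Dolby Surround mode (dsurmod), 2 bits. Present in the bit stream
# only when acmod signals 2/0.
DSURMOD_NOT_INDICATED = 0b00
DSURMOD_NOT_SURROUND = 0b01
DSURMOD_SURROUND = 0b10

def playback_path(acmod, dsurmod=None):
    """Return how an idealized receiver would treat the program (assumed model)."""
    if acmod == ACMOD_2_0:
        if dsurmod == DSURMOD_SURROUND:
            return "Pro Logic decode of Lt/Rt"
        return "plain stereo"
    if acmod == ACMOD_3_2:
        # The decoder sees discrete channels and has no reason to
        # matrix-decode whatever happens to be in L and R.
        return "discrete 3/2 output, no matrix decode"
    return "other coding mode"

correct = playback_path(ACMOD_2_0, DSURMOD_SURROUND)
locked_encoder = playback_path(ACMOD_3_2)
```

With the encoder locked in 3/2, there is no dsurmod field for the decoder to read, so the surround information buried in Lt/Rt never reaches the rear speakers.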

Some broadcasters will also generate a pseudo-surround by synthesizing surround from a stereo signal and transmitting it in 3/2 mode. This is an equally bad practice, as the downmix to a stereo playback will ruin the original stereo mix.
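A small numeric sketch shows how this can happen. The upmixer below is a deliberately crude, hypothetical one (real products are far more sophisticated), and the downmix uses the basic left-only/right-only form with center and surround mix levels of 0.707, one of the values AC-3 allows. Output normalization is ignored.

```python
CLEV = 0.707  # center mix level (-3 dB)
SLEV = 0.707  # surround mix level (-3 dB)

def toy_upmix(left, right):
    """Hypothetical pseudo-surround: derive center and a mono surround."""
    center = 0.5 * (left + right)
    surround = 0.5 * (left - right)
    # The same derived surround feeds both rear channels.
    return left, center, right, surround, surround

def stereo_downmix(left, center, right, left_sur, right_sur):
    """Fold five channels back to two, as a stereo receiver would."""
    lo = left + CLEV * center + SLEV * left_sur
    ro = right + CLEV * center + SLEV * right_sur
    return lo, ro

# An instrument panned hard left in the original stereo mix.
lo, ro = stereo_downmix(*toy_upmix(1.0, 0.0))
```

Here lo works out to about 1.707 and ro to about 0.707, so a sound that was originally only in the left speaker now also appears in the right, roughly 7.7 dB down. The stereo viewer no longer hears the mix that was produced.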

The ATSC standard includes a method for carrying AC-3 within a serial digital audio stream. By conforming to the IEC 958 (now IEC 60958) logical format, AC-3 can be carried on AES3 interfaces as well as S/PDIF and embedded with video into SDI. Dolby recommends that networks distribute their 5.1-channel programming using Dolby E, a technology designed for optimal transmission of multichannel audio through stereo infrastructures. Like AC-3, Dolby E can be carried over discrete or embedded interfaces. At the local stations, the Dolby E stream can be routed or switched as necessary before being decoded and then encoded into Dolby Digital 5.1 for DTV broadcast to consumers.
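For readers who want to see what carrying AC-3 in a two-channel digital audio stream can look like, here is a minimal sketch of wrapping one AC-3 frame as a data burst. It assumes the burst layout of IEC 61937, a standard not named above: a four-word preamble followed by the frame, zero-padded to the AC-3 frame period of 1536 sample periods. The function name wrap_ac3_frame is hypothetical, and byte ordering on a real interface, channel-status bits and validity flags are all left out.

```python
import struct

PA, PB = 0xF872, 0x4E1F   # preamble sync words (assumed per IEC 61937)
DATA_TYPE_AC3 = 0x0001    # burst-info value for AC-3 (assumed per IEC 61937)

# One AC-3 frame spans 1536 sample periods; each period carries two
# 16-bit words (one per subframe), so 4 bytes per period.
BURST_BYTES = 1536 * 4

def wrap_ac3_frame(frame: bytes) -> bytes:
    """Return one fixed-size burst holding a single AC-3 frame."""
    if len(frame) % 2:
        frame += b"\x00"  # pad to a whole number of 16-bit words
    if len(frame) + 8 > BURST_BYTES:
        raise ValueError("frame too large for one burst")
    # Preamble words: Pa, Pb, Pc (data type), Pd (payload length in bits).
    preamble = struct.pack(">4H", PA, PB, DATA_TYPE_AC3, len(frame) * 8)
    burst = preamble + frame
    # Zero stuffing fills the rest of the frame period.
    return burst + bytes(BURST_BYTES - len(burst))

# Dummy 768-byte frame starting with the AC-3 sync word 0x0B77.
burst = wrap_ac3_frame(b"\x0b\x77" + bytes(766))
```

Because the burst occupies the same number of sample periods as the audio it represents, downstream equipment sees a continuous stream at the normal sample rate, which is what lets the compressed data pass through AES3 routers and SDI embedders.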

Metadata can be used to carry channel configuration information, allowing proper switching of audio coding modes. However, there is no guarantee that this metadata (or the embedded audio) will be carried intact through all SDI devices. This is a particularly vexing problem with ad hoc systems that must be set up and taken down as requirements change. For this reason, there must be a standard protocol set up for all devices to be used in the video and audio chain. Also, installation of each piece of equipment must be followed by a rigorous confidence check to ensure that all audio content and metadata are carried properly.

Maintain proper audio levels

The subject of dialog level and how to set the Dolby Digital dialnorm parameter has been covered in this magazine extensively and will not be repeated here. However, one situation has come to mind that should never be tolerated. Never process your audio with a standard NTSC broadcast limiter! Such a device was designed to account for the high-frequency pre-emphasis of analog NTSC audio transmission and therefore applies limiting based on a rising pre-emphasis curve. DTV audio carries no such pre-emphasis, so the limiter will clamp down on high-frequency content that poses no overload risk. If there is a piece of legacy analog equipment that you just can't part with, for whatever reason, make sure that it does not process audio based on any kind of pre-emphasis or equalization curve. Study the operation manual (or better yet, the schematics, if possible) to convince yourself of this.

Similarly, the dynamic range of your audio should be judiciously maintained. NBC Universal (NBCU) and other broadcasters are now implementing procedures to help station engineers establish and maintain proper station loudness levels, and NBCU has urged the industry to adopt similar procedures. Subjectively producing proper levels is a labor-intensive process, so it's important to at least use an appropriate loudness-sensitive processor to maintain the proper dialnorm and dynamic range levels.

Monitoring is not simple

While various tools exist to monitor multichannel audio, and these should be used to verify that all channels are present and accounted for, surround production inherently produces a dynamic situation that cannot be deemed correct by a simple viewing of a display. (See Figure 2.)

For example, since dialog will not always be present, it is not unusual for the center channel to be silent for periods of time. Similarly, while a production will almost always have audio on the left and right channels, the surround levels will often vary widely, and surround audio may also be absent for considerable lengths of time.

These tools can monitor various aspects of the audio, such as levels, interchannel phase and loss of audio. They can also be placed at various key points throughout a broadcast plant, allowing for a centralized monitoring of the signals. Currently, however, the best these tools can do is to monitor and display the various parameters of these signals. There is no way to sound an alarm when the condition is different from the way it was originally produced. For example, how do you know if a channel that has been quiet for five minutes isn't supposed to be that way?
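The kind of loss-of-audio measurement described above can be sketched in a few lines. This is a minimal illustration, not any vendor's method: the -60 dBFS threshold, the function names and the channel labels are all assumptions, and samples are taken to be floating-point values between -1.0 and 1.0.

```python
import math

SILENCE_DBFS = -60.0  # assumed threshold below which a block counts as silent

def rms_dbfs(samples):
    """RMS level of a block of samples, in dB relative to full scale."""
    if not samples:
        return float("-inf")
    mean_sq = sum(s * s for s in samples) / len(samples)
    return 10 * math.log10(mean_sq) if mean_sq > 0 else float("-inf")

def update_silence(silent_seconds, blocks, block_seconds):
    """Track how long each channel has been continuously silent.

    blocks maps a channel name to its latest block of samples.
    """
    for channel, samples in blocks.items():
        if rms_dbfs(samples) < SILENCE_DBFS:
            silent_seconds[channel] = silent_seconds.get(channel, 0.0) + block_seconds
        else:
            silent_seconds[channel] = 0.0  # any audio resets the timer
    return silent_seconds

state = {}
for _ in range(2):
    update_silence(state, {"L": [0.5] * 480, "C": [0.0] * 480}, 0.01)
```

The timer can report that the center channel has been silent for a given time, but nothing in the signal says whether that silence was intended, which is exactly the limitation noted above.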

The right tools are available

Thankfully, there are products available today that can solve many of the problems mentioned here, combining dynamics processing for loudness control with surround sound upmixing, handling metadata, processing Lt-Rt downmixes and ensuring that two-channel audio being sent to consumers is not wrongly signaled as 5.1-channel. Work remains to be done in the area of automated monitoring and should include a combination of metadata and signal processing. The best way to operate a multichannel sound facility is to give maximum fidelity to the original production, whether it was monophonic, stereophonic or true multichannel. The best promise of digital television is to enhance the prior art, not to use gimmicks to pretend that something is what it is not.

Aldo Cugnini is a consultant in the digital television industry.

Send questions and comments to: aldo.cugnini@penton.com