The IP revolution reaches playout

Television facilities are gradually evolving and are becoming more IP/networking-centric in many areas. IP networking infrastructure has been built up throughout facilities primarily to handle the exchange of files from one storage device or file-based system to another. Files have been successfully navigating the IP domain within television and playout facilities for some time.

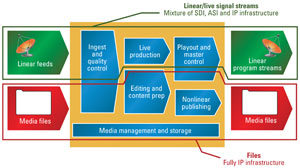

IP networking makes perfect sense for file-based processes, but what about real-time program streams? Real-time or linear program streams are in use in many areas of a facility. (See Figure 1.) Today, IP networking is commonly used for real-time program streams at the edges of the facility, typically in the incoming feeds area or at the point of outbound transmission. At these points, the stream is encoded and compressed for transport. Take that further, and here is the killer question: Could IP networking be used throughout a facility?

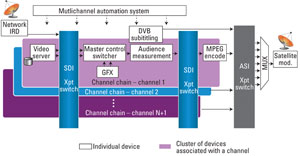

Figure 1. Real-time or linear program streams are used in many areas of today's facilities, following a model like this one.

Headends take an IP plunge

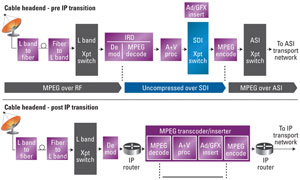

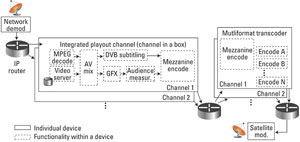

Anyone unsure about the suitability of IP networking infrastructure to carry real-time program streams need only look at the radical transformation that has occurred in cable, DTH and IPTV headends in the past 10 years. (See Figure 2.) In the beginning, these headends accepted a combination of compressed and uncompressed signals and employed a combination of SDI and ASI infrastructures. Now, these headends have transitioned to IP networking infrastructures, chiefly because the signals they transport are already compressed when they arrive. Those signals remain compressed either all the way to the home or, for cable systems that still support analog tiers, until they are decoded close to the home, where the signal is modulated to RF. But what else drove TV delivery headends to IP?

Figure 2. This shows the transition to IP infrastructure in headends.

The transition to IP was facilitated by the functional integration of devices, which led to the development and proliferation of compressed-domain splicing systems. Today, traditional MPEG decoders and encoders are being replaced by integrated systems that feature IP inputs and outputs and that combine ad and graphics insertion with transcoding from one compression format to another, or from one bit rate to another, inside a single box. With these integrated transcoders, a signal can be demodulated at the entrance of the facility and stay in IP until it reaches the integrated processing chain. There, it is transcoded internally while insertions and other processing take place, so routing can remain in the IP realm. This integration has made it easier to scale to more channels. It also simplifies redundancy within a flexible, routable environment that is compatible with the telecom networking gear already in use there. Having switched their video infrastructure to IP, headends can share the same infrastructure for TV, telephony and Internet data services.


Could IP replace SDI?

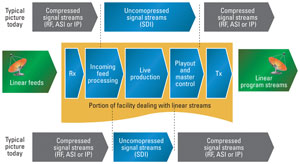

In cable headends, the case was clear. But what about a TV station or multichannel origination facility? Some in the industry, and many from outside it, think Ethernet/IP networking should replace SDI everywhere in a TV plant, and many expect the transition from SDI to Ethernet to happen gradually. In considering this transition, we follow something of a commonsense rule: “If the real-time video signal is already encoded/compressed, then it is practical to carry it over an IP infrastructure. But if you are inside a facility and have to encode a signal just to switch and distribute it within the facility, then it may be less practical or economical to use an IP infrastructure.”

Based on this rule, there are specific areas within a television production and origination facility where Ethernet/IP networking for real-time programs makes sense, and other areas where SDI/HD SDI makes more sense. (See Figure 3.)

Figure 3. This shows the use of compressed and uncompressed linear signal streams in a typical television broadcast/production facility.

Production

There are several reasons why an IP/Ethernet infrastructure may be less practical today in a live production environment. Many sources in a live production environment are not natively compressed (cameras, graphics, etc.), and there is a desire to keep them uncompressed to maximize quality and, more importantly, to avoid encode/decode delays. One could consider leaving these sources uncompressed and routing them using Ethernet/IP equipment instead of SDI. But, the high bit rates associated with uncompressed video, and the large number of sources in a modern live production, make this impractical today.

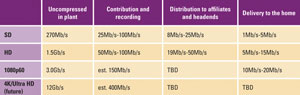

The bit rates for uncompressed video are large, ranging from 270Mb/s for SD through 1.5Gb/s for HD up to 12Gb/s for 4K. (See Table 1.) If we were talking about only a few uncompressed sources, IP/Ethernet equipment would be workable. But it is common to have 1000 x 1000 router matrices in a large production facility, and some larger facilities are now at 2000 x 2000. The Ethernet networking gear currently available is just not economically viable at those rates and fabric sizes, although it will become more feasible as the price per 10GigE port decreases.

Table 1. Typical bit rates for uncompressed video can be large, ranging from 270Mb/s to 12Gb/s.
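The figures in Table 1 follow from the total raster (active picture plus blanking) that SDI serializes: samples per line x lines per frame x frame rate x 2 words per sample (luma plus alternating Cb/Cr in 4:2:2) x 10 bits. The Python sketch below reproduces them and then sizes a router matrix. The raster totals are the standard ones for 525-line SD, 1080-line HD and 2160p60 12G-SDI; packing whole streams onto 10GigE ports with no packet overhead is a simplifying assumption, and the function names are illustrative only.

```python
from fractions import Fraction
from math import ceil, floor

# Total raster (active + blanking) per format:
# (samples per line, lines per frame, frames per second).
FORMATS = {
    "SD 525i (29.97 frames/s)": (858, 525, Fraction(30000, 1001)),
    "HD 1080i (30 frames/s)": (2200, 1125, Fraction(30)),
    "4K 2160p (60 frames/s)": (4400, 2250, Fraction(60)),
}

WORDS_PER_SAMPLE = 2  # one luma word plus one alternating Cb/Cr word (4:2:2)
BITS_PER_WORD = 10    # SDI carries 10-bit words


def sdi_bit_rate(samples_per_line, lines_per_frame, frame_rate):
    """Serial bit rate (bits/s) of an uncompressed 4:2:2, 10-bit SDI signal."""
    return (samples_per_line * lines_per_frame * frame_rate
            * WORDS_PER_SAMPLE * BITS_PER_WORD)


def matrix_load(inputs, stream_bps, port_bps=10_000_000_000):
    """Return (aggregate input bits/s, streams per port, ports needed).

    Assumes streams are packed whole onto ports with no packet overhead,
    which is optimistic; real IP encapsulation needs extra headroom.
    """
    streams_per_port = floor(Fraction(port_bps) / stream_bps)
    if streams_per_port == 0:
        raise ValueError("a single stream does not fit on one port")
    return inputs * stream_bps, streams_per_port, ceil(inputs / streams_per_port)


if __name__ == "__main__":
    for name, raster in FORMATS.items():
        print(f"{name}: {float(sdi_bit_rate(*raster)) / 1e9:.3f} Gb/s")
    hd = sdi_bit_rate(*FORMATS["HD 1080i (30 frames/s)"])
    for size in (1000, 2000):
        total, per_port, ports = matrix_load(size, hd)
        print(f"{size} HD inputs: {float(total) / 1e12:.3f} Tb/s, "
              f"{per_port} streams per 10GigE port, {ports} ports")
```

Under those assumptions, a 1000 x 1000 HD matrix needs about 1.5 Tb/s of input bandwidth, or 167 fully packed 10GigE ports for the inputs alone, before any outputs are counted. The 4K raster does not fit on a single 10GigE port at all, so `matrix_load` raises `ValueError` for it.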

IP’s next use in a facility

Based on our rule that IP infrastructure is practical when signals are already compressed, we think IP networking infrastructure begins to make a lot of sense in the playout area. This is the area where automation systems, playout servers, branding, subtitle insertion and delivery encoding combine to deliver real-time program streams to increasingly diverse distribution platforms. Consider a typical multichannel playout system as it exists today in an SDI-centric world, and compare it with a more IP-centric approach. (See Figure 4.)

Figure 4. Shown here is a multichannel playout system in an SDI-centric world.

Why migrate playout to IP?

Three main factors are motivating the migration to an IP infrastructure in playout. The first is integration. In the past, a playout chain consisted of many discrete devices, each interconnected by SDI and supported by SDI routing to allow reassignment of resources and redundancy protection. Today, broadcasters, particularly multichannel broadcasters, use integrated playout systems, often called channel-in-a-box systems, that combine most of the functionality of a channel chain in a single device, typically software running on a standard computer server. These integrated systems accept one or two inputs for live programming but perform all other master control functions inside the box. The output of the integrated channel box is typically a delivery-ready real-time stream on SDI, although a few vendors now offer an option for an encoded (compressed) output over IP.

The second factor is that facilities increasingly need multiple “deliverable” bit rates: they must feed secondary outputs in OTT formats alongside primary outputs for main distribution systems, for example ATSC or DVB-T for terrestrial transmission or DVB-S for satellite transmission. Where a standalone encoder is used today to compress the channel's stream into a single output, in the future, encoders will be replaced by transcoders that support IP inputs and outputs and provide multiple delivery formats. As in cable headends, these IP-in/IP-out transcoders enable the transition to IP infrastructure.

The third factor is topology. There is often a separation between playout and uplink or delivery functions, and with IP connectivity available between those points, the interconnect between playout and uplink is a natural place to start migrating to IP.

Within this context, it is now possible and sensible to link the integrated playout device to the transmission transcoding devices using an IP infrastructure. The output of the channel chain is encoded within the playout chain and delivered to the transmission transcoding device over IP. But rather than providing a final delivery-grade encode, the playout device provides a higher-bit-rate mezzanine encode.

Mezzanine encoding

A mezzanine encode is a low-compression encode resulting in an intermediate bit rate. The ideal mezzanine rate is high enough to preserve quality through multiple generations of transcoding, yet low enough to be transported efficiently and cost-effectively around the facility and to be transcoded easily into the multiple output formats needed for playout distribution.

Preferably, the mezzanine would be a common format already used for recording original video, one that is good enough for editing. One example is Sony XDCAM HD. It is widely used, supports good-quality 4:2:2, 8-bit compression, and is easy and economical to encode. XDCAM HD is also compatible with MXF, allowing carriage of multiple audio tracks and SMPTE 436M ancillary data. In the playout application, mezzanine encoding makes sense because it keeps the encoding in the integrated playout device simple and gives the transcoder the highest possible base quality to work with.
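To see why the intermediate rate matters for transport, the Python sketch below compares how many streams fit on one 10GigE link. It assumes a 50 Mb/s mezzanine (the nominal video rate of the 4:2:2 XDCAM HD422 variant, treated here as the whole transport-stream rate for simplicity) carried as an MPEG-2 transport stream in RTP/UDP/IPv4 with the common packing of seven 188-byte TS packets per datagram; those encapsulation choices are assumptions, not something the playout design above prescribes.

```python
TS_PACKET_BYTES = 188
TS_PACKETS_PER_DATAGRAM = 7              # common packing: 7 x 188 = 1316-byte payload
RTP_UDP_IPV4_HEADER_BYTES = 12 + 8 + 20  # RTP + UDP + IPv4 (no options)
ETHERNET_WIRE_BYTES = 14 + 4 + 8 + 12    # header, FCS, preamble/SFD, interframe gap

MEZZANINE_TS_BPS = 50_000_000            # assumption: 50 Mb/s XDCAM HD422-class stream
UNCOMPRESSED_HD_BPS = 1_485_000_000      # HD-SDI serial rate
LINK_BPS = 10_000_000_000                # one 10GigE port


def wire_rate(ts_bps):
    """Bits/s on the wire for a TS carried in RTP/UDP/IPv4 over Ethernet."""
    payload = TS_PACKET_BYTES * TS_PACKETS_PER_DATAGRAM
    on_wire = payload + RTP_UDP_IPV4_HEADER_BYTES + ETHERNET_WIRE_BYTES
    return ts_bps * on_wire / payload


def streams_per_link(stream_bps, link_bps=LINK_BPS):
    """Number of whole streams that fit on one link."""
    return int(link_bps // stream_bps)


if __name__ == "__main__":
    mezz = wire_rate(MEZZANINE_TS_BPS)
    print(f"mezzanine on the wire: {mezz / 1e6:.1f} Mb/s, "
          f"{streams_per_link(mezz)} per 10GigE link")
    print(f"uncompressed HD: {streams_per_link(UNCOMPRESSED_HD_BPS)} per 10GigE link")
```

Under these assumptions one 10GigE link carries 188 mezzanine streams but only 6 uncompressed HD signals, and the HD figure is generous because it ignores packet overhead entirely.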

Benefits and challenges

The benefits of an IP networking infrastructure are abundant. First, every signal becomes flexibly and fully routable: all source signals are wrapped in a common form and are compatible with all destinations. We can create a truly redundant architecture that protects against single points of failure. We can use generic IP switches, which are already present in many facilities and easy to acquire. And because these switches handle standard IP inputs and outputs, they integrate easily with other IP systems, such as subtitle insertion devices.

Many points in the chain can be monitored with standard IP monitoring tools. Creating an IP infrastructure may eventually allow us to virtualize the playout device as a software application in a virtual machine, allowing several instances to run simultaneously and further enhancing scalability.
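Because streams on the network are just packets, a probe can inspect them in software. As a minimal sketch, assuming the mezzanine is carried as an MPEG-2 transport stream (an assumption; the container on the wire is not specified above), the Python below checks TS continuity counters, one of the basic checks stream monitors perform to detect lost packets. The function names are illustrative, and `make_packet` builds synthetic packets for demonstration only.

```python
TS_PACKET_SIZE = 188
SYNC_BYTE = 0x47
NULL_PID = 0x1FFF


def make_packet(pid, cc):
    """Build a synthetic payload-only TS packet (demonstration only)."""
    header = bytes([SYNC_BYTE, (pid >> 8) & 0x1F, pid & 0xFF, 0x10 | (cc & 0x0F)])
    return header + bytes(TS_PACKET_SIZE - len(header))


def continuity_errors(data):
    """Return (packet_index, pid) for each continuity-counter jump.

    Simplifications: a repeated counter is accepted as a duplicate packet,
    and the adaptation field's discontinuity_indicator is ignored.
    """
    last_cc = {}
    errors = []
    for index in range(len(data) // TS_PACKET_SIZE):
        packet = data[index * TS_PACKET_SIZE:(index + 1) * TS_PACKET_SIZE]
        if packet[0] != SYNC_BYTE:
            raise ValueError(f"lost sync at packet {index}")
        pid = ((packet[1] & 0x1F) << 8) | packet[2]
        has_payload = (packet[3] >> 4) & 0x01  # adaptation_field_control 01 or 11
        cc = packet[3] & 0x0F
        if pid == NULL_PID or not has_payload:
            continue  # the counter only advances on packets that carry payload
        previous = last_cc.get(pid)
        if previous is not None and cc not in (previous, (previous + 1) % 16):
            errors.append((index, pid))
        last_cc[pid] = cc
    return errors


if __name__ == "__main__":
    stream = b"".join(make_packet(0x100, n % 16) for n in range(20))
    # Drop the sixth packet to simulate loss on the network.
    damaged = stream[:5 * TS_PACKET_SIZE] + stream[6 * TS_PACKET_SIZE:]
    print(continuity_errors(stream), continuity_errors(damaged))
```

In practice the probe would feed the payload of each received UDP datagram into a check like this, per multicast group, and raise an alarm on any reported jump.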

There will also be challenges in moving to an IP-based playout infrastructure, similar to those faced when TV delivery transitioned to an IP infrastructure. (See Figure 5.) Physically, the structure becomes much more complex. Before, it was simple: one wire carried one signal to one port. Now, with potentially several signals per wire, routing becomes less obvious. Where exactly is each signal? Can I just take this wire and plug it in elsewhere? No. There is no simple way to patch around a point of failure, because a wire no longer carries a single signal. When a failure does occur, it is difficult to work around, and it can take many signals down at once.

Figure 5. Challenges exist when moving to an IP-based infrastructure like this one.

Luckily, IP networks have the ability to provide excellent redundancy to prevent a potential massive failure. IP network topologies can ease the creation of truly redundant architectures, which prevent many catastrophes. By simply combining two channel chains along with two switches, every routable path becomes redundant. A failure in one chain or one switch will not prevent the signal passage. Actually, we could lose both a switch and a channel chain and still be OK. This redundancy allows the broken node to be serviced without disrupting normal operations.
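The failure cases in the two-chain, two-switch arrangement are few enough to check exhaustively. This Python sketch assumes a full mesh, in which each channel chain is cabled to both switches and the destination (for example, the transmission transcoder) is also cabled to both switches; the node names are hypothetical.

```python
from itertools import combinations, product

CHAINS = ("chain_A", "chain_B")      # hypothetical channel-chain names
SWITCHES = ("switch_X", "switch_Y")  # hypothetical IP switch names


def signal_survives(failed):
    """True if a surviving chain reaches the destination through a surviving
    switch, assuming every chain and the destination connect to both switches."""
    return any(chain not in failed and switch not in failed
               for chain, switch in product(CHAINS, SWITCHES))


def failure_report(count):
    """Map every combination of `count` failed nodes to whether the signal survives."""
    nodes = CHAINS + SWITCHES
    return {combo: signal_survives(set(combo)) for combo in combinations(nodes, count)}


if __name__ == "__main__":
    for combo, ok in failure_report(2).items():
        print(" + ".join(combo), "->", "OK" if ok else "OFF AIR")
```

Every single failure is survivable, and of the double failures only losing both chains or both switches takes the channel off air, which is consistent with the claim that losing one switch and one chain together is still OK.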

Managing signal routing also becomes more complex. With SDI routing, it is common to find simple router control panels that allow operators with basic training to quickly make changes to signal routing. In an IP routing environment, routing is typically a system administration task. Tools will have to be developed to make setting routes simpler and more operator-friendly.
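One shape such tools might take is a thin control layer that keeps the familiar "take" operation of an SDI router panel while translating it into multicast leaves and joins underneath. The Python sketch below is hypothetical: the class, the source and destination names and the 239.x.x.x group addresses are illustrative assumptions, not any vendor's control protocol, and it assumes each source is published on its own multicast group and each destination receives one source at a time.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional, Tuple


@dataclass
class CrosspointPanel:
    """Hypothetical control layer that presents IP routing as SDI-style takes."""
    source_groups: Dict[str, str]                        # source -> multicast group
    routes: Dict[str, str] = field(default_factory=dict)  # destination -> source

    def take(self, destination: str, source: str) -> Tuple[Optional[str], str]:
        """Route `source` to `destination`.

        Returns (group_to_leave, group_to_join) for the destination's receiver;
        group_to_leave is None when the destination had no previous route.
        """
        if source not in self.source_groups:
            raise KeyError(f"unknown source: {source}")
        previous = self.routes.get(destination)
        leave = self.source_groups[previous] if previous is not None else None
        self.routes[destination] = source
        return leave, self.source_groups[source]


if __name__ == "__main__":
    panel = CrosspointPanel({"playout_ch1": "239.10.0.1", "playout_ch2": "239.10.0.2"})
    print(panel.take("tx_transcoder_1", "playout_ch1"))
    print(panel.take("tx_transcoder_1", "playout_ch2"))
```

The operator sees only sources and destinations; acting on the returned leave/join pair (for example, through IGMP on the receiving device or a network controller) is left out of this sketch.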

Conclusion

In the future, as IP/networking technology evolves, Ethernet port speeds increase and port costs decrease, IP migration will naturally spread to other areas of the facility. Technology is becoming increasingly IP-centric, and following this path will leave facilities prepared for whatever comes next. In the meantime, now is the time to become more IP-centric and knowledgeable.

—Sara Kudrle is a senior software engineer with Miranda, and Michel Proulx is the former chief technology officer for Miranda.