Signals and formats

Digital broadcast systems are increasingly dependent on signal and format conversion. Unexpected signal and format incompatibilities in new, unfamiliar equipment have caused interoperability problems in many installations and stalled system commissioning.

In the analog world, signal conversion included processes such as transforming between serial and parallel control; producing subcarrier, horizontal and vertical pulses from composite sync; modulating a baseband signal on an RF carrier; and frequency up/downconversion. Format conversion generally meant converting between RGB and Y, R-Y, B-Y.

DTV offers a toolkit and leaves presentation and compression format choices to each broadcaster. Video can be 1080p, 1080i or 720p. Compression formats include DV, AVC, VC-1, HDV, JPEG2000, MPEG-1 and MPEG-2. Audio can be mono, stereo or 5.1, carried as PCM, AES3, Dolby E, AC-3 or MP3. Most facilities handle a mixture of these formats.

As challenging as this is, the influx of IT- and computer-based broadcast systems has introduced new technology layers (networks, storage, various operating systems and security) as well as new format interoperability issues.

This technological diversity has greatly increased the types of signal and format conversions required for daily broadcast operations compared with the predigital era. Solving unanticipated issues after a system is installed and at least partially operational costs time and money. It's better to consider all conversion scenarios before committing to a design.

Signal or format?

One engineer's format is another's signal. Is SDI a signal or a format? The distinction between signals and formats can be stated simply but precisely: signals are physical, while formats are methods used to convey information.


The physical attributes that constitute a signal include voltage level, logic technology, such as Transistor-Transistor Logic (TTL) or low-voltage differential signaling (LVDS), and medium (optical or electrical). A signal conversion changes any or all of these characteristics.

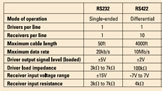

A description of the RS-232, RS-422 and RS-485 standards illustrates the differences between signals and formats. The physical attributes of each standard are listed in Table 1. Figure 1 is a conceptual schematic diagram of how each standard is implemented on the physical layer. Figure 2 shows the common data format used by all three standards. Note that there is a clear distinction between the different physical signals, while the same data format is used.
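The shared data format can be sketched in Python. This minimal example assumes the usual asynchronous character frame (one start bit, data bits sent least-significant bit first, an optional parity bit and one or more stop bits); the exact framing shown in Figure 2 may differ. The function names are hypothetical, and the ±12 V RS-232 swing is only an illustrative value.

```python
from typing import List, Optional

def frame_byte(value: int, data_bits: int = 8, parity: Optional[str] = None,
               stop_bits: int = 1) -> List[int]:
    """Logical bit sequence for one asynchronous serial character.

    Assumed layout (typical UART framing): start bit (0), data bits
    least-significant bit first, optional parity bit, stop bit(s) (1).
    """
    if not 0 <= value < (1 << data_bits):
        raise ValueError("value does not fit in data_bits")
    data = [(value >> i) & 1 for i in range(data_bits)]  # LSB first
    bits = [0] + data                                    # start bit + data
    if parity == "even":
        bits.append(sum(data) % 2)        # total count of ones becomes even
    elif parity == "odd":
        bits.append(1 - sum(data) % 2)    # total count of ones becomes odd
    elif parity is not None:
        raise ValueError("parity must be None, 'even' or 'odd'")
    return bits + [1] * stop_bits         # stop bit(s) return the line to idle

def rs232_levels(bits: List[int], swing: float = 12.0) -> List[float]:
    """Map logical bits to RS-232 line voltages: a one (mark) is negative and
    a zero (space) is positive. The 12 V swing is an illustrative assumption."""
    return [-swing if b else swing for b in bits]

frame = frame_byte(0x41)         # ASCII "A", 8 data bits, no parity, 1 stop bit
print(frame)                     # [0, 1, 0, 0, 0, 0, 0, 1, 0, 1]
print(rs232_levels(frame)[:3])   # [12.0, -12.0, 12.0]
```

An RS-422 or RS-485 driver would transmit exactly the same bit list; only the electrical representation (differential rather than single-ended, with different voltage levels) changes.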

Yet digital data communication also has a signal component: the method used to physically convey ones and zeros. Types of digital signals include non-return-to-zero (NRZ), non-return-to-zero-inverted (NRZI) and Manchester/biphase mark. (See Figure 3, below.) Another consideration is that logic signals can be positive, where a high voltage represents a one, or negative, where a low voltage represents a one. A difference in physical signals is why some equipment can lock to an SDI (NRZI) input while an ASI (NRZ) input causes problems.
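The line codes can be compared with a short sketch. It assumes the NRZI convention in which a one is sent as a transition (the convention SDI uses) and models biphase mark as two half-bit cells per data bit; `invert` stands in for a negative-logic or polarity-reversed line. All function names are hypothetical.

```python
from typing import List

def nrz(bits: List[int]) -> List[int]:
    """NRZ, positive logic: high level for a one, low level for a zero."""
    return list(bits)

def nrzi(bits: List[int], initial: int = 0) -> List[int]:
    """NRZI: a one toggles the line level; a zero leaves it unchanged."""
    level, out = initial, []
    for b in bits:
        if b:
            level ^= 1
        out.append(level)
    return out

def nrzi_decode(levels: List[int], initial: int = 0) -> List[int]:
    """Recover NRZI data from transitions alone (absolute level is ignored)."""
    prev, out = initial, []
    for v in levels:
        out.append(1 if v != prev else 0)
        prev = v
    return out

def biphase_mark(bits: List[int], initial: int = 0) -> List[int]:
    """Biphase mark: two half-cells per bit; the level toggles at every cell
    boundary and toggles again mid-cell to encode a one."""
    level, out = initial, []
    for b in bits:
        level ^= 1          # transition at the start of every bit cell
        out.append(level)
        if b:
            level ^= 1      # extra mid-cell transition for a one
        out.append(level)
    return out

def invert(levels: List[int]) -> List[int]:
    """Swap high and low, as negative logic or a reversed pair would."""
    return [1 - v for v in levels]

bits = [1, 0, 1, 1, 0]
print(nrzi(bits))          # [1, 1, 0, 1, 1]
print(biphase_mark(bits))  # [1, 0, 1, 1, 0, 1, 0, 1, 0, 0]
print(nrzi_decode(invert(nrzi(bits)), initial=1))  # [1, 0, 1, 1, 0]
```

Because NRZI carries data in transitions rather than absolute levels, an inverted signal still decodes to the same bits, whereas NRZ data read with the wrong polarity comes out inverted.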

Presentation and compression formats

The term format is harder to describe and often used loosely. Two broad categories cover the way the term is used with respect to audio and video. First is the concept of presentation format. For video, this includes pixel grid, refresh rate, scanning method, color space and aspect ratio. For audio, it includes the number of speakers, delivered services and sample rate.
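One way to make the video presentation-format attributes concrete is to describe each format as a record and list the conversions needed when any attribute differs. This Python sketch covers only the video attributes named above; the class, field names and the 525-line SD, 1080i and 720p example values are illustrative assumptions.

```python
from dataclasses import dataclass
from fractions import Fraction
from typing import List

@dataclass(frozen=True)
class VideoFormat:
    width: int
    height: int
    frame_rate: Fraction     # frames per second, exact (e.g. 30000/1001)
    scan: str                # "interlaced" or "progressive"
    color_space: str         # e.g. "BT.601", "BT.709"
    aspect_ratio: Fraction   # display aspect ratio, e.g. 16/9

# Each presentation attribute group and the conversion needed when it differs.
CONVERSIONS = (
    ("pixel grid", ("width", "height")),
    ("refresh rate", ("frame_rate",)),
    ("scanning method", ("scan",)),
    ("color space", ("color_space",)),
    ("aspect ratio", ("aspect_ratio",)),
)

def required_conversions(src: VideoFormat, dst: VideoFormat) -> List[str]:
    """List every presentation conversion needed to go from src to dst."""
    return [label for label, attrs in CONVERSIONS
            if any(getattr(src, a) != getattr(dst, a) for a in attrs)]

SD_525 = VideoFormat(720, 486, Fraction(30000, 1001), "interlaced", "BT.601", Fraction(4, 3))
HD_1080I = VideoFormat(1920, 1080, Fraction(30000, 1001), "interlaced", "BT.709", Fraction(16, 9))
HD_720P = VideoFormat(1280, 720, Fraction(60000, 1001), "progressive", "BT.709", Fraction(16, 9))

print(required_conversions(SD_525, HD_1080I))   # ['pixel grid', 'color space', 'aspect ratio']
print(required_conversions(HD_1080I, HD_720P))  # ['pixel grid', 'refresh rate', 'scanning method']
```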

The need to support multiple presentation formats has complicated reference (sync) conversion. In the analog domain, NTSC and PAL sync were sufficient. DTV requires reference signals for 1080i and 720p at rates such as 59.94, 50 and 23.98 Hz, among others, all of which must be routable. Then there are the added challenges of specialty syncs such as trilevel and Dolby Black.
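One reason each rate needs its own reference is that frame boundaries at different rates line up only occasionally. The sketch below, using exact fractions, computes how long two frame rates take to realign; the function name is hypothetical.

```python
from fractions import Fraction
from math import gcd

def realignment_period(rate_a, rate_b) -> Fraction:
    """Shortest time (s) after which frame boundaries of two rates coincide.

    Uses lcm(p/q, r/s) = lcm(p, r) / gcd(q, s) for reduced fractions."""
    pa, pb = 1 / Fraction(rate_a), 1 / Fraction(rate_b)  # exact frame periods
    num = pa.numerator * pb.numerator // gcd(pa.numerator, pb.numerator)
    return Fraction(num, gcd(pa.denominator, pb.denominator))

NTSC_RATE = Fraction(60000, 1001)   # "59.94"
FILM_RATE = Fraction(24000, 1001)   # "23.98"

t = realignment_period(NTSC_RATE, 50)
print(t, float(t))                   # 1001/50 20.02
print(t * NTSC_RATE, t * 50)         # 1200 1001
t = realignment_period(NTSC_RATE, FILM_RATE)
print(t * NTSC_RATE, t * FILM_RATE)  # 5 2
```

A 59.94 Hz and a 50 Hz reference share a frame boundary only once every 20.02 s, so one cannot simply be derived from the other; related rates such as 59.94 and 23.98 realign every five and two frames respectively.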

MPEG-2, AVC, VC-1, AC-3, Dolby Digital Plus and AAC are used on a daily basis in broadcast production workflows and transmission air chains. Conversion between compression formats is an integral part of broadcast operations and best handled transparently without explicit operator intervention.

Media file conversions

With the increased dependence on computing resources, network distribution, file storage and software, new dimensions have been added to the conversion scenario. For example, if graphics production is Mac-based, will content be portable to PC-based editing and compositing systems?

Consider the SMPTE/EBU definition of content as essence plus metadata. Differences in the essence and metadata (wrapper) formats supported by servers from different vendors can lead to interoperability problems. A conversion from an MXF MPEG-2 file to QuickTime AVC, for example, consists of two independent format conversions: essence and wrapper. The receiving device must be able to handle both the essence and the metadata wrapper for the file transfer to succeed.
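The two-part nature of a file conversion can be modeled directly. In this hypothetical sketch, a file is reduced to a wrapper name and an essence name, and a receiving device is described by the sets of wrappers and essences it supports; real interoperability also depends on profiles, MXF operational patterns and metadata details that this ignores.

```python
from dataclasses import dataclass
from typing import FrozenSet, List

@dataclass(frozen=True)
class MediaFile:
    wrapper: str   # e.g. "MXF", "QuickTime"
    essence: str   # e.g. "MPEG-2", "AVC"

@dataclass(frozen=True)
class Device:
    name: str
    wrappers: FrozenSet[str]   # wrappers the device can ingest
    essences: FrozenSet[str]   # essence (compression) formats it can decode

def conversion_plan(src: MediaFile, dst: MediaFile) -> List[str]:
    """Essence and wrapper are converted independently; either may be a no-op."""
    steps = []
    if src.essence != dst.essence:
        steps.append(f"transcode essence {src.essence} -> {dst.essence}")
    if src.wrapper != dst.wrapper:
        steps.append(f"rewrap {src.wrapper} -> {dst.wrapper}")
    return steps

def can_accept(device: Device, f: MediaFile) -> bool:
    """A transfer succeeds only if the device handles both wrapper and essence."""
    return f.wrapper in device.wrappers and f.essence in device.essences

src = MediaFile("MXF", "MPEG-2")
dst = MediaFile("QuickTime", "AVC")
print(conversion_plan(src, dst))
# ['transcode essence MPEG-2 -> AVC', 'rewrap MXF -> QuickTime']
server_b = Device("server_b", frozenset({"QuickTime"}), frozenset({"MPEG-2"}))  # hypothetical
print(can_accept(server_b, dst))  # False: wrapper supported, AVC essence is not
```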

Compression conversions are complex. For example, MPEG-2 uses 8 × 8 blocks of pixels for its transform coding, while MPEG-4 AVC (H.264) allows pixel blocks of various sizes. Hence, an MPEG-2-to-AVC format converter must make decisions based on motion vectors to determine which block size yields the best combination of data reduction and image quality. This takes massive amounts of processing power and introduces delay into the video signal chain. Lip sync can be affected, further complicating system design.
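The block-size decision can be illustrated with a toy mode decision. This sketch assumes integer-pixel full-search motion estimation and compares only two options for a 16 × 16 area: one motion vector for the whole area or four vectors for its 8 × 8 sub-blocks, scored with a simplified cost of SAD (sum of absolute differences) plus a penalty for vector bits. Real AVC encoders consider more partition shapes, sub-pixel motion and true rate-distortion costs; `lam` and `mv_bits` are illustrative parameters, not values from any standard. Even this toy version evaluates 81 candidate vectors for each of five blocks, which hints at why the processing load is heavy.

```python
from typing import List, Tuple

Frame = List[List[int]]
MV = Tuple[int, int]  # (dx, dy)

def sad(cur: Frame, ref: Frame, bx: int, by: int, size: int, mv: MV) -> int:
    """Sum of absolute differences between a block and its displaced reference."""
    dx, dy = mv
    return sum(abs(cur[by + y][bx + x] - ref[by + y + dy][bx + x + dx])
               for y in range(size) for x in range(size))

def best_mv(cur: Frame, ref: Frame, bx: int, by: int, size: int, rng: int):
    """Full search over +/-rng pixels; returns (lowest SAD, motion vector)."""
    best = None
    for dy in range(-rng, rng + 1):
        for dx in range(-rng, rng + 1):
            inside = (0 <= by + dy and by + dy + size <= len(ref)
                      and 0 <= bx + dx and bx + dx + size <= len(ref[0]))
            if inside:
                cost = sad(cur, ref, bx, by, size, (dx, dy))
                if best is None or cost < best[0]:
                    best = (cost, (dx, dy))
    return best

def choose_partition(cur: Frame, ref: Frame, bx: int, by: int,
                     rng: int = 4, lam: int = 4, mv_bits: int = 8):
    """Pick one 16x16 vector or four 8x8 vectors by cost J = SAD + lam * vector bits."""
    sad16, mv16 = best_mv(cur, ref, bx, by, 16, rng)
    cost16 = sad16 + lam * mv_bits
    subs = [best_mv(cur, ref, bx + ox, by + oy, 8, rng) for oy in (0, 8) for ox in (0, 8)]
    cost8 = sum(s for s, _ in subs) + lam * mv_bits * len(subs)
    if cost16 <= cost8:
        return "16x16", [mv16]
    return "8x8", [mv for _, mv in subs]

def pattern(x: int, y: int) -> int:
    """Synthetic ramp so that every displacement in range is distinguishable."""
    return (7 * x + 13 * y) % 256

N = 32
ref = [[pattern(x, y) for x in range(N)] for y in range(N)]
# Whole area moves together: one vector should win.
uniform = [[pattern(x + 1, y + 2) for x in range(N)] for y in range(N)]
# Top-left 8x8 quadrant of the block moves differently: splitting should win.
split = [[pattern(x - 3, y + 1) if 8 <= x < 16 and 8 <= y < 16 else pattern(x + 1, y + 2)
          for x in range(N)] for y in range(N)]

print(choose_partition(uniform, ref, 8, 8))  # ('16x16', [(1, 2)])
print(choose_partition(split, ref, 8, 8))    # ('8x8', [(-3, 1), (1, 2), (1, 2), (1, 2)])
```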

Now consider metadata wrapper formats. In simple terms, there are two basic essence wrapping techniques. In one approach, the media file structure consists of a header, essence and possibly a footer. The entire file must be transferred to a device before playout can begin. In contrast to this technique, a frame-based methodology treats each frame as a file. This enables streaming. Playout can begin as soon as a complete frame file is received.
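The playout-start difference between the two wrapping techniques is simple arithmetic. This sketch assumes a constant essence bit rate, a transfer limited only by link speed and negligible header and footer size; the function names and example figures are illustrative, not measurements.

```python
def whole_file_start_delay(bitrate_bps: float, duration_s: float, link_bps: float) -> float:
    """Header/essence/footer file: playout waits for the complete transfer."""
    return bitrate_bps * duration_s / link_bps

def frame_based_start_delay(bitrate_bps: float, frame_rate: float, link_bps: float) -> float:
    """Frame-per-file wrapping: playout can begin once the first frame arrives."""
    return (bitrate_bps / frame_rate) / link_bps

# Assumed example: 50 Mb/s essence, 60 s clip, 1 Gb/s link, 25 frames/s.
print(whole_file_start_delay(50e6, 60, 1e9))   # 3.0
print(frame_based_start_delay(50e6, 25, 1e9))  # 0.002
```

The whole-file delay grows with clip duration, while the frame-based delay depends only on the size of one frame.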

Equipment evolution

Manufacturers are integrating numerous types of format conversion, once performed by standalone devices, into single pieces of equipment. Connecting multiple devices together into a processing chain is no longer always necessary.

However, functionality consolidation comes with a price. When a unit fails, it can knock out an entire conversion processing chain. This puts an increased emphasis on the need for redundancy. Additionally, not all conversion functions may be needed in a particular workflow, so devices might be underutilized.

A modular, frame-based system offers the flexibility to implement required conversions independently. Processing cards can be added or replaced as new conversion capabilities are required with little or no disruption to rack layouts.

Reducing conversion steps

Reducing conversion steps by using devices with integrated conversion capabilities simplifies system design and leads to more efficient production workflows. This implicit conversion also eliminates the bottlenecks that may develop at peak usage times, when shared conversion devices are in demand.

A solution to many SD/HD presentation conversion issues can be found in production switchers that feature integrated format conversion capabilities. These multiformat switchers accept SD-SDI and HD-SDI inputs, perform mixing operations and then simultaneously generate both SD and HD program outputs. This eliminates the need for discrete conversion devices and simplifies system design.

In plants that still have analog infrastructures, routers can be populated with I/O cards that implicitly convert analog audio and video (composite or component) to SDI or AES and back again, eliminating discrete converters and making conversion transparent. However, this can require feeding an advanced (early-timed) reference signal to the source to compensate for conversion delay and maintain proper vertical coincidence timing.
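The timing compensation can be worked through with exact arithmetic. This sketch assumes a converter with a fixed, known processing delay: whole frames of that delay remain as added latency (which audio must match to preserve lip sync), and the sub-frame remainder is the amount by which the source's reference would be advanced. The function name is hypothetical; 1125 is the total line count per frame of the 1080-line HD raster.

```python
from fractions import Fraction
from typing import Tuple

def reference_advance(delay_s, frame_rate, lines_per_frame: int) -> Tuple[int, Fraction]:
    """Split a fixed conversion delay into whole frames of added latency and
    the sub-frame reference advance, expressed in lines."""
    delay = Fraction(delay_s)
    frame_period = 1 / Fraction(frame_rate)
    line_period = frame_period / lines_per_frame
    whole_frames = int(delay // frame_period)
    residual = delay - whole_frames * frame_period   # part of the delay under one frame
    return whole_frames, residual / line_period

RATE_1080I = Fraction(30000, 1001)    # 1080i59.94 frame rate
LINE = 1 / RATE_1080I / 1125          # one line period in seconds

print(reference_advance(3 * LINE, RATE_1080I, 1125))               # (0, Fraction(3, 1))
print(reference_advance(1 / RATE_1080I + 10 * LINE, RATE_1080I, 1125))  # (1, Fraction(10, 1))
```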

Servers and archive systems are incorporating transparent essence and wrapper conversion. The process is automated and converts content based on user-defined profiles. Formats, bit rates and the directory where the converted file will reside can be specified. For example, servers can simultaneously convert production formats such as DV100 to MPEG-2 long-GOP for transmission and H.264 for Web dissemination.
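Profile-driven conversion can be sketched as a function that turns one ingested file into one job per user-defined profile. The classes, paths and bit rates here are hypothetical examples, not any server's actual configuration format; a real system would pass each job to its transcoding engine.

```python
from dataclasses import dataclass
from pathlib import PurePosixPath
from typing import List

@dataclass(frozen=True)
class Profile:
    name: str
    essence: str          # target compression format
    wrapper_ext: str      # file extension of the output wrapper
    bitrate_mbps: float
    output_dir: str       # directory where the converted file will reside

@dataclass(frozen=True)
class Job:
    source: str
    destination: str
    essence: str
    bitrate_mbps: float

def jobs_for(source: str, profiles: List[Profile]) -> List[Job]:
    """Create one conversion job per profile for a newly ingested file."""
    stem = PurePosixPath(source).stem
    return [Job(source,
                str(PurePosixPath(p.output_dir) / f"{stem}_{p.name}{p.wrapper_ext}"),
                p.essence, p.bitrate_mbps)
            for p in profiles]

PROFILES = [  # illustrative values only
    Profile("air", "MPEG-2 long-GOP", ".mxf", 18.0, "/playout"),
    Profile("web", "H.264", ".mp4", 5.0, "/web"),
]
for job in jobs_for("/ingest/promo_dv100.mxf", PROFILES):
    print(job.destination, job.essence, job.bitrate_mbps)
# /playout/promo_dv100_air.mxf MPEG-2 long-GOP 18.0
# /web/promo_dv100_web.mp4 H.264 5.0
```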

Jack fields, routers and distribution amps

Design issues can be reduced to a few fundamental choices. Conversion equipment can be centralized so that signals are routed to and from a device, or dedicated conversion devices can be placed in-line with signal flows.

Routed conversion equipment enables flexible conversion workflows without modifying the existing infrastructure; additional conversion devices simply occupy router inputs and outputs. The danger of this approach is that at peak usage, not enough devices capable of a required conversion may be available. Again, back-timing the reference may be required to eliminate frame bumps, and further timing adjustments may be required to maintain lip sync.
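The peak-usage risk of a routed converter pool can be seen in a small allocation model. In this hypothetical sketch, each converter has an ID and a single conversion type; a request either gets a free converter of that type or nothing.

```python
from typing import Dict, Optional, Set

class ConverterPool:
    """Router-attached converters shared by every workflow in the plant."""

    def __init__(self, converters: Dict[str, str]) -> None:
        self._kind = dict(converters)   # converter id -> conversion type
        self._busy: Set[str] = set()

    def allocate(self, conversion: str) -> Optional[str]:
        """Return the id of a free converter of the requested type, or None."""
        for cid in sorted(self._kind):
            if self._kind[cid] == conversion and cid not in self._busy:
                self._busy.add(cid)
                return cid
        return None   # every converter of this type is already in use

    def release(self, cid: str) -> None:
        self._busy.discard(cid)

pool = ConverterPool({"UC1": "SD->HD", "UC2": "SD->HD", "DC1": "HD->SD"})
print(pool.allocate("SD->HD"), pool.allocate("SD->HD"), pool.allocate("SD->HD"))
# UC1 UC2 None
pool.release("UC1")
print(pool.allocate("SD->HD"))  # UC1
```

In-line placement avoids the `None` case for a dedicated signal path, at the cost of converters that may sit idle when that path is not in use.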

A complementary approach places conversion equipment in-line with content workflows. For example, consider an all-HD production facility where content exists only in the house HD format. An SD-only VTR will have an upconverter placed at its output, before its signal reaches the house router. This judicious placement of conversion equipment in the workflow path ensures conversion equipment availability.

In the real world, the workflow flexibility of routing and in-line equipment can be maximized by the strategic placement of jack fields and distribution amps at the inputs and outputs of routers and conversion devices. By using these techniques, conversion resources can be patched or routed to where they are needed.

Best practices

Workflows should always strive to reduce the number of format conversions. Use equipment with integrated conversion capabilities wherever possible. Specify acceptable presentation and compression formats for outside-produced editing, graphics, audio and other packages as part of the contract terms. Retire as much legacy equipment as possible. This will help limit both the types and amounts of format conversion required and simplify system design.

Important factors that will determine the actual design and implementation of conversion systems are cost, content quality and workflow efficiency. Careful workflow and conversion requirement analysis is the starting point. An enlightened design, with a process-efficient, conversion-friendly infrastructure is the goal. Prudent deployment of conversion devices along with distribution amps, jack fields and routers results in an agile conversion infrastructure that adapts at a moment's notice to any required signal or format conversion.

Phil Cianci is a design engineer for Communications Engineering Inc.