What Content Providers Need to Know About OTT Monitoring

As OTT (Over-The-Top) technology has matured and established robust standards over the years, OTT monitoring is gaining popularity. With customer expectations soaring, it’s vital for OTT providers to deliver superior-quality content. To deliver Quality of Experience (QoE) on par with linear TV broadcast, the entire system, from ingest through multi-bitrate encoding to CDN delivery, must be monitored continuously.

Streaming service providers and broadcasters understand the complexities involved in OTT delivery and the media distribution chain, from content acquisition to actual transmission. OTT monitoring primarily involves monitoring the status of all key elements in the pipeline, including the media source, encoders, decoders, and the output of the Content Distribution Network (CDN). The amount of media consumed over OTT is growing at a staggering pace, and people’s viewing habits are rapidly moving towards a unified multiscreen experience. These rapid changes demand a strong, comprehensive monitoring solution that covers every aspect of the OTT chain and makes it easy for people at various levels in broadcast operations to monitor and control the entire system.

The primary goals of OTT monitoring are to guarantee Quality of Service (QoS) and Quality of Experience (QoE) and to support compliance monitoring. The quality metrics include media quality for confidence monitoring and delivery of captions, in both primary and secondary languages, to increase user engagement. In addition to QoS and QoE monitoring, broadcasters face another challenge: tracking viewership using ID3 tag technology.
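
As a rough illustration of the ID3 side of this, the sketch below decodes the 10-byte header that starts an ID3v2 tag, assuming the tags in question are ID3v2 tags. The function name, the `Id3Header` type, and the sample bytes are hypothetical; how a measurement system interprets the tag payload is outside the scope of this sketch.

```python
from typing import NamedTuple, Optional


class Id3Header(NamedTuple):
    major: int      # ID3v2 major version (e.g. 4 for ID3v2.4)
    revision: int
    flags: int
    size: int       # tag payload size in bytes, excluding the 10-byte header


def parse_id3v2_header(data: bytes) -> Optional[Id3Header]:
    """Return the decoded header, or None if `data` does not start with an ID3v2 tag."""
    if len(data) < 10 or data[:3] != b"ID3":
        return None
    major, revision, flags = data[3], data[4], data[5]
    size_bytes = data[6:10]
    # The size field is a "synchsafe" integer: four bytes carrying 7 bits each,
    # so the top bit of every byte must be zero.
    if any(b & 0x80 for b in size_bytes):
        return None
    size = 0
    for b in size_bytes:
        size = (size << 7) | b
    return Id3Header(major, revision, flags, size)


# Made-up sample: an ID3v2.4 header declaring a 257-byte payload.
sample = b"ID3\x04\x00\x00\x00\x00\x02\x01" + b"\x00" * 257
print(parse_id3v2_header(sample))
```

A monitoring probe could use a check like this to confirm that the expected tags are present in a stream before attempting to read their frames.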

A comprehensive monitoring system includes the following:

- Encoder status, start/stop streaming, alarms, etc. It is essential to ensure proper encoding of content that comes in from multiple sources. Compared with traditional broadcasts, live cloud OTT workflows are much more complex because they involve delivery to individual devices with multiple profiles. It is critical to monitor for issues such as frame misalignment, syntax errors, over-compression, missing markers and metadata, dropped packets, and compliance slips, as these can degrade the viewer experience. A monitoring system should be able to monitor the end-to-end workflow, raise alarms, report streaming status, and send notifications about issues that occur anywhere in the system. This helps resolve issues quickly, before end customers are affected.

- CDN status (concurrent connections, origin, visitors’ statistics, etc.). The CDN plays a crucial part in delivering content to end customers. It is critical to monitor the origin of the content, viewers’ statistics, and the CDN edge.

- RTMP Surveillance and analysis of local encoders' output

- External report on streaming services availability at CDN outputs, ping, etc.

- Monitoring of website accessibility, CDN status, network status, etc.

- Video-on-Demand accessibility and Quality Control Analysis. In addition to live streaming, VOD clips must be monitored for quality.

- End-user experience status report

- End-user experience report for developers

- Server-Side Ad Insertion

- Captions

OTT Monitoring Advances

OTT monitoring is evolving at a rapid pace. Early on, OTT monitoring was largely limited to IT infrastructure monitoring and, to some extent, monitoring streams at edge locations as proof of delivery.

With the market growing, more and more OTT broadcasters are starting to see the value of monitoring. Efforts are under way to converge OTT platforms and optimize streaming protocols.

OTT providers use various streaming protocols for media delivery, including Real Time Streaming Protocol (RTSP), Real-time Transport Protocol (RTP), Smooth Streaming, etc. Often, the receiving devices support only one or two protocols, requiring service providers to stream their content in multiple protocols. Nowadays, media streaming is largely performed in the cloud, making use of the CDN. The data that goes into and comes out of the CDN needs to be monitored to guarantee QoE.

Also, transmission errors can occur while transporting media data over an IP network; these errors could pertain to packet latency, jitter, packet loss, etc. This makes it important for service providers to introduce complete quality monitoring solutions, including video monitoring at the CDN data centers and at the edge locations.
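
To make the transport metrics concrete, here is a minimal sketch of how a probe might estimate jitter and packet loss. It assumes the probe has per-packet send and receive timestamps and sequence numbers available; the jitter estimate follows the running interarrival formula defined for RTP in RFC 3550, and the function names are illustrative only.

```python
from typing import Iterable, Tuple


def interarrival_jitter(packets: Iterable[Tuple[float, float]]) -> float:
    """Running jitter estimate in the style of RFC 3550.

    `packets` yields (send_time, receive_time) pairs in arrival order,
    both expressed in the same time unit.
    """
    jitter = 0.0
    prev = None
    for sent, received in packets:
        if prev is not None:
            # Difference in transit time between this packet and the previous one.
            d = (received - prev[1]) - (sent - prev[0])
            jitter += (abs(d) - jitter) / 16.0
        prev = (sent, received)
    return jitter


def loss_ratio(sequence_numbers: Iterable[int]) -> float:
    """Fraction of packets missing between the lowest and highest sequence
    number seen. Simplification: sequence-number wraparound is not handled."""
    seen = set(sequence_numbers)
    if not seen:
        return 0.0
    expected = max(seen) - min(seen) + 1
    return 1.0 - len(seen) / expected


# Constant network delay gives zero jitter; one late packet raises it.
print(interarrival_jitter([(0, 5), (20, 25), (40, 45)]))
print(interarrival_jitter([(0, 5), (20, 35)]))
print(loss_ratio([1, 2, 4, 5]))  # packet 3 never arrived
```

Running the same calculation at the CDN data center and at edge locations would let an operator see where in the path the degradation is introduced.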

Cloud-based monitoring is extremely efficient as it works like a virtual machine (VM) that can be moved to any place within a particular network. This makes it easier to store, manage and process data, thereby saving a lot of time, effort, and money. Cloud-based monitoring allows monitoring of geo-restricted streams and regions, as well as scaling up monitoring, particularly with server-side ad insertion.

The State of Logging and Compliance

Many companies handle logging and compliance for traditional broadcasts. However, this kind of logging covers neither OTT content nor content that has advertisements inserted at the MSO. Relying on such logs to understand what happened to the content could therefore give a wrong picture.

OTT operations have changed the way that advertisements are delivered; they can specify ads for a specific market or community. However, these broadcasts are generally poorly monitored, or at times not monitored at all, compared to traditional broadcasts.

Thus far, there has been no major push from the industry to monitor OTT due to minimal FCC compliance requirements and a lack of an industry standard on OTT. Rapid innovations are being made in OTT and broadcast video delivery. Content owners are under constant pressure to respond to the surge in OTT content consumption.

OTT video service delivery makes use of Dynamic Adaptive Streaming over HTTP (DASH). Over the past year, MPEG-DASH has seen rapid growth and has gained strong ground in OTT technologies.

The ATSC 3.0 specification makes use of DASH for Hybrid Broadcast-Broadband TV. ATSC 3.0 offers broadcasters the opportunity to deliver broadcast and broadband services to provide a better television experience.

Statistical multiplexing (statmux) helps provide high-quality ATSC 3.0 streams: it facilitates efficient use of available bandwidth and helps optimize the bitrates and video quality of channels that share the same physical layer pipes. OTT content reaches the receiver over a broadband connection, and DASH acts as the enabler in this scenario.

OTT Monitoring Best Practices

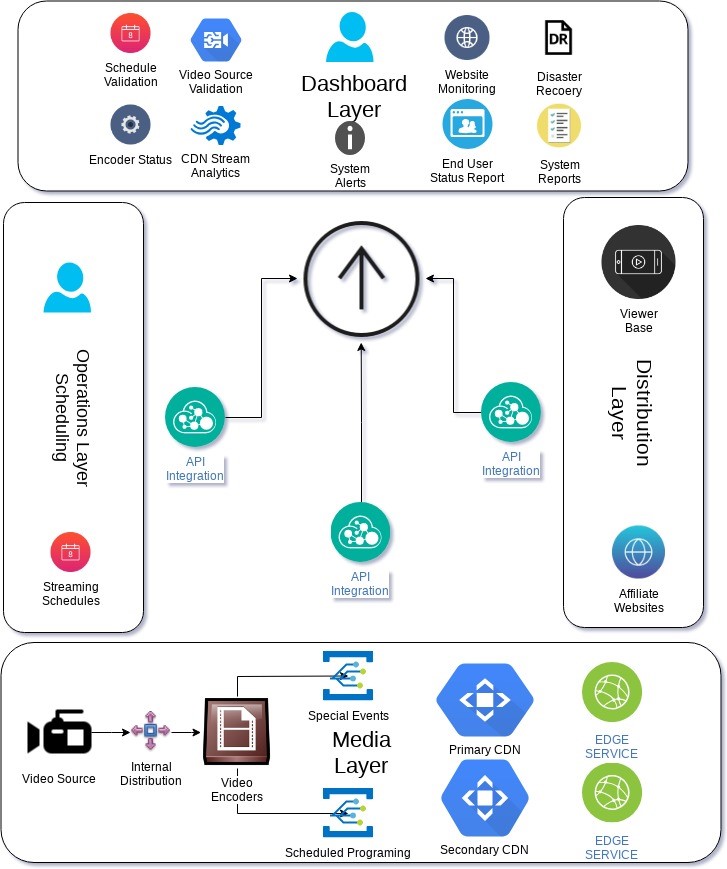

According to the image above, the entire OTT video delivery process is carried out in three different layers. The three OTT layers (Operations Layer, Media Layer, and Distribution Layer) are monitored by three groups of API integrations. These API integrations monitor content throughout the workflow, from content acquisition through delivery to consumption. Monitoring covers every touch point of the workflow and provides the technical error data, which works as an alarm and helps provide users with uninterrupted video.

The key to improving operational efficiency is to create a fault-tolerant OTT streaming infrastructure. When a fault does occur, reducing the resolution time to a minimum is fundamental. As highlighted in the diagram above, a comprehensive and easy-to-use dashboard that captures and controls the health of the entire infrastructure in real time can add value to the overall operation.

Current Cloud-based Challenges

- Multiple protocols being used to support a wide range of devices. OTT providers use multiple streaming protocols for media delivery, and the receiving devices support only one or two protocols, which prompts OTT service providers to stream their content in multiple protocols. The data that goes into and comes out of the CDN needs to be monitored to guarantee the quality of experience (QoE).

- Last mile monitoring. Cloud-based OTT monitoring must handle the problem of last mile monitoring. Cloud-based applications must be monitored at various levels, including user experience. Physically, there is a considerable distance between where the application is running and where the users are. Because conventional monitoring is part of the application environment, it is technically difficult to monitor how an application behaves at the user’s end, be it a mobile app or a web browser. Internet issues specific to each region must also be monitored, since bad internet connectivity could impact user experience.

- Multiple multi-bitrate outputs result in increased resource requirements. Another major challenge of OTT content streaming is that the devices and networks are far more diverse than those assembled in controlled environments like satellite, cable, etc. An adaptable architecture is necessary to address the varied needs of network conditions and device requirements.

Streaming video is delivered to a range of devices, including computers, smartphones, and tablets, which makes the monitoring process more complex. Each variant is encoded with a different bitrate and profile. So, from a monitoring and quality control perspective, operators need to put in more effort, as they have many versions of each piece of content.
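
As an illustration of what "many versions of each piece of content" means for a monitoring tool, the sketch below enumerates the variants declared in an MPEG-DASH manifest (MPD). The sample manifest and the function name are made up for this example; real manifests are larger and may declare `width` and `height` on the enclosing `AdaptationSet` rather than on each `Representation`.

```python
import xml.etree.ElementTree as ET

# XML namespace used by MPEG-DASH MPD documents.
DASH_NS = {"mpd": "urn:mpeg:dash:schema:mpd:2011"}

# Hypothetical three-rung bitrate ladder, for illustration only.
SAMPLE_MPD = """<MPD xmlns="urn:mpeg:dash:schema:mpd:2011" type="static">
  <Period>
    <AdaptationSet mimeType="video/mp4">
      <Representation id="v3" bandwidth="5000000" width="1920" height="1080"/>
      <Representation id="v1" bandwidth="800000" width="640" height="360"/>
      <Representation id="v2" bandwidth="2500000" width="1280" height="720"/>
    </AdaptationSet>
  </Period>
</MPD>"""


def list_representations(mpd_text: str) -> list:
    """Return one dict per Representation, sorted by declared bandwidth (bits/s)."""
    root = ET.fromstring(mpd_text)
    reps = []
    for rep in root.iterfind(".//mpd:Representation", DASH_NS):
        reps.append({
            "id": rep.get("id"),
            "bandwidth": int(rep.get("bandwidth", "0")),
            "width": rep.get("width"),    # None if declared on the AdaptationSet
            "height": rep.get("height"),
        })
    return sorted(reps, key=lambda r: r["bandwidth"])


for rep in list_representations(SAMPLE_MPD):
    print(rep["id"], rep["bandwidth"], rep["width"], rep["height"])
```

Each entry returned here is a separate stream that would need its own quality checks, which is why the monitoring workload grows with the size of the bitrate ladder.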

The networks used by some devices have dynamically fluctuating characteristics that streaming must tolerate. Also, the underlying technology used in adaptive bitrate streaming is not standardized.

These challenges do not apply to all cloud-based applications; they are specific to OTT monitoring in general.

Future OTT Monitoring Fixes:

- Simulating last mile bandwidth variations

- Convergence of protocols

- Limiting the number of manifests and reducing error rates while switching from one manifest to another
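
The first item in this list, simulating last mile bandwidth variations, can be sketched in a few lines. The example below replays a bandwidth trace against a bitrate ladder and counts how often the chosen rendition changes. The selection rule (highest rung that fits within 80% of measured bandwidth), the ladder, and the trace are all assumptions made for illustration; they do not represent any particular player's adaptation logic.

```python
from typing import List, Sequence, Tuple


def pick_rendition(ladder_kbps: Sequence[int], bandwidth_kbps: float,
                   safety: float = 0.8) -> int:
    """Pick the highest rung that fits within a safety fraction of the
    measured bandwidth; fall back to the lowest rung if none fits."""
    budget = bandwidth_kbps * safety
    fitting = [rate for rate in ladder_kbps if rate <= budget]
    return max(fitting) if fitting else min(ladder_kbps)


def simulate(ladder_kbps: Sequence[int],
             trace_kbps: Sequence[float]) -> Tuple[List[int], int]:
    """Replay a per-segment bandwidth trace and return (choices, switch_count)."""
    choices = [pick_rendition(ladder_kbps, bw) for bw in trace_kbps]
    switches = sum(1 for a, b in zip(choices, choices[1:]) if a != b)
    return choices, switches


# Hypothetical ladder and last mile trace, one bandwidth sample per segment.
ladder = [800, 2500, 5000]
trace = [6500, 6500, 3200, 900, 900, 4000, 7000]

choices, switches = simulate(ladder, trace)
print(choices)   # [5000, 5000, 2500, 800, 800, 2500, 5000]
print(switches)  # 4
```

Feeding region-specific traces into a simulation like this gives a monitoring team an estimate of how many manifest switches viewers in that region are likely to experience.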

Future Advances in Cloud-based Monitoring of OTT Content

- Convergence of streaming protocols on a widely adopted industry standard, MPEG-DASH for instance

- A protocol based on segmented delivery with near zero latency

- Advances in video compression, partly promised by H.265, and efficient utilization of available bandwidth for best QoS

Hiren Hindocha is the CEO of Digital Nirvana.