Understanding Virtualization and Containers

This column will review the key aspects of compute virtualization and application containerization as used in the data center and the cloud. Not familiar with these ideas? If you care about the future of the media facility and infrastructure, then stay tuned.

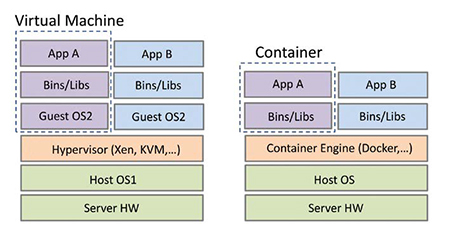

Compute virtualization is a kingpin for cloud computing and the modern data center. Virtualization began in the 1960s on IBM mainframe computers, then reemerged around 2001 as reinvented by VMware. Basically, it is a method of logically dividing a server’s resources (CPU, I/O, RAM) for use across isolated applications (see Fig. 1).

Fig. 1: Compute virtualization software stacks

The essential idea is to create an environment where isolated apps and/or services can appear to own the same server when in fact they share it. The hypervisor or virtual machine manager creates each virtual machine (VM). There are several types of hypervisors and we will consider type 2 (or hosted) as shown in Fig. 1. In this case, only two VMs are shown, but there could be more. Starting in 2005, CPU vendors added hardware virtualization assistance to their products; this enabled VMs to share the CPU with fewer cycles of overhead. Think of a VM as a personal server. Note, there are several popular hypervisors (from VMware, Microsoft, and open source such as Xen and KVM) each with their own performance curves.

Each VM looks like an application running on top of the Host OS. Each Guest OS runs concurrently and can be the same or different (Windows, Linux or other). The Bins/Libs layer supplies software libraries and services needed by the top layer apps. If a VM faults to a “blue screen of death” the other VMs continue blissfully on. Yes, there are other methods of server virtualization, with tradeoffs between them. But for basics, just remember that they all enable apps/services to independently share the CPU cores, I/O and local RAM.

Typical loading is about four to five VMs per core. So, with a 10-core Xeon-based server, expect about 45 VMs to run simultaneously. Is it wise to run this many? It depends on storage access rates, network access rates and the performance required per app. If a single VM/app is doing real-time 4K video compression, all the cores may be needed to support just that one VM.
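The loading arithmetic above can be sketched in a few lines of Python. This is only a back-of-the-envelope illustration: the function name `estimate_vm_range` and its parameters are made up for this example, and the four-to-five VMs per core figure is the rule of thumb quoted above, not a measured value.

```python
from typing import Tuple


def estimate_vm_range(
    cores: int,
    vms_per_core_low: float = 4.0,
    vms_per_core_high: float = 5.0,
) -> Tuple[int, int, float]:
    """Return (low, high, midpoint) VM counts for a server.

    The per-core defaults are the column's rule of thumb (an assumption,
    not a benchmark); storage, network and per-app load can change them.
    """
    if cores <= 0:
        raise ValueError("cores must be positive")
    low = int(cores * vms_per_core_low)
    high = int(cores * vms_per_core_high)
    midpoint = (low + high) / 2
    return low, high, midpoint


low, high, midpoint = estimate_vm_range(10)
print(f"10 cores: {low}-{high} VMs, midpoint {midpoint:g}")
# prints "10 cores: 40-50 VMs, midpoint 45"
```

For a heavy workload such as real-time 4K compression, the per-core figures would have to be set far lower, possibly to a single VM for the whole server.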

VM servers are easy to instantiate, destroy, move or scale. Their agile nature is what has made them the de facto standard for cloud computing. Managing VM/app loading also improves physical server efficiency. Many vendors specialize in managing the flocks of VMs that make up the modern data center. See, for example, ansible.com, getchef.com or puppetlabs.com to learn more about data center automation tools.

CONTAINER WORLD

Another way to share compute resources and create personal servers is by using software containers (see Fig. 1). This is a server virtualization method (but not a VM) where the kernel of an operating system allows for multiple isolated user space instances, instead of just one. Such instances are often called “containers.” In a nutshell, containers/apps share the same OS. In fact, each container thinks it owns the OS, including the file system root directory, but it does not.

Yes, containers are similar to VMs in their goals, but the hypervisor imposes a performance tax for managing each VM. Some benchmark applications show a hit of approximately 25 percent (or more), though a tax of less than 5 percent is more common. The tax varies with the I/O, compute requirements and storage requests per VM. In addition, spinning up a VM can take 5–10 seconds, whereas container creation is nearly instantaneous. The container tax is usually less than 2 percent.
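To see what these taxes mean in practice, here is a rough Python sketch that converts an overhead percentage into usable capacity. The helper `effective_capacity` and the figure of 100 "units" of raw capacity are illustrative assumptions. The tax values are the rough figures quoted above, with the 5 and 2 percent entries treated as upper bounds.

```python
def effective_capacity(raw_units: float, tax: float) -> float:
    """Capacity left for the app after a fractional virtualization tax."""
    if not 0.0 <= tax < 1.0:
        raise ValueError("tax must be a fraction in [0, 1)")
    return raw_units * (1.0 - tax)


# Rough figures from the text; real overhead depends on I/O, compute
# and storage behavior, so treat these as illustrative only.
TAXES = {
    "VM, heavy benchmark case": 0.25,
    "VM, more common upper bound": 0.05,
    "container, usual upper bound": 0.02,
}

for label, tax in TAXES.items():
    usable = effective_capacity(100.0, tax)
    print(f"{label}: about {usable:.0f} of 100 units usable")
```

The same calculation can be rerun with overheads measured on your own workloads, which is the number that matters when sizing a server.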

The container idea is based on the OS kernel providing isolated user spaces. Until recently, Linux was the main platform offering this, through kernel features such as cgroups (control groups) and namespaces. Together they limit an application’s resource use and isolate its view of the operating environment, including process trees, I/O and file systems.
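On a Linux machine you can see which cgroups a process belongs to by reading `/proc/self/cgroup`, where each line has the form `hierarchy-ID:controller-list:path`. The Python sketch below parses that format. The names `CgroupEntry`, `parse_cgroup_line` and `read_own_cgroups` are made up for this example, and the sample path in the usage line is illustrative rather than taken from a real system.

```python
from typing import List, NamedTuple


class CgroupEntry(NamedTuple):
    hierarchy_id: int
    controllers: List[str]
    path: str


def parse_cgroup_line(line: str) -> CgroupEntry:
    """Parse one 'hierarchy-ID:controller-list:path' line."""
    hierarchy_id, controllers, path = line.strip().split(":", 2)
    # The controller list is empty on cgroup v2 (e.g. "0::/user.slice").
    names = [name for name in controllers.split(",") if name]
    return CgroupEntry(int(hierarchy_id), names, path)


def read_own_cgroups(proc_file: str = "/proc/self/cgroup") -> List[CgroupEntry]:
    """Return this process's cgroup entries, or [] if the file is unavailable."""
    try:
        with open(proc_file) as handle:
            return [parse_cgroup_line(line) for line in handle if line.strip()]
    except OSError:
        # Not Linux, or /proc is not mounted.
        return []


# Illustrative sample line, not captured from a real host.
print(parse_cgroup_line("4:cpu,cpuacct:/docker/abc123"))
for entry in read_own_cgroups():
    print(entry)
```

Run inside a container, the reported paths typically differ from those seen on the host, which is one small, visible sign of the grouping described above.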

In October 2014, Microsoft announced support for containers in their Windows Server OS. Google has used them for more than five years. If you check Gmail, it’s running in a Google container, not a VM. They have stated that they are running two billion containers per week—now that’s validating the idea.

Currently the most popular container engine is called Docker (docker.com). This is open source software. It promises developers the ability to build and test an app, distribute the container to one or more platforms and run it at will. Containers have less performance variation than VMs, so confidence that a container will run with known performance is very high. Frankly, Docker is hot, and Amazon, Google, IBM, Microsoft and VMware already support it in their clouds. Docker container management can use the same tools cited above for VMs; the management problems are similar. Of course, there are other tools, and Google’s Kubernetes cluster management is a prime example.

VMS, CONTAINERS IN THE MEDIA FACILITY

So which method has the advantage in the media facility? This depends on many factors, not least your policies for managing them. It’s not a good formula to have a mix of virtualization methods spread about without a governance policy in place. Of course, there are technical and process tradeoffs between these two methods, though it’s beyond our scope to compare them further.

Most observers agree that the future of the data center (cloud, especially) will use VMs and containers. So when shopping for applications and software services, ask vendors questions such as:

• Do you support running your app/service in a container or VM?

• If so, which containers have you tested on? Docker? Which VMs? On what OS?

• What app/service configuration did you use for testing? (This should stress I/O, CPU and RAM use.)

• What is your level of continuous support for the tested container/VM?

• Have you tested your app/service on cloud vendor Z? Under what conditions and configuration?

Asking these and related questions will help you migrate into the world of clouds, VMs and containers.

Al Kovalick is the founder of Media Systems consulting in Silicon Valley. He is the author of “Video Systems in an IT Environment (2nd ed).” He is a frequent speaker at industry events and a SMPTE Fellow. For a complete bio and contact information, visit www.theAVITbook.com.