Avoiding 'Amusing Ourselves to Death' with the Intelligences We Create

While AI holds great promise for improving our lives and solving complex problems, it also poses significant risks and challenges that we must address.

The rapid evolution of AI technologies has the potential to transform industries and significantly impact our daily lives. However, as with any medium, it is imperative to prioritize ethical considerations and to ensure that these technologies are developed and deployed responsibly and safely, with their potential risks and harms mitigated.

This calls for ongoing dialogue and collaboration between policymakers, industry leaders, researchers, and other stakeholders to ensure that the benefits of AI are realized while minimizing potential risks and harms.

The proactive approach of governments towards addressing the potential risks and ethical concerns associated with AI, such as the recent White House AI summit with CEOs of leading AI companies, is a positive step towards ensuring that AI is developed and deployed responsibly and safely.

While governments and industry leaders are taking proactive steps to address these concerns, it's also important to consider the potential risks of AI from different perspectives. This is where medium theory comes into play. Medium theory examines how different communication technologies shape human experiences and behaviors, and provides insights into how AI may affect our ability to think critically and engage in meaningful debate.

Neil Postman's "Amusing Ourselves to Death" offers a notable example of medium theory in action. In this seminal book, published in 1985, Postman argued that the rise of television as a dominant medium of communication had a profound effect on public discourse and culture, eroding critical thinking skills and trivializing public debate. Postman's insights are more relevant than ever as we confront the challenges and opportunities of the digital age, in which artificial intelligence plays an increasingly important role.

While AI holds great promise for improving our lives and solving complex problems, it also poses significant risks and challenges that we must address. One of the most pressing concerns is the possibility that intelligence, both human and artificial, may lead us to our demise, as we become overwhelmed by the complexity and scale of the challenges we face.

The allure of intelligence is seductive: it promises to help us understand the world, predict the future, and solve problems that seem insurmountable. However, as we become more intelligent, we may also become more vulnerable to hubris, overconfidence, and tunnel vision.

We may come to believe that we have all the answers, that we can control the forces of nature, or that we can engineer our way out of any problem. This can lead to a dangerous complacency and a neglect of the unintended consequences of our actions.

Moreover, as AI becomes more sophisticated and autonomous, it may outstrip our ability to understand and control it. We may create AI systems that are more intelligent than we are, but also more unpredictable, inscrutable, and potentially dangerous.

We may not be able to anticipate or prevent their failures, or to regulate their behavior in a way that aligns with human values and goals. We may inadvertently create "black boxes" that we cannot open or comprehend, but that have the power to shape our destiny.

Furthermore, as we rely more on AI to solve problems and make decisions, we may lose our own ability to think critically and make ethical judgments. We may become dependent on machines to guide us, and to provide us with answers and solutions, without fully understanding the underlying assumptions and trade-offs.

We may lose sight of the human dimension of problems, and of the need for empathy, creativity, and intuition to address them. We may become detached from the natural world and from our own humanity, as we immerse ourselves in virtual reality and cyberspace.

In this sense, intelligence can be a double-edged sword: it can enhance our capabilities and improve our lives, but it can also lead us to our demise if we fail to use it wisely and responsibly. We need to cultivate a "critical intelligence" that combines rigorous analysis, deep reflection, and a sense of humility and awareness of our limitations.

We need to develop a "moral intelligence" that values human dignity, compassion, and justice, and that recognizes the interconnectedness and interdependence of all life on earth. We need to foster an "ecological intelligence" that respects the natural world and seeks to preserve and enhance its diversity and resilience.

If we fail to do so, we may end up amusing ourselves to death, as Postman warned, this time not only through television but also through AI-mediated virtual reality, social media echo chambers, and algorithmic decision-making. The stakes are high, but so are the opportunities for creativity, innovation, and progress, if we can harness our intelligence in a way that serves the common good and the flourishing of life.

Avoiding 'Amusing Ourselves to Death' with the intelligences we create is an indispensable consideration in the development and deployment of AI technologies. As we create and deploy these technologies, we must be mindful of their potential impact on human experiences and behaviors, avoid becoming passive consumers of them, and take steps to ensure that they are developed and used in ways that promote critical thinking and meaningful discourse and do no harm, intended or unintended, to society as a whole.

Ultimately, it is up to us as humans to use AI technologies in a way that benefits us as human beings. It is crucial to recognize that while AI can assist us in many tasks, it is our creativity, emotional intelligence, and critical thinking that set us apart as a species.

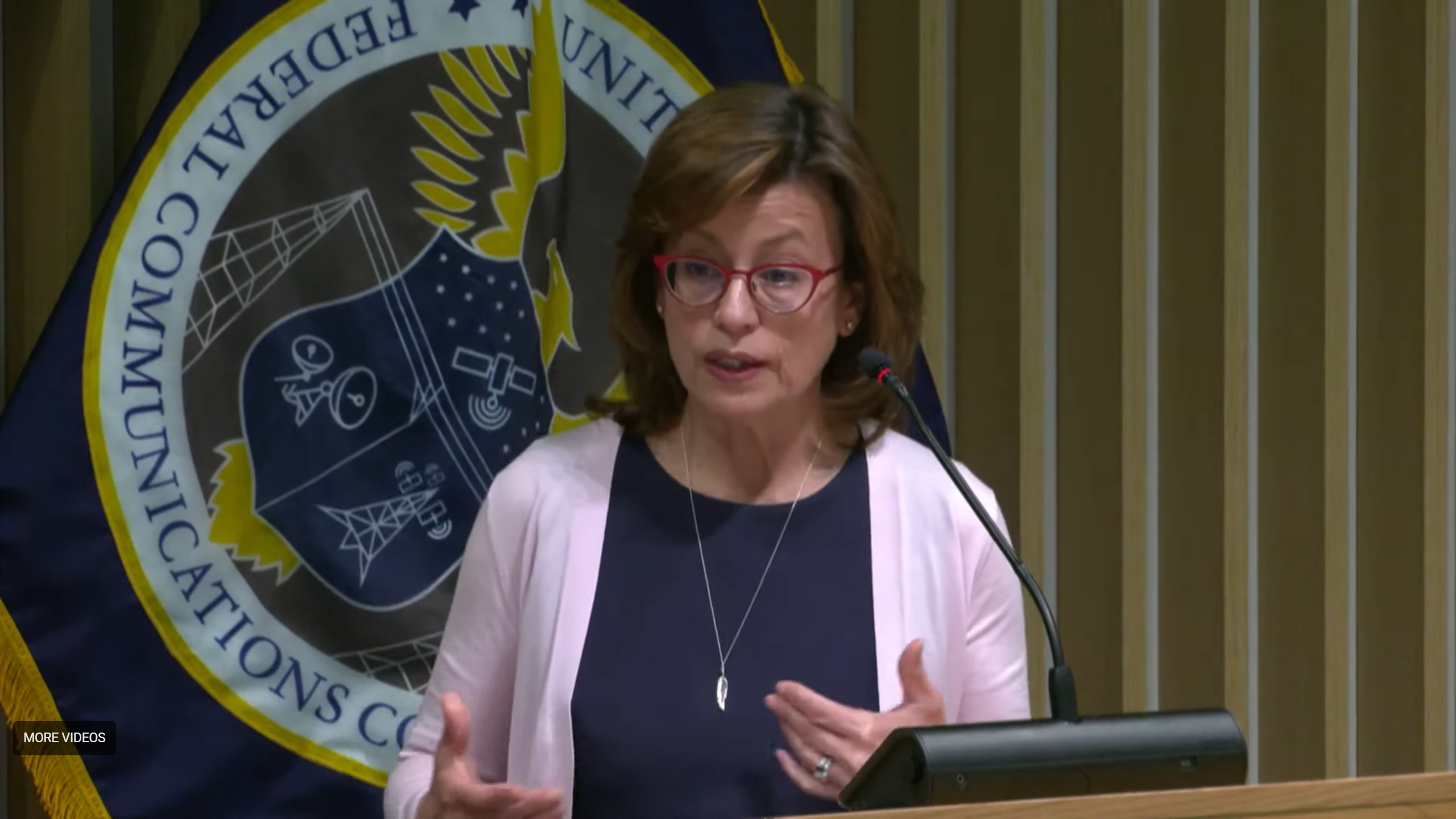

Ling Ling Sun is Vice President of Technology for Maryland Public Television. She previously served as CTO at Nebraska Public Media from 2014 to 2025.

A respected industry leader, Sun serves as a director on the ATSC Board and is an active member of the NAB Television Technology Committee. Her commitment to public broadcasting is evidenced by her two consecutive terms chairing the PBS Engineering Technology Advisory Committee (ETAC) from 2013 to 2018, alongside her service on the PBS Interconnection Committee. Sun further demonstrated her leadership by heading the NAB Broadcast Engineering & Information Technology (BEIT) Conference Program Committee in both 2023 and 2024. Additionally, she has contributed her expertise to the Technical Panel of the Nebraska Information Technology Commission.