Ensuring Correct AV Sync

In perusing AES standards recently, I came across this document "AES-R11-2009: AES standards project report — Methods for the measurement of audio video synchronization error."

Finally, I thought, the long-awaited standard for measuring A/V delay was on the way. As it turned out, a standard was not to be, but not for lack of trying.

Project AES-X177 of the SC-02-01 working group on digital audio measurement techniques unfortunately needed to close, mainly due to time constraints of key participants.

"I started the project in the AES SC as a small step on the way to solving the problem of A/V sync," said Andrew Mason of the BBC, and chair of Project AES-X177. But, "nobody else involved in the AES SC was willing to step forward to continue leading the work in the standards committee, so it could not continue."

But the effort was not for naught. The project report the group issued contains plenty of useful information.

GATHERING INFORMATION

The document consists of two BBC white papers, "Factors affecting perception of audio-video synchronisation in television," by Andrew Mason and Richard Salmon; and "Managing a Real World Dolby E Broadcast Workflow," by Rowan de Pomerai.

"The aim of the document was to collect some useful information that had been produced during the lifetime of the AES SC project," Mason said. "My colleague Rowan de Pomerai addressed some specific problems that had arisen in the BBC's operations; and my work looked at more fundamental questions, prompted by one in particular: 'Does the finer spatial resolution of HDTV make the requirement for A/V sync tighter than it is for SDTV?'"

The Mason and Salmon paper summarizes the numerous factors, equipment, and systems that can produce A/V sync errors. The de Pomerai paper describes Dolby E and some solutions to issues the BBC faced in implementing this technology. Included are sections on A/V sync for both the broadcast facility and home systems, and how the author went through the various subsystems at the BBC to ensure lip sync was maintained everywhere throughout the signal chain.

A generic schematic block identifying delays on single-line drawings. Reproduced with permission, from BBC Research & Development White Paper WHP 175, de Pomerai, 2009 The information presented in the project report can be used as a guide for facilities to systematically map out and test their systems to ensure correct A/V sync.

Following de Pomerai's lead, start by identifying every device and system that can present audio or video delays. You'll probably find that includes just about everything, such as satellite integrated receiver decoders (IRDs), multiplexers and de-muxes, encoders and decoders, frame syncs and standards converters, delay devices, editing systems, cameras, video production switchers and audio mixing systems, video displays, servers, VTRs, digital video effects and other kinds of video and audio processors, all the way to emission encoders.

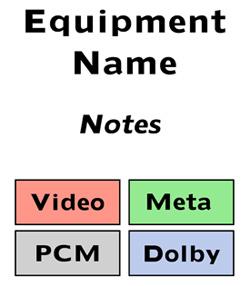

Then, as de Pomerai suggests, it's helpful to create single line drawings for each system with equipment represented by boxes. In each of the boxes, note delays for video, digital audio (PCM), Dolby E and metadata.
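Once the boxes are annotated, the per-box figures can be totaled along each path. The following Python sketch shows one way to do that. It is illustrative only: the device names and delay values are invented placeholders rather than figures from the BBC papers, and milliseconds are used simply as a convenient common unit.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Box:
    """One box on a single-line drawing, with its delay per signal type (ms)."""
    name: str
    video_ms: float = 0.0
    pcm_ms: float = 0.0
    dolby_e_ms: float = 0.0
    metadata_ms: float = 0.0


def chain_totals(boxes):
    """Sum the delay of each signal type along a chain of boxes."""
    return {
        "video": sum(b.video_ms for b in boxes),
        "pcm": sum(b.pcm_ms for b in boxes),
        "dolby_e": sum(b.dolby_e_ms for b in boxes),
        "metadata": sum(b.metadata_ms for b in boxes),
    }


def av_offset_ms(boxes, audio="pcm"):
    """Audio delay minus video delay; a positive result means audio is late."""
    totals = chain_totals(boxes)
    return totals[audio] - totals["video"]


# Hypothetical chain: every name and number here is a placeholder.
CHAIN = [
    Box("frame sync", video_ms=40.0),
    Box("embedder", pcm_ms=1.0),
    Box("production switcher", video_ms=40.0),
]

if __name__ == "__main__":
    print(chain_totals(CHAIN))
    print(av_offset_ms(CHAIN))
```

A negative offset in this sketch means the audio arrives ahead of the picture, so the audio path is the one that would need added delay.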

BEWARE OF HIDDEN DELAYS

Watch out for "hidden" delays in many of these devices. Many processors, such as embedders or encoders, already include internal delays, which can remove the need for external ones.

de Pomerai noted that some devices that accept Dolby E may, by default, automatically add a frame of video delay to compensate for the audio delay. VTRs may have the option of recording Dolby E in sync with the video, or a frame ahead of the video.
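How much time "a frame" represents depends on the frame rate, which matters when a drawing mixes delays quoted in frames with delays quoted in milliseconds. As a rough sketch, the conversion can be written as below. The function name and the two rate constants are chosen here for illustration and do not come from the report.

```python
from fractions import Fraction


def frames_to_ms(frames, frame_rate):
    """Convert a delay in frames to milliseconds at a frame rate in frames/s."""
    return float(Fraction(frames) * 1000 / Fraction(frame_rate))


# Two common television frame rates, kept as exact fractions.
RATE_25 = Fraction(25)
RATE_29_97 = Fraction(30000, 1001)

if __name__ == "__main__":
    print(frames_to_ms(1, RATE_25))     # one frame at 25 frames/s
    print(frames_to_ms(1, RATE_29_97))  # one frame at 30000/1001 frames/s
```

One frame works out to 40 ms at 25 frames per second and roughly 33.4 ms at 29.97, so a "one frame" compensation is not the same amount of time in every facility.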

Using this information, meticulously go through each subsystem, measuring and setting delays until you can declare with certainty, "it's fine leaving here."
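The subsystem-by-subsystem check can be reduced to simple arithmetic: compare the measured audio and video delays at each output, then add delay to whichever path is early. The sketch below assumes the measurements are already in hand. The subsystem names, the numbers and the `tolerance_ms` parameter are hypothetical, and the report does not prescribe this particular procedure.

```python
def correction(video_ms, audio_ms, tolerance_ms=0.0):
    """Return (path_to_delay, amount_ms) needed to bring an output into sync.

    Delay can only be added, never removed, so the early path is the one
    that gets the extra delay.
    """
    error = audio_ms - video_ms  # positive: audio is late relative to video
    if abs(error) <= tolerance_ms:
        return ("none", 0.0)
    if error > 0:
        return ("video", error)
    return ("audio", -error)


def plan(measurements, tolerance_ms=0.0):
    """Map each subsystem name to the correction its output needs."""
    return {
        name: correction(video_ms, audio_ms, tolerance_ms)
        for name, (video_ms, audio_ms) in measurements.items()
    }


# Hypothetical measurements: (video delay, audio delay) in milliseconds.
MEASURED = {
    "studio output": (80.0, 1.0),
    "playout": (40.0, 40.0),
}

if __name__ == "__main__":
    for name, (path, amount) in plan(MEASURED).items():
        print(f"{name}: delay path = {path}, amount = {amount:.1f} ms")
```

Working through the subsystems in signal-flow order, and correcting each one before moving on, keeps an error in one area from being masked by an opposite error downstream.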

There's more to this report, such as issues with home systems and perception of lip sync errors. These will be discussed in a future column.

But in the meantime, if you're in New York for the AES convention in October, check out the workshop "Lip Sync Issue," moderated by Jon Abrams of Nutmeg Post. The conference runs October 20–23, 2011 (see p. 17 for a preview).

This workshop promises a panel of experts who will discuss such things as where latency issues lie, types of measurements and correction techniques, video displays and lip sync, and who is ultimately responsible for maintaining and ensuring lip sync.

"A forum like the AES can bring together numerous parties who all have a role in A/V sync," Mason said. "Awareness of the problem is important; operational practices are important; equipment design is important. By helping everyone understand the possible causes of the problem, we can try to find solutions that work. I am aware that there are some new proposals coming along to try to solve the problem, so a forum like the AES is a good place to tell people about these."

Mason said that, while publishing the BBC white papers is important to make this information available, "having them as part of the AES SC report also highlights that standardization has a very important role too."

Mary C. Gruszka is a systems design engineer, project manager, consultant and writer based in the New York metro area. She can be reached via TV Technology.

RESOURCES

The project documents can be found online.

AES-R11-2009 is available at the AES Standards Store: www.aes.org/publications/standards/

The individual white papers can be found at the BBC Research and Development white paper collection:

Dolby E: downloads.bbc.co.uk/rd/pubs/whp/whp-pdf-files/WHP175.pdf

A/V sync: downloads.bbc.co.uk/rd/pubs/whp/whp-pdf-files/WHP176.pdf
